SolarWinds VP Offers 2024 Predictions on AI

As CIOs are either in the process of implementing AI into observability efforts,

or at the very beginning stages, Stewart says data hygiene and management are

going to be key factors. “One of the key components is really understanding

where you’re at on that observability journey,” he says. “There are a lot of

disparate tools and different observability offerings that may be very segmented

… The key is having the full data set across that stack that allows the AI

technology to leverage that data, because if the engines don’t have the data

across the stack, then there’s going to be parts of the puzzle that are missing,

and AI is just not going to be able to accommodate.” ... “IT budgets aren’t

getting bigger,” Stewart says. “And in many cases, budgets are shrinking based

on concerns with the economy. Folks are looking for ways to save and some of

that will certainly come through automation and efficiencies. And some of that

will come through tool and vendor consolidation. The ability to leverage various

AI technologies is certainly something that people are interested in to realize

those efficiencies.”

Beware of hidden cloud fees

Fees complicate cost management, especially when transferring data across

different cloud platforms, which is typical for multicloud deployments. Also,

various factors such as location, geography, and data type can significantly

impact the size of these charges. Egress charges, levied for data transferred

out of a cloud service provider’s network, are now a hot button, even though

they’ve been a part of cloud bills for years. High egress charges can inflate

operational costs and restrict organizations from transitioning between cloud

providers or moving their data to more cost-effective alternatives, even back to

their enterprise data centers. As one of my clients put it, they feel their data

is being held for ransom. ... Of course, many are looking to the cloud providers

charging these fees to fix the issues. They may not be legally obligated to

remove these fees, but they are listening to cloud users and have taken steps to

reduce egress fees. Many enterprises are questioning their need to be in the

cloud in the first place and could move to other platforms if costs are too

high. Much of the repatriation that’s occurring is purely for cost issues. All

things being equal, companies would rather stay in the cloud. If enterprises

could get relief from annoying fees, this could keep some companies in place on

the public cloud providers.
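
To make the cost arithmetic concrete, here is a minimal sketch of an egress estimate. The per-GB rate, the free allowance, and the function name are hypothetical placeholders, not any provider's actual pricing; real rates vary by location and data type, as noted above.

```python
from decimal import Decimal


def estimate_egress_cost(gb_transferred: Decimal,
                         rate_per_gb: Decimal,
                         free_allowance_gb: Decimal = Decimal("0")) -> Decimal:
    """Estimate a monthly egress charge from a flat per-GB rate.

    All inputs are illustrative; check the provider's price list for real figures.
    """
    # Only data above the free allowance is billed.
    billable_gb = max(gb_transferred - free_allowance_gb, Decimal("0"))
    # Round to whole cents.
    return (billable_gb * rate_per_gb).quantize(Decimal("0.01"))


# Hypothetical scenario: 50,000 GB moved out, 100 GB free, 0.09 per GB.
monthly_cost = estimate_egress_cost(Decimal("50000"), Decimal("0.09"), Decimal("100"))
print(monthly_cost)
```

Running the same estimate against each provider's published rates gives a rough basis for comparing the cost of staying, switching, or repatriating.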

New study urges industry to address generational division in tech skills

As artificial intelligence becomes increasingly common in industries, experts

are urging companies to address skills gaps to sustain organisational capability.

“Technology is transforming organisations – faster and more diverse than ever.

Communication, collaboration, financial savings, productivity and security are

underpinning these shifts and forming the catalyst for change,” said Greg Weiss,

an HR consultant, onboarding expert, and the founder of Career365. Capterra’s

study identified the three primary challenges that hinder the speed of digital

transformation. These include the usage gap among employees, limited access to

resources or training, and the constant introduction of new tools making it

difficult to adapt. The research also revealed that while millennials are

naturally inclined to digital tools (87 per cent), baby boomers and Generation Z

are equally drawn to new technology (85 per cent). “The appetite is definitely

there. It’s a matter of how these employees are facilitated and bridging the

digital generation gap is crucial. A cookie-cutter approach to training and

support doesn’t work in a divergent workforce – as their alignment differs,”

Weiss said.

The Case for (and Against) Monorepos on the Frontend

Monorepos aren’t just for enterprise applications and large companies like

Google, Savkin said. As it stands now, though, polyrepos tend to be the most

common approach, with each line of business or functionality having its own

repo. Take, for example, a bank. Its website or app might have a credit card

section and an auto loan section. But what if there needs to be a common

message, function or even just a common design change across the divisions?

Polyrepo makes that harder, he said. “Now I need to do a coordination thing with

team A, team B,” Savkin said. “In a polyrepo case, it can take many months.” In

a monorepo, it’s easy to make that one change in as little as a day, he added.

It also enables sharing components and libraries across development teams.

Monorepos helped Jotform, an online forms company based in San Francisco, reduce

its technical debt on the frontend, according to frontend architect and

engineering director Berkay Aydin. Aydin wrote last week about the company’s

move to a monorepo for the frontend. “We don’t have multiple configs or build

processes anymore,” Aydin wrote. “Now, we’re sure every application is using the

same configurations.”

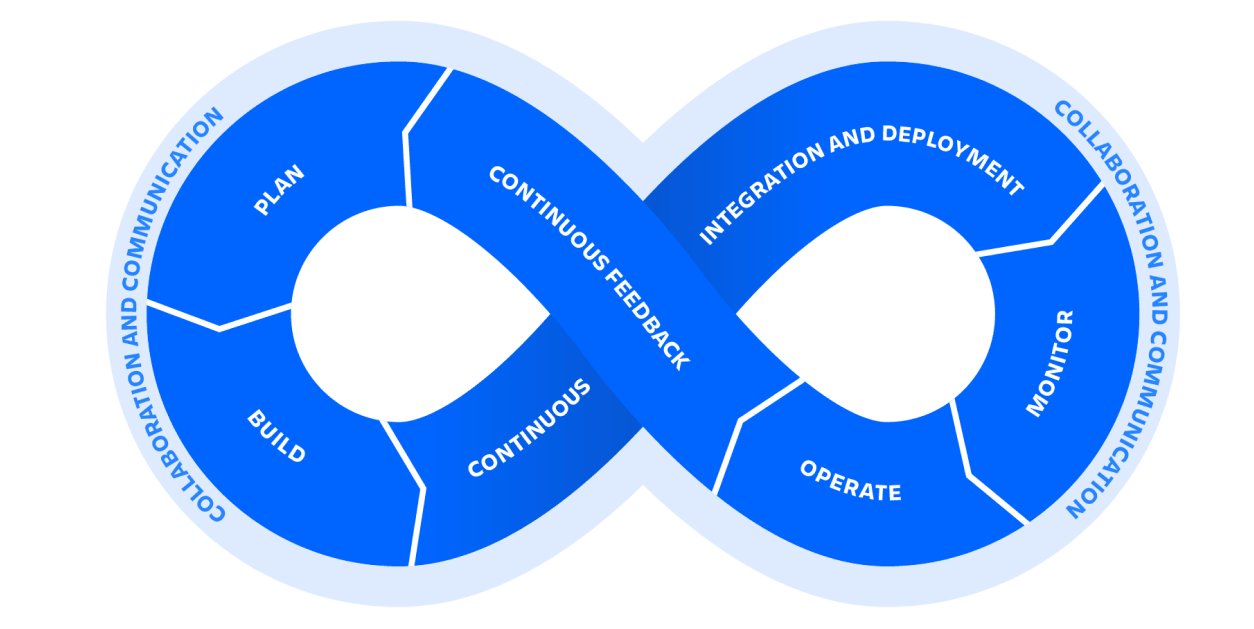

Enterprises struggle with Agile methodology, reports long-standing survey of practitioners

According to the report, Agile is most successful at small companies. “Those in

small companies are more likely than those in medium and large ones to say they

are satisfied [with Agile],” the report states, and “74 percent of small

companies (versus 62 percent at large companies) said at least 50 percent of

their applications were delivered on time and with quality”. A key problem,

which will not be a surprise to developers, is that “the business side is very

slow to embrace Agile. Almost half of survey takers pointed to a generalized

resistance to organizational change or culture clash as the reasons why the

business side is not embracing Agile, up 7 points from 2022.” ... Scrum is a

specific Agile methodology and used by 63 percent of Agile teams, according to

the report, which also states that Scrum has been the most popular Agile

methodology since 2006 when the survey was first conducted. That said, even

Scrum has many variants and the survey states that “the Agile landscape

continues to be very fragmented.” 22 percent of survey respondents said that “we

don’t follow a mandated framework” and 12 percent that “we created our own

enterprise Agile framework.”

The OWASP AI Exchange: an open-source cybersecurity guide to AI components

In the context of AI systems, OWASP’s AI Exchange discusses development-time

threats in relation to the development environment used for data and model

engineering outside of the regular applications development scope. This includes

activities such as collecting, storing, and preparing data and models and

protecting against attacks such as data leaks, poisoning and supply chain

attacks. Specific controls cited include development data protection and using

methods such as encrypting data-at-rest, implementing access control to data,

including least privileged access, and implementing operational controls to

protect the security and integrity of stored data. Additional controls include

development security for the systems involved, including the people, processes,

and technologies involved. This includes implementing controls such as personnel

security for developers and protecting source code and configurations of

development environments, as well as their endpoints through mechanisms such as

virus scanning and vulnerability management, as in traditional application

security practices. Compromises of development endpoints could lead to impacts

to development environments and associated training data.

NIST Offers Guidance on Measuring and Improving Your Company’s Cybersecurity Program

The publication is designed to be used together with any risk management

framework, such as NIST’s Cybersecurity Framework or Risk Management Framework.

It is intended to help organizations move from general statements about risk

level toward a more coherent picture founded on hard data. “Everyone manages

risk, but many organizations tend to use qualitative descriptions of their risk

level, using ideas like stoplight colors or five-point scales,” said NIST’s

Katherine Schroeder, one of the publication’s authors. “Our goal is to help

people communicate with data instead of vague concepts.” Achieving this goal,

according to the authors, involves moving from qualitative descriptions of risk

— perhaps using broad categories such as high, medium or low risk level — to

quantitative ones that carry less ambiguity and subjectivity. An example of the

latter would be a statement that 98% of authorized system user accounts belong

to current employees and 2% belong to former employees. The team developed the

new draft guidance partly in response to public requests and feedback from a

pre-draft call for comment.
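
A quantitative measure like the account example above can be computed directly from an account inventory. The sketch below is illustrative only: the `Account` record and its field names are assumptions, not part of the NIST guidance.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Account:
    # Hypothetical inventory record; field names are assumptions.
    username: str
    owner_is_current_employee: bool


def account_ownership_measure(accounts: list[Account]) -> dict[str, float]:
    """Return the percentage of accounts held by current vs. former employees."""
    if not accounts:
        raise ValueError("account inventory is empty")
    total = len(accounts)
    current = sum(1 for a in accounts if a.owner_is_current_employee)
    return {
        "current_pct": round(100 * current / total, 1),
        "former_pct": round(100 * (total - current) / total, 1),
    }


# Made-up inventory: 49 accounts for current staff, 1 for a former employee.
inventory = [Account(f"user{i}", True) for i in range(49)]
inventory.append(Account("departed_user", False))
measure = account_ownership_measure(inventory)
print(measure)
```

Tracking the same measure over time turns "our account hygiene is medium risk" into a number that can be compared from one quarter to the next.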

What is credential stuffing and how can I protect myself? A cybersecurity researcher explains

Credential stuffing is a type of cyber attack where hackers use stolen usernames

and passwords to gain unauthorised access to other online accounts. In other

words, they steal a set of login details for one site, and try it on another

site to see if it works there too. This is possible because many people use the

same username and password combination across multiple websites. It is common

for people to use the same password for multiple accounts (even though this is

very risky). Some even use the same password for all their accounts. This means

if one account is compromised, hackers can potentially access many (or all)

of their other accounts with the same credentials. ... The best protection is to

never reuse passwords across multiple sites or apps. Always use a unique and strong

password for each online account. Choose a password or pass phrase that is at

least 12 characters long, is complex, and hard to guess. It should include a mix

of uppercase and lowercase letters, numbers, and symbols. Don’t use pet names,

birthdays or anything else that can be found on social media. You can use a

password manager to generate unique passwords for all your accounts and store

them securely.
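
For readers curious how a password manager might generate such passwords, here is a minimal sketch using Python's standard `secrets` module. The symbol set, the default length of 16, and the function name are assumptions chosen for illustration; it follows the advice above (at least 12 characters, mixed character classes) but is not a substitute for a real password manager.

```python
import secrets
import string

# Illustrative symbol set; some sites accept a different set.
SYMBOLS = "!@#$%^&*()-_=+"


def generate_password(length: int = 16) -> str:
    """Generate a random password with upper, lower, digit and symbol characters."""
    if length < 12:
        raise ValueError("use at least 12 characters")
    alphabet = string.ascii_letters + string.digits + SYMBOLS
    while True:
        # secrets.choice draws from a cryptographically secure random source.
        candidate = "".join(secrets.choice(alphabet) for _ in range(length))
        # Retry until every character class is represented.
        if (any(c.islower() for c in candidate)
                and any(c.isupper() for c in candidate)
                and any(c.isdigit() for c in candidate)
                and any(c in SYMBOLS for c in candidate)):
            return candidate


# Generate a separate password for each account; never reuse one.
password = generate_password()
```

Because each call returns an independent random string, generating one per account avoids the reuse that credential stuffing depends on.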

54% of data fiduciaries lack experience in enforcing data protection laws

The research findings are based on the provisions of India's Digital Personal

Data Protection (DPDP) Act that was enacted in August 2023. The rules for the

Act are yet to be released for public consultation. The findings are part of the

research carried out by the think tank Esya Centre in a report called "An

Empirical Evaluation of the Implementation Challenges of the Digital Personal

Data Protection Act 2023: Insights and Recommendations for the Way Forward." It

has involved insights from 16 industry stakeholders, of which 13 are data

fiduciaries and three are experts. "India has come a long way from the early

iterations of the Data Protection Bill to the enactment of the Digital Personal

Data Protection Act, 2023. The decision to eschew localization requirements and

a compliance-heavy framework heralds a commitment to a progressive framework. It

is now time to ensure that the prospective rules maintain the forward-thinking

approach underpinning the parent Act and preserve a compliance-light data

protection regime in the country," said Meghna Bal, Head of Research, Esya

Centre.

Navigating The 'Fog Of A Cyberattack': Critical Lessons In Governance From The SEC Cybersecurity Rule

The short breach notification timeline attached to the SEC’s new cybersecurity

disclosure rule is loud and clear: C-Suite leaders and boards have important

work to do in ensuring their organizations can quickly identify, understand and

publicly disclose material cybersecurity events and impacts. In this case, the

expression “fog of war” is a useful analogy for understanding a critical

complication. The term recognizes that many factors on which action in war is

based are “wrapped in a fog of greater or lesser uncertainty.” The fog of a

cyber event will similarly make the four-business-day timeline incredibly

challenging. ... Instead of making battle plans mid-crisis, prepare now,

establishing how incidents are identified, how reports get written and who’s

responsible for determining materiality. Create rough boundaries for evaluating

materiality (e.g., questions to ask, example incidents) to make decisions as

clear as possible. Incomplete information is better than no information. You may

not have a complete picture to share publicly, and that’s okay. But when you do

your initial disclosure, establish when your next update will be

shipped.
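
As part of that preparation, teams can work out the disclosure deadline in advance. The sketch below counts four business days forward from a start date. It assumes the clock starts on the day the incident is determined to be material and it skips weekends only; public holidays are ignored, and the function name is hypothetical. Treat it as a planning aid, not legal guidance.

```python
from datetime import date, timedelta


def add_business_days(start: date, days: int) -> date:
    """Return the date that falls `days` business days after `start`.

    Weekends are skipped; holidays are NOT handled in this sketch.
    """
    current = start
    remaining = days
    while remaining > 0:
        current += timedelta(days=1)
        if current.weekday() < 5:  # Monday=0 ... Friday=4
            remaining -= 1
    return current


# Example: materiality determined on Thursday, 4 January 2024.
deadline = add_business_days(date(2024, 1, 4), 4)
print(deadline.isoformat())
```

A holiday-aware version would need a calendar of non-business days supplied by the organization's counsel or compliance team.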

Quote for the day:

“The more you lose yourself in

something bigger than yourself, the more energy you will have.” -- Norman Vincent Peale