AI gold rush makes basic data security hygiene critical

APIs, in particular, are hot targets as they are widely used today and often
carry vulnerabilities. Broken object level authorization (BOLA), for instance,
is among the top API security threats identified by the Open Worldwide
Application Security Project (OWASP). In BOLA incidents, attackers exploit
weaknesses in how users are authorized and succeed in making API requests that
access data objects they should not be able to reach.
Such oversights underscore the need for organizations to understand the data
that flows over each API, Ray said, adding that this area is a common challenge
for businesses. Most do not even know where or how many APIs they have running
across the organization, he noted. There is likely an API for every application
that is brought into the business, and the number further increases amid
mandates for organizations to share data, such as healthcare and financial
information. Some governments are recognizing such risks and have introduced
regulations to ensure APIs are deployed with the necessary security safeguards,
he said. And where data security is concerned, organizations need to get the
fundamentals right.
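
To make the BOLA pattern concrete, here is a minimal Python sketch. It is not taken from the article: the `Invoice` type, the in-memory `INVOICES` store, and both handler functions are hypothetical names chosen for illustration. The first handler returns whatever object ID the caller asks for, while the second adds the object-level ownership check whose absence defines BOLA.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Invoice:
    invoice_id: int
    owner_id: int
    amount: float


# Hypothetical in-memory store standing in for a real database.
INVOICES = {
    1: Invoice(invoice_id=1, owner_id=100, amount=25.0),
    2: Invoice(invoice_id=2, owner_id=200, amount=990.0),
}


class Forbidden(Exception):
    """Raised when the caller is not allowed to read the requested object."""


def get_invoice_vulnerable(caller_id: int, invoice_id: int) -> Invoice:
    # BOLA: the caller is known, but ownership of the object is never checked,
    # so any logged-in user can read any invoice by guessing its ID.
    return INVOICES[invoice_id]


def get_invoice_checked(caller_id: int, invoice_id: int) -> Invoice:
    invoice = INVOICES[invoice_id]
    # Object-level authorization: compare the object's owner with the caller.
    if invoice.owner_id != caller_id:
        raise Forbidden(f"user {caller_id} may not read invoice {invoice_id}")
    return invoice
```

In this sketch, user 100 asking for invoice 2 gets it from the vulnerable handler but receives `Forbidden` from the checked one. A real API would tie `caller_id` to a verified session or token rather than accept it as a plain argument.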

Microsoft pushes for government regulation of AI. Should we trust it?

By focusing on legislation for the dramatic-sounding but faraway potential
apocalyptic risks posed by AI, Altman wants Congress to pass important-sounding,
but toothless, rules. They largely ignore the very real dangers the technology
presents: the theft of intellectual property, the spread of misinformation in
all directions, job destruction on a massive scale, ever-growing tech
monopolies, loss of privacy and worse. If Congress goes along, Altman, Microsoft
and others in Big Tech will reap billions, the public will remain largely
unprotected, and elected leaders can brag about how they’re fighting the tech
industry by reining in AI. At the same hearing where Altman was hailed, New
York University professor emeritus Gary Marcus issued a cutting critique of AI,
Altman, and Microsoft. He told Congress that it faces a “perfect storm of
corporate irresponsibility, widespread deployment, lack of regulation and
inherent unreliability.” He charged that OpenAI is “beholden” to Microsoft, and
said Congress shouldn’t follow Altman’s recommendations.

Ghostscript bug could allow rogue documents to run system commands

The problem came about because Ghostscript’s handling of filenames for output
made it possible to send the output into what’s known in the jargon as a pipe
rather than a regular file. Pipes, as you will know if you’ve ever done any
programming or script writing, are system objects that pretend to be files, in
that you can write to them as you would to disk, or read data in from them,
using regular system functions such as read() and write() on Unix-type systems,
or ReadFile() and WriteFile() on Windows… but the data doesn’t actually end up
on disk at all. Instead, the “write” end of a pipe simply shovels the output
data into a temporary block of memory, and the “read” end of it sucks in any
data that’s already sitting in the memory pipeline, as though it had come from a
permanent file on disk. This is super-useful for sending data from one program
to another. When you want to take the output from program ONE.EXE and use it as
the input for TWO.EXE, you don’t need to save the output to a temporary file
first, and then read it back in using the > and < characters for file
redirection.
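
The pipe mechanism described above can be sketched in a few lines of Python. This is only an illustration of how one program's output feeds another without a temporary file, and it says nothing about Ghostscript's own code. The two inline child programs (`PRODUCER` and `CONSUMER`) and the `run_pipeline` helper are hypothetical stand-ins for ONE.EXE and TWO.EXE.

```python
import subprocess
import sys

# Two tiny child programs, written inline so the example is self-contained.
PRODUCER = "print('hello from one')"
CONSUMER = "import sys; print(sys.stdin.read().upper(), end='')"


def run_pipeline() -> str:
    # Start the first program with its standard output connected to a pipe.
    one = subprocess.Popen(
        [sys.executable, "-c", PRODUCER], stdout=subprocess.PIPE
    )
    # Start the second program reading from the read end of that same pipe.
    two = subprocess.Popen(
        [sys.executable, "-c", CONSUMER],
        stdin=one.stdout,
        stdout=subprocess.PIPE,
    )
    # Close our copy of the pipe so the consumer sees end-of-file
    # once the producer exits.
    one.stdout.close()
    output, _ = two.communicate()
    one.wait()
    return output.decode()


if __name__ == "__main__":
    print(run_pipeline(), end="")
```

The data passes from the first process to the second through memory only. The security relevance is that anything able to choose "a pipe" as an output destination is effectively choosing a program to run, which is why output filenames deserve careful handling.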

Island Enterprise Browser: Intelligent security built into the browsing session

It is essential to begin with the fact that Island policies are
straightforward to configure. By the nature of the Application Boundary
concept mentioned above, there is usually little need to focus on the painful
granular efforts of traditional data protection approaches. Leveraging such
facilities will ensure that organizational data remains within the corporate
application footprint, allowing data to move freely when desired across that
footprint while preventing the spillage of corporate data into undesirable
places. ... Island has very flexible logging and audit features. Because the
browser is a natural termination point for SSL traffic, Island does not have
to leverage complex break-and-inspect mechanics required by countless security
tools to gain visibility and control. The result is that Island has unimpeded,
very natural visibility over application usage. Most importantly, the ability
to have dexterity in audit logging delivers complete privacy for the user at
the proper times, anonymized but audited logging at other times, and even deep
audit over any application engagement at other times.

Get Ahead of the Curve: Crafting a Roadmap to a Successful Data Governance Strategy!

Crafting a seamless data governance plan is crucial for any organization that
wants to move from data anarchy to order. A well-designed data governance plan
can help ensure that data is accurate, consistent, and secure. It can also
help organizations comply with regulatory requirements and avoid costly data
breaches. To create a seamless data governance plan, it is important to start
by identifying the key stakeholders and their roles in the data governance
process. This includes identifying who will be responsible for data
management, who will be responsible for data quality, and who will be
responsible for data security. Once the key stakeholders have been identified,
it is important to establish clear policies and procedures for data
governance. This includes defining data standards, establishing data quality
metrics, and creating data security protocols. It is also important to
establish a system for monitoring and enforcing these policies and procedures.
By following these steps, organizations can create a seamless data governance
plan that will help them move from data anarchy to order.

History Never Repeats. But Sometimes It Rhymes.

Imagine Red Hat succeeds in eliminating all vendors it calls “rebuilders” from
Enterprise Linux. Congratulations, Red Hat! You’re now king of the hill, and
all users who want a “true” Enterprise Linux will be purchasing Red Hat
subscriptions! What will this do for the Enterprise Linux ecosystem? According
to Mike McGrath, Red Hat’s Vice President of Core Platforms, this will allow
Red Hat to invest all that extra subscription money into creating new and
innovative open source software and employing lots of new open source
developers. Maybe. But having been in the industry for a long time, my
suspicions are that IBM shareholders might have other uses for that money.
More likely, in my opinion, is that users, who value freedom and control over
their own computing destiny more than anything else, will swiftly migrate off
the RHEL platform. Where will they go? That’s where my crystal ball isn’t so
good. Maybe some will go to Debian and derivatives. Some will go to SuSE
Enterprise Linux. The short-sighted ones will migrate workloads back to the
welcoming arms of Microsoft Windows, or, being more charitable about
Microsoft, an Enterprise Linux distribution running on top of Microsoft
Azure.

How to Address AI Data Privacy Concerns

Companies developing AI systems can take several approaches to protecting data
privacy. Data scientists need to be educated on data privacy, but company
leadership needs to recognize they are not the ultimate experts on privacy.
“Companies also can provide their data scientists with tools that have
built-in guardrails that enforce compliance,” says Manasi Vartak, founder and
CEO of Verta, a company that provides management and operations solutions for
data science and machine learning teams. “Companies have to deploy a variety of
technical strategies to protect data privacy; there is an entire spectrum of
privacy preservation technologies out there to address such issues,” says
Adnan Masood, PhD, chief AI architect at digital transformation solutions
company UST. He points to approaches like tokenization, which replaces
sensitive data elements with non-sensitive equivalents. Anonymization and the
use of synthetic data are also among the potential privacy preservation
strategies. “On the cutting edge, we have techniques like fully homomorphic
encryption, which allows computations to be performed on encrypted data
without ever needing to decrypt it,” says Masood.
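
As a rough illustration of the tokenization idea Masood mentions, the Python sketch below swaps a sensitive value for a random token and keeps the mapping in a separate vault. The `TokenVault` class, its method names, and the `tok_` prefix are assumptions made for this example, not any vendor's product or API. A production vault would be a hardened, access-controlled service rather than a dictionary in memory.

```python
import secrets


class TokenVault:
    """Hypothetical in-memory vault mapping tokens to sensitive values."""

    def __init__(self) -> None:
        self._token_to_value: dict[str, str] = {}
        self._value_to_token: dict[str, str] = {}

    def tokenize(self, value: str) -> str:
        # Reuse the existing token so the same input always maps to one token,
        # which keeps joins and counts on tokenized data meaningful.
        if value in self._value_to_token:
            return self._value_to_token[value]
        # The token is random, so it reveals nothing about the original value.
        token = "tok_" + secrets.token_hex(8)
        self._token_to_value[token] = value
        self._value_to_token[value] = token
        return token

    def detokenize(self, token: str) -> str:
        # Only callers with access to the vault can recover the original.
        return self._token_to_value[token]


if __name__ == "__main__":
    vault = TokenVault()
    token = vault.tokenize("4111-1111-1111-1111")
    print(token)                    # random token, different on each run
    print(vault.detokenize(token))  # the original value
```

Data scientists can then work with the tokenized column, while the vault stays with a team that is cleared to handle the underlying data.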

India’s stock market regulator Sebi releases cybersecurity consultation paper

Cybersecurity experts hailed the consultation paper by Sebi as a step in the
right direction. "By and large these entities are becoming very fertile
targets of continuing cyberattacks and cybersecurity breaches," said Dr. Pavan
Duggal, cyber law expert and practicing advocate at the Supreme Court of
India, adding that there has been a need felt for quite some time for a
consolidated cybersecurity and cyberresilience framework. "Sebi had come up
with a cyberresilience framework some years ago, but the intersection of
cybersecurity and cyberresilience had not been addressed. It is also an
extension of what the existing principles of law are already stating," Duggal
said. "Under the new updated IT rules 2023, every regulated entity has to
adopt reasonable security practices and procedures to protect third-party
data. In Sebi-regulated entities, these could become the parameters of due
diligence on cybersecurity," Duggal said, adding that in the absence of a
dedicated cybersecurity law and cyberresilience law, the framework assumes
more relevance.

Taking the risk out of the semiconductor supply chain

Even before the most recent supply chain challenges, political leaders around
the world had been taking a close look at the current semiconductor supply
chain model. Semiconductors across the global economy have the potential to
shape supply chains for numerous commercial electronics, as well as components
essential to critical infrastructures, such as telecommunications and
financial services. Perhaps more importantly, the supply of semiconductors has
worldwide security implications, affecting national and regional defense and
emergency response capabilities. Given its geopolitical impact, many
policymakers have concluded that the existing semiconductor supply chain model
is too risky and are responding accordingly. Some of that risk is being
addressed at national and regional levels, such as the U.S. CHIPS Act and the
EU Chips Act. However, investments in these initiatives are heavily focused on
building new wafer fabrication facilities, or “fabs.” While fabs make up a
critical part of the manufacturing process, increased fab production alone
cannot better secure the global supply chain.

Are cloud architects biased?

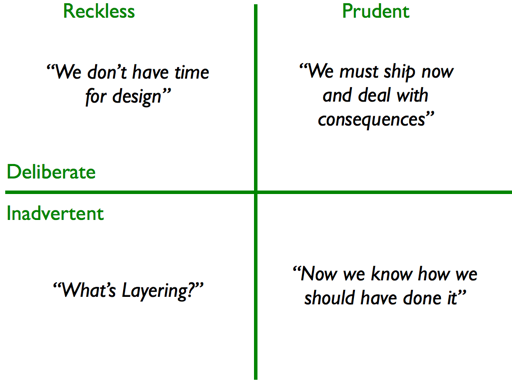

Don’t get me wrong; this does not mean a specific technology stack is
incorrect. At issue is that we’ve pulled back from working from the
requirements to the solutions, and now things are the other way around. The
reasons that many people are “compromised” are easy to define. Everything
works. You put up a technology stack and adapt it to solve the problem;
however, if it’s not the fully optimized solution, it will cost the business
millions of dollars over its life cycle, and at some point, it will stop
working and will have to be fixed. There is no immediate punishment for picking
underoptimized solutions. Therefore, success is declared, and the project
leader moves on to other decisions with their bias reinforced by the false
perception of success. This dysfunctional process makes things worse and
creates so much technical debt. I’m not suggesting that cloud architects are
getting money under the table to pick one technology stack over another. I am
concerned they have not opened their minds to other options, even significant
changes such as leveraging traditional on-premises solutions over cloud-based
ones or vice versa.

Quote for the day:

"The litmus test for our success as Leaders is not how many people we are
leading, but how many we are transforming into leaders" -- Kayode Fayemi