"AI becomes the UI, meaning that the pull-based/request-response model of using apps and services gradually disappears," Agarwal wrote. "Smartphones are still low IQ, because for the most part you have to pick them up, launch them and ask something, and then get a response back. In better-designed apps, however, the app initiates interactions via push notifications. Let's take this a step further so that an app, bot, or a virtual personal assistant using artificial intelligence will know what to do, when, why, where and how. And just do it." ... When it comes to machine learning, Agarwal wrote, the advances we'll see in 2019 are part of a logical evolution of that technology. "The most valuable data comes with context," he said, "what you've done before, what questions you've asked, what other people are doing, what's normal versus odd activity. And the best understanding comes from the depth of data in domain-specific use cases, such as manufacturing, marketing campaigns, e-commerce sites, or IT operations center."

Email trustworthiness: Here’s how to avoid looking like spam

At the same time SPF was being published, a second standard was in the works: DKIM (DomainKeys Identified Mail), a cryptographic solution for ensuring that content wasn’t tampered with during message transport. Creating standards around where a message originates, and around whether its content on receipt matches what was sent, greatly helps establish the trustworthiness of a given email and its sender. But again, this was not a complete solution to the global epidemic of spam. DKIM, along with SPF, became the foundation for DMARC (Domain-based Message Authentication, Reporting and Conformance) in 2011. DMARC allows the sender of an email to create a set of instructions for the receiving domain on what to do if the message fails an SPF or DKIM check. This policy makes it very difficult to spoof brands and deliver fraudulent messages to unsuspecting recipients, or hijack pieces of content to fool filters.
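To make the DMARC mechanism above concrete, here is a minimal Python sketch of how a receiver might read a sender's published policy and decide what to do with a message. The record, the domain `example.com`, and the function names are illustrative assumptions, not taken from the article; real receivers also handle alignment modes, subdomain policies, and sampling percentages, all of which are skipped here.

```python
def parse_dmarc(record: str) -> dict[str, str]:
    """Split a DMARC TXT record into its tag=value pairs."""
    tags = {}
    for part in record.split(";"):
        if "=" in part:
            key, value = part.split("=", 1)
            tags[key.strip().lower()] = value.strip()
    return tags


def disposition(record: str, spf_aligned_pass: bool, dkim_aligned_pass: bool) -> str:
    """Return what the receiver should do with a message (simplified).

    DMARC is satisfied when at least one of SPF or DKIM passes for the
    sender's domain; otherwise the sender's requested policy applies.
    """
    tags = parse_dmarc(record)
    if tags.get("v") != "DMARC1":
        return "no-policy"  # not a DMARC record, nothing to enforce
    if spf_aligned_pass or dkim_aligned_pass:
        return "deliver"
    # "p" is the requested policy: none, quarantine, or reject
    return tags.get("p", "none")


# Hypothetical record a sender might publish in DNS at _dmarc.example.com
EXAMPLE_RECORD = "v=DMARC1; p=reject; rua=mailto:dmarc-reports@example.com"

print(disposition(EXAMPLE_RECORD, spf_aligned_pass=False, dkim_aligned_pass=False))
```

With a `p=reject` policy, a message that fails both checks is refused outright, which is what makes spoofing a protected brand so difficult. The `rua` tag names the address where receivers send aggregate reports back to the sender.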

While advances in recognition algorithms are important, improvements are more pressing on the sensor side to provide higher quality input for the algorithms to analyze. In an interview with Bloomberg last month, Sony's sensor head Satoshi Yoshihara indicated that 3D camera sensors with advanced depth sensing are coming in 2019. Sony's depth sensing method relies on measuring the time it takes for invisible laser pulses to travel to the target and back to the handset. ... Despite these advances, legal frameworks for biometric security are still inadequate, with no apparent interest or desire among policymakers to address the problems. While legal protections exist against forcing suspects to disclose passwords to, or unlock devices for the convenience of, law enforcement, biometric authentication can be exploited by anyone with physical hardware access. In 2018, police in Ohio unlocked an iPhone X by forcing a suspect to put their face in front of the phone.
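The time-of-flight principle mentioned above for Sony's depth sensors comes down to simple arithmetic: the pulse covers the distance twice, so the range is half the round-trip time multiplied by the speed of light. The sketch below illustrates only that calculation; the function name and the sample timing are assumptions, and it says nothing about how Sony's hardware actually measures or filters the signal.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def distance_from_round_trip(round_trip_seconds: float) -> float:
    """Estimate the distance to a target from a light pulse's round-trip time."""
    if round_trip_seconds < 0:
        raise ValueError("round-trip time cannot be negative")
    # The pulse travels to the target and back, so halve the total path.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_seconds / 2.0


# A hypothetical 2-nanosecond round trip corresponds to roughly 0.3 m.
print(f"{distance_from_round_trip(2e-9):.3f} m")
```

The tiny time scales involved show why sensor quality matters: resolving a centimeter of depth means telling apart round-trip times that differ by well under a tenth of a nanosecond.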

Microsoft Releases Surface Diagnostic Toolkit for Business

The Surface Diagnostic Toolkit for Business has two "modes," a desktop mode and a command-line mode, according to Microsoft's documentation. The desktop mode, which has a graphical user interface, is used to assist end users in help-desk fashion or it can be used to create a "distributable .MSI package" for deployment on Surface devices, where the end users are the ones to carry out the tests. Using the toolkit's command-line mode, IT pros can collect details about a Surface device's system information. They also can gather Surface device health indicators via built-in Best Practice Analyzer capabilities. The toolkit will show information about any missing drivers or firmware updates. It'll also report on the warranty status of a Surface device. The tests carried out by the toolkit's Best Practice Analyzer segment will check the state of a Surface device's BitLocker encryption and Trusted Platform Module, and whether or not Secure Boot protection has been enabled on the device's processor.

HHS Publishes Guide to Cybersecurity Best Practices

The goal of the guidance is to aid healthcare entities - regardless of their current level of cyber sophistication - in bolstering their preparedness to deal with the ever-evolving cyber threat landscape. "I spend a lot of time in healthcare providers that run the gamut in size and security maturity and still the top two questions are either: 'Where do I start?' or 'What do I do next, now that this part is done,'" says former healthcare CIO David Finn, an executive vice president at security consulting firm CynergisTek. "The days of small providers not knowing what to do or large providers thinking they've done all they need to do are over," adds Finn. HHS notes in a statement that the "Health Industry Cybersecurity Practices: Managing Threats and Protecting Patients" document is the culmination of a two-year effort involving more than 150 cybersecurity and healthcare experts from industry and the government under the Healthcare and Public Health Sector Critical Infrastructure Security and Resilience Public-Private Partnership.

Three Ways Legacy WAFs Fail

At the time, a drop-in web application security filter seemed like a good idea. Sure, it sometimes led to blocking legitimate traffic, but such is life. It provided at least some level of protection at the application layer — a place where compliance regimes were desperate for solutions. Then PCI (Payment Card Industry) regulations got involved, and the whole landscape changed. ... Most people weren’t installing WAFs due to their security value — they just wanted to pass their mandatory PCI certification. It’s fair to say that PCI singlehandedly grew the legacy WAF market from an interesting idea to the behemoth that it is today. And the legacy WAF continues to hang around, an outdated technology propped up by legalese rather than actual utility, providing a false sense of security without doing much to ensure it. If that isn’t enough for you to show your legacy WAF the door, here are three more reasons why legacy WAFs should be replaced.

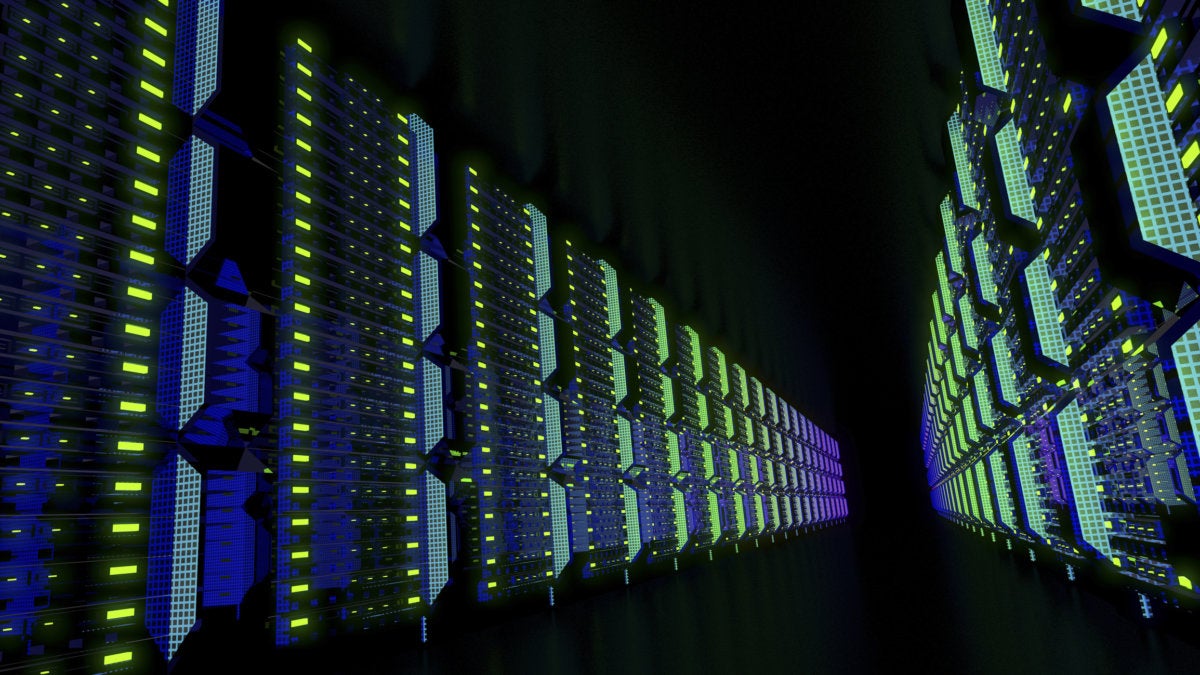

Poor data-center configuration leads to severe waste problem

The EPA estimates e-waste, disposed electronics, now accounts for 2 percent of all solid waste and 70 percent of toxic waste, thanks to the use of chemicals such as lead, mercury, cadmium and beryllium, as well as hazardous chemicals such as brominated flame retardants. A lot of that is old servers and components. And much of that is due to poor configuration and management, according to a study from server vendor Supermicro. In a survey of people who purchase and administer data-center hardware (pdf), only 59 percent of the 361 respondents consider energy efficiency important when building or leasing a new data center. It's fourth on the priorities list behind security, performance, and connectivity when managing existing data centers. The result? About 58 percent of respondents did not know their data-center Power Usage Effectiveness (PUE). PUE indicates how much of a facility's energy goes to overhead such as cooling rather than to the IT equipment itself.
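For readers who, like many of the survey respondents, have not worked out their own figure: PUE is commonly defined as total facility energy divided by the energy delivered to IT equipment, so a value of 1.0 would mean no overhead at all. The Python sketch below shows that calculation; the function name and the sample meter readings are hypothetical, not data from the Supermicro study.

```python
def power_usage_effectiveness(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Compute PUE as total facility energy divided by IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    if total_facility_kwh < it_equipment_kwh:
        raise ValueError("total facility energy cannot be less than IT energy")
    return total_facility_kwh / it_equipment_kwh


# Hypothetical readings: 1,500 kWh drawn by the facility, 1,000 kWh by IT gear.
pue = power_usage_effectiveness(1500.0, 1000.0)
print(f"PUE = {pue:.2f}")  # every 1 kWh of compute costs 0.5 kWh of overhead
```

Both readings need to cover the same period, which in practice means metering the facility feed and the IT load separately.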

Now is the time to get serious about your cloud strategy

The move away from enterprise data centers has been less aggressive than predicted. It seems that many applications and data sets can’t live anywhere else, according to enterprise IT, and while cloud computing is an option, IT views it as a tactical solution. The fact is that cloud computing is no bed of roses. Costs are typically higher than expected, migration is typically costlier and more complex than expected, and operations are much more laborious than expected. However, cloud computing keeps you out of the hardware and software procurement and operations weeds, letting you move faster. And, if you’re smart in its usage, cloud computing can make things much cheaper and lower risk. Generally speaking, cloud computing makes you more agile and is cheaper most of the time. So why is the move to cloud computing, and the shutdown of enterprise data centers, so slow?

Reviewing 2018, predicting 2019

Software as we know it has fundamentally been a set of rules, or processes, encoded as algorithms. Of course, over time its complexity has been increasing. APIs enabled modular software development and integration, meaning isolated pieces of software could be combined and/or repurposed. This increased the value of software, but at the cost of also increasing complexity, as it made tracing dependencies and interactions nontrivial. But what happens when we deploy software based on machine learning approaches is different. Rather than encoding a set of rules, we train models on datasets and release them into the wild. When situations occur that are not sufficiently represented in the training data, results can be unpredictable. Models will have to be re-trained and validated, and software engineering and operations need to evolve to deal with this new reality. Machine learning is also shaping the evolution of hardware. For a long time, hardware architecture has been more or less fixed, with CPUs being its focal point.

Will greater clarity on regulation 'considerably expand' .. crypto market?

Self-regulation will be necessary, because global regulatory bodies move incredibly slowly, especially in such a complex space as the world of digital currencies. “In 2019, the cryptocurrency market is set to radically evolve,” confirms Green. “We can expect considerable expansion of the sector largely due to inflows of institutional investors.” “Major corporations, financial institutions, governments and their agencies, prestigious universities and household-name investing legends are all going to bring their institutional capital and institutional expertise to the crypto market.” “The direction of travel has already been on this path, but there is a growing sense that institutional investors are preparing to move off the sidelines in 2019.” This prediction is optimistic, and if anything is certain in the crypto space, it is uncertainty.

Quote for the day:

"The leader has to be practical and a realist, yet must talk the language of the visionary and the idealist." -- Eric Hoffer