Partner Across Teams to Create a Cybersecurity Culture

Just because a software engineer doesn’t work on the security team doesn’t mean that security isn’t their responsibility. In addition to standard security training, you can further empower your engineering teams by training and encouraging them to think like hackers. Some time ago, I was fortunate enough to work for a company that held annual competitions with prizes and bragging rights. These competitions served as security training and engaged us in a series of engineering puzzles covering SQL injection, cross-site scripting (XSS), cryptography, and social engineering. ... Even with well-implemented training programs and a dedicated cadre of security-minded engineers building your applications, there is still plenty for your security engineers to work on. The shared-responsibility model will reduce the risk of successful phishing attacks and other malicious activity, but it won’t eliminate it. Ideally, security teams will move from constantly fighting fires to pursuing strategic initiatives. Those initiatives should further improve the organization’s security, automate risk detection wherever possible, and prepare the organization for the future.
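The excerpt doesn’t describe the actual competition puzzles. The following is only an illustrative sketch of the kind of SQL injection exercise such a competition might include. It assumes Python’s built-in `sqlite3` module and an in-memory database. The table, the data, and the function names (`make_db`, `lookup_unsafe`, `lookup_safe`) are hypothetical.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Create an in-memory database holding two hypothetical user accounts."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, secret TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "alice-secret"), ("bob", "bob-secret")],
    )
    return conn


def lookup_unsafe(conn: sqlite3.Connection, name: str) -> list:
    # VULNERABLE: user input is pasted directly into the SQL text,
    # so a crafted name can change the structure of the query.
    query = f"SELECT secret FROM users WHERE name = '{name}'"
    return [row[0] for row in conn.execute(query)]


def lookup_safe(conn: sqlite3.Connection, name: str) -> list:
    # SAFE: the "?" placeholder makes the driver bind the value as data,
    # never as SQL syntax.
    return [
        row[0]
        for row in conn.execute("SELECT secret FROM users WHERE name = ?", (name,))
    ]


if __name__ == "__main__":
    conn = make_db()
    payload = "x' OR '1'='1"  # classic "always true" injection payload
    print(lookup_unsafe(conn, payload))  # leaks every user's secret
    print(lookup_safe(conn, payload))    # returns an empty list
```

The "think like a hacker" part of the puzzle is crafting the payload. The lesson is that parameterized queries, rather than string formatting, close the hole.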

Agile Doesn’t Work Without Psychological Safety

Soon after implementing agile, many organizations revert to the default position of worshiping at the altar of technical processes and tools, because cultural considerations seem abstract and difficult to operationalize. It’s easier to pay lip service to the human side and then move on to scrumming, sprinting, kanbaning, and kaizening. These processes serve as tangible, measurable, and observable indicators, giving the illusion of success and the appearance of developing agile at scale. Begin your agile transformation by framing agile as a cultural change rather than a technical or mechanical implementation. In doing so, be careful not to approach culture as a workstream. A workstream is the progressive completion of the tasks required to finish a project. When we approach culture as a workstream within the context of agile, we classify it as something that can be completed. Culture cannot be completed. Yet I see agile teams attempting to project-manage it as part of the work breakdown structure, as if it had a beginning, middle, and end. It doesn’t.

Inside the U.K. lab that connects brains to quantum computers

Brain-computer interfaces (BCIs) and quantum computers are undoubtedly promising technologies emerging at the same point in history, but why bring them together? That is exactly what a consortium of researchers is seeking to do. It brings together the U.K.’s University of Plymouth, Spain’s University of Valencia and University of Seville, Germany’s Kipu Quantum, and China’s Shanghai University. Technologists love nothing more than mashing together promising concepts or technologies in the belief that, when united, they will be more than the sum of their parts. Sometimes this works gloriously. The venture capitalist Andrew Chen describes one such case in his book The Cold Start Problem. Instagram leveraged the emergence of camera-equipped smartphones and the simultaneous rise of social media’s powerful network effects to become one of the fastest-growing apps in history. Combining two must-have technologies doesn’t always work, though. Apple CEO Tim Cook once quipped that “you can converge a toaster and a refrigerator, but, you know, those things are probably not going to be pleasing to the user.”

Three ways COVID-19 is changing how banks adapt to digital technology

Bank leaders face the difficult task of balancing the traditional approach to risk management with the need to respond quickly to a crisis that has massively changed their operating environment. Criminal cyber activity, including fraud and phishing attacks, has increased as more employees work remotely. However, as one participant said: “We have not yet seen the massive increase in sophisticated, advanced persistent threat cyber attacks that we normally associate with events like these.” As banks shift out of crisis mode, their boards need to address newly emerging risks. These include surveillance of video and voice communications now that everyone is using Zoom and other platforms, data security controls for the use of personal equipment, and cases of third and fourth parties falling victim to cyber incidents. ... As the economic impacts of the pandemic become clearer, banks are updating risk models and stress scenarios in an attempt to stay ahead of the curve. However, uncertainty in the operating environment continues to pose challenges. A lack of regulatory harmonization may further complicate benchmarking among peers across countries, though there is hope that this will improve soon.

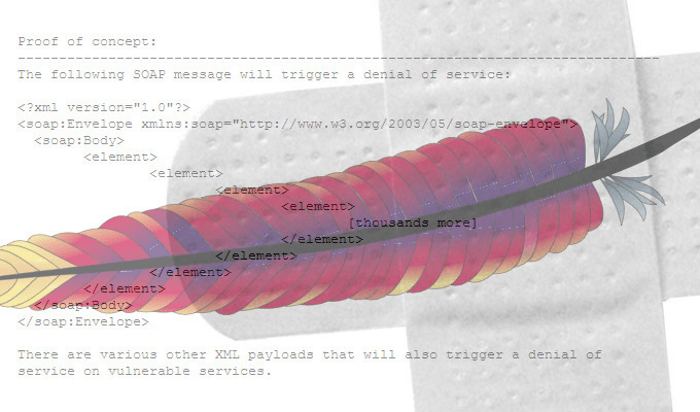

The threat of quantum computing to security infrastructure

The report states: “The encryption technologies that are securing Canada’s financial systems today will one day become obsolete. If we do nothing, the financial data that underpins Canada’s economy will inevitably become more vulnerable to cyber criminals.” In the US, as noted above, the National Security Agency took an early lead in identifying the perceived threat. On January 19, 2022, an action by the US president became public. The White House issued a “Memorandum on Improving the Cybersecurity of National Security, Department of Defense and Intelligence Community Systems.” The document shows the urgency of addressing perceived major threats. It outlines major actions to prevent the security lapses that quantum computers could create by targeting critical secret data and related infrastructure. It also assigns management responsibilities to the various agencies for implementing these measures within a matter of months. This perceived threat to existing cybersecurity will generate a great deal of private-industry activity. It will also bring well-funded new companies into the business of transitioning to new security solutions.

AI fairness in banking: the next big issue in tech

“People want to be treated fairly by an agent, whether artificial or not. The difference for a lot of applications is that people are not aware of the full extent of the decision making and the statistical regularities across a larger population where some of these issues can arise. There is a lot of cynicism around these decisions.” He adds that there are technical as well as organisational solutions that financial services providers need to apply. Combined with transparent policies about the processes in place, these form an overall strategy. He continues: “The first thing is to have processes of regularly reporting on and examining and making corrections to data that is used to train models as well as to test them.

“So, a simple test is representation of people that belong to legally protected categories by race, age, gender, ethnic origin and religious status to determine if there is enough data to represent each of these groups with accurate models. In addition, there is a need to determine whether there are other inputs to the model or features that could be correlated with these protected classes and have a potentially adverse or discriminatory impact on the output of the model.”
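The two checks described in the quote can be sketched in plain Python. The first counts records per protected group; the second flags features that correlate with a protected class. This is a minimal illustration under stated assumptions, not the speaker’s method. It assumes tabular data, a single protected attribute encoded as a 0/1 indicator, and Pearson correlation as the screening statistic. The `min_count` and `threshold` values, the function names, and the toy data are all hypothetical.

```python
from collections import Counter
from math import sqrt


def representation_report(groups, min_count):
    """Count training records per protected group.

    Returns {group: (count, has_enough_data)}. The min_count cut-off is a
    hypothetical policy choice, not a standard value.
    """
    counts = Counter(groups)
    return {group: (n, n >= min_count) for group, n in counts.items()}


def pearson(xs, ys):
    """Pearson correlation of two equal-length numeric sequences.

    With a 0/1 group indicator as ys, this is the point-biserial correlation.
    Returns 0.0 if either sequence is constant.
    """
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    if spread_x == 0 or spread_y == 0:
        return 0.0
    return cov / (spread_x * spread_y)


def proxy_features(features, protected, threshold):
    """Flag features whose |correlation| with the protected indicator >= threshold.

    A flagged feature may act as a proxy for the protected class. This screen
    only catches linear, single-feature relationships.
    """
    flagged = {}
    for name, values in features.items():
        r = pearson(values, protected)
        if abs(r) >= threshold:
            flagged[name] = round(r, 3)
    return flagged


if __name__ == "__main__":
    # Hypothetical toy data: one protected attribute with groups "A" and "B".
    groups = ["A", "A", "A", "B", "A", "B", "A", "A"]
    print(representation_report(groups, min_count=3))
    # {'A': (6, True), 'B': (2, False)}

    is_group_b = [1 if g == "B" else 0 for g in groups]
    features = {
        "postcode_score": [0.1, 0.2, 0.1, 0.9, 0.2, 0.8, 0.1, 0.3],
        "income": [50, 52, 48, 51, 49, 50, 53, 47],
    }
    # Only postcode_score is flagged as a likely proxy in this toy data.
    print(proxy_features(features, is_group_b, threshold=0.5))
```

A correlation screen like this is only a first pass. Proxies can also arise from combinations of features, so a flagged or unflagged result should prompt human review rather than settle the question.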

4 common misunderstandings about enterprise open source software

It might seem natural to download community-supported bits from the Internet rather than purchase an integrated product. This is especially the case when the community projects are relatively simple and self-contained, or if you have reasons to develop independent expertise or do extensive customization. (Although working with a vendor to get needed changes into the upstream project is a possible alternative in the latter case.) However, if the software isn’t a differentiating capability for your business, remember that hiring the right highly skilled engineers is neither easy nor cheap. There’s also the ongoing support burden if your downloaded projects turn into a fork of the upstream community project. And if you don’t want them to, you’ll need to factor in the time to work in the upstream projects to get needed features added. Categories like enterprise open source container platforms in the cloud-native space also involve a lot of complexity. Download Kubernetes? You’re just getting started. How about monitoring, distributed tracing, CI/CD, serverless, security scanning, and all the other features you’ll want in a complete platform?

Leadership when the chips are down

Particularly noteworthy is the obsessive nature of Shackleton’s encounter with a territory so resistant to accurate perception. We risk bathos in saying that the business landscape presents challenges on a par with the South Pole. Yet the perceptual difficulties posed by Antarctica offer clear parallels for executives and entrepreneurs. The southernmost continent is unpredictable, unstable, and unforgiving. Compasses don’t behave normally. Much of what appears to be terra firma is actually floating ice, and deadly crevasses lurk under the snow. Snow blindness, a painful effect of the dazzling surroundings, can make vision itself impossible. ... Shackleton’s failings as a manager were manifest in his planning for the Heart of the Antarctic expedition. The trip on foot to and from the Pole covered 1,720 miles, yet his four-man unit brought food for just 91 days of hard labor, high altitude, and mind-numbing cold. His return instructions to the crew of the Nimrod, the ship that dropped off his party, were impossibly vague.

How can banks remain relevant in the fastest-growing digital market in the world?

While bolting on a digital banking system may be a quick fix for incumbents, it won’t keep them current for long. The only way for financial institutions (FIs) to truly keep up with the pace of change and future-proof their business is to invest in modern architecture. That architecture gives them the flexibility to develop and deploy products and services at speed. Built with advanced customisation at their core, modern platforms enable FIs to approach product development with a different mindset from those struggling with legacy systems. As a result, FIs benefit from faster time-to-market and can scale up innovative digital operations, offer new products or services, and respond to ever-changing market requirements much faster. Shifting consumer behaviours, coupled with intensified competition, is making it increasingly difficult for banks in the APAC region to remain relevant. They are fighting not only to keep their loyal customer base but also to stay ahead of the curve by offering customers the advanced digital services they require. Only by ensuring they have a comprehensive, future-proof system in place, underpinning their operations, will they truly be able to embrace the digital future.

Sustaining Agile Transformation – Our Experience

The organization needs to rethink and create a career roadmap for Agile roles such as Product Owner, Scrum Master, and Developer. The organization must build and enhance the self-paced learning experience, embed learning experiences, develop role-based training, develop new learning areas, and so on. For certain key roles, the organization can focus on establishing academies such as a Scrum Master Academy. This will ensure continuous learning and a flow of trained Scrum Masters as and when needed. Coaching skills should be taught to, and embedded in, Agile leaders and change agents. Ensure that leaders are trained in, and embrace, the foundational values and principles. Establishing and retaining a central team, such as a lean Center of Excellence (CoE), will be very beneficial for overseeing the transformation and providing support when needed. The organization can deliberate on whether to establish the CoE at the divisional or organizational level. Collaborative forums such as Communities of Practice (CoPs), Guilds, and Chapters should be established and run successfully.

Quote for the day:

"Leaders must see the dream in their mind before they will accomplish the dream with their team." -- Orrin Woodward
