How to investigate service provider trust chains in the cloud

Microsoft Detection and Response Team (DART) has been assisting multiple organizations around the world in investigating the impact of NOBELIUM’s activities. While we have already engaged directly with affected customers to assist with incident response related to NOBELIUM’s recent activity, our goal with this blog is to help you answer the common and fundamental questions: How do I determine if I am a victim? If I am a victim, what did the threat actor do? How can I regain control over my environment and make it more difficult for this threat actor to regain access to our environments? ... DAP can be beneficial for both the service provider and end customer because it allows a service provider to administer a downstream tenant using their own identities and security policies. ... Azure AOBO is similar in nature to DAP, albeit the access is scoped to Azure Resource Manager (ARM) role assignments on individual Azure subscriptions and resources, as well as Azure Key Vault access policies. Azure AOBO brings similar management benefits as DAP does.

Sharded Multi-Tenant Database using SQL Server Row-Level Security

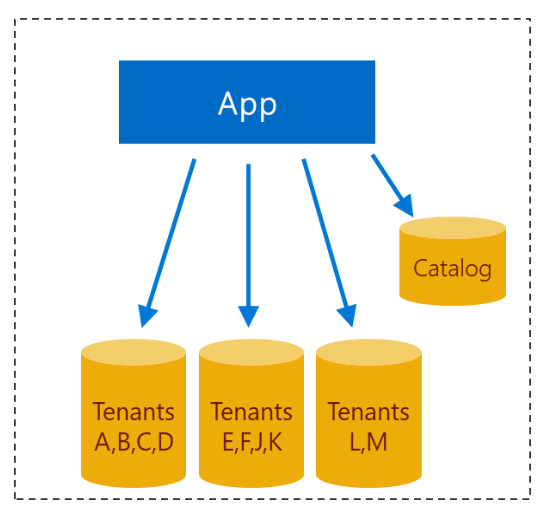

The distribution of tenants among multiple servers can be made using different methods. An intuitive way would be like "put the first 10 tenants in this server A, then only when needed provision a new server B and put the next 10 tenants there, etc". Another method would be starting with a few servers and distributing tenants evenly across those servers: Let's say you have 3 servers called A, B, C, you'd put Tenant1 into A, Tenant2 into B, Tenant3 into C, Tenant4 into A again, Tenant5 into B, etc. So basically tenants are distributed according to (TenantId)%(NumberOfServers). If you don't want to have a single catalog (which as I said before is both a bottleneck and a single point of failure) you can spread your catalog across multiple servers (exactly like the tenants' data) as long as your requests can be routed directly to the right place, which would require the sharding to be based on something like the tenant domain. ... The Security Policy TenantAccessPolicy can be used to apply filters over any number of tables. To make sure that any table with the [TenantId] column will always be filtered, we can create a DDL trigger that will apply the security predicate to any new (or modified) table.
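
The two routing ideas in the excerpt above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the article's implementation: the server names come from the A/B/C example, the function names are hypothetical, tenant IDs are assumed to be 1-based integers (hence the `- 1` so that Tenant1 lands on A), and SHA-256 is an arbitrary choice of stable hash for the domain-based variant.

```python
import hashlib
from typing import Sequence

# Hypothetical server names, taken from the A/B/C example in the text.
SERVERS = ("A", "B", "C")


def server_for_tenant(tenant_id: int, servers: Sequence[str] = SERVERS) -> str:
    """Round-robin placement: (TenantId) % (NumberOfServers)."""
    if tenant_id < 1:
        raise ValueError("tenant_id must be a positive integer")
    # Subtract 1 so Tenant1 lands on the first server, matching the example
    # (Tenant1 -> A, Tenant2 -> B, Tenant3 -> C, Tenant4 -> A, ...).
    return servers[(tenant_id - 1) % len(servers)]


def catalog_shard_for_domain(domain: str, servers: Sequence[str] = SERVERS) -> str:
    """Pick a catalog shard from the tenant domain alone (no central lookup)."""
    # Use a stable hash; Python's built-in hash() is randomized per process.
    digest = hashlib.sha256(domain.strip().lower().encode("utf-8")).digest()
    return servers[int.from_bytes(digest[:8], "big") % len(servers)]


if __name__ == "__main__":
    for tenant_id in range(1, 6):
        print(f"Tenant{tenant_id} -> {server_for_tenant(tenant_id)}")
    print(catalog_shard_for_domain("contoso.example"))
```

One design consequence worth keeping in mind: with plain modulo placement, changing the number of servers changes the result for most tenants, so adding a server generally means moving data or keeping an explicit tenant-to-server mapping.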

How Nvidia aims to demystify zero trust security

Nvidia is succeeding at its mission of demystifying zero trust in datacenters, starting with its BlueField DPU architecture. Its architecture includes secure boot with hardware root-of-trust, secure firmware updates, and Cerberus compliance, with more enhancements to support the build-out of its zero-trust framework. One of Nvidia’s core strengths is its ability to extend and scale DPU core features with SDKs and related software, while scaling to support larger AI and data science workloads. Doubling down on DOCA development this year, Nvidia used GTC 2021 to announce that the 1.2 release supports new authentication, attestation, isolation, and monitoring features, further strengthening Nvidia’s zero-trust platform. In addition, Nvidia says it is seeing momentum in customers and partners signing up for the DOCA early access program. ... Morpheus monitors network activity using unsupervised machine learning algorithms to understand typical behavioral patterns, as well as identity, endpoint, and location parameters across multiple networks.

Privacy vs. Security: What’s the Difference?

The difference between data privacy and data security comes down to who and what your data is being protected from. Security can be defined as protecting data from malicious threats, while privacy is more about using data responsibly. This is why you’ll see security measures designed around protecting against data breaches no matter who the unauthorized party is that’s trying to access that data. Privacy measures are more about managing sensitive information, making sure that the people with access to it only have it with the owner’s consent and are compliant with security measures to protect sensitive data once they have it. ... Using apps with end-to-end encryption is a good way to boost the security of your data online. Messaging services like Signal are encrypted end-to-end, meaning that no one but the sender and recipient of the message can view the data. That’s because the data is encrypted (or scrambled) before being sent, then decrypted only when it hits your device. One caveat here is to make sure the service you’re using is actually end-to-end encrypted.

Five principles for navigating the post-pandemic era

The pandemic has permanently changed what it means to be “at work”. Work is no longer a place you go, but what you do. Hybrid working, and the ability to work from anywhere, is here to stay. A huge part of this shift has been facilitated by our capacity to invent new ways of working fit for the digital age. Video conferencing, the cloud, instant messaging: it’s all part of the same narrative – how technology can facilitate new behaviours and patterns that can benefit the workforce. Network-as-a-Service (NaaS), for example, is a secure, cost-effective subscription-based model that lets businesses of all sizes consume network infrastructure on-demand and as needed. Think of it like a thermostat, where you can increase or decrease temperature to suit your needs. With a solution like NaaS, businesses can ensure their employees have the same security and network connectivity at a coffee shop or at home, as they would in the office. This fundamentally changes what it means to be safe, secure and online – and employees can work from any location.

Enterprise Readiness For The Digital Age: Digital Fluency And Digital Resiliency

Digital fluency is the missing ingredient in many digital transformation efforts. In most cases, I would argue that it’s not the technology that’s holding an employee back but the lack of digital infrastructure, culture, leadership, and skills, which are required to thrive alongside technologies. Digital literacy in the workforce can be tricky, especially for a large organization with thousands of employees. Companies must consider each employee’s age, background, educational qualification, and current digital literacy level. The challenges go beyond diversity and inclusion (D&I); they also include resistance to change, fear of missing out (FOMO), tracking change management, the continuous process of change, etc. To be successful, businesses will need to provide the right digital tools and training to the workforce, including leadership and cultural support to build tech intensity, i.e., an organization’s ability to adapt and integrate the latest technology to develop its unique digital capability and trust factor.

Why cybersecurity training needs a post-pandemic overhaul

Unless you’re training tech workers, there really is no reason to overwhelm your learners with industry jargon. The average employee will struggle with overly technical language and may end up missing the point of the training entirely while trying to memorize complicated terms. Cybersecurity training materials should be written in layman’s terms. An accessible training language is the first step in making any kind of training stick. Another unfortunate side-effect of relying too heavily on industry jargon during training is that it makes the average employee unable to see how this training could relate to their daily job operations. When your training materials are abstract or don’t incorporate real-life scenarios, they can be more easily disregarded as something that employees probably won’t have to deal with. However, this could not be further from the truth, especially with the rise of remote and hybrid working. In fact, according to Tanium, 90% of companies faced an increase in cyberattacks due to COVID-19, making cybersecurity training more critical than ever.

Digital transformation: 4 IT leaders share how they fight change fatigue

Digital transformation can be a never-ending journey, but there are still key milestones and inflection points. Breaking the journey down this way helps keep the momentum going and allows time for reflection to make any course corrections. While it’s important to keep looking forward, don’t forget to look back and reflect on how far the organization has come and lessons learned along the way. Additionally, maintain an external perspective on where the competition is and how customer preferences may be changing. Keeping these stakeholders at the center of your plans helps keep everyone energized and focused. Create a culture of embracing change and uncertainty. Many large complex businesses have been focused on eliminating uncertainty and risk, but the digital transformation journey is not one of certainty and zero risk. Getting comfortable with that as a way of surviving and thriving will help transformation team members realize they are not swimming upstream, but with the current.

More Than Half of Indian Loan Apps Illegal, RBI Panel Finds

Some digital lending platforms exploit users' lack of financial awareness and charge them exorbitant interest rates, Rahul Pratap Yadav, chief business officer and strategy at digital payments firm iMoneyPay and former senior vice president at Yes Bank, tells ISMG. He adds that digital lenders ensnare other customers through multilevel marketing and by offering them referral bonuses. The lack of awareness on privacy and the absence of regulatory mandates protecting user identity have also contributed to the list of challenges in the digital lending space, Yadav notes. He recommends that digital lenders "have the right checks and balances in the app, and educate borrowers on financial fraud and getting into bad debt because of financial irresponsibility." The Indian digital lending space is also home to several China-based actors, according to the working group. "Anyone that had access to money and can build an app is capable of becoming a digital lender," Sasi says. Many of these unregulated digital lending apps charge 10% to 15% monthly interest, making the lending market a lucrative business for companies trying to make a quick buck, he says.

Guarding against DCSync attacks

Step one is to implement basic security and hygiene practices for Active Directory. The attack requires the threat actor to have already compromised a domain administrator account or any other account that has been granted the DCSync permissions. As such, monitoring the permissions of your domain head is critical so that you are aware of which accounts or groups have been assigned the powerful DCSync permissions. You might find that you should revoke permissions for some users who were accidentally granted them years ago. ... The second focus for enterprises should be on preventing lateral movement when attackers breach the network. Organizations should control access according to the principle of least privilege. Using a tiering model, where no domain account would ever log onto systems not involved in managing AD itself, will clearly make it harder for adversaries to elevate their privileges. Access rights must be regularly reviewed to ensure users do not have privileges they do not need for their duties.

Quote for the day:

"People seldom improve when they have no other model but themselves." -- Oliver Goldsmith
