Quote for the day:

"Blessed are those who can give without remembering and take without forgetting." -- Anonymous

One Leader, Two Roles: The CISO-DPO Hybrid Model

The convergence is not without its challenges. The breadth of combined responsibilities could lead to overload and burnout for leaders trying to keep pace with evolving technical threats and fast-changing privacy regulations. In addition, lapses in compliance could lead to hefty penalties for the organization, particularly as regulatory bodies are now penalizing CISOs for faltering in their compliance and reporting efforts - a reminder that continuous learning is not optional, but essential. This hybrid role requires people who are multi-skilled and knowledgeable in both domains, a seemingly daunting task. CISOs and DPOs must be viewed as closely associated partners - not as individuals who can cause a conflict of interest - in their compliance journey. ... A hybrid role enables faster translation of regulatory requirements into security controls, resulting in accelerated compliance efforts and improved resilience overall. An integrated approach thus becomes far more efficient than individuals operating in silos, such as the DPO having to rely on a CISO who does not necessarily have a DPO-specific mandate but only an overarching security focus. Enterprises can create an ecosystem where security and privacy reinforce each other, and organizations can foster collaboration and build trust and long-term value in an era of relentless digital risk.

Beyond the Black Box: Building Trust and Governance in the Age of AI

Without adequate controls, organizations run the risk of being sanctioned by regulators, losing their reputation, or facing adverse impacts on people and communities. These threats can be managed only by an agile, collaborative AI governance model that prioritizes fairness, accountability, and human rights. ... Organizations must therefore strike a balance between openness and accountability, holding back where needed to protect sensitive assets. This can be achieved by constructing systems that can explain their decisions clearly, keeping track of how models are trained, and making decisions that use personal or sensitive data interpretable. ... Methods like adversarial debiasing, sample reweighting, and human evaluators assist in fixing errors before they are amplified, making sure the results reflect values like justice, equity, and inclusion. ... Privacy-enhancing technologies (PETs) promote the protection of personal data while enabling responsible usage. For example, differential privacy adds a touch of statistical “noise” to keep individual identities hidden. Federated learning enables AI models to learn from data distributed across multiple devices, without needing access to the raw data. ... Compliance must be embedded in the AI lifecycle by means of impact assessments, documentation, and control scaling, especially for high-risk applications like biometric identification or automated decision-making.
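
To make the differential privacy remark above concrete, here is a minimal Python sketch of the Laplace mechanism applied to a simple counting query. It is an illustration under stated assumptions, not something taken from the article: the function names `laplace_noise` and `private_count` are hypothetical, and the noise scale of `1 / epsilon` assumes that adding or removing one person changes the count by at most 1.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Draw zero-mean Laplace noise as the difference of two exponential draws."""
    # The difference of two i.i.d. exponentials with mean `scale`
    # follows a Laplace distribution with that scale.
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(values, predicate, epsilon: float, rng: random.Random) -> float:
    """Return a noisy count of the items that satisfy `predicate`.

    Assumption: a counting query has sensitivity 1, so the Laplace
    noise scale is 1 / epsilon. Smaller epsilon means more noise.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    true_count = sum(1 for v in values if predicate(v))
    return true_count + laplace_noise(1.0 / epsilon, rng)


if __name__ == "__main__":
    ages = [23, 35, 41, 52, 67, 29]  # made-up sample data
    rng = random.Random(0)
    print(private_count(ages, lambda a: a >= 40, epsilon=1.0, rng=rng))
```

Any single released count is deliberately inexact; the noise averages out only over many queries, which is also why real deployments track how much privacy budget has been spent.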

The rise of purpose-built clouds

The rise of purpose-built clouds is also driving multicloud strategies. Historically, many enterprises have avoided multicloud deployments, citing complexity in managing multiple platforms, compliance challenges, and security concerns. However, as the need for specialized solutions grows, businesses are realizing that a single vendor can’t meet their workload demands. ... Another major reason for purpose-built clouds is data residency and compliance. As regional rules like those in the European Union become stricter, organizations may find that general cloud platforms can create compliance issues. Purpose-built clouds can provide localized options, allowing companies to host workloads on infrastructure that satisfies regulatory standards without losing performance. This is especially critical for industries such as healthcare and financial services that must adhere to strict compliance standards. Purpose-built platforms enable companies to store data locally for compliance reasons and enhance workloads with features such as fraud detection, regulatory reporting, and AI-powered diagnostics. ... The rise of purpose-built clouds signals a philosophical shift in enterprise IT strategies. Instead of generic, one-size-fits-all solutions, organizations now recognize the value in tailoring investments to align directly with business objectives.

Establishing Visibility and Governance for Your Software Supply Chain

Even if your organization isn’t the direct target, you can fall victim to attackers. A supply chain attack designed to gain access to a bank, for example, could also poison your supply chain. The attackers will gladly take your customer information or hold your servers hostage to ransomware. Modern software supply chains are incredibly complex webs of third-party code. To properly secure the supply chain, organizations must first gain visibility into all of the components that go into their applications. This is necessary not just on a per-application basis, but across the entire portfolio. ... The first step is to start building software bills of materials (SBOMs) at build time. The SBOM records what goes into your software, so it is the foundational piece of building asset visibility. You can then use that information to build a knowledge graph about your supply chain, including vulnerabilities and software licenses. When you aggregate these SBOMs across your entire application portfolio, you get a holistic view of all dependencies. ... One final piece of the puzzle is tracking software provenance. Tracking and gating on software provenance gives you another avenue to protect yourself from vulnerable code. This is often overlooked, but given the prevalence of attacks against open source library repositories, it’s more important than ever.

Avoiding chain of custody crisis: In-house destruction for audit-proof compliance

Chain of custody refers to the documented and unbroken trail of accountability that records the lifecycle of a sensitive asset, from creation and use to final destruction. For data stored on physical media like hard disk drives (HDDs), solid state drives (SSDs), or e-media, maintaining a secure and traceable chain of custody is essential for demonstrating regulatory compliance and ensuring operational integrity. ... With the right high-security equipment, such as NSA-listed paper shredders, hard drive crushers and shredders, and disintegrators, destruction can occur at the point of use - or at least within the facility - under supervision and with real-time documentation. This eliminates transport risks, reduces reliance on third parties, and keeps sensitive data within your organization’s security perimeter. ... Compliance auditors are increasingly looking beyond destruction certificates. They want transparency. That means policies, procedures, logs, and physical proof. With an in-house program, organizations can tailor destruction workflows to meet specific regulatory frameworks, from NIST 800-88 guidelines to DoD or ISO standards. ... High-security data destruction isn’t just about preventing breaches. It’s about instilling confidence both internally with leadership and stakeholders, and externally with regulators and clients. By keeping destruction in-house, organizations send a clear message: data security is non-negotiable.
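
One way to picture the "documented and unbroken trail" described above is a log in which each entry carries a hash of the entry before it, so that any later alteration becomes detectable. The Python sketch below is purely illustrative and is not prescribed by the article or by any of the standards it names; `CustodyEvent`, `append_event`, `verify_chain`, and all field names are hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class CustodyEvent:
    asset_id: str    # e.g. a drive serial number (hypothetical field)
    action: str      # e.g. "received", "stored", "destroyed"
    operator: str    # who handled the asset
    timestamp: str   # ISO 8601 string supplied by the caller
    prev_hash: str   # hash of the previous entry, "" for the first entry
    entry_hash: str  # hash over this entry's fields plus prev_hash


def _digest(asset_id, action, operator, timestamp, prev_hash) -> str:
    """Hash the entry fields together with the previous entry's hash."""
    payload = json.dumps(
        [asset_id, action, operator, timestamp, prev_hash], separators=(",", ":")
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_event(log, asset_id, action, operator, timestamp):
    """Return a new log with one more event linked to the current tail."""
    prev_hash = log[-1].entry_hash if log else ""
    entry_hash = _digest(asset_id, action, operator, timestamp, prev_hash)
    event = CustodyEvent(asset_id, action, operator, timestamp, prev_hash, entry_hash)
    return log + [event]


def verify_chain(log) -> bool:
    """Check that every entry links to its predecessor and is unmodified."""
    prev_hash = ""
    for e in log:
        if e.prev_hash != prev_hash:
            return False
        expected = _digest(e.asset_id, e.action, e.operator, e.timestamp, e.prev_hash)
        if e.entry_hash != expected:
            return False
        prev_hash = e.entry_hash
    return True
```

A log built this way only shows that recorded entries were not edited afterwards; it does not prove the physical destruction took place, which is why the excerpt also mentions supervision and physical proof.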

Chain of custody refers to the documented and unbroken trail of accountability

that records the lifecycle of a sensitive asset; from creation and use to final

destruction. For data stored on physical media like hard disk drives (HDDs),

solid state drives (SSDs), or e-media, maintaining a secure and traceable chain

of custody is essential for demonstrating regulatory compliance and ensuring

operational integrity. ... With the right high-security equipment, such as

NSA-listed paper shredders, hard drive crushers and shredders, and

disintegrators, destruction can occur at the point of use – or at least within

the facility – under supervision and with real-time documentation. This

eliminates transport risks, reduces reliance on third parties, and keeps

sensitive data within your organization’s security perimeter. ... Compliance

auditors are increasingly looking beyond destruction certificates. They want

transparency. That means policies, procedures, logs, and physical proof. With an

in-house program, organizations can tailor destruction workflows to meet

specific regulatory frameworks, from NIST 800-88 guidelines to DoD or ISO

standards. ... High-security data destruction isn’t just about preventing

breaches. It’s about instilling confidence both internally with leadership and

stakeholders, and externally with regulators and clients. By keeping destruction

in-house, organizations send a clear message: data security is

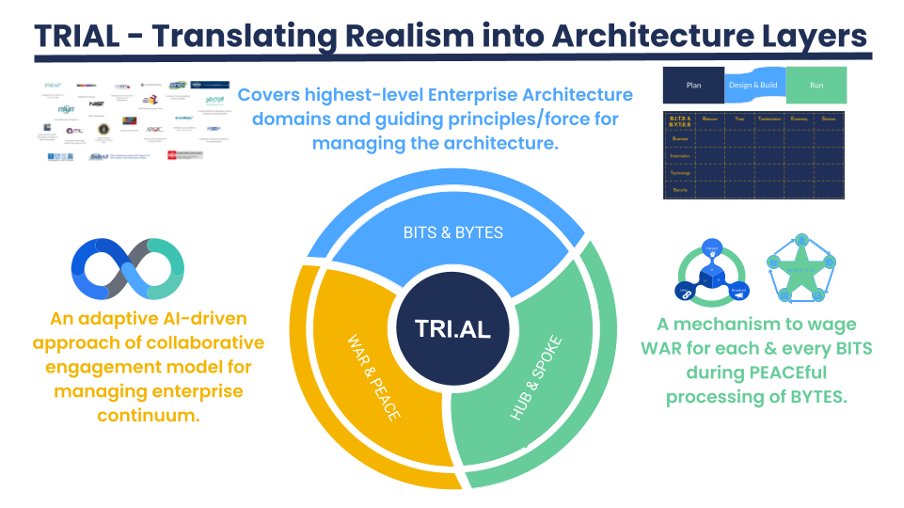

non-negotiable.If Architectures Could Talk, They’d Quote Your Boss

Architecture doesn’t fail in the codebase. It fails in the meeting rooms. In the handoffs. In the silences between teams who don’t talk - or worse, assume they understand each other. The real complexity lives between the lines - not of code, but of communication. And once we stop pretending otherwise, we begin to see that the technical is inseparable from the social. ... There’s a deep irony in the fact that many of us in software come from a binary world - one shaped by certainty, logic, and repeatability. We’re trained to seek out 1s and 0s, true or false, compile or fail. But architecture lives in the fog - in uncertainty, trade-offs, and risk. It’s not a world of 1s and 0s, but of shifting constraints and grey zones. Where engineers long for clarity, architecture demands comfort with ambiguity. Decisions rarely have a single correct answer - they have consequences, compromises, and contexts that evolve over time. It’s a game of incomplete information, where clarity emerges only through conversation, alignment, and compromise. This also explains why so many of our colleagues feel frustrated. Requirements change. Priorities shift. Stakeholders contradict each other. And it’s tempting to see all that as failure. But it’s not failure - it’s the environment. It’s how complex systems grow. Architecture isn’t about eliminating uncertainty. It’s about giving teams just enough structure to move within it with confidence.

CIOs’ AI confidence yet to match results

According to a new survey from AIOps observability provider Riverbed, 88% of technical specialists and business and IT leaders believe their organizations will make good on their AI expectations, despite only 12% currently having AI in enterprise-wide production. Moreover, just one in 10 AI projects have been fully deployed, respondents say, suggesting that enthusiasm is significantly outpacing the ability to deliver. ... One problem with IT leaders’ possible overconfidence about AI expectations is that most organizations have no concrete expectations to begin with, says Warren Wilbee, CTO of supply chain software provider ToolsGroup. “Are the expectations a 10% productivity gain, or a 2% drop in staffing?” he says. “The expectations are ill-defined.” Other AI experts see AI enthusiasm outpacing the difficulties of deploying the technology. In many cases, company leaders underestimate the technology requirements and the compliance and governance demands, says Patrizia Bertini, managing partner at UK IT regulatory advisory firm Aligned Consulting Group. ... Many organizations’ leaders don’t understand the full implications of rolling out and using AI, he says, with many not realizing the extent to which the technology will change the nature of work. Instead of executing tasks, many employees will manage agents that complete those tasks - a seismic shift. “Agentic AI holds enormous potential, but the path to full deployment will take time, requiring effort and investment,” he says.

What if your privacy tools could learn as they go?

The research explains that traditional local differential privacy methods tend to be conservative because they assume no knowledge about the data. This leads to adding more noise than needed, which harms data utility. The PML approach narrows that gap by making use of whatever knowledge can be safely derived from the data itself. This design shift resonates with challenges seen in industry. ... Beyond the case studies, the research provides a set of mathematical results that can be applied to other privacy settings. It shows how to compute optimal mechanisms under uncertainty, including closed-form solutions for simple binary data and a convex optimization program for more complex datasets. These results mean that privacy engineers could, in theory, design systems that automatically adjust to the available data. The framework explains how to choose privacy parameters to meet a desired balance between protection and accuracy, given a known probability of error. ... This research offers a way to improve one of the biggest tradeoffs in privacy engineering: the loss of utility caused by assuming no prior knowledge about the data-generating process. By allowing systems to safely incorporate limited, empirically derived information, it becomes possible to provide strong privacy guarantees while preserving more data usefulness. The findings also suggest that privacy guarantees do not have to come at such a steep cost to data utility.
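
For readers unfamiliar with the baseline being improved on, the sketch below shows classic randomized response, a standard local differential privacy mechanism for binary data that assumes nothing about the data distribution. It is not the PML mechanism from the research, whose closed-form solutions are not reproduced in this excerpt; the function names and the sample parameters are illustrative assumptions.

```python
import math
import random


def randomized_response(bit: int, epsilon: float, rng: random.Random) -> int:
    """Report the true bit with probability e^eps / (1 + e^eps), else flip it."""
    p_truth = math.exp(epsilon) / (1.0 + math.exp(epsilon))
    return bit if rng.random() < p_truth else 1 - bit


def estimate_mean(reports, epsilon: float) -> float:
    """Debias the observed fraction of 1s to estimate the true fraction.

    With truth probability p, E[observed] = mu * (2p - 1) + (1 - p),
    so mu = (observed - (1 - p)) / (2p - 1). Requires epsilon > 0.
    """
    p = math.exp(epsilon) / (1.0 + math.exp(epsilon))
    observed = sum(reports) / len(reports)
    return (observed - (1.0 - p)) / (2.0 * p - 1.0)


if __name__ == "__main__":
    rng = random.Random(0)
    true_bits = [1] * 300 + [0] * 700  # made-up population, 30% ones
    reports = [randomized_response(b, 1.0, rng) for b in true_bits]
    print(estimate_mean(reports, 1.0))
```

The estimate is noisier than the raw average would be, and that gap grows as epsilon shrinks. This is the utility cost the excerpt refers to when it says worst-case methods add more noise than needed.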

13 cybersecurity myths organizations need to stop believing

Big tech platforms have strong verification that prevents impersonation - Some of the largest tech platforms like to talk about their strong identity checks as a way to stop impersonation. But looking good on paper is one thing, and living up to the promise in the real world is another. “The truth is that even advanced verification processes can be easily bypassed,” says Ben Colman ... Buying more tools can bolster cybersecurity protection - One of the biggest traps businesses fall into is the assumption that they need more tools and platforms to protect themselves. And once they have those tools, they think they are safe. Organizations are lured into buying products “touted as the silver-bullet solution,” says Ian McShane. “This definitely isn’t the key to success.” Buying more tools doesn’t necessarily improve security because organizations often don’t have a tools problem but an operational one. ... Hiring more people will solve the cybersecurity problem - Professionals who are truly talented and dedicated to security are not that easy to find. So instead of searching for people to hire, businesses should prioritize retaining their cybersecurity professionals. They should invest in them and offer them the chance to gain new skills. “It is better to have a smaller group of highly trained IT professionals to keep an organization safe from cyber threats and attacks, rather than a disparate larger group that isn’t equipped with the right skills,” says McShane.
