Quote for the day:

"Life is what happens to you while you’re busy making other plans." -- John Lennon

Network digital twin technology faces headwinds

Just like Google Maps is able to overlay information, such as driving directions, traffic alerts or locations of gas stations or restaurants, digital twin technology enables network teams to overlay information, such as a software upgrade, a change to firewall rules, new versions of network operating systems, vendor or tool consolidation, or network changes triggered by mergers and acquisitions. Network teams can then run the model, evaluate different approaches, make adjustments, and conduct validation and assurance to make sure any rollout accomplishes its goals and doesn’t cause any problems, explains Maccioni ... “Configuration errors are a major cause of network incidents resulting in downtime,” says Zimmerman. “Enterprise networks, as part of a modern change management process, should use digital twin tools to model and test network functionality, business rules and policies. This approach will ensure that network capabilities won’t fall short in the age of vendor-driven agile development and updates to operating systems, firmware or functionality.” ... Another valuable use case is testing failover scenarios, says Wheeler. Network engineers can design a topology that has alternative traffic paths in case a network component fails, but there’s really no way to stress test the architecture under real-world conditions. He says that in one digital twin customer engagement “they found failure scenarios that they never knew existed.”
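To make the failover use case concrete, here is a minimal sketch of how a model of the network could be searched for failure scenarios that cut off a service. It is not any vendor's digital twin tooling: the topology, the node names, and the helper functions `reachable` and `breaking_failures` are all hypothetical, and a real twin would model routing policy and device state rather than plain link reachability.

```python
from collections import deque
from itertools import combinations

# Hypothetical topology (assumption): node -> set of directly linked nodes.
TOPOLOGY = {
    "core1": {"core2", "dist1", "dist2"},
    "core2": {"core1", "dist1", "dist2"},
    "dist1": {"core1", "core2", "access1"},
    "dist2": {"core1", "core2", "access1"},
    "access1": {"dist1", "dist2", "server"},
    "server": {"access1"},
}


def links(topology):
    """Return every undirected link as a frozenset of its two endpoints."""
    return {frozenset((a, b)) for a, nbrs in topology.items() for b in nbrs}


def reachable(topology, src, dst, failed_links=frozenset()):
    """Breadth-first search from src to dst, skipping failed links."""
    seen = {src}
    queue = deque([src])
    while queue:
        node = queue.popleft()
        if node == dst:
            return True
        for nbr in topology[node]:
            if nbr in seen or frozenset((node, nbr)) in failed_links:
                continue
            seen.add(nbr)
            queue.append(nbr)
    return False


def breaking_failures(topology, src, dst, max_failures=2):
    """List every combination of up to max_failures failed links
    that leaves dst unreachable from src."""
    all_links = sorted(links(topology), key=sorted)
    found = []
    for k in range(1, max_failures + 1):
        for combo in combinations(all_links, k):
            if not reachable(topology, src, dst, frozenset(combo)):
                found.append(tuple(tuple(sorted(link)) for link in combo))
    return found


if __name__ == "__main__":
    for scenario in breaking_failures(TOPOLOGY, "core1", "server"):
        print(scenario)
```

In this toy topology the single access-to-server link is an obvious single point of failure, but the search also surfaces the less obvious double failure of both uplinks from `access1`, which is the kind of scenario an exhaustive model run can reveal.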

Autonomous AI hacking and the future of cybersecurity

The cyberattack/cyberdefense balance has long skewed towards the attackers; these developments threaten to tip the scales completely. We’re potentially looking at a singularity event for cyber attackers. Key parts of the attack chain are becoming automated and integrated: persistence, obfuscation, command-and-control, and endpoint evasion. Vulnerability research could potentially be carried out during operations instead of months in advance. The most skilled will likely retain an edge for now. But AI agents don’t have to be better at a human task in order to be useful. They just have to excel in one of four dimensions: speed, scale, scope, or sophistication. But there is every indication that they will eventually excel at all four. By reducing the skill, cost, and time required to find and exploit flaws, AI can turn rare expertise into commodity capabilities and give average criminals an outsized advantage. ... If enterprises adopt AI-powered security the way they adopted continuous integration/continuous delivery (CI/CD), several paths open up. AI vulnerability discovery could become a built-in stage in delivery pipelines. We can envision a world where AI vulnerability discovery becomes an integral part of the software development process, where vulnerabilities are automatically patched even before reaching production, a shift we might call continuous discovery/continuous repair (CD/CR).

AI inference: reshaping the enterprise IT landscape across industries

AI inference is a complex operation that transforms intricate models into actionable agents. This process is essential for making real-time decisions, which can significantly improve user experiences. ... As AI systems handle more sensitive information, data security and private AI become a key part of effective inference processes. In cloud and Edge computing environments, where data often moves between multiple networks and devices, ensuring the confidentiality of user information is paramount. Private AI limits queries and requests to a company's internal database, SharePoint, API, or other private sources. It prevents unauthorized access and ensures that sensitive information remains confidential even when processed in the cloud or at the Edge. ... For AI to be truly transformative, low latency is a necessity, ensuring that real-time responses are both swift and seamless. In the realm of AI chatbots, for instance, the difference between a seamless conversation and a frustrating user experience often comes down to the speed of the AI’s response. Users expect immediate and accurate replies, and any delay can lead to a loss of engagement and trust. By minimising latency, AI chatbots can provide a more natural and fluid interaction, enhancing user satisfaction, and driving better outcomes. ... By reducing the distance data must travel, Edge computing significantly reduces latency, enabling faster and more reliable AI inference.

Smarter Systems, Safer Data: How to Outsmart Threat Actors

One of the clearest signs that a cybersecurity strategy is outdated is a lack of control and visibility over who can access what data, and on which systems. Many organizations still rely on fragmented identity management systems or grant broad access to database administrators. Others have yet to implement basic protections such as multi-factor authentication. ... Security concerns are commonly cited as a top barrier to innovation. This is why many organizations struggle to adopt artificial intelligence, migrate to the cloud, or share data externally or even internally. The only way to break this impasse is to start treating security as an enabler. Think about it this way: when done right, security is the key element that allows data to be moved, analyzed and shared. For example, if data is de-identified through encryption or tokenization to maintain privacy, it will remain useless to attackers in the event of a breach. ... What’s been key for the organizations that succeed in managing data risk while simultaneously unlocking value is a mindset shift. They stop seeing security as a roadblock and start seeing it as a foundation for growth. As an example, a large financial institution client has built an AI-powered solution for anti-money laundering. By protecting incoming data before it enters their system, they ensure that no sensitive data is fed to their algorithms, and thus the risk of a privacy breach, even incidental, is essentially null.

AI could prove CIOs’ worst tech debt yet

AI tools can be used to clean up old code and trim down bloated software, thus reducing one major form of tech debt. In September, for example, Microsoft announced a new suite of autonomous AI agents designed to automatically modernize legacy Java and .NET applications. At the same time, IT leaders see the potential for AI to add to their tech debt, with too many AI projects relying on models or agents that can be expensive to deploy and maintain and AI coding assistants generating more lines of software than may be necessary. ... Endless AI pilot projects create their own form of tech debt as well, says Ryan Achterberg, CTO at IT consulting firm Resultant. This “pilot paralysis,” in which organizations launch dozens of proofs of concept that never scale, can drain IT resources, he says. “Every experiment carries an ongoing cost,” Achterberg says. “Even if a model is never scaled, it leaves behind artifacts that require upkeep and security oversight.” Part of the problem is that AI data foundations are still shaky, even as AI ambition remains high, he adds. ... In addition to tech debt from too many AI pilot projects, coding assistants can create their own problems without proper oversight, adds Jaideep Vijay Dhok, COO for technology at digital engineering provider Persistent Systems. In some cases, AI coding assistants will generate more lines of software than a developer asked for, he says.

Hackers Exploit RMM Tools to Deploy Malware

Remote monitoring and management (RMM) platforms typically operate with elevated permissions across endpoints. Once compromised, they offer adversaries a ready-made channel for privilege escalation, lateral movement and payload delivery, including ransomware. ... Threat actors frequently repurpose legitimate RMM tools or hijack valid credentials, allowing malicious activity to blend seamlessly with routine administrative tasks. This tactic complicates detection and response, especially in environments lacking behavioral baselining. ... "This is a typical living-off-the-land attack used by many adversaries considering the success and ease of execution. Typically, such software are whitelisted in most of the controls to avoid blocking and noise, due to which its activities are not monitored much," Varkey said. "Like in most adversarial acts, getting access to the software is their initial step, so if access is limited to specific people with multifactor authorization and audited periodically, unauthorized access can be limited. ..." ... "Treat RMM seriously. Assume compromise is possible and build defenses around prevention, detection and rapid response. Start with a full audit of your RMM deployment - map every agent, session and integration to identify shadow access points: asset management is key and a good RMM solution should be able to assist here. Layered controls are key - think defense-in-depth tailored to RMM's remote nature," Beuchelt said.
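As a rough illustration of the behavioral baselining mentioned above, the sketch below builds a per-operator profile from past RMM sessions and flags new sessions that fall outside it. Everything here is an assumption made for illustration: the `Session` record, its fields, and the two checks (previously unseen endpoint, previously unseen hour) are hypothetical and do not reflect the log format or detection logic of any particular RMM product.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Session:
    """Hypothetical RMM session record (assumed fields)."""
    operator: str
    endpoint: str
    hour: int  # hour of day, 0-23


def build_baseline(history):
    """Record which endpoints and hours each operator has used before."""
    baseline = defaultdict(lambda: {"endpoints": set(), "hours": set()})
    for s in history:
        baseline[s.operator]["endpoints"].add(s.endpoint)
        baseline[s.operator]["hours"].add(s.hour)
    return baseline


def anomalies(baseline, session):
    """Return the reasons a session deviates from the operator's baseline."""
    profile = baseline.get(session.operator)
    if profile is None:
        return ["unknown operator"]
    reasons = []
    if session.endpoint not in profile["endpoints"]:
        reasons.append("new endpoint")
    if session.hour not in profile["hours"]:
        reasons.append("unusual hour")
    return reasons


if __name__ == "__main__":
    history = [Session("alice", "web01", 9), Session("alice", "web02", 10)]
    baseline = build_baseline(history)
    print(anomalies(baseline, Session("alice", "db01", 3)))
```

A set-membership baseline like this is deliberately crude; the point is only that activity through a trusted tool still needs something to be compared against, otherwise it blends into routine administration.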

From Data to Doing: Agentic AI Will Revolutionize the Enterprise

Where do organizations see the greatest opportunities for agentic AI? The answer is: everywhere. Survey results show that business leaders view agentic AI as equally relevant to productivity gains, better decision-making, and enhanced customer experiences. When asked to rank potential benefits, improving customer experience and personalization emerge as the top priority, followed closely by sharper decision-making and increased efficiency. What's telling is what landed at the bottom of the list. Few organizations currently view market and business expansion as critical. This suggests that, at least in the near term, agentic AI will be applied less as a driver of bold new growth and more as a catalyst for improving and extending existing operations. ... Agentic AI is not simply the next technology wave -- it is the next great inflection point for enterprise software. Just as client–server, the Internet, and the cloud radically redefined industry leaders, agentic AI will determine which vendors and enterprises can adapt quickly enough to thrive. The lesson is clear: organizations that treat data as a strategic asset, modernize their platforms, and embed intelligence into their workflows will not only move faster but also serve customers better. The rest risk being left behind -- just as the mainframe giants once were.

Is That Your Boss or a Deepfake on the Other Side of That Video Call?

Sophisticated deepfake technology had perfectly replicated not just the appearance but the mannerisms and decision-making patterns of the company’s executives. The real managers were elsewhere, unaware their digital twins were orchestrating one of the largest deepfake heists in corporate history. This reflects a terrifying trend of AI fraud that is shaking the financial services industry. Deepfake-enabled attacks have grown by an alarming 1,740% in just one year, representing one of the fastest-growing AI-powered threats. More than half of businesses in the U.S. and U.K. have been targeted by deepfake-powered financial scams, with 43% falling victim. ... The deepfake threat extends far beyond immediate financial losses. Each successful attack erodes the foundation of digital communication itself. When employees can no longer trust that their CEO is real during a video call, the entire remote work infrastructure becomes suspect, in particular for financial institutions, which deal in the currency of trust. ... Financial services companies must implement comprehensive AI governance frameworks, continuous monitoring systems, and robust incident response plans to address these evolving threats while maintaining operational efficiency and customer trust. These systems and protocols must extend not only within their front office but to their back office, including vendor management and third-party suppliers who manage their data.

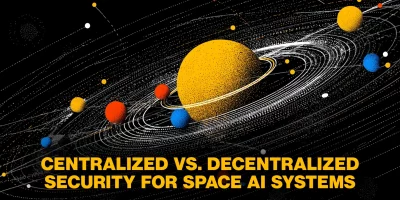

Rethinking AI security architectures beyond Earth

The researchers outline three architectures: centralized, distributed, and federated. In a centralized model, the heavy lifting happens on Earth. Satellites send telemetry data to a large AI system, which analyzes it and sends back security updates. Training is fast because powerful ground-based resources are available, but the response to threats is slower due to long transmission times. In a distributed model, satellites still rely on the ground for training but perform inference locally. This setup reduces delay when responding to a threat, though smaller onboard systems can limit model accuracy. Federated learning goes a step further. Satellites train and infer on their own data without sending it to Earth. They share only model updates with other satellites and ground stations. This keeps latency low and improves privacy, but synchronizing models across a large constellation can be difficult. ... Byrne pointed out that while space-based architectures vary in resilience, recovery often depends on shared fundamentals. “Most systems across all segments will need to be restored from secure backups,” he said. “One architectural enhancement to help reduce recovery time is the implementation of distributed Inter-Satellite Links. These links enable faster propagation of recovery updates between satellites, minimizing latency and accelerating system-wide restoration.”
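The federated option, in which satellites share only model updates, can be sketched with a simple weighted average of locally trained parameters. This is a generic federated-averaging illustration, not the researchers' method: the function name `federated_average`, the flat list-of-floats model representation, and the choice to weight each satellite by its number of local samples are all assumptions.

```python
def federated_average(updates):
    """Combine locally trained weights into one shared model.

    updates: list of (weights, num_samples) pairs, one per satellite,
    where weights is a list of floats of equal length. Raw telemetry
    never leaves the satellite; only these weights are exchanged.
    """
    total = sum(n for _, n in updates)
    if total <= 0:
        raise ValueError("at least one satellite must report samples")
    dim = len(updates[0][0])
    averaged = [0.0] * dim
    for weights, n in updates:
        if len(weights) != dim:
            raise ValueError("all weight vectors must have the same length")
        for i, w in enumerate(weights):
            # Weight each satellite by its share of the total samples.
            averaged[i] += w * n / total
    return averaged


if __name__ == "__main__":
    # Two hypothetical satellites with different amounts of local data.
    sat_a = ([1.0, 2.0], 1)
    sat_b = ([3.0, 4.0], 3)
    print(federated_average([sat_a, sat_b]))
```

The synchronization difficulty noted above shows up here as the question of when every satellite's update is available: an averaging round like this needs updates from the same model version, which is harder to guarantee across a large constellation with intermittent links.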
