Experts: 'Quiet cutting' employees makes no sense, and it's costly

The practice involves reassigning workers to roles that don’t align with their career goals to achieve workforce reduction through voluntary attrition, allowing companies to avoid paying costly severance packages or unemployment benefits.

“Companies are increasingly using role reassignments as a strategy to sidestep expensive layoffs,” said Annie Rosencrans, people and culture director at HiBob, a human resource platform provider. “By redistributing roles within the workforce, organizations can manage costs while retaining valuable talent, aligning with the current trend of seeking alternatives to traditional layoffs.”

... The optics around quiet cutting and its effects on employee morale are a big problem, however, and experts argue the practice isn’t worth the perceived cost savings. Reassigning workers to jobs that may not fit their hopes for a career path or align with their skills can demoralize the remaining workers and lead to “disengagement,” according to Chertok. He argued that the quiet cutting trend isn’t necessarily intentional; rather, it’s indicative of corporate America’s need to reprioritize how talent is moved around within an organization.

Why We Need Regulated DeFi

One of DeFi’s greatest challenges is liquidity. In a decentralized exchange, liquidity is supplied and owned by users, who often abandon one protocol for another that offers better rewards, leaving DeFi protocols with unstable liquidity. A liquidity pool is a collection of digital assets gathered to facilitate automated, permissionless trading on a decentralized exchange platform. Users of such platforms don’t rely on a third party to hold funds; they transact with each other directly. ... There are many systemic risks currently present in DeFi. For example, vulnerabilities in smart contracts can expose users to security breaches. DeFi platforms are often interconnected, so a problem on one platform can quickly spread to others, potentially causing systemic failures. Another potential systemic risk is the manipulation or failure of oracles, which bring real-world data onto the blockchain; bad oracle data can lead protocols to make bad decisions and cause losses. Ultimately, regulated DeFi can help enforce security standards, fostering trust among users.
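The excerpt doesn’t say how a pool prices trades. As a minimal sketch, assume the constant-product rule (x · y = k) popularized by Uniswap-style exchanges, with fees ignored and hypothetical pool sizes. It illustrates why liquidity flight matters: the same trade moves the price far more in a shallow pool than in a deep one.

```python
from dataclasses import dataclass


@dataclass
class ConstantProductPool:
    """Toy two-asset pool priced by x * y = k (fees ignored, sizes hypothetical)."""
    reserve_x: float
    reserve_y: float

    def spot_price(self) -> float:
        # Units of Y per unit of X implied by the current reserves.
        return self.reserve_y / self.reserve_x

    def quote_x_for_y(self, amount_x: float) -> float:
        # Y paid out for amount_x of X, keeping reserve_x * reserve_y constant.
        k = self.reserve_x * self.reserve_y
        new_reserve_y = k / (self.reserve_x + amount_x)
        return self.reserve_y - new_reserve_y


def slippage(pool: ConstantProductPool, amount_x: float) -> float:
    # Fraction of value lost versus trading the whole amount at the spot price.
    ideal = amount_x * pool.spot_price()
    return 1 - pool.quote_x_for_y(amount_x) / ideal


deep = ConstantProductPool(reserve_x=1_000_000, reserve_y=1_000_000)
shallow = ConstantProductPool(reserve_x=10_000, reserve_y=10_000)  # after LPs leave

for name, pool in (("deep", deep), ("shallow", shallow)):
    print(f"{name}: {slippage(pool, 1_000):.2%} slippage on a 1,000-unit trade")
```

In this model slippage works out to amount / (reserve + amount), so the 1,000-unit trade costs about 0.1% in the deep pool and about 9% in the shallow one.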

Microsoft Azure Data Leak Exposes Dangers of File-Sharing Links

There are so many pitfalls in setting up SAS (shared access signature) tokens that Wiz’s Luttwak recommends against ever using the mechanism to share files from a private cloud storage account. Instead, companies should have a dedicated public account from which resources are shared, he says. “This mechanism is so risky that our recommendation is, first of all, never to share public data within your storage account. Create a completely separate storage account only for public sharing,” Luttwak says. “That will greatly reduce the risk of misconfiguration. If you want to share public data externally, create a public storage account and use only that.” For companies that still want to share specific files from private storage using SAS URLs, Microsoft has extended GitHub’s secret scanning, which monitors for exposed credentials and secrets, to detect SAS tokens with overly permissive expirations or privileges. The company has also rescanned all repositories, it stated in its advisory. Microsoft recommends that Azure users limit themselves to short-lived SAS tokens, apply the principle of least privilege, and have a revocation plan.
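Microsoft’s three recommendations (short lifetimes, least privilege, a revocation plan) can be illustrated with a generic signed-URL token. This is a hypothetical sketch built on Python’s standard `hmac` module; it is not the Azure SAS format or SDK, and `SIGNING_KEYS`, `issue_token`, and `check_token` are illustrative names. Revocation here works by deleting the signing key, which invalidates every token it signed.

```python
import hashlib
import hmac
import time

# Hypothetical signing keys by key id. Deleting or rotating a key revokes
# every token it signed; this is the "revocation plan" in miniature.
SIGNING_KEYS = {"k1": b"demo-only-secret"}


def _sign(key_id: str, path: str, perms: str, expires_at: int) -> str:
    # The signature covers resource, permissions, and expiry, so none can be altered.
    message = f"{key_id}\n{path}\n{perms}\n{expires_at}".encode()
    return hmac.new(SIGNING_KEYS[key_id], message, hashlib.sha256).hexdigest()


def issue_token(path, perms="r", ttl_seconds=900, key_id="k1", now=None) -> dict:
    # Least privilege: read-only by default. Short-lived: 15 minutes by default.
    now = int(time.time()) if now is None else int(now)
    expires_at = now + ttl_seconds
    return {"key_id": key_id, "path": path, "perms": perms,
            "expires_at": expires_at, "sig": _sign(key_id, path, perms, expires_at)}


def check_token(token: dict, path: str, perm: str, now=None) -> bool:
    now = int(time.time()) if now is None else int(now)
    if token["key_id"] not in SIGNING_KEYS:
        return False  # signing key revoked
    expected = _sign(token["key_id"], token["path"], token["perms"], token["expires_at"])
    if not hmac.compare_digest(expected, token["sig"]):
        return False  # token was tampered with
    if now >= token["expires_at"]:
        return False  # token expired
    return token["path"] == path and perm in token["perms"]


token = issue_token("/reports/q3.csv", perms="r", ttl_seconds=900, now=1_000)
print(check_token(token, "/reports/q3.csv", "r", now=1_500))  # read within lifetime
print(check_token(token, "/reports/q3.csv", "w", now=1_500))  # write was never granted
print(check_token(token, "/reports/q3.csv", "r", now=2_000))  # past expiry
```

Scoping each token to one path and one permission keeps the blast radius small if it leaks, and the short TTL limits how long a leaked token stays useful.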

Chaos Engineering: Path To Build Resilient and Fault-Tolerant Software Applications

The objective of chaos engineering is to uncover system constraints, vulnerabilities, and possible failures in a controlled, planned manner before they turn into serious problems with severe impact on the organization. Some of the most innovative organizations, having learned from past failures, recognize chaos engineering as a key strategy for uncovering deeply hidden issues and preventing future failures and business impact. Chaos engineering lets developers anticipate and detect likely failures by deliberately disrupting the system. Disruption points are chosen and adjusted based on potential system vulnerabilities and weak points, so deficiencies are identified and fixed before they escalate into an outage. Chaos engineering is a growing trend among DevOps and IT teams, and some of the world’s most technologically innovative organizations, such as Netflix and Amazon, are pioneers in adopting chaos testing and engineering.
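A chaos experiment can be sketched in a few lines: inject faults into a dependency on purpose, then check whether the system’s resilience mechanism holds. This minimal sketch assumes a hypothetical dependency (`fetch_price`), a 20% injected failure rate, and simple retries as the mechanism under test. Real chaos tooling usually injects faults at the infrastructure level (terminating instances, adding latency) rather than in-process.

```python
import random


class FaultInjector:
    """Wraps a callable and raises an injected ConnectionError with probability failure_rate."""

    def __init__(self, func, failure_rate: float, seed: int = 0):
        self.func = func
        self.failure_rate = failure_rate
        self.rng = random.Random(seed)  # seeded so experiments are reproducible
        self.injected = 0

    def __call__(self, *args, **kwargs):
        if self.rng.random() < self.failure_rate:
            self.injected += 1
            raise ConnectionError("injected fault")
        return self.func(*args, **kwargs)


def with_retries(func, attempts: int = 3):
    """The resilience mechanism under test: retry on ConnectionError."""
    def wrapper(*args, **kwargs):
        for attempt in range(attempts):
            try:
                return func(*args, **kwargs)
            except ConnectionError:
                if attempt == attempts - 1:
                    raise  # out of retries: surface the failure
    return wrapper


def success_rate(call, requests: int = 1_000) -> float:
    ok = 0
    for request_id in range(requests):
        try:
            call(request_id)
            ok += 1
        except ConnectionError:
            pass
    return ok / requests


def fetch_price(item_id: int) -> int:
    # Hypothetical downstream dependency; always succeeds on its own.
    return item_id * 2


baseline = success_rate(FaultInjector(fetch_price, failure_rate=0.2, seed=42))
resilient = success_rate(with_retries(FaultInjector(fetch_price, failure_rate=0.2, seed=42)))
print(f"without retries: {baseline:.1%}, with retries: {resilient:.1%}")
```

With a 20% failure rate and three attempts, a request should fail only about 0.8% of the time (0.2³), so the experiment’s hypothesis can be stated as a success-rate threshold; if it isn’t met, a weakness has been found before a real outage exposed it.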

Unregulated DeFi services abused in latest pig butchering twist

At first glance, the pig butchering ring tracked by Sophos operates in much the same way as a legitimate DeFi operation, establishing pools of cryptocurrency assets and adding new traders – or, in this case, victims – until the cybercriminals drain the entire pool for themselves. This is what is known as a rug-pull. ... “When we first discovered these fake liquidity pools, they were rather primitive and still developing. Now, we’re seeing shā zhū pán scammers taking this particular brand of cryptocurrency fraud and seamlessly integrating it into their existing set of tactics, such as luring targets over dating apps,” explained Gallagher. “Very few understand how legitimate cryptocurrency trading works, so it’s easy for these scammers to con their targets. There are even toolkits now for this sort of scam, making it simple for different pig butchering operations to add this type of crypto fraud to their arsenal. While last year Sophos tracked dozens of these fraudulent ‘liquidity pool’ sites, now we’re seeing more than 500.”
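To make the rug-pull mechanics concrete, here is a toy in-memory model; it is not a real smart contract and not a description of the code Sophos found. It assumes the operator keeps a privileged withdrawal function, and the class and method names are hypothetical. Depositors can only withdraw their own balance, while the owner-only `admin_drain` sweeps everything at once.

```python
class ToyLiquidityPool:
    """In-memory model of a pool; hypothetical, not a real smart contract."""

    def __init__(self, owner: str):
        self.owner = owner
        self.balances: dict[str, int] = {}

    def total(self) -> int:
        return sum(self.balances.values())

    def deposit(self, user: str, amount: int) -> None:
        self.balances[user] = self.balances.get(user, 0) + amount

    def withdraw(self, user: str, amount: int) -> int:
        # Legitimate path: a depositor can only take out their own balance.
        if amount > self.balances.get(user, 0):
            raise ValueError("insufficient balance")
        self.balances[user] -= amount
        return amount

    def admin_drain(self, caller: str) -> int:
        # Rug-pull path: an owner-only function that sweeps every deposit.
        if caller != self.owner:
            raise PermissionError("owner only")
        taken = self.total()
        self.balances = {user: 0 for user in self.balances}
        return taken


pool = ToyLiquidityPool(owner="operator")
pool.deposit("victim_a", 5_000)
pool.deposit("victim_b", 12_000)
stolen = pool.admin_drain("operator")
print(stolen, pool.total())
```

From a victim’s side, the deposit flow looks identical either way; the difference lies entirely in who can call the withdrawal logic.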

Time to Demand IT Security by Design and Default

Organizations can send a strong message to IT suppliers by re-engineering procurement processes and legal contracts to align with secure-by-design and secure-by-default approaches. Updated procurement policies and processes can set explicit expectations and requirements for suppliers and flag any lapses. This isn’t about catching vendors out – many will benefit from the nudge. Changes in procurement assessment criteria can be flagged to IT suppliers in advance to give them a chance to course-correct. Suppliers can then be assessed against these yardsticks, and if they fail to measure up, organizations have a clear justification to stop doing business with them. The next step is to add liability or penalty clauses to contracts that force IT vendors to share the cost of security fixes or bolt-ons. This will drive vendors to devote more resources to security and to prevent security risks rather than scramble to fix them. Governments can support this by introducing laws that make it easier to make claims under contracts for poor security.

DeFi as a solution in times of crisis

The collapse of Silicon Valley Bank in March 2023 shows that even large banks are still vulnerable to failure. But rather than having to trust that their money is still there, Web3 users can verify their holdings directly on chain. Blockchain technology also allows for a more efficient and decentralized financial landscape. The peer-to-peer network pioneered by Bitcoin means that investors can hold their own assets and transact directly, without middlemen and with significantly lower fees. And unlike with traditional banks, the rise of DeFi sectors such as DEXs, lending, and liquid staking means individuals can now have full control over exactly how their deposited assets are used.

Inflation is yet another ongoing problem that crypto and DeFi help solve. Unlike fiat currencies, cryptocurrencies like bitcoin have a fixed total supply, so your BTC holdings cannot be easily diluted the way holdings in a currency such as USD can. While a return to the gold standard of years past is sometimes proposed as a potential solution to inflation, adopting crypto as legal tender would have a similar effect while also delivering other benefits, such as greater efficiency.
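The fixed supply follows from an issuance schedule the excerpt doesn’t spell out: the block reward started at 50 BTC and halves every 210,000 blocks, rounded down to whole satoshis (1 BTC = 100,000,000 satoshis). Summing that series shows where the roughly 21 million cap comes from. This is a back-of-the-envelope sketch of the issuance rule, not consensus code.

```python
SATOSHIS_PER_BTC = 100_000_000
BLOCKS_PER_HALVING = 210_000


def max_supply_satoshis() -> int:
    subsidy = 50 * SATOSHIS_PER_BTC  # initial block reward, in satoshis
    total = 0
    while subsidy > 0:
        total += BLOCKS_PER_HALVING * subsidy
        subsidy >>= 1  # each halving rounds down to a whole satoshi
    return total


cap = max_supply_satoshis()
print(f"{cap:,} satoshis = {cap / SATOSHIS_PER_BTC:,.8f} BTC")
```

The total lands just under 21 million BTC because of the rounding at each halving; once the subsidy reaches zero, no new coins are issued.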

Cyber resilience through consolidation part 1: The easiest computer to hack

Most cyberattacks succeed because of simple user mistakes or because users fail to follow established best practices. For example, having weak passwords or reusing the same password across multiple accounts is critically dangerous, but unfortunately common. When a company suffers a data breach, account details and credentials can be sold on the dark web, and attackers then try the same username-password combinations on other sites. This is why password managers, both third-party and browser-native, are increasingly being adopted. Two-factor authentication (2FA) is also becoming more common. This security method requires users to provide another form of identification besides a password, usually a verification code sent to a different device, phone number, or email address. Zero trust access methods are the next step: additional data about the user and their request is analyzed before access is granted.
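The excerpt describes codes sent to a second device; a closely related and widely used variant is the time-based one-time password (TOTP, RFC 6238) generated by authenticator apps, where the server and the device derive the same short-lived code from a shared secret. A minimal standard-library sketch follows, assuming SHA-1, 30-second steps, and 6 digits (the common defaults); the secret shown is the RFC’s test value, not something to deploy.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    # RFC 4226: HMAC-SHA1 over the 8-byte big-endian counter, then dynamic truncation.
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret: bytes, at: Optional[float] = None, step: int = 30, digits: int = 6) -> str:
    # RFC 6238: the counter is the number of step-second intervals since the Unix epoch.
    at = time.time() if at is None else at
    return hotp(secret, int(at // step), digits)


def verify(secret: bytes, submitted: str, at: Optional[float] = None,
           step: int = 30, window: int = 1) -> bool:
    # Accept codes from adjacent time steps to tolerate small clock drift.
    at = time.time() if at is None else at
    return any(
        hmac.compare_digest(totp(secret, at + drift * step, step, len(submitted)), submitted)
        for drift in range(-window, window + 1)
    )


secret = b"12345678901234567890"  # RFC 6238 test secret; real secrets are random per user
print(totp(secret))
```

Checking against the RFC test vectors (for example, an 8-digit code of 94287082 at Unix time 59) is a quick way to confirm an implementation like this.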

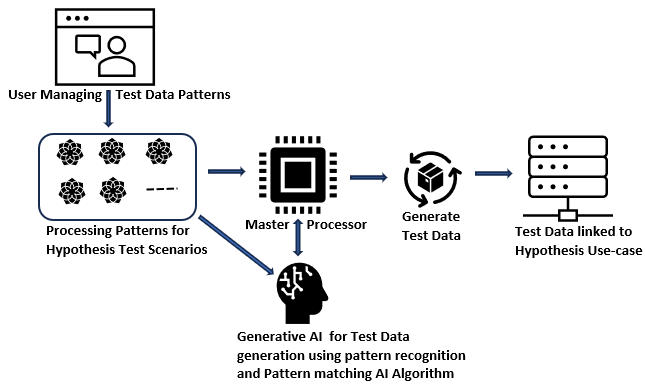

AI for Developers: How Can Programmers Use Artificial Intelligence?

Writing code purely by hand is prone to errors. So is auditing existing code by hand. Many things that happen during software development are error-prone when done manually. No, AI for developers isn’t completely bulletproof. However, a trustworthy AI tool can help you avoid faulty code and errors, ultimately helping you improve code quality. ... AI is not 100% bulletproof, and you’ve probably already seen the headlines: “People Are Creating Records of Fake Historical Events Using AI”; “Lawyer Used ChatGPT In Court — And Cited Fake Cases. A Judge Is Considering Sanctions”; “AI facial recognition led to 8-month pregnant woman’s wrongful carjacking arrest in front of kids: lawsuit.” This is what happens when people take artificial intelligence too far and don’t use any guardrails. Your own coding abilities and skill set as a developer are still absolutely vital to this entire process. As much as software developers might love to lean completely on an AI code assistant for the journey, the technology just isn’t there yet.

The DX roadmap: David Rogers on driving digital transformation success

Companies mistakenly think that the best way to achieve success is to commit a lot of resources and focus on implementing their planned solution at all costs. Many organizations get burned by this approach because they don’t realize that markets are shifting fast, new technologies are arriving rapidly, and competitive dynamics are changing swiftly in the digital era. For example, CNN decided to launch a subscription news service after studying many benchmarks and reading several reports, betting that subscribers would pay monthly for a standalone news app. It was a disaster, and CNN shut down the initiative within a month. To overcome this challenge, companies must first unlearn the habit of assuming they know things they don’t, and of trying to manage through planning. They should instead manage through experimentation. CIOs can help their enterprises here: they can bring what they have learned over years of evolving toward agile software development and help apply its principles of small teams, customer centricity, and continuous delivery to every part of the business.

Quote for the day:

"Strategy is not really a solo sport – even if you’re the CEO." -- Max McKeown
