Borderless Data vs. Data Sovereignty: Can They Co-Exist?

Businesses have long understood that data sharing has limits (or borders).
Legal separations keep data from various subsidiaries distinct or limit
sharing between partners to specific data types. Multi-tenant software
applications often require logical partitions to keep customer data private.
What is changing rapidly is the arrival of new data sovereignty laws, often
cloaked as "data privacy" regulations, that enforce geographic boundaries on
where data is processed and stored. Businesses must comply with the laws of
each country where they operate, and data sovereignty presents a clear
compliance challenge as companies hurry to rethink how and where they safely
acquire personal data to share and protect. Countries enacting regulations
that keep personal data inside their borders may deem their citizens' data of
strategic national importance. More commonly, these regulations are an
enforcement mechanism that acknowledges personal data as an asset owned by
individuals that businesses must use and share according to that country's
laws. Recent data sovereignty requirements cannot be easily bypassed or pushed
to the consumer's consent.

All change: The new era of perpetual organizational upheaval

With upsets coming from all directions, whether they be supply chain
disruptions, surging inflation, or spikes in interest rates and energy
prices, companies need to focus on being prepared and ready to act at all
times. The key is not just to bounce out of crises, but to bounce forward,
landing on their feet relatively unscathed and racing ahead with new
energy. ... But it’s raising huge questions: How can companies provide
structure and support to all employees regardless of where they are? How do
they address the potential risks to company culture and the sense of
belonging, as well as to collaboration and innovation? The pandemic
exacerbated other trends, including the continuing skills mismatch in the
labor market, which the onward march of technology is intensifying. It threw a
harsh light on the challenge of workplace motivation, sometimes referred to as
the “great attrition,” with workers leaving their jobs, or quiet quitting,
essentially downscaling their efforts on the job.

A guide to becoming a Chief Information Security Officer: Steps and strategies

The technical skills are a must-have. Know all about network security, cloud
security, identity and access management, adopting and adapting
infrastructure, along with tools and technologies that allow for the
preservation of organizational data privacy, integrity and computing
availability. Security engineers who are interested in becoming CISOs often
focus on problem hunting. CISOs need to not only be able to find problems, but
also to identify problems and vulnerabilities that aren’t apparent to those
around them. Learning to ask the right kinds of questions and thinking about
issues in unconventional ways take time and practice. CISOs need to
continuously update their mental models when it comes to thinking about cyber
security. The mental model required for on-premises cyber security
implementation is different from that required for the cloud. As an increasing
number of automation and AI-based tools emerge, mental models will again need
to be retrofitted. Many aspiring CISOs sell their technical credentials to
prospective employers. This is important.

TinyML computer vision is turning into reality with microNPUs (µNPUs)

Digital image processing, as it used to be called, is used for applications
ranging from semiconductor manufacturing and inspection to advanced driver
assistance systems (ADAS) features such as lane-departure warning and
blind-spot detection, to image beautification and manipulation on mobile
devices. And looking ahead, CV technology at the edge is enabling the next
level of human-machine interfaces (HMIs). HMIs have evolved significantly in
the last decade. On top of traditional interfaces like the keyboard and mouse,
we now have touch displays, fingerprint readers, facial recognition systems,
and voice command capabilities. While clearly improving the user experience,
these methods have one other attribute in common: they all react to user
actions. The next level of HMI will be devices that understand users and their
environment via contextual awareness. Context-aware devices sense not only
their users, but also the environment in which they are operating, all in
order to make better decisions toward more useful automated interactions.

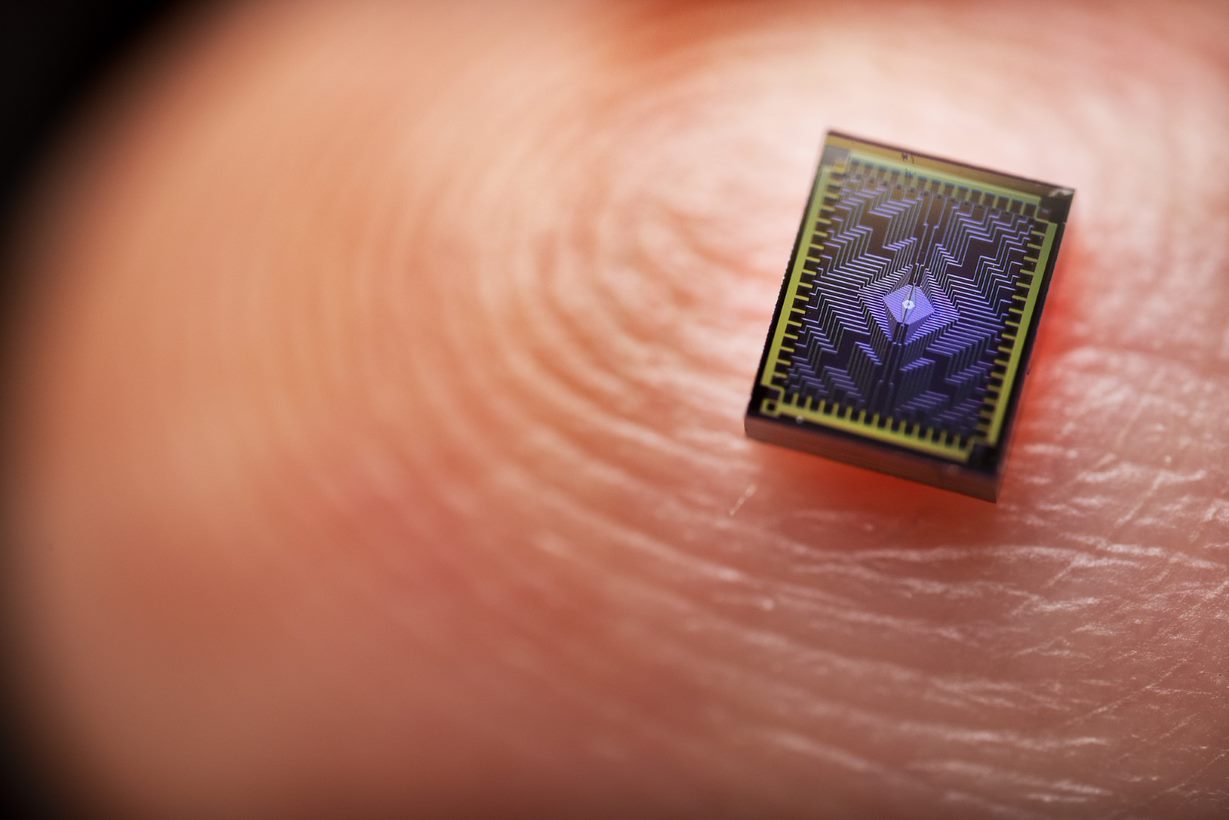

Intel Announces Release of ‘Tunnel Falls,’ 12-Qubit Silicon Chip

“Tunnel Falls is Intel’s most advanced silicon spin qubit chip to date and
draws upon the company’s decades of transistor design and manufacturing
expertise. The release of the new chip is the next step in Intel’s long-term
strategy to build a full-stack commercial quantum computing system. While
there are still fundamental questions and challenges that must be solved along
the path to a fault-tolerant quantum computer, the academic community can now
explore this technology and accelerate research development.” -- Jim Clarke,
director of Quantum Hardware, Intel

Why It Matters: Currently, academic institutions don’t have high-volume
manufacturing fabrication equipment like Intel. With Tunnel Falls, researchers
can immediately begin working on experiments and research instead of trying to
fabricate their own devices. As a result, a wider range of experiments becomes
possible, including learning more about the fundamentals of qubits and quantum
dots and developing new techniques for working with devices with multiple
qubits.

What bank leaders should know about AI in financial services

While this technology has many exciting potential use cases, so much is still
unknown. Many of Finastra’s customers, whose job it is to be risk-conscious,
have questions about the risks AI presents. And indeed, many in the financial
services industry are already moving to restrict use of ChatGPT among
employees. Based on our experience as a provider to banks, Finastra is focused
on a number of key risks bank leaders should know about. Data integrity is
table stakes in financial services. Customers trust their banks to keep their
personal data safe. However, at this stage, it’s not clear what ChatGPT does
with the data it receives. This raises an even more concerning question: Could
ChatGPT generate a response that shares sensitive customer data? With the
old-style chatbots, questions and answers are predefined, governing what’s
being returned. But what is asked and returned with new LLMs may prove
difficult to control. This is a top consideration that bank leaders must weigh
and monitor closely. Ensuring fairness and lack of bias is another critical
consideration.

Are public or proprietary generative AI solutions right for your business?

Internal large language models are interesting. Training on the whole internet
has benefits and risks, and not everyone can afford to do that or even wants
to do it. I’ve been struck by how far you can get on a big pre-trained model
with fine-tuning or prompt engineering. For smaller players, there will be a
lot of uses of the stuff [AI] that’s out there and reusable. I think larger
players who can afford to make their own [AI] will be tempted to. If you look
at, for example, AWS and Google Cloud Platform, some of this stuff feels like
core infrastructure (I don’t mean what they do with AI, just what they do with
hosting and server farms). It’s easy to think ‘we’re a huge company, we should
make our own server farm.’ Well, our core business is agriculture or
manufacturing. Maybe we should let the A-teams at Amazon and Google make it,
and we pay them a few cents per terabyte of storage or compute. My guess is
only the biggest tech companies over time will actually find it beneficial to
maintain their own versions of these [AI]; most people will end up using a
third-party service.

Governance in the Age of Technological Innovation

To keep abreast of technological change and innovation, the board needs to
ensure that its innovation and risk agendas are up to date, and that
innovation is incorporated into the organisation’s strategy review. This may
involve reviewing key performance indicators, performance measures and
incentives. Within the board, the appropriate composition, culture and
interactions can promote innovation. Not all board directors will have the
relevant technical expertise, but more diverse boards can build collective
literacy and enhance human capital in the boardroom, said De Meyer. Where
necessary, committees such as scientific or innovation committees can be
created to drive greater attention to these topics. In these cases, naming
matters, said Janet Ang, non-executive Chair of the Institute of Systems
Science, in the panel discussion. For instance, referring to a committee as
“Technology and Risk” instead of narrowly naming it “IT” gives it more
weight and scope. Fundamentally, boards should strive not only for conformance
but also for performance, urged Su-Yen Wong, Chair of the Singapore Institute
of Directors.

Can You Renegotiate Your Cloud Bill by Refusing to Pay It?

Cloud hyperscalers continue to face questions about the cost and reliability
of their services, especially in light of the brief AWS outage on June 13 that
affected Southwest Airlines, McDonald’s, and The Boston Globe, among others.
Further, some organizations face regulatory requirements that preclude the use
of the cloud for certain datasets and transactions, Katz says. “There’s really
no one-size-fits-all answer because every manufacturer, every organization,
every company has different requirements.” There can be times when a
cloud-first approach does not make sense for organizations. Katz says his
company worked with a client whose dataset is very transactional with lots of
changes and database read-writes. “We ran an assessment for them and going off
to the public cloud was going to be eight times more expensive a month than
keeping it on prem.” ... Much of the market is pushing toward a cloud-first
world, but the economics could become challenging in the future. “At some
point in time, the cost of doing business in the cloud is going to be
exponentially higher, usually, than if you were to buy a depreciating asset
and then kick it to the curb,” Katz says.

Red teaming can be the ground truth for CISOs and execs

What red teams can give CISOs is the cold, hard truth of how their network
stacks up against threats that could be ruinous to the business. Red teams
leave no stone unturned and pull on every thread until it unravels. This
shines light on the vulnerabilities that will harm the finances or reputation
of the business. With a red team, objective-based continuous penetration
testing (led by experts who know attackers’ best tricks) can relentlessly
scrutinize the attack surface to explore every avenue that could lead to a
breakthrough. This proactive, “offensive security” approach will give a
business the most comprehensive picture of its attack surface that money can
buy, mapping out every possibility available to an attacker and how it can be
remediated. It is also not limited to testing the technology stack; for
businesses concerned that their employees are susceptible to social
engineering attacks, red teams can emulate social engineering scenarios as
part of their testing. A stringent social engineering assessment program
should not be overlooked in favor of only scrutinizing weaknesses in IT
infrastructure.

Quote for the day:

"Leadership is just another word for training." -- Lance Secretan