Cloud-Focused Attacks Growing More Frequent, More Brazen

One key finding is that hackers are becoming more adept, and more motivated, at
targeting enterprise cloud environments through a growing range of tactics,
techniques and procedures. These include deploying command-and-control
channels on top of existing cloud services, achieving privilege escalation,
and moving laterally within an environment after gaining initial access. ...
While attack vectors and methods are increasingly varied, they often rely on
some common denominators, including the oldest one around: human error. For
example, 38% of observed cloud environments were running with insecure default
settings from the cloud service provider. Indeed, cloud misconfigurations are
one of the major sources of breaches. Similarly, identity and access management
(IAM) is another huge area of risk rife with human error. In two out of three
cloud security incidents observed by CrowdStrike, IAM credentials were found
to be over-permissioned, meaning the user had more privileges than
necessary.
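
One way to picture "over-permissioned" is as the gap between what an identity is granted and what it actually uses. The following is a minimal sketch under that assumption; the `Identity` record, the permission strings, and the sample data are all hypothetical and do not correspond to any cloud provider's API or to CrowdStrike's methodology.

```python
from dataclasses import dataclass, field


@dataclass
class Identity:
    """Hypothetical record of one IAM identity (not a real provider API)."""
    name: str
    granted: set[str] = field(default_factory=set)  # permissions attached to the identity
    used: set[str] = field(default_factory=set)     # permissions exercised during the review window


def unused_permissions(identity: Identity) -> set[str]:
    """Return permissions that are granted but were never used."""
    return identity.granted - identity.used


def over_permissioned(identities: list[Identity]) -> dict[str, set[str]]:
    """Map each identity that holds unused grants to the permissions it could lose."""
    report: dict[str, set[str]] = {}
    for identity in identities:
        extra = unused_permissions(identity)
        if extra:
            report[identity.name] = extra
    return report


if __name__ == "__main__":
    # Illustrative data only.
    sample = [
        Identity("ci-deployer", {"storage:read", "storage:write", "iam:admin"}, {"storage:write"}),
        Identity("report-reader", {"storage:read"}, {"storage:read"}),
    ]
    for name, extra in over_permissioned(sample).items():
        print(name, sorted(extra))
```

In practice the "used" set would have to come from access logs over a long enough window, since a permission that is rarely used is not necessarily unnecessary.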

Enterprise Architecture Maturity Model – a Roadmap for a Successful Enterprise

Assessment is the evaluation of the EA practice against the reference model.
It determines the level at which the organization currently stands. It
indicates the organization’s maturity in the area concerned, and the practices
on which the organization needs to focus to see the greatest improvement and
the highest return on investment. ... Development of the EA is an ongoing
process and cannot be delivered overnight. An organization must patiently work
to nurture and improve upon its EA program until architectural processes and
standards become second nature and the architecture framework and the
architecture blueprint become self-renewing. Maturity assessment is a standard
business tool to understand the maturity level of the organization. An EAM
Assessment Framework comprises a maturity model with different maturity levels,
a set of elements to be assessed, a methodology, and a toolkit for
assessment (questionnaires, tools, etc.). The outcome is a detailed assessment
report, which describes the maturity of the organization as a whole, as well as
its maturity against each of the architectural elements.
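
To make the shape of such a report concrete, here is a minimal sketch of scoring questionnaire answers. It assumes a five-level scale, a simple average per element, and an overall score that is the average across elements; the element names and the scoring rules are illustrative assumptions, not part of any specific maturity model.

```python
from statistics import mean


def assess(responses: dict[str, list[int]], focus_count: int = 2) -> dict:
    """Summarize questionnaire scores (assumed scale: 1 = lowest, 5 = highest).

    `responses` maps each architectural element to its questionnaire scores.
    """
    for element, scores in responses.items():
        if not scores or any(score < 1 or score > 5 for score in scores):
            raise ValueError(f"scores for {element!r} must be non-empty and within 1..5")

    # Maturity per element: plain average of its answers (an assumption).
    per_element = {element: mean(scores) for element, scores in responses.items()}
    # Organization-wide maturity: average of the element averages (an assumption).
    overall = mean(list(per_element.values()))
    # Elements with the lowest scores are candidate focus areas.
    focus = sorted(per_element, key=per_element.get)[:focus_count]
    return {"per_element": per_element, "overall": overall, "focus": focus}


if __name__ == "__main__":
    # Hypothetical elements and answers.
    report = assess({
        "governance": [2, 2],
        "business architecture": [4, 4],
        "technology architecture": [3, 3],
    })
    print(report)
```

A real framework would likely weight questions and map scores to named maturity levels; the sketch only shows how an overall figure and per-element figures can come out of the same data.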

European Commission Wants Labels on AI-Generated Content -- Now

The regulatory push might lead to deeper scrutiny of where AI-generated
content comes from, down to its data sources. Jan Ulrych, vice president of
research and education at Manta, favors the efforts the EU is taking to
regulate this space. Manta is a provider of a data lineage platform that
offers visibility into data flows, and the company sees data lineage as a way to
fact-check AI content. Ulrych says when it comes to news content, there does
not seem to be an effective method in place yet to validate sources or make them
transparent enough for fact-checking in real time, especially given AI’s
ability to spawn content. “AI sped up this process by making it possible for
anyone to generate news,” he says. It is almost a given that generative AI
will not disappear because of regulations or public outcry, but Ulrych sees
the possibility of self-regulation among vendors along with government
guardrails as healthy steps. “I would hope, to a large degree, the vendors
themselves would invest into making the data they’re providing more
transparent,” he says.

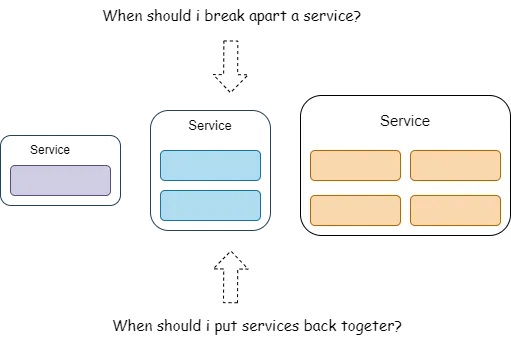

Finding The Right Size of a Microservice

Determining the right level of granularity (the size of the service) is one
of the many hard parts of a microservices architecture that we as developers
struggle with. Granularity is not defined by the number of classes or lines of
code in a service, but rather by what the service does; hence the
conundrum of getting service granularity right. ... Since we are living in the
era of micro-services and nano-services, most development teams make the mistake
of breaking services apart arbitrarily and ignoring the consequences that come
with doing so. In order to find the right size, one should carry out a trade-off
analysis on different parameters and make a calculated decision on the context
and boundary of a microservice. ... The scope and function mainly depend on two
attributes. The first is cohesion, which means the degree and manner in which the
operations of a particular service interrelate. The second is the overall size
of a component, usually measured in terms of the number of responsibilities,
the number of entry points into the service, or both.
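
The two attributes can be turned into rough numbers for a trade-off discussion. The sketch below is only one possible proxy and is not taken from the article: it assumes each operation is described by the set of data entities it touches, uses the average pairwise Jaccard similarity of those sets as a stand-in for cohesion, and counts operations as entry points. All service and entity names are hypothetical.

```python
from itertools import combinations


def jaccard(a: set[str], b: set[str]) -> float:
    """Overlap of two sets: |a & b| / |a | b| (1.0 when both are empty)."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def cohesion(operations: dict[str, set[str]]) -> float:
    """Average pairwise similarity of the entities each operation touches.

    This is an assumed proxy: values near 1.0 suggest the operations belong
    together, values near 0.0 suggest unrelated responsibilities.
    """
    pairs = list(combinations(operations.values(), 2))
    if not pairs:
        return 1.0  # a single operation is trivially cohesive
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)


def entry_points(operations: dict[str, set[str]]) -> int:
    """Size proxy: the number of operations exposed by the service."""
    return len(operations)


if __name__ == "__main__":
    # Hypothetical service that mixes order handling with newsletters.
    service = {
        "create_order": {"order", "customer"},
        "cancel_order": {"order", "customer"},
        "send_newsletter": {"subscriber"},
    }
    print(f"cohesion={cohesion(service):.2f}, entry points={entry_points(service)}")
```

A low score with several entry points, as in the sample, is a hint that an operation such as `send_newsletter` may belong in a different service; it is a prompt for analysis, not a decision rule.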

What is Web3 decentralized cloud storage?

Web3 storage is, as the name suggests, decentralised, meaning the data is held
across multiple repositories. If a government agency, or hacker, wanted to
obtain confidential data, there’s no single location to raid. Unless granted
the user’s keys, there’s no way to unlock data held on Web3 storage. Security
and privacy are guaranteed.

‘For a company looking for resilient, low cost, and predictable storage … Web3
storage is now undeniably a viable – if still unusual – proposition’

Web3 cloud storage scales well. Local storage can run
out, but with Web3 there is always room for more (even if you may have to pay
to access the extra space). “It can also scale horizontally, accommodating the
increasing demand for data storage without centralised bottlenecks,” says
Servadei. Access speeds are acceptable. “It’s going to be slower than you’d
have [with] a normal hard-drive or CD. But it stores data the same way Amazon S3
stores data.” Decentralised storage is also a more permanent way to store files.
Hosting sites don’t last forever. Anyone wanting to access historic websites
on Geocities or 4sites or Xanga will know the annoyance of web hosts going
bust. Link rot is a curse of the internet.

To solve the cybersecurity worker gap, forget the job title and search for the skills you need

Steven Sim, CISO for a global logistics company and a member of the Emerging
Trends Working Group with the IT governance association ISACA, has adopted
this thinking. ... “They may not have the relevant [security] certification,
but they have the domain knowledge,” he says, pointing out that OT security
has some requirements that differ from IT security, which makes that OT
background particularly valuable on his team. Sim says he looks for “a passion
and keenness to learn” in such candidates. He also looks for candidates who
demonstrate ownership of their work, a high degree of integrity, a willingness
to collaborate, and a “risk-based mindset.” Sim then upskills such hires by
having them receive on-the-job training and earn security certifications.
Moreover, he says drawing workers from OT helps create more collaboration with
the function and ultimately more secure OT operations. He says that result has
helped get OT leaders on board with his recruiting efforts, adding that they
see it as a “symbiotic win-win relationship.”

Innovation without disruption: virtual agents for hyper-personalized customer experience (CX)

VAs help “hold the fort” on routine calls so live agents can focus more on
complicated interactions, but they’re smart enough to handle certain
complexities on their own. They can effortlessly navigate topics, handle a
wide range of questions, and seamlessly operate across multiple channels. The
technology also grows in intelligence with use, allowing VAs to act with
greater – comparably humanlike – awareness. For example, you might present a
customer with a choice of channels for engagement such as chat, phone, and
social media. After communicating with the customer, your VA can default to
that person’s preferred channel for future conversations. ... VAs can
hyper-personalize even routine interactions. Let’s say a customer initiates a
chat session with a VA for resetting a forgotten password. The VA can ask the
customer if they would like to switch to text messaging for a more effective
multimedia experience. If the customer accepts, the chat session will end and
the VA will seamlessly switch to SMS.

Building a secure coding philosophy

Discussing secure coding, Læarsson says: “From criteria’s definition through
coding and release – our quality assurance processes include both automated
and manual testing, which helps us ensure that we push and maintain high
standards with every application and update we do. The software we develop is
tested for both functional and structural quality standards – from how
effectively applications adhere to the core design specifications, to whether
it meets all security, accessibility, scalability and reliability standards.”
Peer review is used to run an in-depth technical and logical line-by-line
review of code to ensure its quality. Within the National Digitalisation
Programme, Læarsson says: “Our low-code development projects are divided into
scrum teams, where each team creates stories and tasks for each sprint and
defines specific criteria for these.” These stories enable people to
understand the role of a particular piece of software functionality. “When
stories are done, they are tested by the same analysts who have specified the
stories.”

UK Takes the First Step to Stop Authorized Payment Scams

The U.K.'s Payment Systems Regulator (PSR) said fighting authorized push payment
(APP) scams requires taking an ecosystem-level approach. Fraudsters are
specifically targeting faster payment
services because of the speed of transactions, so financial institutions need to
be confident that they can authorize payments between each other, no matter what
the channel. Consumers and businesses have always trusted banks to provide
expertise and capabilities they do not possess themselves. They want to know
that their bank is doing everything it can to protect them from scammers. Ken
Palla, retired director of MUFG Bank, said the regulator has put together a very
detailed and complete document. "It is clear what is included in the policy
statement and what is excluded. The PSR wants payment firms to take
responsibility for protecting their customers at the point a payment is made. In
doing so, it expects the new reimbursement requirement to lead firms to innovate
and develop effective, data-driven interventions to change customer behavior."

Building a culture of security awareness in healthcare begins with leadership

A well-tailored security program must be just that: tailored. Many security
legal frameworks are moving from specificity in controls towards a
discretionary-based approach. This “discretionary” standard is applied by
governing bodies that interpret the leading-edge developments in the industry.
An organization must trace what data is stored or processed and ensure security
controls are mapped internally to the organization and externally across vendors.
Healthcare organizations must dedicate time to ensure appropriate
administrative, technical, and physical controls are in place at the
organization and its vendors to protect data stored and processed. The saying
“one size fits all” is never true for how a security program is administered and
applied in the healthcare technology industry, or any other industry. However,
the fundamental principles are the same: understanding what data is processed by
an organization, identifying true risks (internal and external) to the data,
evaluating the impacts of those risks, and determining whether existing controls
are adequate to reduce those risks to an acceptable standard.
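
The last two principles, evaluating impact and judging whether controls are adequate, can be sketched as a small calculation. The scoring below is an assumption chosen for illustration (likelihood and impact on a 1 to 5 scale, control effectiveness as a fraction, and an arbitrary acceptance threshold); it is not drawn from the article or from any particular regulatory framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    """Hypothetical risk register entry."""
    name: str
    likelihood: int               # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int                   # assumed scale: 1 (minor) to 5 (severe)
    control_effectiveness: float  # assumed fraction of the risk removed by controls, 0.0 to 1.0


def residual_score(risk: Risk) -> float:
    """Inherent score (likelihood x impact) reduced by existing controls."""
    inherent = risk.likelihood * risk.impact
    return inherent * (1.0 - risk.control_effectiveness)


def needs_treatment(risks: list[Risk], acceptable: float = 6.0) -> list[str]:
    """Names of risks whose residual score exceeds the acceptance threshold."""
    return [risk.name for risk in risks if residual_score(risk) > acceptable]


if __name__ == "__main__":
    # Illustrative entries only.
    register = [
        Risk("vendor stores unencrypted patient data", 4, 5, 0.5),
        Risk("lost staff laptop", 3, 4, 0.75),
    ]
    print(needs_treatment(register))
```

The threshold is where the tailoring happens: each organization sets its own acceptable level, so the same register can produce different priorities in different settings.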

Quote for the day:

"The key to being a good manager is keeping the people who hate me away from
those who are still undecided." -- Casey Stengel
