Top 5 Internet Technologies of 2021

Speaking of React, 2021 didn’t see any diminishment of the popular

Facebook-derived JavaScript library. Although React-based frameworks abound, one

in particular stood out this year: Next.js, the open source framework managed by

Vercel. At the end of October, Vercel announced version 12 of Next.js, which

included ES modules and URL imports, instant Hot Module Replacement (HMR), and

something called “Middleware” that enables you to “run code before a request is

completed.” Next.js is indicative of the rise of SSGs (Static Site Generators)

over the past few years, with Gatsby and Hugo other examples. However, there

has been a noticeable move away from pure static generation — Next.js now

describes itself as a “hybrid static [and] server rendering” framework. Next.js

developers love its ease-of-use and all the fancy features (like “edge

functions”), but not everyone is enamored with the output of Next.js-made

apps. That’s perhaps more of an indictment of React itself than of Next.js. But it

is worth noting that there is increasing pushback against React frameworks on

the web, due to the amount of JavaScript they tend to use.

Why it’s time to rethink your cyber talent and retention strategy

Organisations that don’t invest in cyber skills training and development

programmes for technical personnel and the wider workforce risk throttling their

future internal talent marketplace. Today’s increasingly digital workplace means

cyber security is everyone’s business. By extending cyber awareness and training

to all employees, organisations will be able to mobilise those individuals who

demonstrate aptitude and interest to build up their skill sets and acquire

industry-recognised certifications that will help the organisation expand and

strengthen its cyber security teams. Alongside initiating a mentorship programme

to help people make a ‘job shift’ into cyber security roles, organisations

should look to facilitate defined cyber security career pathways. ... Many IT

leaders are already active members of knowledge networks and communities that

present a rich seam of opportunity when it comes to virtually meeting and

evaluating potential candidates who are an exact match for their business, in a

highly targeted way.

On the Importance of Bayesian Thinking in Everyday Life

Surprisingly, there is no consensus as to what probability really means. In

general, there are two ways to think about it. One is to define probability as

the observed frequency of events in many trials. For instance, if we

toss a coin many times, approximately half of the outcomes will be heads, and

the other half will be tails. The more tosses, the closer the observed

frequencies will be to 50–50. Hence, we say that the probability of tossing

heads (or tails) is 50%, or 0.5. This is the so-called frequentist

probability. There is also another way to think about it, known as subjective

or Bayesian probability. In a nutshell, this definition states that a person’s

subjective belief about how likely something is to happen is also a

probability. I might say: I think there is a 50% chance it will rain tomorrow.

It is a valid statement of a Bayesian probability, but not of a frequentist

one. ... Whichever definition of probability we adopt (and we will see

both in action shortly), probability always follows certain rules. It is a

number between 0 and 1 that expresses how certain something is to

happen.
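The frequentist reading above can be checked with a small simulation. The following is a minimal sketch, not part of the original article: the function name `heads_frequency` and the fixed seed are assumptions made for illustration, and the coin is assumed to be fair.

```python
import random


def heads_frequency(num_tosses: int, seed: int = 0) -> float:
    """Return the observed fraction of heads in `num_tosses` simulated tosses.

    Hypothetical helper for illustration; assumes a fair coin.
    """
    rng = random.Random(seed)  # fixed seed so repeated runs give the same result
    heads = sum(1 for _ in range(num_tosses) if rng.random() < 0.5)
    return heads / num_tosses


# Observed frequencies for increasingly long runs of tosses.
for n in (10, 1_000, 100_000):
    print(n, heads_frequency(n))
```

With more tosses, the printed frequency should tend to settle near 0.5, which is the sense in which a frequentist says the probability of heads is 0.5.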

The Future of Work is Not Corporate - It’s DAOs and Crypto Networks

As companies grow, they are no longer able to maintain a sustainable

relationship with these orbital network participants. The relationship

between the company and the participants turns zero-sum, and in order to

maximize profits, the company begins to extract value from these

participants. ... The model of a company having strict boundaries between

internal and external may have made sense in the Industrial Age, but in the

Information Age, this model leads to misaligned incentives and unsustainable

extraction. In our world of complex information and orbital stakeholders,

companies are no longer suited to help us coordinate our activity. Crypto

networks create better alignment between participants, and DAOs will be the

coordination layer for this new world. ... DAOs will eventually replace the

traditional model. A DAO is an internet-native organization with core

functions that are automated by smart contracts, and with people who do the

things that automation cannot. In practice, not all DAOs are decentralized

or autonomous, so it is best to think of DAOs as internet-based

organizations that are collectively owned and controlled by their members.

The future is not the Internet of Things… it is the Connected Intelligent Edge

It is not surprising that Qualcomm is talking about it. At its recent

Investor Day presentation, CEO Cristiano Amon shared how the company is uniquely

positioned to drive the Connected Intelligent Edge: “We are working to

enable a world where everyone and everything is intelligently connected. Our

mobile heritage and DNA puts us in an incredible position to provide

high-performance, low-power computing, on-device intelligence, all wireless

technologies, and leadership across not only AI processing and connectivity

but camera, graphics, and sensors. These technologies will scale to support

every single device at the edge, from earbuds all the way to connected

intelligent vehicles.” For Qualcomm, Amon sees this as an opportunity to

engage a $700 billion addressable market in the next decade. Amon is not

alone. ... “Qualcomm is a leader at the Intelligent Edge, driving advances

in efficient computing, wireless connectivity and on-device AI. And your

vision for a future of technology where everyone and everything is

intelligently connected is aligned with our own,” Microsoft CEO Satya Nadella said.

Measure Outcomes, Not Outputs: Software Development in Today’s Remote Work World

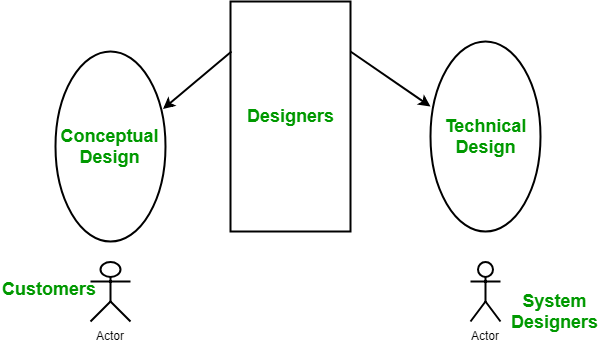

Lower productivity does not always mean that the developer lacks skills and

is therefore inefficient. Comparing how much code was written to how much

was moved into production provides some key insights. The first insight is

whether or not the developer was working on features that are important to

the business. Suppose the development team wrote a lot of code, but only a

small amount made it to production. In such a scenario, it could mean they

weren’t working on the right features because someone misunderstood the

business priorities or spent a lot of time on prototyping. Secondly, it is

possible that the product owner did not fully define the requirement and

kept on changing it, resulting in code churn. Code churn measures the amount

of code that was re-written for a feature to be done right. Code churn can

happen because of a) inexperienced developers writing bad code, b) the

developer’s poor understanding of the product requirements, c) the

product owner not defining the feature well, leading to scope changes, or d)

the product owner prioritizing features poorly.
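The two comparisons described above, code written versus code shipped and code rewritten versus code written, can be expressed as simple ratios. This is only a rough sketch: the `FeatureStats` fields and both function names are hypothetical, and the article does not prescribe a specific formula for either measure.

```python
from dataclasses import dataclass


@dataclass
class FeatureStats:
    """Hypothetical per-feature line counts, assumed to come from version control."""
    lines_written: int    # all lines written while building the feature
    lines_rewritten: int  # lines later rewritten or deleted (churn)
    lines_shipped: int    # lines that reached production


def churn_rate(stats: FeatureStats) -> float:
    """Fraction of written lines that had to be rewritten."""
    if stats.lines_written == 0:
        return 0.0  # nothing written, so nothing churned
    return stats.lines_rewritten / stats.lines_written


def shipped_ratio(stats: FeatureStats) -> float:
    """Fraction of written lines that made it to production."""
    if stats.lines_written == 0:
        return 0.0
    return stats.lines_shipped / stats.lines_written


# Illustrative numbers only, not data from the article.
example = FeatureStats(lines_written=1000, lines_rewritten=250, lines_shipped=400)
print(churn_rate(example), shipped_ratio(example))
```

A high churn rate or a low shipped ratio does not say which of the causes above is at work; it only flags that a conversation about requirements and priorities may be needed.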

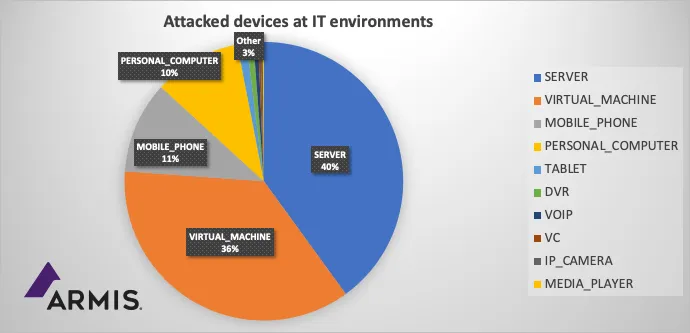

Lights Out: Cyberattacks Shut Down Building Automation Systems

The firm, located in Germany, discovered that three-quarters of the BAS

devices in the office building system network had been mysteriously purged

of their "smarts" and locked down with the system's own digital security

key, which was now under the attackers' control. The firm had to revert to

manually flipping on and off the central circuit breakers in order to power

on the lights in the building. The BAS devices, which control and operate

lighting and other functions in the office building, were basically bricked

by the attackers. "Everything was removed ... completely wiped, with no

additional functionality" for the BAS operations in the building, explains

Thomas Brandstetter, co-founder and general manager of Limes Security, whose

industrial control system security firm was contacted in October by the

engineering firm in the wake of the attack. Brandstetter's team, led by

security experts Peter Panholzer and Felix Eberstaller, ultimately retrieved

the hijacked BCU (bus coupling unit) key from memory in one of the victim's

bricked devices, but it took some creative hacking.

What Log4Shell teaches us about open source security

Nearly every organization now uses some amount of open source, thanks to

benefits such as lower cost compared with proprietary software and

flexibility in a world increasingly dominated by cloud computing. Open

source isn’t going away anytime soon — just the opposite — and hackers know

this. As for what Log4Shell says about open-source security, I think it

raises more questions than it answers. I generally agree that open-source

software has security advantages because of the many watchful eyes behind it

— all those contributors worldwide who are committed to a program’s quality

and security. But a few questions are fair to ask: Who is minding the gates

when it comes to securing foundational programs like Log4j? The Apache Software

Foundation says it has more than 8,000 committers collaborating on 350

projects and initiatives, but how many are engaged to keep an eye on an

older, perhaps “boring” one such as Log4j? Should large deep-pocketed

companies besides Google, which always seems to be heavily involved in such

matters, be doing more to support the cause with people and resources?

AI Comes Alive in Industrial Automation

AI and ML tools are being used to predict future energy consumption

patterns in manufacturing. This mitigates soaring energy costs and also

helps offset climate change. AI also helps to sort out chaotic systems such

as renewables. “Training these AI models is burning tons of energy. That’s

not false. It does take energy,” said Nicholson. “But what people are

missing is that AI models are designed to help companies with enormous

physical systems operate more efficiently.” While AI takes up a lot of

processing energy, the results in efficiency savings can far outweigh the

expense in energy consumption. “AI can help us make more with less. We can

cut down on waste with optimization. We can get growth without consuming

more,” said Nicholson. “We can train an optimization model in 20 minutes to

save a company tens of millions of dollars of energy consumption per year.

The advantages can be huge. That’s already happening.” AI can help plant

managers figure out what equipment is best for what task at what time. These

are issues that are not easily solved without computer analysis.

Backdoor Discovered in US Federal Agency Network

Avast's suspicion of network interception and exfiltration is based on its

analysis of two files the researchers obtained. The company did not provide

ISMG with the origin of the files. One of the files, through which the

threat actor initiates the backdoor, is termed a "downloader" by Avast.

It masquerades as a legitimate Windows file named oci[.]dll and abuses

WinDivert, a legitimate packet-capturing utility that can be used to

implement user-made packet filters, packet sniffers, firewalls, NAT, VPNs,

tunneling applications, etc., without the need to write kernel-mode code.

This allows the attacker to listen to all internet communication via the

victim's network, they say. "We found this first file disguised as oci.dll

('C:\Windows\System32\oci.dll') - or Oracle Call Interface. It contains a

compressed library [called NTlib]. This oci.dll exports only one function

'DllRegisterService.' This function checks the MD5 of the hostname and stops

if it doesn’t match the one it stores."

Quote for the day:

"Without courage, it doesn't matter how good the leader's intentions are." -- Orrin Woodward