Software Engineering XOR Data Science

Software metrics such as the number of requests, response time, and

error types can be extracted by writing good logs. It is also great to do data

checks in the controller class where the requests are received and forwarded to

desired services before starting the operations for both SW and DS projects,

especially if the UI side doesn’t do any checks. Regex validations and data type checks

can save an application in terms of both performance and security. An

easy SQL injection may be prevented by a simple validation and it can even save

the company’s future. On the DS side, there should be further monitoring besides

the software metrics. Distribution of the model features and the model

predictions are vital. If the distribution of any data column changes, there

might be a data shift, and the model may need to be retrained. If the

prediction results can be validated in a short time, they should be

monitored, and developers should be warned when the accuracy falls below or rises above a given

threshold.
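
The two checks described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a definitive implementation: the field names (`username`, `age`), the regex, and the helper names `validate_request` and `psi` are hypothetical, and the Population Stability Index is just one common way to quantify a change in distribution; the text itself does not prescribe a specific measure.

```python
import math
import re

# Hypothetical rule: usernames are 3-32 letters, digits, or underscores.
USERNAME_RE = re.compile(r"[A-Za-z0-9_]{3,32}")


def validate_request(payload: dict) -> list:
    """Return a list of validation errors; an empty list means the payload passed."""
    errors = []
    username = payload.get("username")
    if not isinstance(username, str) or not USERNAME_RE.fullmatch(username):
        errors.append("username must be 3-32 letters, digits, or underscores")
    age = payload.get("age")
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(age, bool) or not isinstance(age, int) or not 0 <= age <= 150:
        errors.append("age must be an integer between 0 and 150")
    return errors


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a baseline sample and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clip out-of-range values
        # eps keeps empty bins from producing log(0) or division by zero
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


if __name__ == "__main__":
    print(validate_request({"username": "x'; DROP TABLE users;--", "age": "30"}))
    baseline = [i / 100 for i in range(1000)]
    live = [x + 3 for x in baseline]
    # 0.25 is a commonly quoted rule-of-thumb alert level, used here as an assumption.
    if psi(baseline, live) > 0.25:
        print("feature distribution shifted; consider retraining")
```

Rejecting malformed input in the controller keeps it away from the services and the database, while the drift check can run on a schedule against each monitored feature or prediction column.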

What Developers Should Look for in a Low-Code Platform

To minimize technical debt, it makes sense to reduce the number of platforms on

which you build your apps. It follows that the fewer systems you have, the more

value you get from each. When choosing a low-code platform, evaluate the other

workflows you have deployed on that platform already, or plan to deploy in the

future. Let’s say you’re considering an employee suggestion box app. If you

already have, or will have, a platform where you run employee workflows, then

you not only shorten the time it takes to create the suggestion box app, but

also avoid the technical debt of costly integrations. Bringing in a new

platform means you have to replicate the employee information, which creates

additional cost to implement and maintain. You can remove the need for that

integration by having a single system of record where your employee suggestion

box and existing employee workflows exist. Process governance is how you manage

things like the request or intake process, change management and release

management. When it’s time to release an app on your low-code platform,

theoretically it should be as easy as clicking a button. But this is where

technical governance comes in.

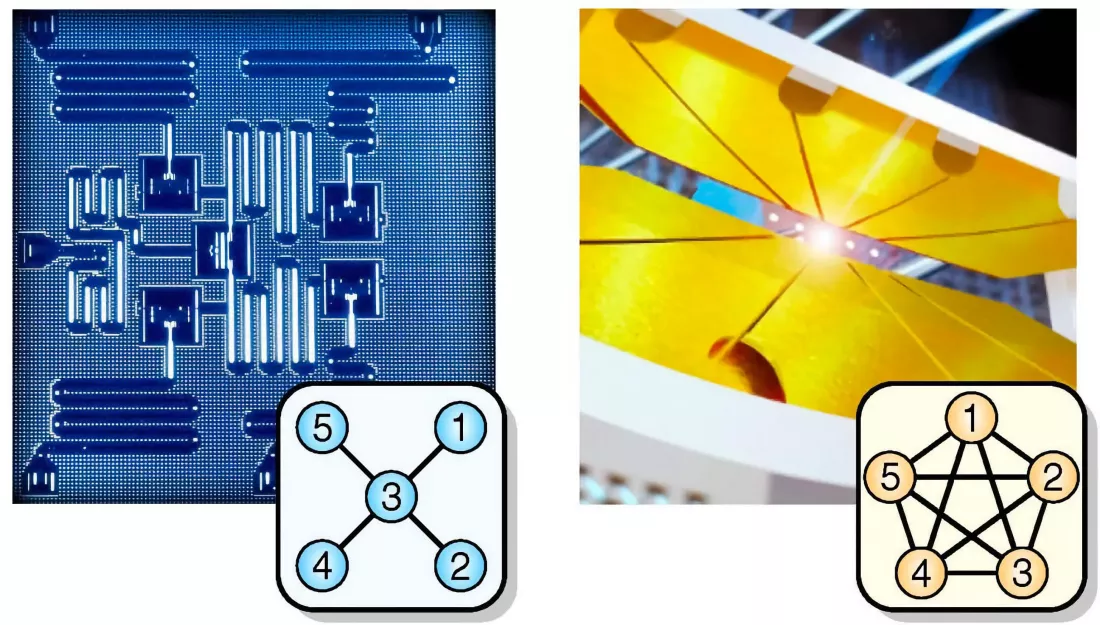

The race to secure Kubernetes at run time

Although developers now tend to test earlier and more often—or shift left, as it

is commonly known—containers require holistic protection throughout the entire

life cycle and across disparate, often ephemeral environments. “That makes

things really challenging to secure,” Gartner analyst Arun Chandrasekaran told

InfoWorld. “You cannot have manual processes here; you have to automate that

environment to monitor and secure something that may only live for a few

seconds. Reacting to things like that by sending an email is not a recipe that

will work.” In its 2019 white paper “BeyondProd: A new approach to cloud-native

security,” Google laid out how “just as a perimeter security model no longer

works for end users, it also no longer works for microservices,” where

protection must extend to “how code is changed and how user data in

microservices is accessed.” Where traditional security tools focused on either

securing the network or the individual workloads, modern cloud-native

environments require a more holistic approach than just securing the build.

How organizations are beefing up their cybersecurity to combat ransomware

Educating employees about cybersecurity is another key method to help thwart

ransomware attacks. Among those surveyed, 69% said their organization has

boosted cyber education for employees over the last 12 months. Some 20% said

they haven't yet done so but are planning to increase training in the next 12

months. Knowing how to design your employee security training is paramount. Some

89% of the respondents said they've educated employees on how to prevent

phishing attacks, 95% have focused on how to keep passwords safe and 86% on how

to create secure passwords. Finally, more than three-quarters (76%) of the

respondents said they're concerned about attacks from other governments or

nation states impacting their organization. In response, 47% said they don't

feel their own government is taking sufficient action to protect businesses from

cyberattacks, and 81% believe the government should play a bigger role in

defining national cybersecurity protocol and infrastructure. "IT environments

have become more fluid, open, and, ultimately, vulnerable," said Bryan Christ,

sales engineer at Hitachi ID Systems.

DevOps transformation: Taking edge computing and continuous updates to space

One of the biggest technological pain points in space travel is power

consumption. On Earth, we’re beginning to see more efficient CPU and memory, but

in space, throwing away the heat of your CPU is quite hard, making power

consumption the critical component. From hardware to software to the way you do

processing, everything needs to account for power consumption. On the flip side,

in space, there is one thing that you have plenty of (obviously): space. This

means that the size of physical hardware is less of a concern. Weight and power

consumption are larger issues, because those factors also impact the way

microchips and microprocessors are designed. A great example of this can be

found in the Ramon Space design. The company uses AI- and machine

learning-powered processors to build space-resilient supercomputing systems with

Earth-like computing capabilities, with the hardware components ultimately

controlled by the software they have riding on top of them. The idea is to

optimize the way software and hardware are utilized so applications can be

developed and adapted in real time, just as they would be here on Earth.

What is LoRaWAN and why is it taking over the Internet of Things?

The wireless communication takes advantage of the long-range characteristics of

the LoRa physical layer allowing a single-hop link between the end-device and

one or many gateways. All modes are capable of bi-directional communication, and

there is support for multicast addressing groups to make efficient use of

spectrum during tasks such as Firmware Over-The-Air (FOTA) upgrades. This has

led to LoRaWAN as the implementation of LoRa becoming the most widely used LPWAN

technology in the unlicensed bands below 1 GHz, providing battery-powered sensor

nodes with kilometres of range for the expanding applications across the

Internet of Things. A key area for this low-power, long-range connectivity is

smart cities. The ability to place wireless smart sensors for air quality,

traffic density and transportation wherever they are needed across the urban

infrastructure can give key insights into the activity of the city. The robust,

low power nature of the protocol allows local authorities to run a

cost-effective network with sensors in the right place, whether powered by local

power lines, batteries or solar panels.

Serious security vulnerabilities in DRAM memory devices

Razavi and his colleagues have now found that this hardware-based "immune

system" only detects rather simple attacks, such as double-sided attacks where

two memory rows adjacent to a victim row are targeted, but can still be fooled by

more sophisticated hammering. They devised software aptly named "Blacksmith"

that systematically tries out complex hammering patterns in which different

numbers of rows are activated with different frequencies, phases and amplitudes

at different points in the hammering cycle. After that, it checks if a

particular pattern led to bit errors. The result was clear and worrying: "We saw

that for all of the 40 different DRAM memories we tested, Blacksmith could

always find a pattern that induced Rowhammer bit errors," says Razavi. As a

consequence, current DRAM memories are potentially exposed to attacks for which

there is no line of defense—for years to come. Until chip manufacturers find

ways to update mitigation measures on future generations of DRAM chips,

computers continue to be vulnerable to Rowhammer attacks.
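
To make the idea of patterns with different frequencies, phases and amplitudes more concrete, here is a toy Python sketch that only builds an abstract access schedule. It does not touch memory and is not Blacksmith's code. The `Aggressor` type, the `build_schedule` and `random_pattern` helpers, and the exact meaning given to each parameter (bursts per period, slot offset, consecutive activations per burst) are assumptions made for illustration.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Aggressor:
    row: int        # row to activate (hypothetical row index)
    frequency: int  # bursts per pattern period (assumed meaning)
    phase: int      # slot offset of the first burst (assumed meaning)
    amplitude: int  # consecutive activations per burst (assumed meaning)


def build_schedule(aggressors, period):
    """Lay the aggressors out on a cyclic schedule of `period` slots.

    Each slot holds the row to activate, or None if the slot is idle.
    Earlier aggressors win when two bursts collide on the same slot.
    """
    schedule = [None] * period
    for agg in aggressors:
        stride = period // agg.frequency
        for k in range(agg.frequency):
            start = agg.phase + k * stride
            for j in range(agg.amplitude):
                slot = (start + j) % period
                if schedule[slot] is None:
                    schedule[slot] = agg.row
    return schedule


def random_pattern(rng, rows, period):
    """Draw random parameters for each row, as a fuzzer might when exploring patterns."""
    return [
        Aggressor(
            row=row,
            frequency=rng.choice([1, 2, 4]),
            phase=rng.randrange(period),
            amplitude=rng.randint(1, 3),
        )
        for row in rows
    ]


if __name__ == "__main__":
    fixed = [Aggressor(row=5, frequency=2, phase=1, amplitude=2)]
    print(build_schedule(fixed, period=8))
    # [None, 5, 5, None, None, 5, 5, None]

    rng = random.Random(0)
    print(build_schedule(random_pattern(rng, rows=[10, 12, 14], period=16), period=16))
```

A real tool would replay each candidate schedule against the DRAM and then scan neighbouring rows for bit flips; that step depends on low-level memory access and is left out here.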

Money Laundering Cryptomixer Services Market to Criminals

Here's how it functions in greater detail: "Mixers work by allowing threat

actors to send a sum of cryptocurrency - usually bitcoin - to a wallet address

the mixing service operator owns. This sum joins a pool of the service

provider's own bitcoins, as well as other cybercriminals using the service,"

Intel 471 says in a new report. "The initial threat actor's cryptocurrency joins

the back of the 'chain' and the threat actor receives a unique reference number

known as a 'mixing code' for deposited funds," the company says. "This code

ensures the actor does not get back their own 'dirty' funds that theoretically

could be linked to their operations. The threat actor then receives the same sum

of bitcoins from the mixer's pool, muddled using the service's proprietary

algorithm, minus a service fee." As a further anonymity boost, clean bitcoins

can be routed to additional cryptocurrency wallets to make the connections with

dirty bitcoins even more difficult for law enforcement authorities to track,

Intel 471 says.

Innovation resilience during the pandemic—and beyond

Despite some decline in government spending, the overall impact on global

innovation could be minimal. We have reason to be hopeful here: in 2008–09,

increases in corporate R&D spending compensated for shortfalls in government

R&D investment. And this appears to be true again today based on what we

know so far. About 70% of the 2,500 largest global R&D spenders have

released their 2020 R&D spending data. We found a healthy increase of

roughly 10% in 2020, with roughly 60% of these largest R&D spenders

reporting an increase. This reflects a decade-long trend of strong corporate

innovation investment, which is perhaps not surprising, as the pace of progress

in domains such as artificial intelligence and biotech has increased, and many

new commercial growth opportunities have opened up around them. Of course, the

view at the sector level is more nuanced. The pandemic-era focus on well-being

and the rapid production of vaccines saw increased investments in health-related

sectors, with estimates of US government investments in the development of the

COVID-19 vaccines ranging from US$18 billion to $23 billion.

How active listening can make you a better leader

When you let the world intrude on a conversation, you unconsciously tell the

other person that they are less important than the things around them. Instead,

with every interaction strive to make a connection and show people the respect

they deserve. To do this, start by limiting distractions. That means closing

your laptop, muting your phone, and parking work problems at the door so you can

focus and engage with this person in this moment. Of the hundreds of things

littering your calendar, is any one of them more important than leading your

team? ... It’s not enough simply to silence the world. Show the person that you

are listening intently through your responses and body language: Make eye

contact and provide brief verbal affirmations or nod, modulating the tone of

your voice as well as mirroring their body mannerisms. Paraphrasing what the

other person is saying can also be a helpful tool to show you understand or are

seeking clarity. When you take time to validate what someone is saying, they

will feel comfortable sharing more.

Quote for the day:

"The art of communication is the

language of leadership." -- James Humes