The Role of Artificial Consciousness in AI Systems

What this means is that giving AI programs common sense may not be enough to
deal with previously unencountered situations, because it’s difficult to know
the limits of common-sense knowledge. It may be that artificial consciousness is
the only way to ascribe meaning to the machine. Of course, artificial
consciousness will be different from the human variant. Philosophers such as
Descartes and Daniel Dennett, the physicist Roger Penrose, and many others have
proposed different theories of how the brain produces thinking from neural
activity. Neuroscience tools like fMRI scanners might lead to a better
understanding of how this happens and enable a move to the next level of
humanizing AI. But that would involve confronting what the Australian
philosopher David Chalmers calls the hard problem of consciousness: how can
subjectivity emerge from matter? Put another way, how can subjective experiences
emerge from neuronal activity in the brain? Furthermore, human consciousness can
only be understood through our own inner experience, the first-person
perspective.

Creating a Quality Strategy

Some teams might prefer to do ad-hoc exploratory testing with minimal
documentation. Other teams might have elaborate test case management systems
that document all the tests for the product. And there are many other options
in between. Whatever you choose should be right for your team and right for
your product. ... On some teams, the developers write the unit tests, and the
testers write the API and UI tests. On other teams, the developers write the
unit and API tests, and the testers create the UI tests. Even better is to
have both the developers and the testers share the responsibility for creating
and maintaining the API and UI tests. In this way, the developers can
contribute their code management expertise, while the testers contribute their
expertise in knowing what should be tested. ... Some larger companies may have
dedicated security and performance engineers who take care of this testing.
Small startups might have only one development team that needs to be in charge
of everything.

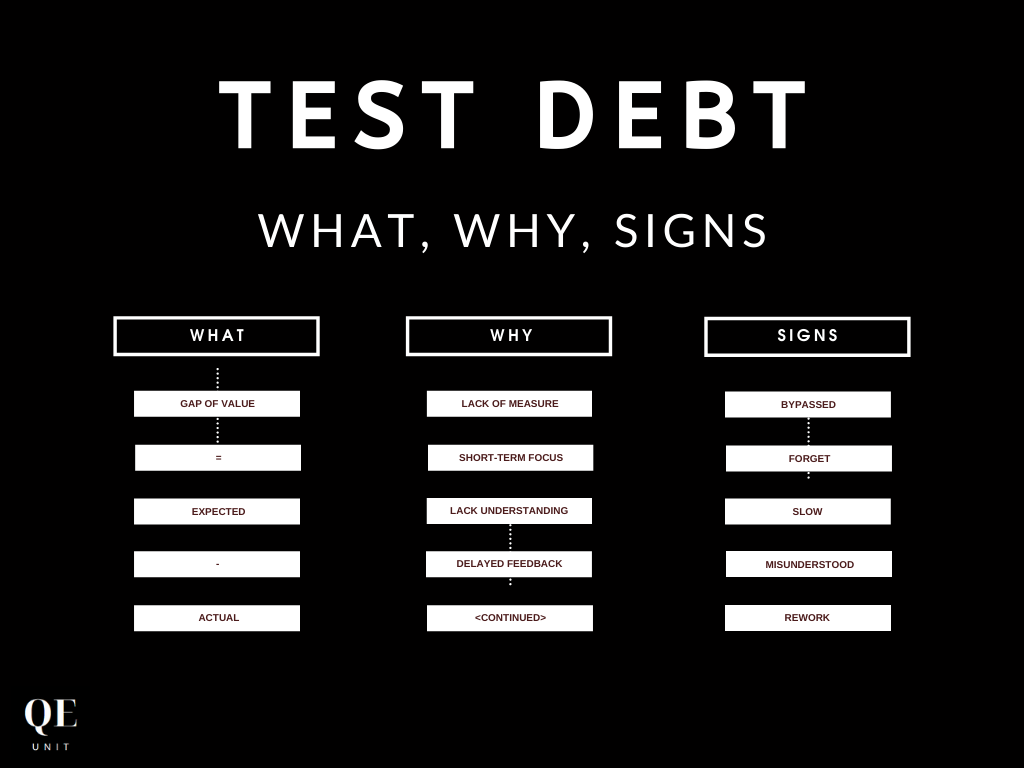

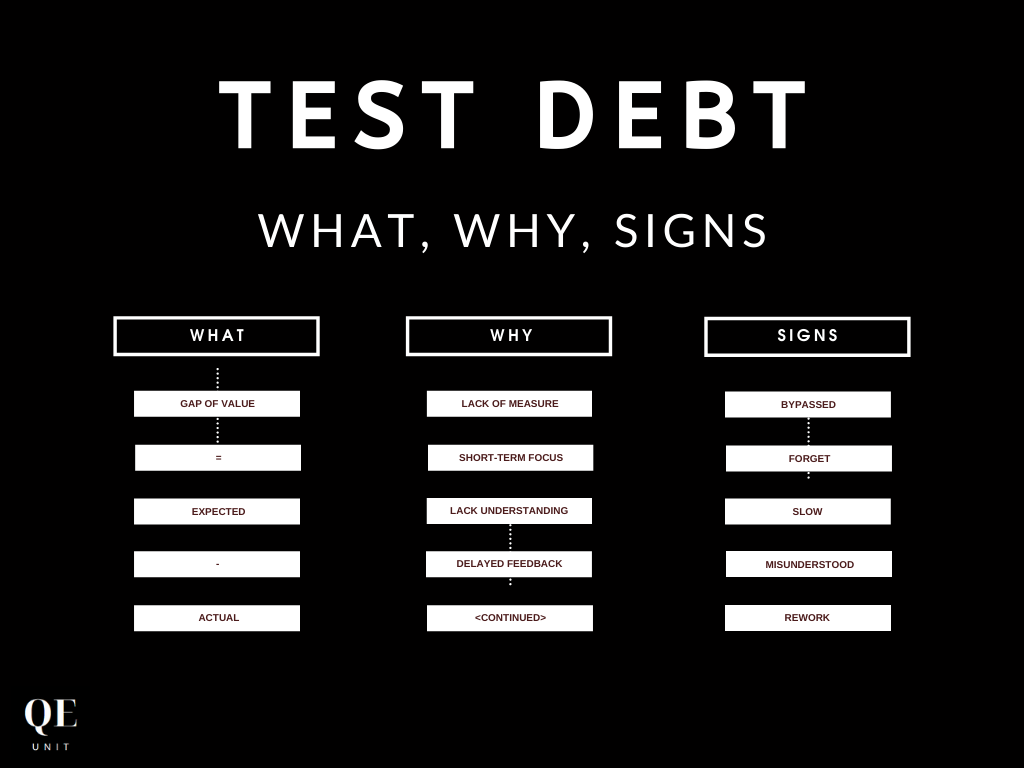

It's time to improve Linux's security

Believe it or not, many vendors, especially in the Internet of Things (IoT),
choose not to fix anything. Sure, they could do it. Several years ago, Linus
Torvalds, Linux's creator, pointed out that "in theory, open-source [IoT
devices] can be patched. In practice, vendors get in the way." Cook remarked
that, with malware here, botnets there, and state attackers everywhere, vendors
certainly should protect their devices, but, all too often, they don't.
"Unfortunately, this is the very common stance of vendors who see their
devices as just a physical product instead of a hybrid product/service that
must be regularly updated." Linux distributors, however, aren't as neglectful.
They tend to "cherry-pick only the 'important' fixes. But what constitutes
'important' or even relevant? Just determining whether to implement a fix
takes developer time." It hasn't helped any that Linus Torvalds has sometimes
made light of security issues. For example, in 2017, Torvalds dismissed some
security developers [as] "f-cking morons." He didn't mean to put all security
developers in the same basket, but his colorful language set the tone for too
many Linux developers.

Creating a Secure REST API in Node.js

Node.js is an open-source project sponsored by Joyent, a cloud computing and
Node.js development provider. The firm financed several other technologies,
such as the Ruby on Rails framework, and provided hosting for Twitter and
LinkedIn. LinkedIn also became one of the first companies to use Node.js,
building a new backend for its mobile application with it. The technology was
later adopted by many technology leaders, such as Uber, eBay, and Netflix.
Even so, it wasn’t until later that wide adoption of server-side JavaScript
with Node.js began. Investment in the technology peaked in 2017, and it
remains popular. Most popular code editors and IDEs offer support and plugins
for JavaScript and Node.js, so the choice mostly comes down to how you
customize your IDE for your coding requirements. That said, many Node.js
developers praise specific tools such as VS Code, Brackets, and WebStorm.
Using middleware rather than plain Node.js development is a common approach
that makes developers’ lives more comfortable.

In a world first, South Africa grants patent to an artificial intelligence system

At first glance, a recently granted South African patent relating to a “food
container based on fractal geometry” seems fairly mundane. The innovation in
question involves interlocking food containers that are easy for robots to
grasp and stack. On closer inspection, the patent is anything but mundane.
That’s because the inventor is not a human being: it is an artificial
intelligence (AI) system called DABUS. ... The granting of the DABUS patent in
South Africa has received widespread backlash from intellectual property
experts. The critics argued that it was the incorrect decision in law, as AI
lacks the necessary legal standing to qualify as an inventor. Many have argued
that the grant was simply an oversight on the part of the commission, which
has been known in the past to be less than reliable. Many also saw this as an
indictment of South Africa’s patent procedures, which currently consist only
of a formal examination step. This requires a check-box sort of evaluation:
ensuring that all the relevant forms have been submitted and are duly
completed.

Ford's new BlueCruise hands-off driving feature is a solid first effort

It keeps the vehicle in the center of the lane, but with a little too much
urgency. It's not a safety issue, but to a driver unfamiliar with what's going
on, the steering movements are a little too frequent and a little too jerky. I
can tell that the computer is working really hard to keep the car centered at
all times. I compared it to a 16-year-old driver who was still learning the
ropes and wasn't quite confident in their abilities, making frequent, jerky
input adjustments as they drive along rather than the smoother, more practiced
inputs that an experienced driver would make. It isn't necessary to always be
centered exactly in the lane, after all; an experienced driver knows that
drifting a few inches to the left or right is normal. I said to the Ford
engineers that most people probably wouldn't notice the tiny steering inputs,
but they might lose confidence in the system because of it, even if they
couldn't quite put their finger on why. Future releases will improve on it,
I'm sure. BlueCruise also isn't (yet) aware of anything going on to the side
or behind the vehicle.
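
The reviewer's point about tolerating a few inches of drift can be illustrated
with a toy model. The sketch below is not Ford's algorithm; it is a minimal
illustration that assumes a plain proportional controller and adds a deadband
(a swapped-in technique, not something the review describes). The function
names, the gain, the 3-inch deadband, and the drift samples are all
hypothetical.

```python
def steering_corrections(offsets_in, gain=0.5, deadband_in=0.0):
    """Return one proportional steering correction per lane-offset sample.

    offsets_in: lateral offsets from lane center, in inches (+ = right).
    Offsets whose magnitude is within the deadband produce no correction.
    """
    return [0.0 if abs(x) <= deadband_in else -gain * x for x in offsets_in]


def count_inputs(corrections):
    """Count the samples where the controller actually moved the wheel."""
    return sum(1 for c in corrections if c != 0.0)


# Hypothetical drift samples, in inches from lane center.
offsets = [0.8, -1.2, 0.4, 4.7, -0.6, 1.6, -5.9]

eager = steering_corrections(offsets)                     # corrects every sample
relaxed = steering_corrections(offsets, deadband_in=3.0)  # tolerates small drift

print(count_inputs(eager), count_inputs(relaxed))  # prints "7 2"
```

With no deadband the controller reacts to every sample, which corresponds to
the frequent, jerky inputs described above; with a deadband it only steers on
the two larger excursions.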

Critical Cobalt Strike bug leaves botnet servers vulnerable to takedown

Cobalt Strike is a legitimate security tool used by penetration testers to
emulate malicious activity in a network. Over the past few years, malicious
hackers (working on behalf of a nation-state or in search of profit) have
increasingly embraced the software. For both defender and attacker, Cobalt
Strike provides a soup-to-nuts collection of software packages that allow
infected computers and attacker servers to interact in highly customizable
ways. The main components of the security tool are the Cobalt Strike client,
also known as a Beacon, and the Cobalt Strike team server, which sends
commands to infected computers and receives the data they exfiltrate. An
attacker starts by spinning up a machine running Team Server that has been
configured to use specific “malleability” customizations, such as how often
the client is to report to the server or specific data to periodically send.
Then the attacker installs the client on a targeted machine after exploiting a
vulnerability, tricking the user, or gaining access by other means.

Test Debt Fundamentals: What, Why & Warning Signs

Test Debt is hard to measure factually, but we can rely on our human capacity
to detect, feel, and react to warning signs. For test automation, we can sense
organizational behaviors and specific test automation attributes. Let’s get
back to the Why of our automated tests. One objective of our test automation
effort is to accelerate the delivery of software changes with confidence. The
value of test automation disappears when the team starts to bypass the test
automation campaign, search for alternative routes, or ask for exceptions.
Possible reasons include a long execution time, instability, lack of
understanding, or other maintainability criteria. The execution time is
directly tied to essential indicators of software delivery: lead-time for
changes, cycle-time, and MTTA. These metrics are all part of the Accelerate
report, which correlates the organization’s performance with these measures.
We need to constrain our test execution time to limit its impact on these
acceleration metrics. For test automation, it means fewer but more valuable
tests executed faster.
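
Two of the warning signs named above, long execution time and instability, can
be estimated from recent run history. The following is a minimal sketch under
stated assumptions, not a method from the article: `RunHistory`, `flip_rate`,
`warning_signs`, the 600-second budget, and the 0.2 flip-rate threshold are
all hypothetical, and each test is assumed to have at least one recorded
duration.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class RunHistory:
    name: str
    durations_s: list[float]  # wall-clock seconds for recent runs (non-empty)
    outcomes: list[bool]      # True = pass, in chronological order


def flip_rate(outcomes):
    """Fraction of consecutive runs whose outcome changed (rough instability signal)."""
    if len(outcomes) < 2:
        return 0.0
    flips = sum(a != b for a, b in zip(outcomes, outcomes[1:]))
    return flips / (len(outcomes) - 1)


def warning_signs(histories, budget_s=600.0, max_flip_rate=0.2):
    """Summarize suite time against a budget and list unstable tests."""
    suite_time = sum(mean(h.durations_s) for h in histories)
    unstable = sorted(h.name for h in histories
                      if flip_rate(h.outcomes) > max_flip_rate)
    return {
        "suite_time_s": suite_time,
        "over_budget": suite_time > budget_s,
        "unstable": unstable,
    }


# Hypothetical history for three tests.
histories = [
    RunHistory("login_ui", [120.0, 130.0, 110.0], [True, False, True]),
    RunHistory("orders_api", [20.0, 22.0, 18.0], [True, True, True]),
    RunHistory("checkout_ui", [400.0, 500.0, 600.0], [True, True, False]),
]

report = warning_signs(histories)
print(report)
```

In this made-up data the suite averages 640 seconds, which exceeds the assumed
budget, and two UI tests change outcome between runs. Signals like these are
only a starting point for the human judgment the passage describes.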

Systems of systems: The next big step for edge AI

SoS will allow autonomous or semi-autonomous systems to control and respond to
data flows. In the defense sector, for example, it will connect the data dots
gathered from weather analysis, radars, and video surveillance to provide either
the quickest path for a missile, or the best way to intercept it. Separately, a
train technology provider that delivers transportation as a service needs to
unify the subsystems in a train and in a train station, expediting failure
flagging and repairs to reduce costly service delays. In each case, a system of
systems will inform or replace human decision-making, leading to faster,
smarter, and more precise insights. ... It’s no stretch to say that edge
AI-powered systems of systems will change society as we know it. Like bees
working together to build and maintain a hive, algorithms in a SoS will form a
swarm. Cars that can communicate with each other will be collectively smarter
and safer than any individual car. Inside one vehicle, a SoS will coordinate
navigation and telematics while independently gathering live weather and traffic
data from roads.

Mainframes: The Missing Link To AI (Artificial Intelligence)?

The power of AI for mainframes does not have to be about creating projects. For
example, there are emerging AIOps tools that help automate the systems. Some of
the benefits include improved performance and availability, increased support
speed for application releases and the DevOps process, and the proactive
identification of issues. Such benefits can be essential since it is
increasingly difficult to attract qualified IT professionals. According to
a recent survey from Forrester and BMC, about 81% of the respondents indicated
that they rely partially on manual processes when dealing with slowdowns and 75%
said they use manual labor for diagnosing multisystem incidents. In other words,
there is much room for improvement, and AI can be a major driver for this.
“Mainframe decision makers are becoming more aware than ever that the
traditional way of handling mainframe operations will soon fall by the wayside,”
said John McKenny, who is the Senior Vice President and General Manager of
Intelligent Z Optimization and Transformation at BMC.

Quote for the day:

"Ninety percent of leadership is the ability to communicate something people want." -- Dianne Feinstein
