Why API Quality Is Top Priority for Developers

Processes such as chaos engineering, load testing and manual quality assurance

can uncover situations where an API is failing to handle unexpected situations.

Deploying your API to a cloud provider with a compelling SLA instead of your own

hardware and network shifts the burden of infrastructure resiliency to a

service, freeing your time to build features for your customers. A comprehensive

suite of automated tests isn’t always sufficient to provide a robust API. Edge

cases, unexpected code branches and other unplanned behavior may be triggered by

requests that were not considered when writing the test suite. Traditional

automated tests should be complemented by fuzz testing to help uncover hidden

execution paths. ... It is expected that most APIs are built on layers of open

source libraries and frameworks. Software composition analysis is a necessity to

stay on top of zero-day vulnerabilities by identifying vulnerable dependencies

as soon as they are discovered. OWASP guidance is a must-have—directing API

developers to implement attack mitigation strategies such as CORS and CSRF

protection. Application logic must be well tested for authorization and

authentication.
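
To make the fuzz-testing point concrete, here is a minimal sketch in Python. The `items_per_page` handler, its `page_size` field, and the boundary values are hypothetical, invented for illustration; a real project would more likely point a dedicated fuzzing tool at its actual endpoints.

```python
import random
import string


def items_per_page(payload):
    """Hypothetical handler: splits 100 items into pages of the requested size."""
    raw = payload.get("page_size")
    if not isinstance(raw, str) or not raw.isascii() or not raw.isdigit():
        raise ValueError("page_size must be a string of ASCII digits")
    return 100 // int(raw)  # hidden bug: a page size of zero is never rejected


# Hand-picked edge cases that are always tried before the random inputs.
BOUNDARY_VALUES = ["", "0", "1", "-1", "9" * 50, " 7 ", None]


def random_value(rng):
    """Build a short random string from printable ASCII characters."""
    length = rng.randint(0, 8)
    return "".join(rng.choice(string.printable) for _ in range(length))


def fuzz(handler, iterations=500, seed=0):
    """Feed boundary and random inputs to the handler and collect surprises."""
    rng = random.Random(seed)
    candidates = list(BOUNDARY_VALUES)
    candidates += [random_value(rng) for _ in range(iterations)]
    failures = []
    for value in candidates:
        try:
            handler({"page_size": value})
        except ValueError:
            pass  # documented rejection, not a defect
        except Exception as exc:  # anything else is an unplanned code path
            failures.append((value, type(exc).__name__))
    return failures
```

Calling `fuzz(items_per_page)` reports the division by zero triggered by a page size of zero, the kind of input a hand-written test suite can easily overlook.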

New Ransomware Group Claiming Connection to REvil Gang Surfaces

Like many established ransomware operators, the gang behind Prometheus has

adopted a very professional approach to dealing with its victims — including

referring to them as "customers," PAN said. Members of the group communicate

with victims via a customer service ticketing system that includes warnings on

approaching payment deadlines and notifications of plans to sell stolen data via

auction if the deadline is not met. "New ransomware gangs like Prometheus follow

the same TTPs as big players [such as] Maze, Ryuk, and NetWalker because it is

usually effective when applied the right way with the right victim," Santos

says. "However, we do find it interesting that this group sells the data if no

ransom is paid and are very vocal about it." From samples provided by the

Prometheus ransomware gang on their leak site, the group appears to be selling

stolen databases, emails, invoices, and documents that include personally

identifiable information. "There are marketplaces where threat actors can sell

leaked data for a profit, but we currently don't have any insight on how much

this information could be sold in a marketplace," Santos says.

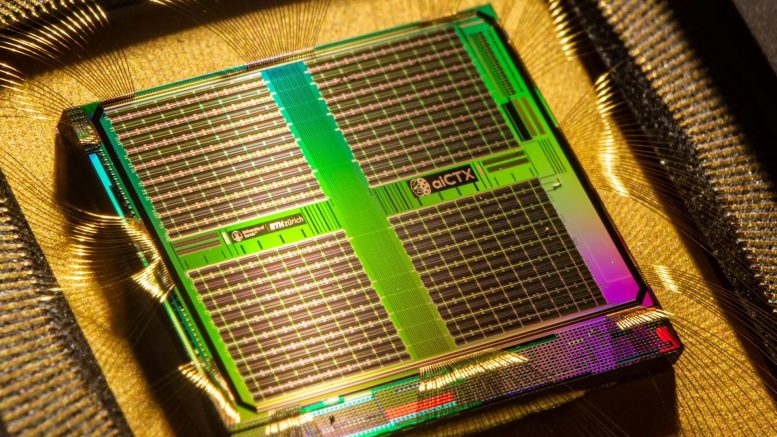

Google is using AI to design its next generation of AI chips more quickly than humans can

Google’s engineers trained a reinforcement learning algorithm on a dataset of

10,000 chip floor plans of varying quality, some of which had been randomly

generated. Each design was tagged with a specific “reward” function based on its

success across different metrics like the length of wire required and power

usage. The algorithm then used this data to distinguish between good and bad

floor plans and generate its own designs in turn. As we’ve seen when AI systems

take on humans at board games, machines don’t necessarily think like humans and

often arrive at unexpected solutions to familiar problems. When DeepMind’s

AlphaGo played human champion Lee Sedol at Go, this dynamic led to the infamous

“move 37” — a seemingly illogical piece placement by the AI that nevertheless

led to victory. Nothing quite so dramatic happened with Google’s chip-designing

algorithm, but its floor plans nevertheless look quite different to those

created by a human. Instead of neat rows of components laid out on the die,

sub-systems look like they’ve almost been scattered across the silicon at

random.
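
A reward of the kind described above could be sketched as a weighted penalty on wire length and power. Everything in this sketch is an assumption made for illustration: the grid coordinates, the block names, the half-perimeter wire-length estimate, the weights, and the idea that power arrives as a single number. It is not Google's actual reward function.

```python
def wire_length(placement, nets):
    """Estimate total wiring as the half-perimeter of each net's bounding box."""
    total = 0
    for net in nets:
        xs = [placement[block][0] for block in net]
        ys = [placement[block][1] for block in net]
        total += (max(xs) - min(xs)) + (max(ys) - min(ys))
    return total


def reward(placement, nets, power, w_wire=1.0, w_power=0.5):
    """Higher is better: penalize long wires and high power draw.

    The weights are arbitrary illustrative values.
    """
    return -(w_wire * wire_length(placement, nets) + w_power * power)


# Two hypothetical floor plans for the same three blocks.
nets = [("cpu", "cache"), ("cpu", "io")]
layout_a = {"cpu": (0, 0), "cache": (1, 0), "io": (0, 1)}
layout_b = {"cpu": (0, 0), "cache": (5, 3), "io": (2, 6)}
```

With equal power figures, `reward(layout_a, nets, power=4.0)` scores higher than the same call on `layout_b`, because `layout_a` needs less wire. A learning algorithm would use scores like these to tell better floor plans from worse ones.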

More and More Professionals Are Using Their Career Expertise to Launch Entrepreneurial Ventures

The first step is to immerse yourself within your training and specialty and

have the confidence to be a key thought leader in the space. Do the extra

research, spend the time to learn all of the new information and data in your

field to truly understand the opportunity within. "I have been fortunate to be

involved with several top academic institutions during my training. While the

training was fantastic, there were areas that I felt could be improved for the

ultimate outcome of increased access to high-quality healthcare," says Dr.

Bajaj. "Thankfully, this vision has resulted in great outcomes and happy

patients." ... "Ready. Fire. Aim!" as Dr. Bajaj puts it, "Time was not waiting

for me to be fully prepared. Sometimes you have to take the leap." In

entrepreneurship, there are no guarantees, which is quite different from some

of the career paths that we have trained for our entire academic life.

Guaranteed salary, retirement plans, and annual bonuses are far from promised

in your own business, and it is important to adapt accordingly. Everything

will not go according to plan, and it is important to find comfort with that.

As long as the launchpad for growth has been established, patience is the

biggest challenge, not security.

CISOs: It's time to get back to security basics

The goal of cybersecurity used to be protecting data and people's privacy,

Summit said. There has been a major shift in that thinking. "It's one thing to

lose a patient's data, which is extremely important to protect, but when you

start interrupting" people's ability to travel or the food supply chain, "you

have a whole different level of problems … It's not just about protecting data

but your operations. That's where major changes are starting to occur." Summit

added that he has long said that if companies had made cybersecurity a high

priority long before now, "we wouldn't be in this position" and facing

government scrutiny. The cybersecurity field is "incredibly dynamic," Hatter

said, and CISOs don't have the luxury of planning out three to five years. "We

want to create and deploy a strategy that's sound and solid. But market forces

demand; we recalibrate what we do and COVID-19 was a great example of that."

CISOs now have to have as resilient a strategy as possible but be prepared to

make changes. Managed security service providers can help, Summit said, but

CISOs are still feeling overwhelmed.

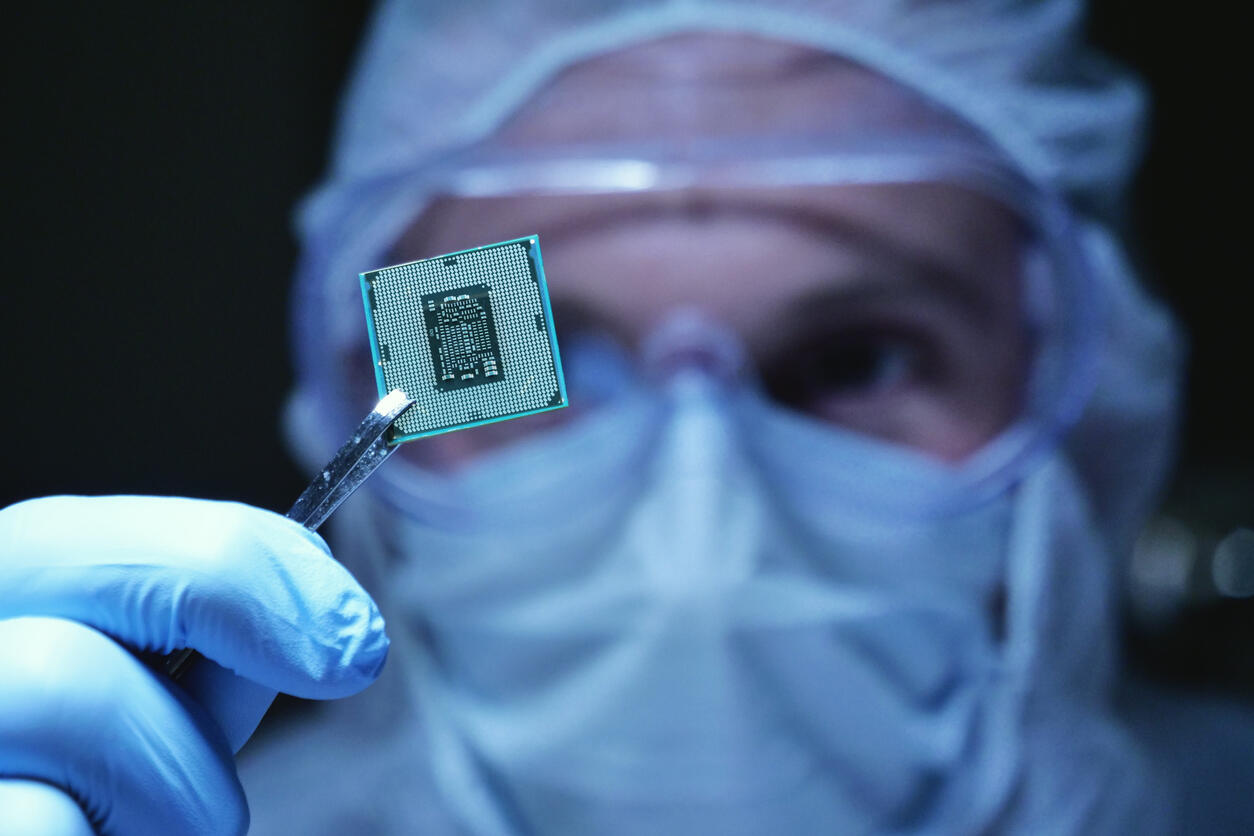

New quantum entanglement verification method cuts through the noise

Virtually any interaction with their environment can cause qubits to collapse

like a house of cards and lose their quantum correlations – a process called

decoherence. If this happens before an algorithm finishes running, the result

is a mess, not an answer. (You would not get much work done on a laptop that

had to restart every second.) In general, the more qubits a quantum computer

has, the harder they are to keep quantum; even today’s most advanced quantum

processors still have fewer than 100 physical qubits. The solution to

imperfect physical qubits is quantum error correction (QEC). By entangling

many qubits together in a so-called “genuine multipartite entangled” (GME)

state, where every qubit is entangled with every other qubit in that bunch, it

is possible to create a composite “logical” qubit. This logical qubit acts as

an ideal qubit: the redundancy of the shared information means if one of the

physical qubits decoheres, the information can be recovered from the rest of

the logical qubit. Developing quantum error-correcting systems requires

verifying that the GME states used in logical qubits are present and working

as intended, ideally as quickly and efficiently as possible.
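
The redundancy idea can be illustrated with a classical analogy: a three-bit repetition code decoded by majority vote. This is only a sketch of the principle. Real quantum error correction cannot copy qubits and relies on entanglement and syndrome measurements instead, and the function names below are made up for this example.

```python
def encode(bit):
    """Store one logical bit redundantly in three physical bits."""
    return [bit, bit, bit]


def apply_bit_flip(codeword, index):
    """Simulate one physical bit being corrupted by noise."""
    damaged = list(codeword)
    damaged[index] ^= 1
    return damaged


def decode(codeword):
    """Recover the logical bit by majority vote over the physical bits."""
    return 1 if sum(codeword) >= 2 else 0
```

Any single flipped bit is outvoted by the other two, so the logical value survives. Two flips in the same codeword defeat the scheme, which is one reason practical codes use many more physical qubits per logical qubit.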

DeepMind says reinforcement learning is ‘enough’ to reach general AI

Some scientists believe that assembling multiple narrow AI modules will

produce more intelligent systems. For example, you can have a software

system that coordinates between separate computer vision, voice processing,

NLP, and motor control modules to solve complicated problems that require a

multitude of skills. A different approach to creating AI, proposed by the

DeepMind researchers, is to recreate the simple yet effective rule that has

given rise to natural intelligence. “[We] consider an alternative hypothesis:

that the generic objective of maximising reward is enough to drive behaviour

that exhibits most if not all abilities that are studied in natural and

artificial intelligence,” the researchers write. This is basically how nature

works. As far as science is concerned, there has been no top-down intelligent

design in the complex organisms that we see around us. Billions of years of

natural selection and random variation have filtered lifeforms for their

fitness to survive and reproduce. Living beings that were better equipped to

handle the challenges and situations in their environments managed to survive

and reproduce. The rest were eliminated.
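
As a toy illustration of "maximising reward" and nothing more, the sketch below runs an epsilon-greedy agent on a multi-armed bandit. The payout probabilities, step count, and exploration rate are arbitrary assumptions, and this is a standard textbook setup, not the method from the DeepMind paper.

```python
import random


def run_bandit(true_means, steps=2000, epsilon=0.1, seed=0):
    """Epsilon-greedy agent: mostly pick the best-looking arm, sometimes explore."""
    rng = random.Random(seed)
    counts = [0] * len(true_means)       # how often each arm was pulled
    estimates = [0.0] * len(true_means)  # running average reward per arm
    total_reward = 0
    for _ in range(steps):
        if rng.random() < epsilon:
            arm = rng.randrange(len(true_means))  # explore a random arm
        else:
            # exploit the arm with the highest estimated reward so far
            arm = max(range(len(true_means)), key=lambda a: estimates[a])
        # Each arm pays 1 with its (hidden) probability, otherwise 0.
        reward = 1 if rng.random() < true_means[arm] else 0
        counts[arm] += 1
        # Incremental update of the running mean for the chosen arm.
        estimates[arm] += (reward - estimates[arm]) / counts[arm]
        total_reward += reward
    return counts, estimates, total_reward
```

The agent is never told which arm is best. Calling `run_bandit([0.2, 0.5, 0.8])` returns the pull counts, the learned estimates, and the total reward, and any preference for an arm comes only from the rewards the agent has observed.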

Evaluation of Cloud Native Message Queues

The significant rise in internet-connected devices will consequently have a

substantial influence on systems’ network traffic, and current point-to-point

technologies using synchronous communication between end-points in IoT-systems

are no longer a sustainable solution. Message queue architectures using

the publish-subscribe paradigm are widely implemented in event-based systems.

This paradigm uses asynchronous communication between entities and suits

scalable, high-throughput, low-latency systems that are well adapted

to the IoT-domain. This thesis evaluates the adaptability of three popular

message queue systems in Kubernetes. The systems are designed differently:

for example, Kafka uses a peer-to-peer architecture, while STAN

and RabbitMQ use a master-slave architecture built on the Raft consensus

algorithm. A thorough analysis of the systems’ capabilities in terms of

scalability, performance, and overhead is presented. The conducted tests give

further knowledge on how the performance of the Kafka system is affected in

multi-broker clusters using multiple partitions, enabling higher

levels of parallelism for the system.
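
The publish-subscribe and partitioning ideas above can be sketched with a small in-memory model. The `Topic` class, the device keys, and the CRC32-based partition choice are assumptions for illustration; real brokers such as Kafka use their own hashing, persistence, and replication, none of which is modeled here.

```python
import zlib


class Topic:
    """Toy in-memory topic split into append-only partitions."""

    def __init__(self, name, partitions=3):
        self.name = name
        self.partitions = [[] for _ in range(partitions)]

    def publish(self, key, value):
        """Append a message; the same key always maps to the same partition."""
        index = zlib.crc32(key.encode("utf-8")) % len(self.partitions)
        self.partitions[index].append((key, value))
        return index

    def consume(self, partition, offset=0):
        """Read messages from one partition, starting at the given offset."""
        return self.partitions[partition][offset:]
```

The publisher returns as soon as the message is appended and never waits for a reader, which is the asynchronous decoupling the paradigm relies on. Because each partition can be read independently by a separate consumer, adding partitions raises the achievable parallelism while messages that share a key stay in order.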

Mysterious Custom Malware Collects Billions of Stolen Data Points

Researchers have uncovered a 1.2-terabyte database of stolen data, lifted from

3.2 million Windows-based computers over the course of two years by an

unknown, custom malware. The heisted info includes 6.6 million files, 26

million credentials, and 2 billion web login cookies – with 400 million of the

latter still valid at the time of the database’s discovery. According to

researchers at NordLocker, the culprit is a stealthy, unnamed malware that

spread via trojanized Adobe Photoshop versions, pirated games and Windows

cracking tools, between 2018 and 2020. It’s unlikely that the operators had

any depth of skill to pull off their data-harvesting campaign, they added.

“The truth is, anyone can get their hands on custom malware. It’s cheap,

customizable, and can be found all over the web,” the firm said in a Wednesday

posting. “Dark Web ads for these viruses uncover even more truth about this

market. For instance, anyone can get their own custom malware and even lessons

on how to use the stolen data for as little as $100. And custom does mean

custom – advertisers promise that they can build a virus to attack virtually

any app the buyer needs.”

Get your technology infrastructure ready for the Age of Uncertainty

As I say, it’s by no means clear what happens next and how ingrained changes

will be. It’s plausible, of course, that we largely go back to old habits

although that seems unlikely with a groundswell of employees having become

accustomed to a different way of life and a different way of working. And it’s

worth noting that even the signs of a return to ancient ways of living, in the

form of crafts, baking and so on, are now very much digitally imbued

activities. We download apps, consult websites and share ideas on forums when

we try out a new recipe, and this sort of binary activity is part of the

fabric of life because it is faster, more convenient and more scalable than

the older alternatives. But what we need to do is strike the perfect balance

between technology-enabled agility and what we want to do with our time. What

we will need to manage through change is clear though. Adaptivity, enabled by

robust, data-centric digital business designs, will become the watchword of

operations. In other words, companies will need to be able to move fast,

whatever happens, changing operating models, moving into adjacent markets and

generally taking nothing for granted. In the new Age of Uncertainty, legacy

systems have to be reassessed in the context of how best to build for

agility.

Quote for the day:

"To have long term success as a coach or in any position of leadership, you have to be obsessed in some way." -- Pat Riley