The Merging Of Human And Machine. Two Frontiers Of Emerging Technologies

The field of human and biological applications has many uses in

medical science. This includes precision medicine, genome sequencing and gene

editing (CRISPR), cellular implants, and wearables that can be implanted in

the human body. The medical community is experimenting with delivering

nano-scale drugs (including antibiotic “smart bombs” to target specific

strains of bacteria). Soon they will be able to implant devices such as bionic

eyes and bionic kidneys, or artificially grown and regenerated human organs.

Succinctly, we are on the cusp of significantly upgrading the human ecosystem.

It is indeed revolutionary. This revolution will expand exponentially in the

next few years. We will see the merging of artificial circuitries with

signatures of our biological intelligence, retrieved in the form of electric,

magnetic, and mechanical transductions. Retrieving these signatures will be

like taking pieces of cells (including our tissue-resident stem cells) in the

form of “code” for their healthy, diseased or healing states, or a code for

their ability to differentiate into all the mature cells of our body. This

process will represent an unprecedented form of taking a glimpse of human

identity.

Five ‘New Normal’ Imperatives for Retail Banking After COVID-19

The current financial crisis highlights an already trending need for

responsible, community-minded banking. How financial institutions respond to

the COVID-19 crisis, and the actions they take as the economy begins to right

itself, will influence their reputations in the long term. Personalized

service and community-mindedness have never been more important. The approach

to providing them, however, will often be different from the past.

Data-powered audience segmentation can help banks and credit unions

proactively anticipate the needs of their customers, then offer services and

solutions to solve them. Voice-of-consumer and social listening tools can help

financial institutions understand and monitor their brand perception. It’s

important to develop a process and allocate resources to engage with consumers

in the digital space. For example, when complaints or concerns are raised on

social media or other channels, they should be triaged quickly and

effectively. If this capability is something you have previously put off

developing, it’s time to re-prioritize. According to EY’s Future Consumer

Index, only 17% of consumers surveyed said they trusted their financial

institutions in a time of crisis.

The 7 Benefits of DataOps You’ve Never Heard

The convergence of point-solution products into end-to-end platforms has

made modern DataOps possible. Agile software that manages, governs, curates,

and provisions data across the entire supply chain enables efficiencies,

detailed lineage, collaboration, and data virtualization, to name a few

benefits. While many point-solutions will continue, success today comes from

having a layer of abstraction that connects and optimizes every stage of the

data lifecycle, across vendors and clouds, in order to streamline and

protect the full ecosystem. As machine-learning and AI applications expand,

the successful outcome of these initiatives depends on expert data curation,

which involves the preparation of the data, automated controls to reduce the

risks inherent in data analysis, and collaborative access to as much

information as possible. Data collaboration, like other types of

collaboration, fosters better insights and new ideas, and overcomes analytic

hurdles. While often considered a downstream discipline, providing

collaboration features across data discovery, augmented data management, and

provisioning results in better AI/ML outcomes. In our COVID-19 age,

collaboration has become even more important, and the best of today’s

DataOps platforms offer benefits that break down the barriers of remote

work, departmental divisions, and competing business goals.

Generation Data: the future of cloud era leaders

What’s more, with most organisations adopting multiple cloud environments,

data is more fragmented than ever before. As such, businesses are looking to

data governance specialists (not just data scientists, but data engineers

too) to ensure that there is a catalogue of where the data resides, across

the different landscapes, so that it’s well secured and well governed. It’s

important to have people who can spot risks associated with where data is –

or, in some cases, isn’t – stored, whilst deploying artificial intelligence

(AI) to adopt new roles to secure it within the cloud environments. Cloud

specialists can take on several different job titles within the business and

at some organisations, a single data leader like the CDO must seamlessly

shift between multiple roles in order to achieve success. Meanwhile at

others, a team of data leaders each having a specialised role under a

unified data strategy is a model for success. What’s clear is that as data

becomes part of everyone’s working lives, to ensure we’re not short on

talent, businesses need to engage with a wide range of individuals such as

citizen integrators and citizen analysts to upskill within existing roles

and to truly democratise data. This means equipping existing and future

employees with the skills needed to garner insights from existing data sets.

Standing Out with Invisible Payments: The Banking-as-a-Service Paradox

Industry players, such as regulators, FinTech partners, and businesses in the banking, financial services and insurance industries, are starting to realise that it is not ideal to be a ‘jack-of-all-trades’. In fact, the core of BaaS is built upon strategic collaboration. As such, financial players should be more accepting of their own strengths and weaknesses so they can better identify what they are good at and what they need help with. Essentially, financial players need to ‘piggyback’ on either big banks or other financial service partners with a strong regulatory license network. Furthermore, if they can identify a market that is underpenetrated, this is a good opportunity to work with existing players to fill the gap. For instance, the recent partnership between InstaReM and SBM Bank India allows users to remit money to more markets and send funds overseas in real time. With SBM Bank India as its licensed banking partner, InstaReM will facilitate international money transfers from India to an expanded list of markets, including new destinations such as Malaysia and Hong Kong. In another example, Nium’s partnership with Geoswift, an innovative payment technology company, will enable overseas customers to remit money into China.

How state and local governments can better combat cyberattacks

Hit by ransomware and other attacks, state and local governments are

obviously aware of the need for strong cybersecurity. And they have taken

certain measures to beef up security. Many local governments have hired top

cybersecurity people and created more effective teams. The recent

Cyberspace Solarium Commission stressed the need for

better security coordination among local governments, the federal

government, and the private sector. The State and Local Government

Cybersecurity Act of 2019, passed last year, is designed to foster

greater collaboration among the different parties. But government agencies

are not all alike, especially on a local vs. state level. Differences exist

in funding and preparedness. Security policies can vary from one agency to

another. Plus, the effort to digitize systems and services at such a rapid

pace means that security sometimes gets left behind. Looking at open-source

data on 108 cyberattacks on state and local municipalities from 2017 to late

2019, BlueVoyant found that the number rose by almost 50%. Over the same

time, ransomware demands surged from a low of $30,000 a month to as high as

almost $500,000 in July 2019, according to the report.

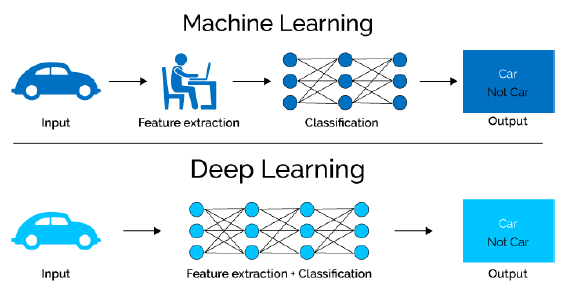

How AI can enhance your approach to DevOps

Companies can resort to AI data mapping techniques to accelerate data

transformation processes. At the same time, machine learning (ML) used in

data mapping will also automate data integrations, allowing businesses to

extract business intelligence and drive important business decisions

quickly. Taking it a step further, organizations can push for

AI/ML-powered DevOps for self-healing and self-managing processes,

preventing abrupt disruptions and script breaks. Besides that, organizations

can opt for AI to recommend solutions to write more efficient and resilient

code, based on the analysis of past application builds and performance. The

ability of AI and ML to scan through troves of data with higher precision

will play an essential role in delivering better security. Through a

centralized logging architecture, employees can detect and highlight any

suspicious activities on the network. With the help of AI, organizations can

track and learn of the hacker’s motive in trying to breach a system. This

capability will help DevOps teams to navigate through existing threats and

mitigate the impact. Communication is also a vital component in DevOps

strategy, but it’s often one of the biggest challenges when organizations

move to the methodology, because so much information is flowing through the

system.
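
The centralized-logging idea above can be illustrated with a small sketch. This is not an AI/ML model: it is a simple median-based heuristic standing in for one, and the log line format (`timestamp EVENT source`), the `FAILED_LOGIN` event name, and the `factor` cutoff are all assumptions made for the example, not details from the article.

```python
from collections import Counter
from statistics import median


def failed_logins_by_source(log_lines):
    """Count FAILED_LOGIN events per source address.

    Assumes a hypothetical three-field format: "<timestamp> <EVENT> <source>".
    Lines that do not fit that shape are ignored.
    """
    counts = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) == 3 and parts[1] == "FAILED_LOGIN":
            counts[parts[2]] += 1
    return counts


def suspicious_sources(counts, factor=5.0):
    """Return sources whose failure count exceeds factor x the median count."""
    if not counts:
        return []
    baseline = median(counts.values())
    return sorted(src for src, n in counts.items() if n > factor * baseline)


if __name__ == "__main__":
    logs = (
        ["2020-06-01T10:00:00 FAILED_LOGIN 10.0.0.1"] * 2
        + ["2020-06-01T10:05:00 FAILED_LOGIN 10.0.0.2"] * 3
        + ["2020-06-01T10:10:00 FAILED_LOGIN 10.0.0.9"] * 40
        + ["2020-06-01T10:15:00 LOGIN_OK 10.0.0.1"]
    )
    print(suspicious_sources(failed_logins_by_source(logs)))
```

In practice a team would likely replace the fixed cutoff with a learned baseline, but the shape is the same: collect events in one place, summarize per source, and surface the outliers for a person to review.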

Shifting the mindset from cloud-first to cloud-best using hybrid IT

Those who are approaching the cloud for the first time face the classic

question around which type of service to choose, public or private. Both

have different use-cases and can be critical for businesses in achieving

their objectives. For instance, the public cloud is agile, scalable and

simple to use, great for teams looking to get up and running quickly.

However, the private cloud offers its own benefits, chiefly a greater degree

of control over data and performance. As organisations hosting their data in

a private cloud are in full control of that data, there’s typically a more

consistent security posture and a greater degree of flexibility and control

over how that data is used and managed. Moreover, the private cloud can

typically deliver faster, higher-throughput environments for those

mission-critical applications that cannot run in the public cloud without

business-impacting performance issues. However, companies risk getting

caught in the cloud divide, feeling as though public cloud is not

appropriate for their enterprise applications, or that on-prem enterprise

infrastructure isn’t as user-friendly, simple or scalable as the public

cloud. Ultimately organisations should be able to make infrastructure

choices based on what’s best for their business, not constrained by what the

technology can do or where it lives.

5 Critical IT Roles for Rapid Digital Transformation

Information security leaders are the individuals who protect the information

and activity of an organization. These professionals lead the charge in

establishing the appropriate security standards and implementing the best

policies and procedures needed to prevent security breaches and confiscated

data. As more information and activity happen within the cloud

infrastructure during a transformation, security has to be a top priority.

Given the current situation, the rise of online activity has led to an

increase in cyber-attacks. With digital transformation, businesses need

assurance that their technologies are adequately protected. An InfoSec

leader will help quarterback the security game plan as well as monitor for

abnormal activity and handle the recovery should any issues arise. Since

data analysts are there to retrieve, gather and analyze data, they hold an

important role in the digital transformation journey. Technology opens the

doors to a world of data that must be uncovered and understood to deliver

any real value. The insights data analysts can provide allow organizations

to take a data-driven approach to the decision-making process. Since there

is a lot of uncertainty in the current business climate, data analysts are a

huge asset.

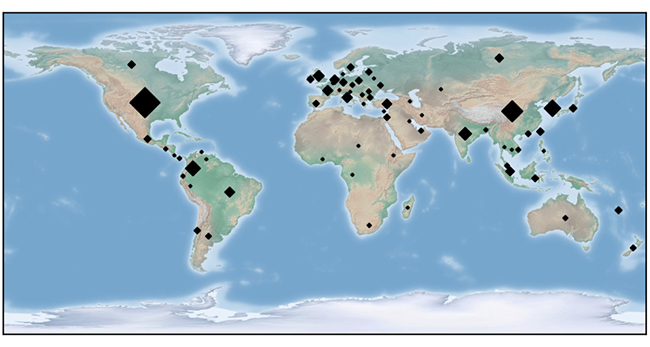

Facing gender bias in facial recognition technology

Our team performed two separate tests – the first using Amazon Rekognition and the

second using Dlib. Unfortunately, with Amazon Rekognition we were unable to

unpack just how their ML modeling and algorithm works due to transparency

issues (although we assume it’s similar to Dlib). Dlib is a different story,

and uses local resources to identify faces provided to it. It comes pretrained

to identify the location of a face, and with two face location finders: HOG, a

slower CPU-based algorithm, and CNN, a faster algorithm making use of

specialized processors found in graphics cards. Both services provide match

results with additional information. Besides the match found, a similarity

score is given that shows how closely a face matches the known face. If

the face on file doesn’t exist, a similarity threshold set too low may incorrectly

match a face. However, a face can have a low similarity score and still match

when the image doesn’t show the face clearly. For the data set, we used a

database of faces called Labeled Faces in the Wild, and we only investigated

faces that matched another face in the database. This allowed us to test

matching faces and similarity scores at the same time. Amazon Rekognition

correctly identified all pictures we provided.
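
The threshold effect described above can be sketched in a few lines. This is an illustration only, not the Dlib or Amazon Rekognition API: the embeddings are made-up two-dimensional vectors, and the `similarity` mapping and `best_match` helper are hypothetical names introduced for the example.

```python
import math


def euclidean_distance(a, b):
    """Straight-line distance between two equal-length embedding vectors."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same length")
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def similarity(a, b):
    """Map distance into (0, 1]; 1.0 means the embeddings are identical."""
    return 1.0 / (1.0 + euclidean_distance(a, b))


def best_match(probe, known_faces, threshold):
    """Return (name, score) of the closest known face, or (None, score)
    when even the closest face scores below the threshold."""
    best_name, best_score = None, 0.0
    for name, embedding in known_faces.items():
        score = similarity(probe, embedding)
        if score > best_score:
            best_name, best_score = name, score
    if best_score >= threshold:
        return best_name, best_score
    return None, best_score


if __name__ == "__main__":
    # Hypothetical toy embeddings; real face embeddings have many more dimensions.
    known = {"alice": [0.0, 0.0], "bob": [3.0, 4.0]}
    stranger = [10.0, 10.0]  # a face that is not on file
    print(best_match(stranger, known, threshold=0.05))  # permissive threshold
    print(best_match(stranger, known, threshold=0.5))   # stricter threshold
```

With the permissive threshold the stranger is matched to the nearest known face even though no true match exists; with the stricter one the lookup returns no match. That is the failure mode the paragraph above describes.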

Quote for the day:

"Hold yourself responsible for a higher standard than anybody expects of you. Never excuse yourself." -- Henry Ward Beecher