There is a crisis of face recognition and policing in the US

When Jennifer Strong and I started reporting on the use of face recognition

technology by police for our new podcast, “In Machines We Trust,” we knew

these AI-powered systems were being adopted by cops all over the US and in

other countries. But we had no idea how much was going on out of the public

eye. For starters, we don’t know how often police departments in the US

use facial recognition for the simple reason that in most jurisdictions, they

don’t have to report when they use it to identify a suspect in a crime. The

most recent numbers are speculative and from 2016, but they suggest that at

the time, at least half of Americans had photos in a face recognition system.

One county in Florida ran 8,000 searches each month. We also don’t know which

police departments have facial recognition technology, because it’s common for

police to obscure their procurement process. There is evidence, for example,

that many departments buy their technology using federal grants or nonprofit

gifts, which are exempt from certain disclosure laws. In other cases,

companies offer police trial periods for their software that allow officers to

use systems without any official approval or oversight.

Outlook “mail issues” phishing – don’t fall for this scam!

Only if you were to dig into the email headers would it be obvious that this

message actually arrived from outside and was not generated automatically by

your own email system at all. The clickable link is perfectly believable,

because the part we’ve redacted above (between the text https://portal and the

trailing /owa, short for Outlook Web App) will be your company’s own domain

name. But even though the blue text of the link itself looks like a URL, it

isn’t actually the URL that you will visit if you click it. Remember that a

link in a web page consists of two parts: first, the text that is highlighted,

usually in blue, which is clickable; second, the destination, or HREF (short

for hypertext reference), where you actually go if you click the blue text.

... One tricky problem for phishing crooks is what to do at the end, so you

don't belatedly realise it's a scam and rush off to change your password (or

cancel your credit card, or whatever it might be). In theory, they could try

using the credentials you just typed in to log in for you and then dump you

into your real account, but there's a lot that could go wrong. The crooks

almost certainly will test out your newly-phished password pretty soon, but

probably not right away while you are paying attention and might spot any

anomalies that their attempted login might cause.
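
The gap between a link's visible text and its HREF, described above, can be checked mechanically. The following is a minimal sketch, assuming the message body is available as HTML; the class name `LinkAuditor`, the helper `suspicious_links`, and the example domains are hypothetical and not taken from the original article. It only flags links whose visible text looks like a URL but points at a different host, so it is an illustration rather than a complete phishing filter.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class LinkAuditor(HTMLParser):
    """Collects (visible text, href) pairs for every <a> element."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._href = None   # href of the <a> currently open, if any
        self._text = []     # text fragments seen inside that <a>

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href") or ""
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.links.append(("".join(self._text).strip(), self._href))
            self._href = None


def host_of(url):
    """Return the lower-cased hostname of a URL, or '' if there is none."""
    return (urlparse(url).hostname or "").lower()


def suspicious_links(html):
    """Return links whose visible text is a URL on a different host than the HREF."""
    auditor = LinkAuditor()
    auditor.feed(html)
    flagged = []
    for text, href in auditor.links:
        # Only compare when the visible text itself looks like a URL.
        if text.lower().startswith(("http://", "https://")):
            if host_of(text) != host_of(href):
                flagged.append((text, href))
    return flagged


# Hypothetical sample: the blue text shows one host, the HREF goes to another.
SAMPLE = (
    '<p>Fix your mail: '
    '<a href="https://login.attacker.example/owa">'
    'https://portal.example.com/owa</a></p>'
)
```

Calling `suspicious_links(SAMPLE)` returns the one mismatched pair, while a link whose text and HREF share a host is not flagged.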

Taking on the perfect storm in cybersecurity

The future of cybersecurity depends on a platform approach. This will allow

your cybersecurity teams to focus on security rather than continue to

integrate solutions from many different vendors. It allows you to keep up with

digital transformation and, along the way, battle the perfect storm. Our

network perimeters are typically well-protected, and organizations have the

tools and technologies in place to identify threats and react to them in

real-time within their network environments. The cloud, however, is a

completely different story. There is no established model for cloud security.

The good news is that there is no big deployment of legacy security solutions

in the cloud. This means organizations have a chance to get it right this

time. We can also fix how to access the cloud and manage security operations

centers (SOCs) to maximize ML and AI for prevention, detection, response and

recovery. Cloud security, cloud access and next-generation SOCs are

interrelated. Individually and together, they present an opportunity to

modernize cybersecurity. If we build the right foundation today, we can break

the pattern of too many disparate tools and create a path to consuming

cybersecurity innovations and solutions more easily in the future.

FBI and CISA warn of major wave of vishing attacks targeting teleworkers

Collected information included: name, home address, personal cell/phone

number, the position at the company, and duration at the company, according to

the two agencies. The attackers then called employees using random

Voice-over-IP (VoIP) phone numbers or by spoofing the phone numbers of other

company employees. "The actors used social engineering techniques and, in some

cases, posed as members of the victim company's IT help desk, using their

knowledge of the employee's personally identifiable information—including

name, position, duration at company, and home address—to gain the trust of the

targeted employee," the joint alert reads. "The actors then convinced the

targeted employee that a new VPN link would be sent and required their login,

including any 2FA or OTP." When the victim accessed the link to the phishing

site the hackers had created, the cybercriminals logged the credentials and used

them in real time to gain access to the corporate account, even bypassing

2FA/OTP limits with the help of the employee. "The actors then used the

employee access to conduct further research on victims, and/or to fraudulently

obtain funds using varying methods dependent on the platform being accessed,"

the FBI and CISA said.

Why you need to revisit your IT policies

Part of that proactive planning should be adjustments to your IT policies.

These documents are often forgotten until they're most needed, and the recent

rushed transition from office work to remote work likely highlighted this

condition. In the rushed transition, imagine how helpful it would have been to

have some basic policy guidance on what equipment is supported for remote

work, what items are reimbursable and where they can be sourced, and which

software was recommended. If nothing else, some simple policies and guidance

around these topics probably would have saved your already-stretched support

staff dozens of phone calls and emails. ... At their best, policies provide

guidance based on organizational priorities and experience, and at their

worst, they are an extensive list of "Thou Shalt Nots" that assume your

colleagues are nefarious scallywags one step away from destroying the

organization should you not be there to preempt each of their misguided

notions. Many employees dislike policy documents since they bias toward the

latter, and unsurprisingly when you treat your colleagues like children and

scoundrels, they'll rise to the occasion.

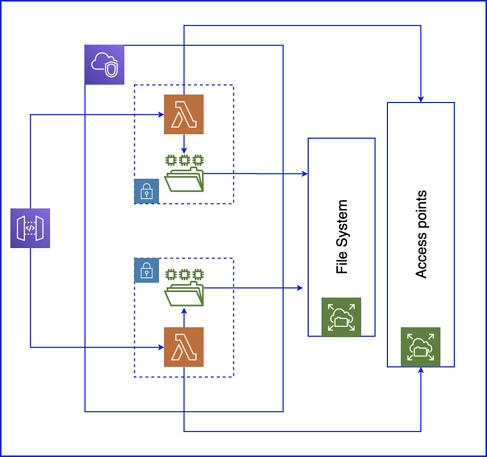

Styles, protocols and methods of microservices communication

For those who choose to stick with asynchronous protocols, consider exploring

the advanced message queuing protocol (AMQP). This widely available and mature

protocol provides a standard method for microservices communication and should

be a priority for those developing truly composite microservices apps.

Asynchronous protocols like AMQP use a lightweight service bus similar to a

service-oriented architecture (SOA) bus, though much less complex. Unlike

HTTP, this bus provides a message broker that acts as an intermediary between

the individual microservices, thus avoiding the problems associated with a

brokerless approach. Keep in mind, however, that a message broker will

introduce extra steps that can add latency. The individual services still

contain their functional and operational logic, and will need time to process

that logic. The bus simply helps standardize and throttle those

communications. Major cloud platforms, such as Azure, provide their own

proprietary service bus for message brokering. However, there are also

third-party options such as RabbitMQ, an open source message broker written in

the Erlang programming language.
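
To make the broker's role concrete, here is a minimal sketch of broker-mediated, asynchronous communication between two services. It is not AMQP and does not use RabbitMQ or Azure Service Bus: `ToyBroker` is a hypothetical in-process stand-in built on Python's standard `queue` module, and the service names and message shapes are assumptions for illustration. The point it shows is that the producer and consumer never call each other directly; each talks only to the intermediary.

```python
import queue
import threading


class ToyBroker:
    """In-process stand-in for a message broker: named FIFO queues, nothing more."""

    def __init__(self):
        self._queues = {}
        self._lock = threading.Lock()

    def _queue(self, name):
        # Create the named queue on first use; the lock keeps this thread-safe.
        with self._lock:
            return self._queues.setdefault(name, queue.Queue())

    def publish(self, name, message):
        self._queue(name).put(message)

    def consume(self, name, timeout=5.0):
        # Blocks until a message arrives; raises queue.Empty after the timeout.
        return self._queue(name).get(timeout=timeout)


def order_service(broker, order_ids):
    """Producer: hands each message to the broker and moves on."""
    for order_id in order_ids:
        broker.publish("orders", {"order_id": order_id})
    broker.publish("orders", None)  # sentinel meaning "no more work"


def billing_service(broker, invoices):
    """Consumer: pulls messages from the broker at its own pace."""
    while True:
        message = broker.consume("orders")
        if message is None:
            break
        invoices.append(f"invoice-for-{message['order_id']}")


def run_demo():
    broker = ToyBroker()
    invoices = []
    consumer = threading.Thread(target=billing_service, args=(broker, invoices))
    consumer.start()
    order_service(broker, [1, 2, 3])
    consumer.join()
    return invoices
```

In this sketch `run_demo()` returns one invoice string per published order, in publication order. A real broker adds what the toy omits, such as persistence, acknowledgements, and routing, and the extra hop through it is where the added latency mentioned above comes from.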

Edge computing: 4 problems it helps solve for enterprises

Enterprises in the construction, manufacturing, mining, and oil and gas

industries, for example, are embracing the edge, which enables them to run the

core elements of any solution locally by empowering local devices to save

their state, interact with each other, and send important alerts and

notifications. “This means that even if the internet goes down at the factory,

warehouse, construction site, mine, or field, edge processing continues to

work full steam ahead,” Allsbrook says. ... Edge computing can minimize the

network and bandwidth issues associated with moving large amounts of data to

or from IoT devices and reduce reliance on the network. Companies look to edge

solutions that can process data at the source and provide summary information

on what’s going on. This eliminates the expensive SIM cards, data

plans, and other network costs that would be incurred if the data had to be transported from

the device to a network. “Edges can use simple ‘if-then’ logic or advanced AI

algorithms to understand and build those summary reports,” explains Allsbrook

of ClearBlade.
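
The simple "if-then" summarization described above might look something like the following minimal sketch. The function name `summarize_readings`, the threshold parameter, and the sample temperatures are hypothetical and are not taken from ClearBlade's product; the idea is only that the edge device sends a handful of summary values and alerts upstream rather than every raw reading.

```python
from statistics import mean


def summarize_readings(readings, alert_threshold):
    """Reduce raw sensor readings to a small summary plus any alerts."""
    if not readings:
        return {"count": 0, "alerts": []}
    # The "if-then" rule: any reading above the threshold becomes an alert.
    alerts = [r for r in readings if r > alert_threshold]
    return {
        "count": len(readings),
        "min": min(readings),
        "max": max(readings),
        "mean": round(mean(readings), 2),
        "alerts": alerts,
    }


# Hypothetical temperature samples collected locally on the device.
SAMPLES = [70.0, 72.0, 95.0, 71.0]
```

With these samples and a threshold of 90.0, `summarize_readings(SAMPLES, 90.0)` yields a five-field summary whose only alert is the 95.0 reading.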

The Great Reset requires FinTechs – and FinTechs require a common approach to cybersecurity

Established financial services providers have a number of frameworks,

standards and industry-driven initiatives available to test the security of

FinTechs and other third parties. However, the volume of industry initiatives

– driven by the pace of technological change and the multiplication of

regulations – is now creating “noise”. This makes it difficult for FinTechs to

direct their resources in a way that allows for security while also

facilitating commercial partnerships. Requirements placed on FinTechs sow

confusion, increase costs and may incentivise “security through obscurity”, in

which less well-resourced firms play a game of chance, betting that they’re

too small to be targeted by attackers and setting themselves up for problems

in the future. ... The sector needs a mutually understood and widely accepted

base level of cybersecurity controls. Clarity at the base level of security

will support effective protection of business and client assets across the

wider supply chain. This can accelerate the speed at which FinTechs can come

to market and create commercial partnerships – and, in turn, incentivise good

cyber hygiene.

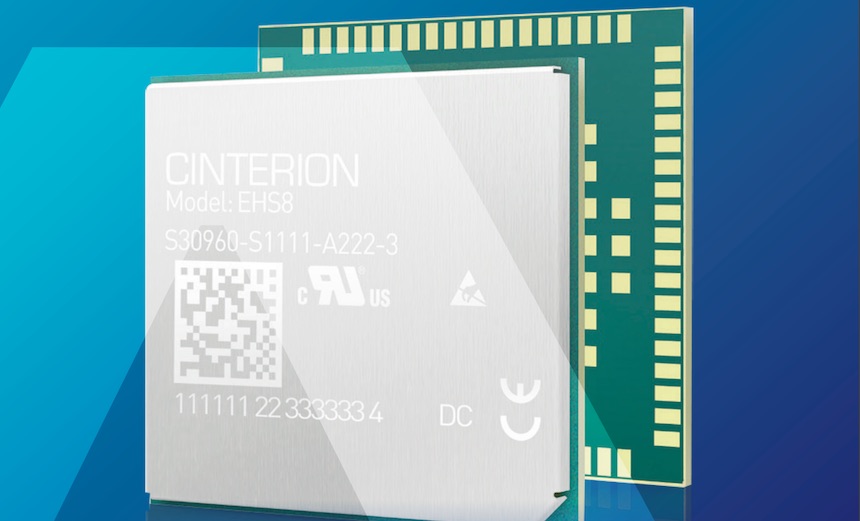

IBM Finds Flaw in Millions of Thales Wireless IoT Modules

The modules, which IBM describes as mini circuit boards, enable 3G or 4G

connectivity, but also store secrets such as passwords, credentials and code,

according to Adam Laurie, X-Force Red's lead hardware hacker, and Grzegorz

Wypych, senior security consultant, who wrote a blog post. "This vulnerability

could enable attackers to compromise millions of devices and access the networks

or VPNs supporting those devices by pivoting onto the provider's backend

network," Laurie and Wypych write. "In turn, intellectual property, credentials,

passwords and encryption keys could all be readily available to an attacker." In

a statement, Thales says "it takes the security of its products very seriously

and therefore has, after communicating and discussing this issue with affected

customers, delivered software fixes in Q1/2020." The modules run microprocessors

with an embedded Java ME interpreter and use flash storage. Also, there are Java

"midlets" that allow for customization. One of those midlets copies custom Java

code added by an OEM to a secure part of the flash memory, which should only be

in write mode so that code can be written there but not read back.

How to manage unstructured data using an ECM system

Structured data is information governed by a database structure, organized into

defined fields, usually within the context of a relational database. The

database structure requires that data in the fields follow a prescribed format.

For example, a date must have the format of a date and a name must be limited in

length. The most common place that people encounter structured data is in the

cells of a spreadsheet. Structured data has many applications within businesses

and is easy to search. It is found in finance, customer relationship management,

supply chain and other applications where compliance to structures is keyed to

business tasks. Unstructured data, on the other hand, is data without rules

and is not as searchable. Users who create unstructured data are writing

free-form, rather than complying with structured data fields. There is minimal

enforcement of any rules on the length of content, the format of the content or

what content goes where. Despite the lack of formal structure, unstructured

information -- which users create in word processing programs, spreadsheets,

presentation files, PDFs, social media feeds, and audio and video files -- forms

the bulk of the data created in an organization.
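
As a rough illustration of what "following a prescribed format" means for structured data, the sketch below validates a record against two rules like the ones mentioned above: a date must parse as a date and a name must be limited in length. The field names, the 50-character limit, and the ISO date format are assumptions made for the example, not rules from the original article.

```python
from datetime import datetime

MAX_NAME_LENGTH = 50        # assumed limit, for illustration only
DATE_FORMAT = "%Y-%m-%d"    # assumed format, for illustration only


def validate_record(record):
    """Return the names of fields that break the structure's rules."""
    errors = []

    name = record.get("name", "")
    if not name or len(name) > MAX_NAME_LENGTH:
        errors.append("name")

    try:
        datetime.strptime(record.get("order_date", ""), DATE_FORMAT)
    except ValueError:
        errors.append("order_date")

    return errors
```

A record such as `{"name": "Ada", "order_date": "2020-08-24"}` passes with no errors, while free-form input like `"24 August"` in the date field is rejected. Unstructured content has no equivalent check, which is part of why it is harder to search.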

Quote for the day:

"When you expect the best from people, you will often see more in them than they see in themselves." -- Mark Miller