Build up a DevSecOps pipeline for fast and safe code delivery

Developers in need of a feature -- or simply in a rush -- might pull random Docker images that contain vulnerabilities from public internet repositories. Developers should always treat public container registries with extreme caution. Registry platforms, such as Harbor or even a self-hosted private local registry for the company, offer tighter control over what users deploy within an environment, and also streamline versioning and management to ensure DevSecOps doesn't impede code velocity. Docker Hub also offers certified images, but always exercise vigilance to minimize risk. Other tools ensure code builds don't ship with known vulnerabilities. For example, to prevent the release of software with vulnerable libraries, the auditing tool Open Security Content Automation Protocol (OpenSCAP) scans systems in the delivery pipeline and checks them against the Common Vulnerabilities and Exposures (CVE) library. There are several CVE feeds, both free and paid, that OpenSCAP can use.
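
The idea of restricting pulls to a controlled registry can be sketched as a small pre-deployment check. This is a minimal illustration, not part of the original article: the registry host names and the `registry_of` and `is_allowed` helpers are hypothetical, and the host detection follows the usual Docker convention that a first path component containing `.` or `:` (or equal to `localhost`) names a registry, while anything else resolves to Docker Hub.

```python
# Hypothetical allowlist of trusted registry hosts; replace with your own.
ALLOWED_REGISTRIES = {"harbor.example.internal", "registry.example.internal"}


def registry_of(image_ref: str) -> str:
    """Return the registry host of an image reference.

    The first path component is treated as a registry host only if it
    contains '.' or ':' or equals 'localhost'; otherwise the image is
    assumed to come from Docker Hub (docker.io).
    """
    first, sep, _rest = image_ref.partition("/")
    if sep and ("." in first or ":" in first or first == "localhost"):
        return first
    return "docker.io"


def is_allowed(image_ref: str, allowed=ALLOWED_REGISTRIES) -> bool:
    """True if the image would be pulled from an allowlisted registry."""
    return registry_of(image_ref) in allowed


if __name__ == "__main__":
    refs = ["harbor.example.internal/team/api:1.4", "nginx:latest", "someuser/tool"]
    for ref in refs:
        print(ref, "->", "ok" if is_allowed(ref) else "blocked")
```

A check like this could run as an early pipeline step so that a build fails before an unvetted public image is ever pulled. Vulnerability scanning of the images themselves remains a separate step.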

Upgrading from Java 8 to Java 12

Generally speaking, each new release performs better than the previous one. "Better" can take many forms, but in recent releases we've seen improvements in startup time; reduction in memory usage; and the use of specific CPU instructions resulting in code that uses fewer CPU cycles, among other things. Java 9, 10, 11 and 12 all came with significant changes and improvements to Garbage Collection, including changing the default Garbage Collector to G1, improvements to G1, and three new experimental Garbage Collectors (Epsilon and ZGC in Java 11, and Shenandoah in Java 12). Three might seem like overkill, but each collector optimises for different use cases, so now you have a choice of modern garbage collectors, one of which may have the profile that best suits your application. The improvements in recent versions of Java could lead to a cost reduction. Not least, tools like jlink reduce the size of the artifact we deploy, and recent improvements in memory usage could, for example, decrease our cloud computing costs.

Atlassian targets agile development at scale with Jira Align

“There are a lot of benefits [with agile development]: it allows enterprises to be more nimble, to respond quickly to pressure and change to their roadmaps as needed based on customer demands,” he said. “But one thing that we have lost through that transformation is the certainty, the visibility and the clarity across an organization of when deadlines will be hit, and when capabilities will be available for customers. Agile simply just doesn't work that way. And that is a big challenge, especially for these very large organizations that need to be more nimble.” “Our biggest customers were looking for guidance for how to scale all of this agile development goodness across thousands of people,” Deatsch said. “That is actually exactly what AgileCraft does.” As an example, Deatsch said a large bank could be building a new mobile app, an effort that could involve large numbers of developers working on individual, but related, projects, from building a front-end UI to back-end transaction systems.

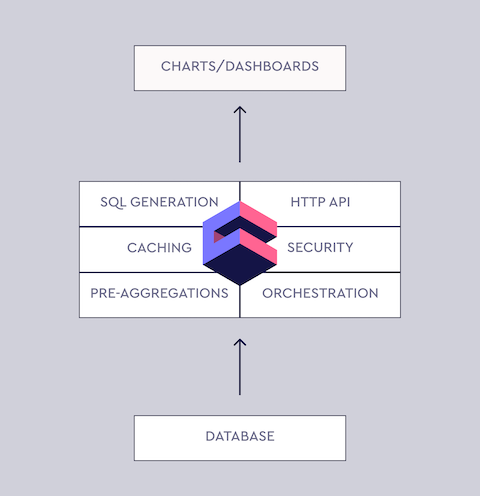

Cube.js: Ultimate Guide to the Open-Source Dashboard Framework

The majority of modern web applications are built as single-page applications, where the front-end is separated from the back-end. The back-end is also usually split into multiple services, following a microservices architecture. Cube.js embraces this approach. Conventionally, you run the Cube.js back-end as a service. It manages the connection to your database, including the query queue, caching, pre-aggregation, and more. It also exposes an API for your front-end app to build dashboards and other analytics features. ... Analytics starts with the data, and data resides in a database. That is the first thing we need to have in place. You most likely already have a database for your application, and usually, it is just fine to use for analytics. Modern popular databases such as Postgres or MySQL are well suited for a simple analytical workload. By simple, I mean a data volume of fewer than 1 billion rows. MongoDB is fine as well; the only thing you’ll need to add is MongoDB Connector for BI. It allows executing SQL code on top of your MongoDB data. It is free and can be easily downloaded from the MongoDB website. One more thing to keep in mind is replication. It is considered bad practice to run analytics queries against your production database, mostly because of the performance issues.

Finance Remains Most Attacked Sector Globally Six of the Past Seven Years

John South of the Threat Intelligence Communication Team, Global Threat Intelligence Center at NTT Security, says: Finance is yet again in the top spot when it comes to targeted attacks, which surely is enough evidence to convince the board that cybersecurity is a must-have investment. Many financial organizations are moving forward with digital transformation but without prioritizing security as a core business requirement. While legacy methods and tools are still effective at providing a solid foundation for mitigation, new attack methods are continually being developed by malicious actors. Security leaders should ensure basic controls remain a primary focus but they must also embrace innovative solutions if they provide a good fit and true value. Mr. Fumitaka Takeuchi, Security Evangelist, Vice President, Managed Security Service Taskforce, Corporate Planning at NTT Communications, says: Many organizations are caught up in simply buying solutions to problems that either don't really exist, or a solution which costs more than the potential loss being prevented.

Why Xamarin

Even after years of building for mobile, developers still heavily debate the choice of technology stack. Perhaps there isn't a silver bullet and mobile strategy really depends - on app, developer expertise, code base maintenance and a variety of other factors. If developers want to write .NET however, Xamarin has essentially democratized cross-platform mobile development with polished tools and service integrations. Why are we then second guessing ourselves? Turns out, mobile development does not happen in silos and all mobile-facing technology platforms have evolved a lot. It always makes sense to look around at what other development is happening around your app - does the chosen stack lend itself to code sharing? Are the tools of the trade welcoming to developers irrespective of their OS platform? Do the underlying pillars of chosen technology inspire confidence? Let's take a deeper look and justify the Xamarin technology stack. Spoiler - you won't be disappointed.

LambdaTest Selenium Testing Tool Tutorial with Examples in 2019

LambdaTest Selenium Grid is a scalable, secure, and reliable cloud-based Selenium grid. It lets you perform automated cross-browser testing across all major browsers and browser versions, both latest and legacy, and across operating systems. It also lets you run multiple Selenium automated tests in parallel, which cuts down your build time. It also provides screenshots from over 2000 mobile and desktop browsers, so you can perform visual cross-browser compatibility testing without testing each browser manually: you get full-page screenshots just by selecting the configurations. ... The thing about Selenium Grid is that it can be expensive to set up additional machines as Nodes, and this is where an Online Selenium Grid (SaaS) can truly shine. They offer various packages from entry-level pricing to enterprise packages. The price for cloud solutions usually scales linearly with the number of tests and the concurrency of tests, which means that you can scale according to your needs and keep the cost under control as well.
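
The effect of running tests in parallel on build time can be estimated with simple arithmetic. The sketch below is only an illustration under a strong assumption: every test takes the same time and the grid always has `concurrency` free sessions. The function name and parameters are hypothetical and do not come from LambdaTest.

```python
import math


def estimated_build_minutes(test_count, minutes_per_test, concurrency):
    """Wall-clock time if equal-length tests run `concurrency` at a time."""
    if concurrency < 1:
        raise ValueError("concurrency must be at least 1")
    # Tests run in batches; a partially filled last batch still takes a full slot.
    batches = math.ceil(test_count / concurrency)
    return batches * minutes_per_test


if __name__ == "__main__":
    for sessions in (1, 5, 10):
        print(sessions, "sessions:", estimated_build_minutes(100, 2, sessions), "minutes")
```

In practice test durations vary and session startup adds overhead, so real gains tend to be smaller than this idealized figure.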

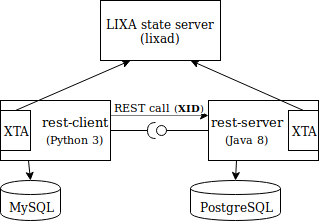

Microservices and Distributed Transactions

The usage of the two-phase commit protocol has been debated a lot since its inception. On one side, the enthusiasts tried to use it in every circumstance; on the other side, the detractors avoided it in all situations. A first note that must be reported is related to performance: as with every consensus protocol, the two-phase commit increases the time spent by a transaction. This side effect can’t be avoided and it must be considered at design time. It’s even common knowledge that some resource managers are affected by scalability limits when they manage XA transactions: this behavior depends more on the quality of the implementation than on the two-phase commit protocol itself. The abuse of two-phase commit severely hurts the performance of a distributed system, but trying to avoid it when it's the obvious solution leads to baroque and over-engineered systems that are difficult to maintain. More specifically, the integration of already existing services requires serious re-engineering when the transactional behavior must be guaranteed and a consensus protocol like the two-phase commit is not used.
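
For readers unfamiliar with the protocol, a toy in-memory version shows where the extra time comes from: the coordinator needs one round to collect votes and a second round to announce the outcome. This is a simplified sketch, not the XA interface and not the article's code. The `Participant` class and `two_phase_commit` function are hypothetical, and it ignores timeouts, crashes, and durable logging, which real implementations must handle.

```python
from dataclasses import dataclass, field


@dataclass
class Participant:
    """A toy resource manager; `can_commit` simulates its vote."""
    name: str
    can_commit: bool = True
    state: str = "init"
    log: list = field(default_factory=list)

    def prepare(self) -> bool:
        # Phase 1: vote yes only if the local work could be committed.
        self.state = "prepared" if self.can_commit else "aborted"
        self.log.append(self.state)
        return self.can_commit

    def commit(self) -> None:
        self.state = "committed"
        self.log.append(self.state)

    def rollback(self) -> None:
        self.state = "aborted"
        self.log.append(self.state)


def two_phase_commit(participants) -> bool:
    """Coordinator: commit everywhere only if every participant votes yes."""
    # Phase 1 (voting): ask every participant to prepare.
    votes = [p.prepare() for p in participants]
    # Phase 2 (completion): a single "no" vote aborts the whole transaction.
    if all(votes):
        for p in participants:
            p.commit()
        return True
    for p in participants:
        p.rollback()
    return False


if __name__ == "__main__":
    ok = two_phase_commit([Participant("orders"), Participant("payments")])
    failed = two_phase_commit(
        [Participant("orders"), Participant("payments", can_commit=False)]
    )
    print(ok, failed)
```

Every participant holds its prepared state until the second round arrives, which is one reason the protocol lengthens transactions.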

Craft your data stores with VM storage performance in mind

One data store is almost never enough; you usually need multiple data stores, but fewer than the number of VMs you have. Modest performance VMs can share a data store; you might put six to 12 modest VMs on a single data store, but just don't put all of one kind of VM on the same data store. You'll be in a world of hurt if you keep all your Windows domain controllers on a single data store. The data store might become saturated and slow, and if you accidentally delete it, you won't have any of the necessary controllers left. Try not to place more than 12 VMs on a data store because they all share the queues and performance of the data store, and all of them will suffer if the queues become saturated. Usually, VMs share data stores with other VMs, but high-performance VMs that need multiple disks and multiple SCSI controllers also need multiple data stores. In certain cases, a single critical VM will have its own data store and, on rare occasions, one VM might need multiple data stores.
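
The two rules of thumb above (no more than 12 VMs per data store, and never all VMs of one kind on the same store) can be expressed as a simple placement routine. This is a minimal sketch under stated assumptions: the `place_vms` function, the `(name, kind)` tuple format, and the round-robin strategy are illustrative choices, not a vendor tool or the article's method, and the sketch ignores actual I/O load.

```python
MAX_VMS_PER_STORE = 12  # upper bound suggested in the text


def place_vms(vms, store_count, max_per_store=MAX_VMS_PER_STORE):
    """Spread VMs over data stores, keeping VMs of one kind apart.

    `vms` is a list of (name, kind) tuples. Returns {store_index: [names]}.
    Raises ValueError if the stores cannot hold all VMs within the limit.
    """
    if len(vms) > store_count * max_per_store:
        raise ValueError("not enough data stores for the per-store limit")

    # Sorting by kind puts same-kind VMs next to each other, so the
    # round-robin below sends them to consecutive, different stores.
    ordered = sorted(vms, key=lambda vm: vm[1])
    placement = {i: [] for i in range(store_count)}
    for index, (name, _kind) in enumerate(ordered):
        placement[index % store_count].append(name)
    return placement


if __name__ == "__main__":
    vms = [
        ("dc1", "domain-controller"),
        ("dc2", "domain-controller"),
        ("web1", "web"),
        ("web2", "web"),
        ("db1", "db"),
    ]
    print(place_vms(vms, store_count=2))
```

High-performance VMs that need their own data store, or several, would be placed by hand before running a routine like this on the remaining modest VMs.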

Samsung's Agile & Lean UX Journey

The greatest strength of a designer is understanding the users, argued Jo. They tried to get designers thinking about the users, to get users to the center of the team, he said. Have teams focus on the real problem for real users in a desirable and usable product. Samsung applies several user-centered practices to develop products. From the start of the project, the team creates personas together so that they can look toward one goal rather than in different directions. Personas are connected to all of their activities. Jo mentioned that they used them in the scenarios, storyboards, workflows, design review, and user stories. Jo explained that since their personas are added and refined based on iterative research, they become more robust and concrete with their insights. "Whenever we learn something new about users, we add or change our personas," said Jo. He stated that "the important thing is that our personas must be alive and evolving more and more, like real characters."

Quote for the day:

"When your values are clear to you, making decisions becomes easier." -- Roy E. Disney
