The New Era Of Artificial Intelligence

AI will soon become commoditized and democratized, just as electricity was in its time. Today we use computers, smartphones, other connected devices and, mostly, apps. Whilst access to internet technologies has steadily improved over the past decades, very few people are able to program these technologies and generate income by intelligently exploiting consumer data, which, in theory, does not belong to them. GAFA (Google, Amazon, Facebook and Apple) and the Chinese BAT (Baidu, Alibaba and Tencent) are among the most prominent players in these fields. Tomorrow's world would be different with the emergence of relatively simple, portable AI devices, which might not necessarily be connected to each other via the internet but would feature completely new protocols and peer-to-peer technologies. This will significantly re-empower consumers. Because it is decentralized, portable AI will be available to the masses within a decade or so. Its use will be as intuitive as driving a car is today. Portable AI will also be less expensive than motorized vehicles.

What is DevSecOps and Vulnerabilities?

The principles of security and communications should be introduced at every step of building an application. The DevSecOps philosophy was created by security practitioners who seek to “work and contribute value with less friction”. These practitioners run a website that details an approach to improving security, explaining that “the goal of DevSecOps is to bring individuals of all capabilities to a high level of security efficiency in a short period of time. Security is everyone's responsibility.” The DevSecOps statement includes principles such as building a lower access platform, focusing on science, avoiding fear, uncertainty and doubt, collaboration, continuous security monitoring and cutting-edge intelligence. The DevSecOps community promotes actively detecting potential issues and exploiting weaknesses. In other words, think like an adversary and use similar tactics, such as attempting to penetrate systems, to identify gaps that can be exploited and need to be remediated.

7 essential technologies for a modern data architecture

At the center of this digital transformation is data, which has become the most valuable currency in business. Organizations have long been hamstrung in their use of data by incompatible formats, the limitations of traditional databases, and the inability to flexibly combine data from multiple sources. New technologies promise to change all that. Improving the deployment model of software is one major facet of removing barriers to data usage. Greater “data agility” also requires more flexible databases and more scalable real-time streaming platforms. In fact, no fewer than seven foundational technologies are combining to deliver a flexible, real-time “data fabric” to the enterprise. Unlike the technologies they are replacing, these seven software innovations are able to scale to meet the needs of both many users and many use cases. For businesses, they have the power to enable faster and more intelligent decisions and to create better customer experiences.

Tesla cloud systems exploited by hackers to mine cryptocurrency

Researchers from the RedLock Cloud Security Intelligence (CSI) team discovered that cryptocurrency mining scripts, used for cryptojacking (the unauthorized use of computing power to mine cryptocurrency), were running on Tesla's unsecured Kubernetes instances, which allowed the attackers to hijack Tesla's AWS compute resources for their own profit. Tesla's AWS environment also contained sensitive data, including vehicle telemetry, which was exposed through the stolen credentials. "In Tesla's case, the cyber thieves gained access to Tesla's Kubernetes administrative console, which exposed access credentials to Tesla's AWS environment," RedLock says. "Those credentials provided unfettered access to non-public Tesla information stored in Amazon Simple Storage Service (S3) buckets." The unknown hackers also employed a number of techniques to avoid detection. Rather than using typical public mining pools in their scheme

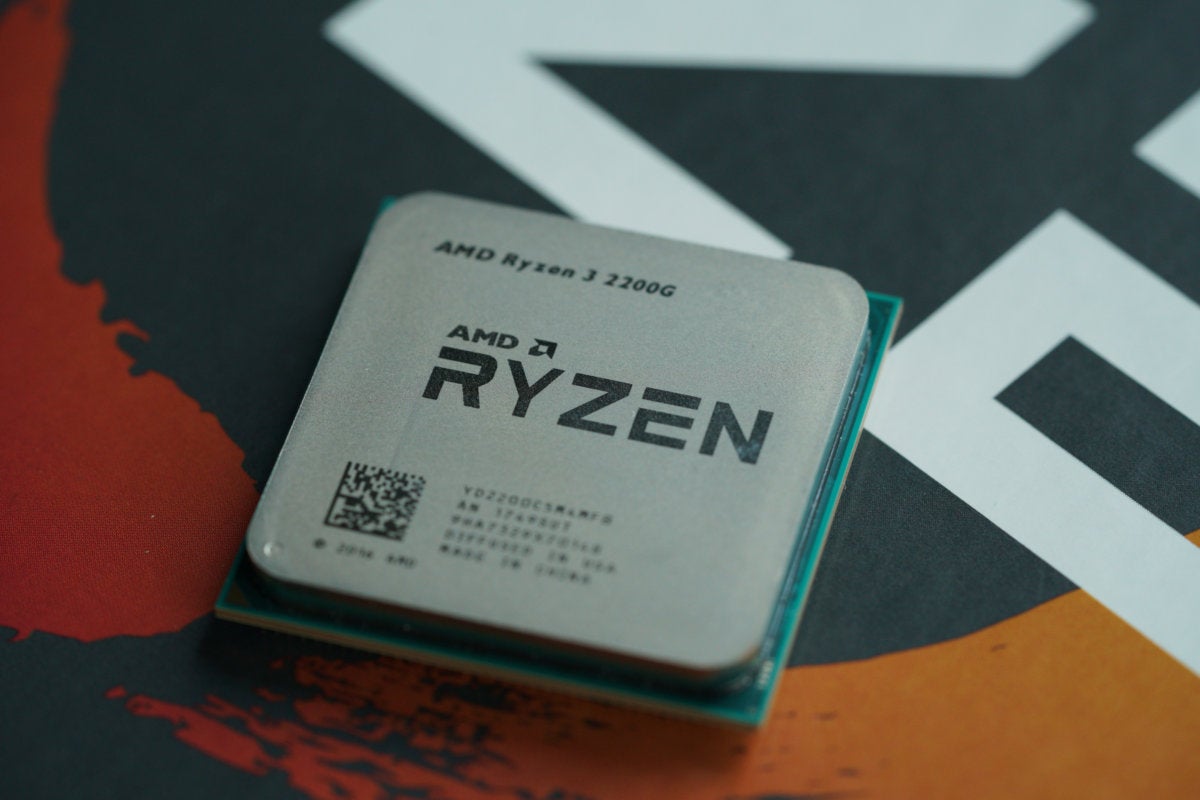

Micron sets its sights on quad-cell storage

Single-level cell (SLC) flash, with one bit per cell, emerged in the late 1980s, when flash drives first appeared for mainframes. In the late 1990s came multi-level cell (MLC) drives capable of storing two bits per cell. Triple-level cell (TLC) didn't come out until 2013, when Samsung introduced its 840 series of SSDs. So these advances take a long time, although they are being sped up by a massive increase in R&D dollars in recent years. Multi-bit flash memory chips store data by managing the amount of electrical charge in each individual cell. With each additional bit per cell, the number of voltage states doubles: SLC NAND tracks only two voltage states, while MLC has four, TLC has eight, and QLC has 16. This translates to much lower tolerance for voltage fluctuations. As density goes up, the computer housing the SSD must be rock-stable electrically, because otherwise you risk damaging cells. This calls for supporting electronics around the SSD to protect it from fluctuations.
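A small sketch of the arithmetic above: each additional bit per cell doubles the number of voltage states, so a cell storing n bits must distinguish 2^n levels. The `relative_margin` helper adds a simplifying assumption that is not in the article, namely that the levels are evenly spaced inside one fixed voltage window; real NAND level placement is more complex, so treat the margins as illustrative of the trend only.

```python
# Bits stored per cell for each NAND flash cell type named in the text.
CELL_TYPES = {"SLC": 1, "MLC": 2, "TLC": 3, "QLC": 4}


def voltage_states(bits_per_cell: int) -> int:
    """Number of distinct voltage levels a cell must distinguish: 2 ** bits."""
    return 2 ** bits_per_cell


def relative_margin(bits_per_cell: int) -> float:
    """Gap between adjacent levels as a fraction of a fixed voltage window.

    Illustrative assumption: levels are evenly spaced across the same window,
    so n levels leave n - 1 gaps between them.
    """
    return 1.0 / (voltage_states(bits_per_cell) - 1)


if __name__ == "__main__":
    for name, bits in CELL_TYPES.items():
        print(f"{name}: {bits} bit(s) -> {voltage_states(bits)} states, "
              f"margin ~{relative_margin(bits):.3f} of the window")
```

Under this assumption, going from SLC to QLC shrinks the gap between neighbouring levels from the whole window to one fifteenth of it, which is why small voltage fluctuations matter more as density rises.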

When it comes to cyber risk, execute or be executed!

Accountability must be clearly defined, especially in strategies, plans and procedures. Leaders at all levels need to maintain vigilance and hold themselves and their charges accountable for executing established best practices and other due care and due diligence mechanisms. Organizations should include independent third-party auditing and pen-testing to better understand their risk exposure and compliance posture. Top organizations don’t use auditing and pen-testing for punitive measures, but rather to find weaknesses that should be addressed. Often, they find that personnel need more training and regular cyber drills and exercises to reach a level of proficiency commensurate with their goals. The organizations that fail are those that do not actively seek out weaknesses or do not properly address known ones. Sound execution of cyber best practices buys down your overall risk. With today’s national prosperity and national security reliant on information technology, the stakes have never been higher.

Hack the CIO

CIOs have known for a long time that smart processes win. Whether they were installing enterprise resource planning systems or working with the business to imagine the customer’s journey, they always had to think in holistic ways that crossed traditional departmental, functional, and operational boundaries. Unlike other business leaders, CIOs spend their careers looking across systems. Why did our supply chain go down? How can we support this new business initiative beyond a single department or function? Now supported by end-to-end process methodologies such as design thinking, good CIOs have developed a way of looking at the company that can lead to radical simplifications, reducing cost and improving performance at the same time. They are also used to thinking beyond temporal boundaries. “This idea that the power of technology doubles every two years means that as you’re planning ahead you can’t think in terms of a linear process, you have to think in terms of huge jumps,” says Jay Ferro, CIO of TransPerfect, a New York–based global translation firm.
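To make Ferro's point concrete, here is a rough arithmetic sketch that simply takes the quote's premise at face value: capability doubles exactly every two years. It compares that with a straight-line plan that repeats the first period's gain each period. Both function names are hypothetical and chosen for illustration only; real technology growth is not this tidy.

```python
def doubling_growth(years: float, doubling_period: float = 2.0) -> float:
    """Capability multiple after `years` if it doubles every `doubling_period` years."""
    return 2 ** (years / doubling_period)


def linear_growth(years: float, doubling_period: float = 2.0) -> float:
    """Straight-line projection: add the first period's gain (+1x) every period."""
    return 1 + years / doubling_period


if __name__ == "__main__":
    for years in (2, 4, 6, 8, 10):
        print(f"{years:>2} years: doubling {doubling_growth(years):>5.1f}x, "
              f"linear {linear_growth(years):>4.1f}x")
```

The two projections agree after the first two years, but after ten years the doubling curve reaches 32x while the straight-line plan reaches only 6x. That widening gap is the "huge jumps" a linear planning process misses.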

Taking cybersecurity beyond a compliance-first approach

With high-profile security breaches continuing to hit the headlines, organizations are clearly struggling to lock down data against a continuously evolving threat landscape. Yet these breaches are not occurring only at companies that have failed to recognize the risk to customer data; many have occurred at organizations that meet regulatory compliance requirements to protect customer data. Given the huge investment companies in every market are making to comply with the raft of regulation introduced over the past couple of decades, this continued vulnerability is, or should be, a massive concern. Regulatory compliance is clearly no safeguard against data breaches. Should this really be a surprise, however? With new threats emerging weekly, the time lag inherent in the regulatory creation and implementation process is an obvious problem. It can take over 24 months for regulators to understand and identify weaknesses within existing guidelines, update and publish requirements, and then set a viable timeline for compliance.

Three sectors being transformed by artificial intelligence

While these industries will see significant AI adoption this year, the AI platforms and products that scale to mainstream adoption won’t necessarily be the household names you may expect. As the “Frightful Five” continue to grow and expand their reach across industries, they have designed powerful AI products. However, these platforms present challenges for smaller companies looking to implement AI solutions, as well as for larger companies in competitive industries such as retail, online gaming, shipping, and travel, to name a few. How can an advertiser on Facebook feel comfortable entrusting its data to a tech behemoth that may sell a product that competes with its business? Should a big data company using a Google AI feature be concerned about the privacy of its data? These risks are very real, yet businesses have options. They can instead choose to host data on independent platforms with independent providers, guarding their intellectual property while also supercharging the advancement of AI technology.

What the ‘versatilist’ trend means for IT staffing

IT staff who once focused only on systems in the datacenter now focus on systems in the public cloud as well. This means that while they understand how to operate the LAMP stacks in their enterprise datacenters, as well as virtualization, they also understand how to do the same things in a public cloud. As a result, they have moved from one role to two or more roles. However, the intention is that the traditional systems will eventually go away completely, and they will focus solely on the cloud-based systems. I agree with Gartner on that, too. While I understand where Gartner is coming from, the more automation sits between us and the latest technology, the more technology specialists we need, not fewer. So, I’m not convinced that IT versatilists will gain new business roles to replace the loss of the traditional datacenter roles, as Gartner suggests will happen.

Quote for the day:

"We're so busy watching out for what's just ahead of us that we don't take time to enjoy where we are." -- Bill Watterson