How to develop a two-tiered security model for the hybrid work paradigm

Providing organizations and their stakeholders complete digital security is a

part of the holistic security culture that enterprises must inculcate. This is

how they can ensure that the work paradigm of the future is anchored by safety

and technological progression on the back of a top-down security culture.

Organizations must promote the belief that upholding digital security

requirements isn’t the responsibility of the security department alone. A

sustainable security culture requires a collective investment from all

stakeholders in the organization. A vision that treats security as a

non-negotiable asset, complemented by employee sensitization and training

practices, is necessary for safeguarding valuable data and preventing the

exploitation of vulnerabilities by threat actors. To drive optimal

results, administrators must make sure that the mechanics used to deliver

security training to employees account for different departments, learning

styles, and abilities. Employees are the bedrock of any organization. Employee

errors are common when they are unsupervised, anxious, or uneducated in matters

pertaining to organizational security.

5 Habits I Learned From Successful Data Scientists at Microsoft

Continuous learning and improvement are paramount for Data Scientists looking to

stand out from the crowd of other qualified data professionals. As many already

know, Data Science is not a static field. Look at job descriptions, find out what

skills most employers are looking for in a data scientist, and compare with your

resume. Are you lacking these skills? Identify your weak points and work towards

improvement. ... It’s not just about models and programming languages; it is

paramount that you understand the inner workings of your profession. The truth

is that if you depend only on the tricks and experience you’ve gathered from your

previous or current job, there is a strong chance that you will remain

professionally stagnant. ... There are hundreds of quality research papers,

books, articles, and magazines offering valuable Data Science material to

educate yourself and expand your knowledge of key concepts in your field.

Before I moved on to get my Data Science certification, I learned most of the

programming languages and analysis tricks from blog posts.

Yandex Pummeled by Potent Meris DDoS Botnet

“Yandex’ security team members managed to establish a clear view of the botnet’s

internal structure. L2TP [Layer 2 Tunneling Protocol] tunnels are used for

internetwork communications. The number of infected devices, according to the

botnet internals we’ve seen, reaches 250,000,” wrote Qrator in a Thursday blog

post. L2TP is a protocol used to manage virtual private networks and deliver

internet services. Tunneling facilitates the transfer of data between two

private networks across the public internet. Yandex and Qrator launched an

investigation into the attack and believe Mēris to be highly sophisticated.

“Moreover, all those [compromised MikroTik hosts are] highly capable devices,

not your typical IoT blinker connected to Wi-Fi – here we speak of a botnet

consisting of, with the highest probability, devices connected through the

Ethernet connection – network devices, primarily,” researchers wrote. ... While

patching MikroTik devices is the ideal mitigation against future Mēris

attacks, researchers also recommended blacklisting.
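
As a rough illustration of what blacklisting can look like in application code, here is a minimal sketch using Python's standard `ipaddress` module. The function names and the addresses (taken from the reserved documentation ranges) are assumptions for the example, not details from the Qrator post, and real deployments usually enforce such lists in network equipment rather than in application code.

```python
import ipaddress


def build_blocklist(entries):
    """Parse single addresses or CIDR ranges into network objects."""
    # strict=False lets a bare host address such as "198.51.100.17" parse as a /32.
    return [ipaddress.ip_network(entry, strict=False) for entry in entries]


def is_blocked(address, blocklist):
    """Return True if the address falls inside any blocked network."""
    ip = ipaddress.ip_address(address)
    return any(ip in network for network in blocklist)


# Hypothetical entries; these ranges are reserved for documentation.
blocklist = build_blocklist(["192.0.2.0/24", "198.51.100.17"])
```

With this list, `is_blocked("192.0.2.44", blocklist)` is true because the address sits inside the blocked /24, while `is_blocked("198.51.100.18", blocklist)` is false because only the single neighbouring host is listed.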

Consistency, Coupling, and Complexity at the Edge

Although RESTful APIs are easy for backend services to call, they are not so

easy for frontend applications to call. That is because an emotionally

satisfying user experience is not very RESTful. Users don’t want a GUI where

entities are nicely segmented. They want to see everything all at once unless

progressive disclosure is called for. For example, I don’t want to navigate

through multiple screens to review my travel itinerary; I want to see the

summary (including flights, car rental, and hotel reservation) all on one screen

before I commit to making the purchase. When a user navigates to a page on a web

app or deep links into a Single Page Application (SPA) or a particular view in a

mobile app, the frontend application needs to call the backend service to fetch

the data needed to render the view. With RESTful APIs, it is unlikely that a

single call will be able to get all the data. Typically, one call is made, then

the frontend code iterates through the results of that call and makes more API

calls per result item to get all the data needed.
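
The fan-out described above can be sketched with an in-memory stand-in for the backend. Everything here is hypothetical: the `FakeBackend` class, its method names, and the aggregated `get_itinerary_summary` endpoint are assumptions made for illustration, not an API from the article. The point is only to count how many simulated calls each approach needs to render the same itinerary screen.

```python
from dataclasses import dataclass, field


@dataclass
class FakeBackend:
    """In-memory stand-in for a REST service that counts simulated calls."""

    calls: int = 0
    itineraries: dict = field(default_factory=lambda: {
        "trip-1": ["flight-9", "car-3", "hotel-7"],
    })
    items: dict = field(default_factory=lambda: {
        "flight-9": {"type": "flight", "summary": "Outbound flight"},
        "car-3": {"type": "car", "summary": "Car rental"},
        "hotel-7": {"type": "hotel", "summary": "Hotel reservation"},
    })

    def get_itinerary(self, trip_id):
        # Stands in for an entity-style call that returns only item ids.
        self.calls += 1
        return list(self.itineraries[trip_id])

    def get_item(self, item_id):
        # Stands in for an entity-style call that returns one item.
        self.calls += 1
        return dict(self.items[item_id])

    def get_itinerary_summary(self, trip_id):
        # Hypothetical aggregated endpoint: the join happens on the server.
        self.calls += 1
        return [dict(self.items[i]) for i in self.itineraries[trip_id]]


def render_with_entity_calls(backend, trip_id):
    """One call for the list, then one more call per result item."""
    item_ids = backend.get_itinerary(trip_id)
    return [backend.get_item(item_id) for item_id in item_ids]


def render_with_aggregate_call(backend, trip_id):
    """A single call shaped around what the screen needs."""
    return backend.get_itinerary_summary(trip_id)
```

For the three-item trip, the entity-by-entity path makes four calls and the aggregated path makes one, and both return the same view data. The gap grows with the number of items on the screen.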

Facebook Researcher’s New Algorithm Ushers New Paradigm Of Image Recognition

Humans have an innate capability to identify objects in the wild, even from a

blurred glimpse of the thing. We do this efficiently by remembering only

high-level features that get the job done (identification) and ignoring the

details unless required. In the context of deep learning algorithms that do

object detection, contrastive learning explored the premise of representation

learning to obtain a large picture instead of doing the heavy lifting by

devouring pixel-level details. But, contrastive learning has its own

limitations. According to Andrew Ng, pre-training methods can suffer from

three common failings: generating an identical representation for different

input examples, generating dissimilar representations for examples that humans

find similar (for instance, the same object viewed from two angles), and

generating redundant parts of a representation. The problems of representation

learning, wrote Andrew Ng, boil down to variance, invariance, and covariance

issues.
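
One way to make these three failings concrete is to measure them on a small batch of embeddings. The sketch below is an illustration under assumptions, not the algorithm from the article: the function names are made up, embeddings are plain lists of floats, and population statistics are used for simplicity. Near-zero variance signals identical representations, a large gap between two views of the same inputs signals poor invariance, and large off-diagonal covariance signals redundant dimensions.

```python
from statistics import fmean, pvariance


def per_dimension_variance(batch):
    """Variance of each embedding dimension across the batch."""
    columns = list(zip(*batch))
    return [pvariance(column) for column in columns]


def invariance_gap(view_a, view_b):
    """Mean squared distance between paired embeddings of two views."""
    return fmean(
        sum((a - b) ** 2 for a, b in zip(emb_a, emb_b))
        for emb_a, emb_b in zip(view_a, view_b)
    )


def max_offdiagonal_covariance(batch):
    """Largest absolute covariance between two different dimensions."""
    columns = list(zip(*batch))
    means = [fmean(column) for column in columns]
    n = len(batch)
    worst = 0.0
    for i in range(len(columns)):
        for j in range(i + 1, len(columns)):
            cov = sum(
                (x - means[i]) * (y - means[j])
                for x, y in zip(columns[i], columns[j])
            ) / n
            worst = max(worst, abs(cov))
    return worst


# Toy batches (made-up numbers) that each exhibit one failing.
collapsed = [[1.0, 2.0], [1.0, 2.0], [1.0, 2.0]]      # identical outputs
redundant = [[0.0, 0.0], [1.0, 1.0], [2.0, 2.0]]      # second dim copies first
decorrelated = [[-1.0, 1.0], [0.0, -2.0], [1.0, 1.0]]  # dims carry distinct info
```

On these toy batches, `per_dimension_variance(collapsed)` is zero in every dimension, `max_offdiagonal_covariance(redundant)` is clearly positive, and the same measure on `decorrelated` is zero.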

How AI Is Changing the IT and AV Industries

When AI can take visual, auditory, and human speech information and generate

speech in return, it will need to be able to make decisions. As an example,

AI-based systems may be able to process behavioral patterns on smartphone

applications and then convert that information into a decision to tweak the

user experience to enhance the effectiveness of the application. Another great

way for AI to make decisions and change the IT industry is to participate in

defect analysis and efficiency analysis. Some AI may be able to assess

protocols or infrastructure and determine where defects may exist in the

system and then determine the best solutions to increase efficiency. Another

consideration is for AI to collect lots of data and generate solutions to

improve efficiency over time, even without the presence of a defect. AI being

able to create and offer solutions is quickly changing the IT industry for the

better, making it more efficient and helpful in the long term. Obviously, the

introduction of AI in machines allows for automation at multiple process

stages.

DeepMind aims to marry deep learning and classic algorithms

Algorithms are a really good example of something we all use every day,

Blundell noted. In fact, he added, there aren’t many algorithms out there. If

you look at standard computer science textbooks, there’s maybe 50 or 60

algorithms that you learn as an undergraduate. And everything people use to

connect over the internet, for example, is using just a subset of those.

“There’s this very nice basis for very rich computation that we already know

about, but it’s completely different from the things we’re learning. So when

Petar and I started talking about this, we saw clearly there’s a nice fusion

that we can make here between these two fields that has actually been

unexplored so far,” Blundell said. The key thesis of NAR research is that

algorithms possess fundamentally different qualities to deep learning methods.

And this suggests that if deep learning methods were better able to mimic

algorithms, then generalization of the sort seen with algorithms would become

possible with deep learning.

SolarWinds Attack Spurring Additional Federal Investigations

Right now, the SEC investigation appears fairly broad and could reveal other

cyber incidents involving these companies, including past data breaches and

ransomware attacks, says Austin Berglas, who formerly was an assistant special

agent in charge of cyber investigations at the FBI's New York office. "This

[inquiry] could potentially include forensic and investigative reports of

past, unreported incidents and could bring the topic of attorney privilege

into play," says Berglas, who is now global head of professional services at

cybersecurity firm BlueVoyant. "If there is no evidence of [personally

identifiable information] exposure, organizations are not mandated to disclose

the incident. However, not all investigations are black-and-white. Sometimes

evidence is destroyed, unavailable or corrupted, and confirmation of the

exposure of sensitive information may not be obtainable upon forensic

analysis." While some companies will err on the side of caution and publish

data related to breaches, others might not, and Berglas says the SEC might be

probing to see which companies are following federal or state laws when it

comes to disclosures.

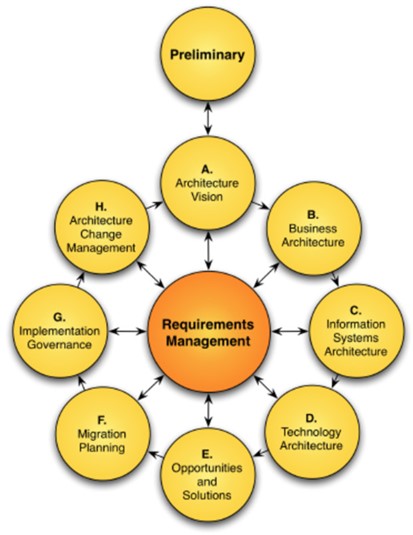

Implementing enterprise transformation using TOGAF

TOGAF includes the concept of "target first" and "baseline first." This can

help us decide where to start. If we know what we want the future

state to look like, we could begin with the target first and work our way back

to the baseline. If we are not sure what we want the future state to look

like, we could begin with the baseline and work our way to the target state.

Regardless of which path you choose, in the end you need to have both the

baseline and target well defined. What we are looking for is the gap between

what we have and what we need. And it is within that gap that the enterprise

transformation is defined and takes place. The baseline provides us with

information on our current state. The target provides us with information on

what we would like to achieve at the end of the transformation. With this

information, we can put together a transformation roadmap and the ability to

measure our progress/success in achieving the target state. Enterprise

architecture is a discipline to lead enterprise responses proactively and

holistically to disruptive forces by identifying and analysing the execution

of change toward desired business vision and outcomes.
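
The gap between baseline and target can be sketched as a simple set comparison. This is a simplified illustration, not a TOGAF-prescribed procedure: the capability names, the `gap_analysis` and `progress` functions, and the idea of scoring progress as the fraction of gap items resolved are all assumptions for the example.

```python
def gap_analysis(baseline, target):
    """Split capabilities into what to keep, what to add, and what to retire."""
    return {
        "retain": sorted(baseline & target),
        "add": sorted(target - baseline),
        "retire": sorted(baseline - target),
    }


def progress(current, baseline, target):
    """Fraction of gap items whose current state already matches the target."""
    gap = (target - baseline) | (baseline - target)
    if not gap:
        return 1.0  # Baseline already equals target; nothing to transform.
    resolved = {item for item in gap if (item in current) == (item in target)}
    return len(resolved) / len(gap)


# Hypothetical capability inventories.
baseline = {"on-prem CRM", "batch reporting", "manual onboarding"}
target = {"cloud CRM", "batch reporting", "self-service onboarding"}

roadmap_inputs = gap_analysis(baseline, target)
```

Here `roadmap_inputs["add"]` lists the two new capabilities and `roadmap_inputs["retire"]` the two to phase out. If the organization has so far only introduced the cloud CRM, `progress` reports one of the four gap items as resolved.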

How new banking technology platforms will redefine the future of financial services

The evolution of fintechs over the last five years has been quite dramatic in

that they have devised new operating and business models that are changing the

landscape. They are doing so by bringing in differentiated specialisation in a

specific area, which traditional banks are unable to match. For example, there

are a few who have created a business around becoming a ‘trusted advisor’ to

consumers offering valuable guidance to them on their financial needs and

enabling them to make the best choice on financial products and services.

Banks which were hitherto aligned to an exclusive sourcing arrangement with a

partner now have to contend with integrating seamlessly with these ‘advisors’

and participate in their competitive marketplace to acquire more customers.

Not doing so is increasingly not an option, as consumer behaviour is steadily

evolving to demand such experiences, and banks cannot provide these on their

own. And this is truly open banking. While there are no regulatory obligations

as of yet to participate in an open banking framework within India, it is a

matter of time before this becomes essential against the backdrop of RBI’s account

aggregator guidelines expected to come into effect soon.

Quote for the day:

"One man with courage makes a

majority." -- Andrew Jackson
