What is DevOps? A guide to common methods and misconceptions

DevOps has been defined in many ways: a set of practices that automate and

integrate processes so teams can build, test, and release software faster and

more reliably; a combination of culture and tools that enable organizations to

ship software at a higher velocity; a culture, a movement, or a philosophy.

None of these are wrong, and they are all important aspects of DevOps—but they

don’t quite capture what’s at the heart of DevOps: the essential human

element between Dev and Ops teams, where collaboration bridges the gap and

allows teams to ship better software, faster. For organizations, DevOps

provides value by increasing software quality and stability, and shortening

lead times to production. For developers, DevOps focuses on both automation

and culture—it’s about how the work is done. But most importantly, DevOps is

about enabling people to collaborate across roles to deliver value to end

users quickly, safely, and reliably. Altogether, it’s a combination of focus,

means, and expected results. The focus of DevOps is people. The

means of implementing DevOps is process and tooling. The result of DevOps

is a better product, delivered faster and more reliably.

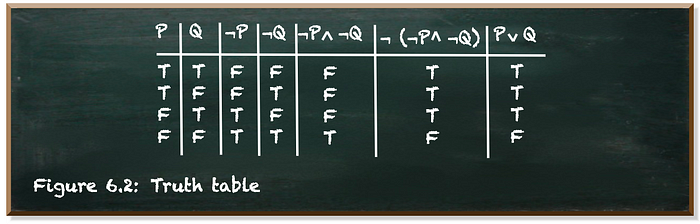

You Don’t Need To Be A Mathematician To Master Quantum Computing

Don’t get me wrong. Math is a great way to describe technical concepts. Math

is a concise yet precise language. Our natural languages, such as English, by

contrast, are lengthy and imprecise. It takes a whole book full of natural

language to explain a small collection of mathematical formulae. But most of

us are far better at understanding natural language than math. We learn our

mother tongue as a young child and we practice it every single day. We even

dream in our natural language. I couldn’t tell if some fellows dream in math,

though. For most of us, math is, at best, a foreign language. When we’re about

to learn something new, it is easier for us if we use our mother tongue. It is

hard enough to grasp the meaning of the new concept. If we’re taught in a

foreign language, it is even harder. If not impossible. Of course, math is the

native language of quantum mechanics and quantum computing, if you will. But

why should we teach quantum computing only in its own language? Shouldn’t we

try to explain it in a way more accessible to the learner? I’d say

“absolutely”!

From DevOps to DevApps

Perhaps an easier way to think about event-driven architecture is to think in terms of

application flows. For example, when a trouble ticket is created in Zendesk,

that data can be automatically analyzed by Amazon Comprehend to determine what

the customer’s sentiment is (angry, satisfied, or confused). Then purchasing

history, warranty information, and other pertinent information stored in a

data warehouse like Amazon Redshift can be used to give the customer service

rep a complete picture of the customer, to more expediently resolve any

issues. One approach to using event-driven architecture utilizes the JAMStack

tools, a term coined by Netlify founding CEO, Matt Biilmann. While WordPress is

a platform that is used by an overwhelmingly large number of users deploying

websites, the JAMStack is a collection of tools used to deliver web content.

JAMStack tools can be used to deploy websites on the edge of the network, by

reducing the number of database calls and bringing content closer to the user

via CDN. However, you can also extend that stack by adding cloud-native

services, such as Auth0 for authentication. In a web app that collects

user data, information could be stored in Airtable.
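
The ticket flow described above can be sketched as a small chain of event handlers. This is only a minimal in-process illustration: the event names, `classify_sentiment`, and the `PURCHASES` lookup are hypothetical stand-ins, not the Zendesk, Amazon Comprehend, or Amazon Redshift APIs, which a real implementation would call instead.

```python
from collections import defaultdict
from typing import Callable

# Hypothetical in-process event bus. A production system would more likely
# rely on a managed event router or message queue.
_handlers: dict[str, list[Callable[[dict], None]]] = defaultdict(list)


def subscribe(event_type: str, handler: Callable[[dict], None]) -> None:
    """Register a handler to run whenever event_type is published."""
    _handlers[event_type].append(handler)


def publish(event_type: str, payload: dict) -> None:
    """Deliver the payload to every handler subscribed to event_type."""
    for handler in list(_handlers[event_type]):
        handler(payload)


def classify_sentiment(text: str) -> str:
    """Toy keyword classifier standing in for a real sentiment service."""
    lowered = text.lower()
    if any(word in lowered for word in ("angry", "unacceptable", "refund")):
        return "angry"
    if "?" in text:
        return "confused"
    return "satisfied"


# Assumed sample data standing in for a data-warehouse query.
PURCHASES = {"cust-1": ["router", "extended warranty"]}


def on_ticket_created(ticket: dict) -> None:
    # Step 1: analyze the ticket text, then emit a follow-up event.
    ticket["sentiment"] = classify_sentiment(ticket["body"])
    publish("ticket.analyzed", ticket)


def on_ticket_analyzed(ticket: dict) -> None:
    # Step 2: enrich the ticket with purchase history for the service rep.
    ticket["purchase_history"] = PURCHASES.get(ticket["customer_id"], [])


subscribe("ticket.created", on_ticket_created)
subscribe("ticket.analyzed", on_ticket_analyzed)


def handle_new_ticket(customer_id: str, body: str) -> dict:
    """Entry point: publish a new ticket and return the enriched record."""
    ticket = {"customer_id": customer_id, "body": body}
    publish("ticket.created", ticket)
    return ticket
```

Each handler reacts only to the event it subscribed to, so further steps (such as notifying a support channel) could be added by subscribing another handler rather than changing the existing ones.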

Digital transformation: The new rules for getting projects done

"Amidst all the misery, this has been a great opportunity to fast-forward a

lot of changes that were on the stocks anyway," says Copinger-Symes. "So I

wouldn't want to say it's been positive, because that would undercut the

tragedies out there, but I think we've adjusted in stride and there are a lot

of opportunities to look out for, too." Like other organisations, the UK

military has had to put its five-year plan for tech-led change on the back-burner

while it deals with the priorities of the pandemic. However, this change in

emphasis has helped the organisation to reprioritise – and Copinger-Symes

hopes the move away from a slower planning cycle is permanent, particularly

when it comes to tech. "That has to change, because increasingly our

competitiveness is found through the software not the hardware. And if you

adopt decade-long planning cycles with software, you're not going to be very

competitive," he says. "I think we were being forced to change our planning

to a much shorter loop, so I think this pandemic has accelerated that process.

And I'm not saying we're on top of it or we've got it all right, but I think

that's just another acceleration of where we were moving anyway – to that

software-based view of the world, rather than a hardware view of the world."

Why AWS Recently Open Sourced A GUI Library For IoT Developers

“Developers can model the location and sizes of nodes, edges, and panels, and

Diagram Maker renders these as elements on the Diagram Maker canvas. The

rendered UI is fully interactive and lets users move nodes around, create new

edges, or delete nodes or edges,” says the AWS team. Diagram Maker also gives

developers the ability to lay out a given graph automatically via an API.

With this feature, application developers can visualise the

relationships by having the layout-related information connected to the

resources, even if they are built outside the editor. In addition, application

developers can use Diagram Maker’s capabilities for use cases that are outside

of IoT. For example, with Diagram Maker, application developers can improve

the experience for end customers by letting them intuitively and visually

design cloud resources needed by cloud services like Infrastructure as Code

(AWS CloudFormation) or Workflow Engines (AWS Step Functions) so as to figure

out the various relationships and hierarchies, according to AWS.

Alternatively, IoT application developers can utilise the Diagram Maker’s

plugin interface to author reusable plugins which can extend the Diagram

Maker’s core features.
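
To make the idea of modelling nodes, edges, and their positions concrete, here is a minimal sketch of such a graph model with the interactions the excerpt mentions (moving nodes, creating edges, deleting nodes or edges). The `Node`, `Edge`, and `Graph` classes are hypothetical and written for illustration only; they are not Diagram Maker's actual API or data format.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """A box on the canvas with a position and a size (assumed fields)."""
    id: str
    x: float
    y: float
    width: float
    height: float


@dataclass(frozen=True)
class Edge:
    """A directed connection between two node ids."""
    id: str
    src: str
    dest: str


@dataclass
class Graph:
    nodes: dict[str, Node] = field(default_factory=dict)
    edges: dict[str, Edge] = field(default_factory=dict)

    def add_node(self, node: Node) -> None:
        self.nodes[node.id] = node

    def add_edge(self, edge: Edge) -> None:
        # Refuse edges that point at nodes the graph does not contain.
        if edge.src not in self.nodes or edge.dest not in self.nodes:
            raise KeyError("edge endpoints must be existing nodes")
        self.edges[edge.id] = edge

    def move_node(self, node_id: str, x: float, y: float) -> None:
        node = self.nodes[node_id]
        node.x, node.y = x, y

    def delete_edge(self, edge_id: str) -> None:
        del self.edges[edge_id]

    def delete_node(self, node_id: str) -> None:
        # Deleting a node also removes every edge attached to it.
        del self.nodes[node_id]
        self.edges = {
            edge_id: edge
            for edge_id, edge in self.edges.items()
            if node_id not in (edge.src, edge.dest)
        }
```

Keeping layout data (position and size) on the node itself is one way to let a graph built outside an editor still be rendered consistently, which is the scenario the excerpt describes.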

Setting Up for Success: Governing Self-Service BI

With an increased number of users given access to the data layer, more reports

and dashboards are generated to support business decisions, especially in the

early stages of self-service BI adoption. When multiple individuals utilize

the same data source at different times, it can lead to discrepancies in the

data reported and redundant reports and dashboards generated from the same

data set. Different business users also create their own versions of data sets

derived from huge and more complex data sets. These activities, when

compounded, will eventually lead to inconsistent reporting, which can set back

executives making time-sensitive, data-driven business decisions. ... It’s not

surprising how often and soon organizations run into performance issues with

their self-service BI tools. Redundant data sets and reports can increase the

load on systems, leading to capacity issues. Though some of the most powerful

BI tools available provide best practices to improve report development, load

testing, and capacity management, it still boils down to how end users are

handling the technology in the absence of effective governance. ... An

overloaded system, with redundant data sets and reports, may still have

recourse, but when a security breach happens, it is one of the hardest

setbacks that CIOs and organizations endure.

The Changing Role of Data & the Chief Data Officer

In the short term, we’ve seen organizations increasingly focus on their data

strategy. Data management has become a lot more important because

organizations have to truly understand and trust their data. And especially

for things like contact tracing, you need to have the right contact data. In that

context, as part of a data coalition of my fellow CEOs, I wrote directly to

Congress about the need for valid, reliable data to help us fight the pandemic

in a much more thoughtful, data-driven way. We also worked very closely with

one of the hardest hit states in the early days of the pandemic. They

struggled because they didn’t have the necessary technology. They needed to

get the right data quality to analyze health issues and figure out where the

virus was spreading. We helped them leverage our technology to understand how

to bring the right equipment—PPE, ventilators—to the right hospitals to the

right patients at the right time. And just as innovative enterprises around

the globe have leveraged data to transform themselves to serve their customers

better and improve their products and services, we recommended that the

government do the same. The government is the biggest employer in the US.

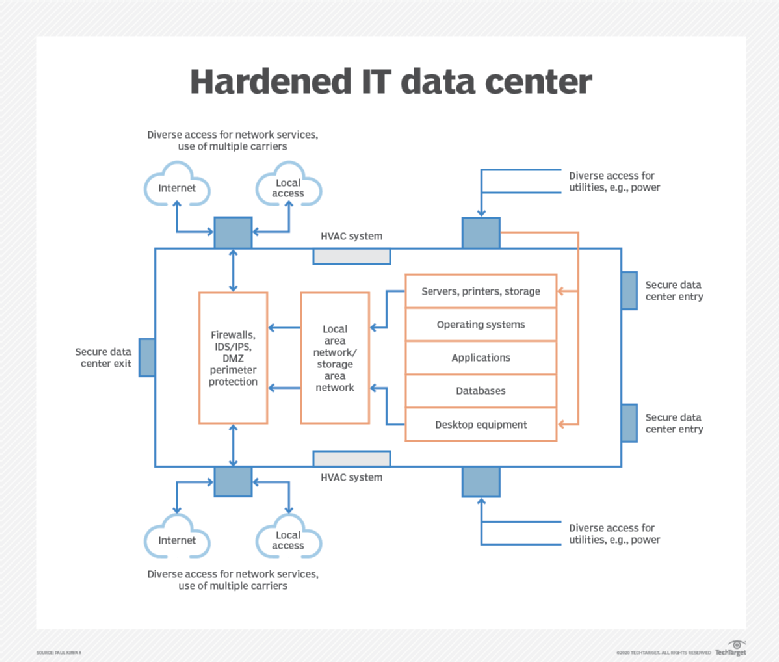

What CIOs need to know about hardening IT infrastructure

The good news is that infrastructure hardening technologies are readily

available and can be added to existing environments, often with minimal

disruption to production activities. However, to be extra prudent, it's

important to test hardening products in a test environment -- if available --

to protect the integrity of production systems. ... In addition to the

hardware and software tools that act as active frontline defense methods for

hardening IT infrastructures, CIOs should consider establishing policies and

procedures for infrastructure hardening. It may be that hardening activities

are part of day-to-day IT operations, but it also makes sense to document

these activities, especially if an IT audit is being planned. IT general

controls (ITGCs) include numerous controls and metrics examined by IT

auditors. Activities and initiatives mentioned above are among the ITGCs being

audited. Key ITGCs include organization and management, communications,

logical access security, physical and environmental security, change

management, risk management, monitoring of controls, system operations, system

availability, backup and recovery, incident management, and policies and

procedures.

How Redis Simplifies Microservices Design Patterns

Microservice architecture continues to grow in popularity, yet it is widely

misunderstood. While most conceptually agree that microservices should be

fine-grained and business-oriented, there is often a lack of awareness

regarding the architecture’s tradeoffs and complexity. For example, it’s

common for DevOps architects to associate microservices with Kubernetes, or an

application developer to boil implementation down to using Spring Boot. While

these technologies are relevant, neither containers nor development frameworks

can overcome microservice architecture pitfalls on their own — specifically at

the data tier. Martin Fowler, Chris Richardson, and fellow

thought-leaders have long addressed the trade-offs associated with

microservice architecture and defined characteristics that guide successful

implementations. These include the tenets of isolation, empowerment of

autonomous teams, embracing eventual consistency, and infrastructure

automation. While keeping with these tenets can avoid the pains felt by early

adopters and DIYers, the complexity of incorporating them into an architecture

amplifies the need for best practices and design patterns — especially as

implementations scale to hundreds of microservices.

The End of the Privacy Shield Agreement Could Lead to Disaster for Hyperscale Cloud Providers

Companies like Amazon Web Services (AWS), Google, and Microsoft were initially

happy that SCCs weren’t annulled. But they soon realized how the Privacy Shield

ruling could have more adverse consequences for them in the long run. Soon all

three providers issued statements to assure customers that their clouds were

still open, with Microsoft assuring its commercial or public sector customers

that they could continue using Microsoft services without breaking European

law. However, a few privacy advocates were quick to point out that only those

companies that continue to use SCCs can continue providing assurances that

data, whether at rest or in transit, is protected from third-party

surveillance. So several of these statements were misleading. Google,

for instance, is an electronic communication service provider, as a result of

which it falls under both categories. The platform may very well be the largest

search engine. Yet, the increasing awareness of data security and privacy might

force users to look for other reliable options that assure more secure

sharing of private information online.

Quote for the day:

"Start at the end. You can't tell a story unless you know how it ends." -- Lewis
