Gartner: Get ready for more AI in the workplace

AI will help out with the more mundane tasks managers already do. “Let's think about what managers do every day: they set schedules, assign work, do performance reviews, offer career guidance, help you access training, they do approvals, they cascade information and they enforce directives,” Cain said. “We can have AI doing a lot of that. Your manager won't be replaced by an algorithm, but your manager will be using a lot of AI constructs to help improve and to make more efficient a lot of the routine work that they do. We think that that is going to be the combination.” There will also be more intelligence embedded in the workplace, as smart office technologies become more common, said Cain. “First of all, we are going to see workplaces have huge amounts of beacon and sensor networks woven throughout the physical workspace,” he said. “This can be used for space optimization, heating and cooling, energy use, supply replenishment [and] contextual data displays as you navigate the workplace.”

Intelligent Field Instruments: The Smart Way to Industry 4.0

A key aspect in realizing a smart factory is the use of field instruments possessing intelligence—so-called smart transmitters. They support factory monitoring and diagnostics as well as networking with additional new field instruments. These transmitters can be distributed over the entire plant, different sensors can be connected, and previously unconnected parts can be monitored. The field instruments form the universal, intelligent basic unit of Industry 4.0. These units will be considered in more detail using the example of an instrument that can be employed with various sensors, such as resistance thermometers, thermocouples, and pressure sensors. Developed from the field instruments commonly in use today, smart transmitters are intelligent field instruments that are either purely loop-fed or supplied with auxiliary energy. A smart transmitter, besides containing other components, utilizes a microprocessor containing the software needed to make a transmitter smart.

How AI Is Changing Cyber Security Landscape and Preventing Cyber Attacks

Organizations have to be able to detect a cyber-attack in advance to thwart whatever the adversaries are attempting to achieve. Machine learning is the part of artificial intelligence that has proven extremely useful for detecting cyber threats: it analyzes data and identifies a threat before it exploits a vulnerability in your information systems. Machine learning enables computers to use and adapt algorithms based on the data received, learning from it and understanding the consequent improvements required. In a cybersecurity context, this means that machine learning enables the computer to predict threats and observe anomalies with far more accuracy than any human can. Traditional technology relies too much on past data and cannot improvise in the way that AI can. Conventional technology cannot keep up with the new mechanisms and tricks of hackers the way AI can. Additionally, the volume of cyber threats people have to deal with daily is too much for humans and is best dealt with by AI.

7 key relationships for the transformational CIO

This last relationship is one of the hardest for the CIO. Board members are often not technologically savvy and are business and/or financially minded. CIOs, on the other hand, are not typically business and/or financially minded. Nor does the CIO typically have exposure to the board of directors. Hence, the challenge with this relationship. Even so, this relationship is key for two reasons: a) differentiated company strategies rely heavily on technology and b) cybersecurity and risk. Like any relationship, these do not happen overnight; they take time to build. Remember that relationships are one-to-one, not one-to-many. The combination of respect and trust becomes the foundation for each relationship. As the CIO, consider going to where the other person is. Do not expect or ask them to come to you. This is not a statement of physical location but rather a statement of current state. Consider where the other person is and approach the relationship from their perspective. The work put into developing and nurturing these relationships will pay dividends for a long time. The effort also sets a good example for your teams to follow.

Does Education For Entrepreneurs Miss The Mark?

Particular areas of interest for entrepreneurs looking for this kind of just-in-time learning include identifying their customers and understanding their needs, developing and testing prototypes, creating value propositions, defining go-to-market strategies, determining the right profit model and learning from other entrepreneurs how they addressed these issues. In the two to four years it typically takes to launch a venture, it’s likely that founders will struggle with all of these challenges multiple times. It is not unusual for an entrepreneur to revisit these issues every two to three months and seek guidance from other entrepreneurs. This is why I joined forces with my Stanford GSB colleagues Jim Lattin and Baba Shiv to develop Stanford’s latest offering, Embark, a subscription-based offering that combines frameworks and insights from our unique position in Silicon Valley with the tactical steps necessary for launching or validating a sustainable business. The platform provides video advice from dozens of entrepreneurs about how to use these frameworks and is designed to support thousands of members.

What is incident response management and why do you need it?

The longer it takes an organisation to detect a vulnerability, the more likely it is that it will lead to a serious security incident. For example, perhaps you have an unpatched system that’s waiting to be exploited by a cyber criminal, or your anti-malware software isn’t up to scratch and is letting infected attachments pass into employees’ inboxes. Criminals sometimes exploit vulnerabilities as soon as they discover them, causing problems that organisations must react to immediately. However, they’re just as likely to exploit them surreptitiously, with the organisation only discovering the breach weeks or months later – often after being made aware by a third party. It takes 175 days on average to identify a breach, giving criminals plenty of time to access sensitive information and launch further attacks. As Ponemon Institute’s 2019 Cost of a Data Breach Study found, the damages associated with undetected security incidents can quickly add up, with the average cost of recovery being £3.17 million.

How Artificial Intelligence Will Transform Marketing in 2020

While one attempts to leverage the knowledge of AI to empower marketing, it also helps in fostering relevant and compelling interactions with customers, boosting ROI, and positively affecting revenue figures. Artificial Intelligence Marketing can work through a truckload of data at a much faster rate than any marketing team run by humans. Thus, finding hidden insights that affect consumer behavior, identifying critical data points, and recognizing purchaser trends are valuable touchpoints for any marketing team to focus on in order to develop creative content and shape strategy. Though a lot has been said about AI and the future of marketing, it is important to understand why and how organizations are bent on implementing AI solutions for their marketing wing to prosper. Reportedly, brands that have recently adopted AI for marketing strategy predict a 37 percent reduction in costs along with a 39 percent increase in revenue on average by the end of 2020 alone. AI provides traditional marketing with tools that deliver personalized and relevant content at the right time to improve conversion rates for any business.

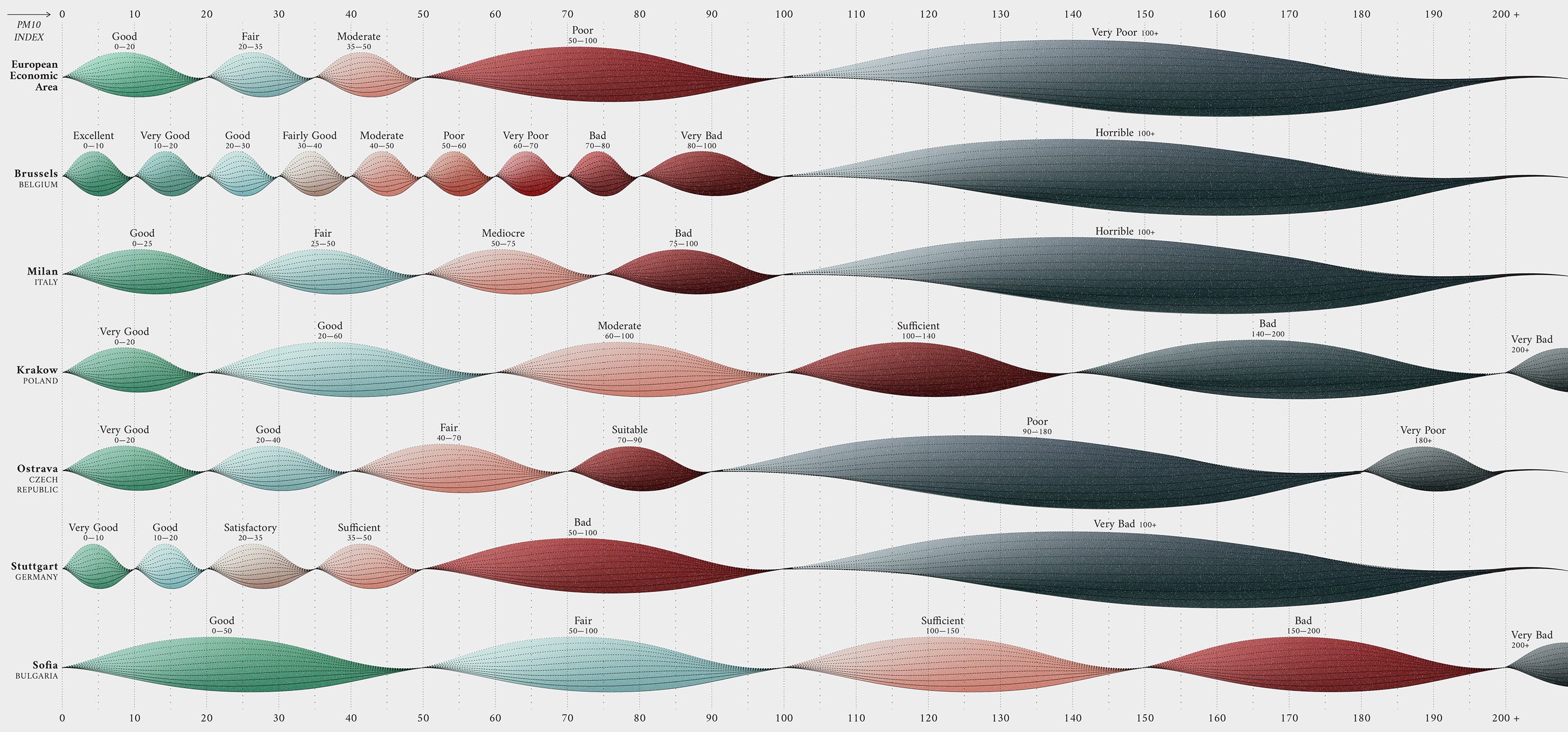

What Makes A Data Visualisation Elegant?

Perhaps a more sophisticated and flexible modern approach to the somewhat blunt notion of minimalism is that of “refinement”. What’s important is editing and, at times, being courageous or restrained about what you should not include or attempt to do. It’s about finding that moment, perhaps only through experience, where something just ‘feels right’. That leads me to one of my favourite German words, fingerspitzengefühl, which means having an intuitive flair or instinct: a ‘fingertip feeling’ where you just know. Moritz Stefaner mentions another key German word for this discussion, “prägnanz”, as meaning “concise and on point, but also memorable and assertive… so, not minimal for minimalism’s sake, but maximally effective with minimal effort”. Refinement is about being decisive: possessing clarity of vision and caring for the little details. This conveys to your viewer that your work has been thought through and thought about.

Anomaly detection methods unleash microservices performance

Traditional single or simple n-tier applications require platform and performance monitoring, but microservices add several logical layers to the equation. Along with more tiers come the y and z axes of the scale cube, including Kubernetes or another cluster manager for containers; a service layer and associated tools, such as the fabric and API gateways; and data and service partitioning across multiple clients. To detect and analyze performance problems, begin with the basics of problem identification and cause analysis. The techniques described here are relevant to microservices deployments. Each aims to identify and fix the internal source of application problems based on observable behavior. A symptom-manifestation-cause approach involves working back from external signs of poor performance to internal manifestations of a problem to then investigate likely root causes.
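As a rough illustration of working back from an external symptom to an internal manifestation, the sketch below compares each service's current latency with its own baseline and ranks the ones that deviate most. It is only a minimal example under stated assumptions: the service names, the latency samples, the z-score test and the threshold of 3.0 are all hypothetical choices made here, not something the article prescribes.

```python
from statistics import mean, stdev


def zscore(baseline, value):
    """Return how many sample standard deviations `value` lies from the baseline mean."""
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline gives no scale to measure against; treat as normal.
        return 0.0
    return (value - mean(baseline)) / sigma


def suspect_services(baselines, current, threshold=3.0):
    """Rank services whose current latency exceeds baseline by more than `threshold` sigmas."""
    scores = {name: zscore(baselines[name], current[name]) for name in current}
    flagged = [(name, score) for name, score in scores.items() if score > threshold]
    return sorted(flagged, key=lambda pair: pair[1], reverse=True)


# Hypothetical per-service latency samples in milliseconds (illustrative data only).
baselines = {
    "gateway": [20, 22, 21, 19, 20],
    "orders": [50, 52, 49, 51, 50],
    "payments": [80, 82, 79, 81, 80],
}
# The external symptom is a slow request; these are the internal readings behind it.
current = {"gateway": 21, "orders": 95, "payments": 83}

suspects = suspect_services(baselines, current)
print(suspects)
```

With this made-up data only the "orders" service is flagged, which would be the place to start looking for a root cause. A real deployment would more likely rely on the monitoring stack's own anomaly detection than on a fixed z-score threshold.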

Why the founder of Apache is all-in on blockchain

As a result, "blockchain technology seemed urgent to get involved in [and] that lined up with these idealistic and pragmatic impulses that I've had—and I think other people in open source have had," he adds. Specifically, it was the emergence of a set of use cases beyond programmable money that drew in Behlendorf. "I think the one that pulled me in was land titles and emerging markets," he recalls. It wasn't just about having a distributed database. It was about having a distributed ledger that "actually supported consensus, one that actually had the network enforcing rules about valid transactions versus invalid transactions. One that was programmable, with smart contracts on top. This started to make sense to me, and [it] was something that was appealing to me in a way that financial instruments and proof-of-work was not." Behlendorf makes the point that for blockchain technology to have a purpose, the network has to be decentralized. For example, you probably want "nodes that are being run by different technology partners or … nodes being run by end-user organizations themselves because otherwise, why not just use a central database run by a single vendor or a single technology partner?" he argues.

Quote for the day:

If you can't handle others' disapproval, then leadership isn't for you. -- Miles Anthony Smith
