Europe’s Digital Services Act applies in full from tomorrow - here’s what you need to know

In one early sign of potentially interesting times ahead, Ireland’s Coimisiún na Meán has recently been consulting on rules for video sharing platforms that could force them to switch off profiling-based content feeds by default in that local market. In that case the policy proposal was being made under EU audiovisual rules, not the DSA, but given how many major platforms are located in Ireland, the Coimisiún na Meán, as DSC, could spin up some interesting regulatory experiments if it takes a similar approach when it comes to applying the DSA to the likes of Meta, TikTok, X and other tech giants. Another interesting question is how the DSA might be applied to fast-scaling generative AI tools. The viral rise of AI chatbots like OpenAI’s ChatGPT occurred after EU lawmakers had drafted and agreed the DSA. But the intent for the regulation was for it to be futureproofed and able to apply to new types of platforms and services as they arise. Asked about this, a Commission official said they have identified two different situations vis-à-vis generative AI tools: One where a VLOP is embedding this type of AI into an in-scope platform, where they said the DSA does already apply.

Composable Architectures vs. Microservices: Which Is Best?

Composable architecture is a modular approach to software design and development that builds flexible, reusable and adaptable software architecture. It entails breaking down extensive, monolithic platforms into small, specialized, reusable and independent components. This architectural pattern comprises a pluggable array of modular components, such as microservices, packaged business capabilities (PBCs), headless architecture and API-first development, that can be seamlessly replaced, assembled and configured to align with business requirements. In a composable application, each component is developed independently using the technologies best suited to the application’s functions and purpose. This enables businesses to build customized solutions that can swiftly adapt to business needs. ... The composable approach has gained significant popularity in e-commerce applications and web development for enhancing the digital experience for developers, customers and retailers, with industry leaders like Shopify and Amazon taking advantage of its benefits.
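To make the idea of replaceable components concrete, here is a minimal sketch in Python. It is not taken from the article: the `PaymentComponent` contract, the two payment classes and the `Checkout` class are hypothetical names chosen for illustration. The point is only that the checkout depends on a small contract, so one component can be swapped for another without touching the rest of the application.

```python
from dataclasses import dataclass
from typing import Protocol


class PaymentComponent(Protocol):
    """Hypothetical contract every pluggable payment component must satisfy."""

    def charge(self, amount_cents: int) -> str: ...


class CardPayments:
    """One interchangeable component (illustrative only)."""

    def charge(self, amount_cents: int) -> str:
        return f"card:{amount_cents}"


class WalletPayments:
    """A drop-in replacement that honours the same contract."""

    def charge(self, amount_cents: int) -> str:
        return f"wallet:{amount_cents}"


@dataclass
class Checkout:
    """Composes whichever payment component it is handed."""

    payments: PaymentComponent

    def place_order(self, amount_cents: int) -> str:
        return self.payments.charge(amount_cents)


def demo() -> list:
    """Place the same order twice, swapping the component in between."""
    checkout = Checkout(payments=CardPayments())
    first = checkout.place_order(1999)
    checkout.payments = WalletPayments()  # replace the component, keep the app
    second = checkout.place_order(1999)
    return [first, second]
```

In a real composable system the components would typically sit behind network APIs rather than in-process classes, but the dependency on a contract instead of a concrete implementation is the same.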

Nginx core developer quits project in security dispute, starts “freenginx” fork

Comments on Hacker News, including one by a purported employee of F5, suggest Dounin opposed the assigning of published CVEs to bugs in aspects of QUIC. While QUIC is not enabled in the most default Nginx setup, it is included in the application's "mainline" version, which, according to the Nginx documentation, contains "the latest features and bug fixes and is always up to date." ... MZMegaZone confirmed the relationship between security disclosures and Dounin's departure. "All I know is he objected to our decision to assign CVEs, was not happy that we did, and the timing does not appear coincidental," MZMegaZone wrote on Hacker News. He later added, "I don't think having the CVEs should reflect poorly on NGINX or Maxim. I'm sorry he feels the way he does, but I hold no ill will toward him and wish him success, seriously." Dounin, reached by email, pointed to his mailing list responses for clarification. He added, "Essentially, F5 ignored both the project policy and joint developers' position, without any discussion." MegaZone wrote to Ars (noting that he only spoke for himself and not F5), stating, "It's an unfortunate situation, but I think we did the right thing for the users in assigning CVEs and following public disclosure practices.

Bridging Silos and Overcoming Collaboration Antipatterns in Multidisciplinary Organizations

Some problems with these anti-patterns. I'm going to talk again in threes, I've talked about three anti-patterns, one role across many teams, product versus engineering wars, and X-led. I'm going to talk about some of the problems with these. The first one is one group holds the power. One group holds all the decision-making power, and others can't properly contribute. They aren't given the opportunity to contribute. In our first example, Anita the designer doesn't hold any power because all she's doing is playing catch-up. She's got no time to really contribute to decisions. In the second anti-pattern in the product versus engineering, there's always a battle between who holds the power. It's not collaborative, there's silos between the two. ... Professional protectionism is about people protecting their professional boundaries and not letting other people step into them. It's like, "No, this is my area, you stay over there and you do your thing and I'll do my thing over here." Maybe some people have experienced this. For example, I was working with an organization recently and they said the user research team didn't want to publish how they did user research, because other people might do it.

Scalability Challenges in Microservices Architecture: A DevOps Perspective

Although microservices architectures naturally lend themselves to scalability, challenges remain as systems grow in size and complexity. Efficiently managing how services discover each other and distribute loads becomes complex as the number of microservices increases. Communication across complex systems also introduces a degree of latency, especially with increased traffic, and leads to an increased attack surface, raising security concerns. Microservices architectures also tend to be more expensive to implement than monolithic architectures. Creating secure, robust, and well-performing microservices architectures begins with design. Domain-driven design plays a vital role in developing services that are cohesive, loosely coupled, and aligned with business capabilities. Within a genuinely scalable architecture, every service can be deployed, scaled, and updated autonomously without affecting the others. One essential aspect of effectively managing microservices architecture involves adopting a decentralized governance model, in which each microservice has a dedicated team in charge of making decisions related to the service
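The discovery and load-distribution problem mentioned above can be sketched in a few lines of Python. This is an in-memory toy under stated assumptions, not how any particular service mesh or registry works: `ServiceRegistry`, its method names and the example addresses are all hypothetical, and round-robin is just one simple distribution policy chosen for the illustration.

```python
from collections import defaultdict


class ServiceRegistry:
    """Toy in-memory registry with round-robin resolution (illustrative only)."""

    def __init__(self) -> None:
        self._instances = defaultdict(list)  # service name -> list of addresses
        self._next = defaultdict(int)        # service name -> round-robin counter

    def register(self, service: str, address: str) -> None:
        """Record an instance so that callers can discover it."""
        if address not in self._instances[service]:
            self._instances[service].append(address)

    def deregister(self, service: str, address: str) -> None:
        """Remove an instance, for example after a failed health check."""
        if address in self._instances[service]:
            self._instances[service].remove(address)

    def resolve(self, service: str) -> str:
        """Return the next instance address, spreading calls round-robin."""
        instances = self._instances.get(service, [])
        if not instances:
            raise LookupError(f"no instances registered for {service!r}")
        index = self._next[service] % len(instances)
        self._next[service] += 1
        return instances[index]
```

Every caller now depends on the registry being correct and available, which is part of why discovery gets harder to manage as the number of services grows.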

CQRS Pattern in C# and Clean Architecture – A Simplified Beginner’s Guide

When implementing Clean Architecture in C#, it’s important to recognize the role each of the four components plays. Entities and Use Cases represent the application’s core business logic, Interface Adapters manage the communication between the Use Cases and Infrastructure components, and Infrastructure represents the outermost layer of the architecture. To implement Clean Architecture successfully, we have some best practices to keep in mind. For instance, Entities and Use Cases should be agnostic to the infrastructure and use plain C# classes, providing a decoupled architecture that avoids excess maintenance. Additionally, applying the SOLID principles ensures that the code is flexible and easily extensible. Lastly, implementing use cases asynchronously can help guarantee better scalability. Each component of Clean Architecture has a specific role to play in the implementation of the overall architecture. Entities represent the business objects, Use Cases implement the business logic, Interface Adapters handle interface translations, and Infrastructure manages the communication to the outside world.
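The four roles can be shown side by side in a short sketch. The article targets C#; the example below uses Python only to stay compact, and every name in it (`Order`, `OrderRepository`, `GetOrderTotal`, `InMemoryOrderRepository`, `OrderPresenter`, `build_presenter`) is hypothetical rather than taken from the guide. It assumes a single read-only use case, implemented asynchronously as the excerpt suggests.

```python
import asyncio
from dataclasses import dataclass
from typing import Optional, Protocol


@dataclass(frozen=True)
class Order:
    """Entity: a plain business object with no infrastructure knowledge."""

    order_id: str
    total_cents: int


class OrderRepository(Protocol):
    """Port defined next to the use case; outer layers implement it."""

    async def get(self, order_id: str) -> Optional[Order]: ...


class GetOrderTotal:
    """Use case: business logic that depends only on the port above."""

    def __init__(self, repo: OrderRepository) -> None:
        self._repo = repo

    async def execute(self, order_id: str) -> int:
        order = await self._repo.get(order_id)
        if order is None:
            raise KeyError(order_id)
        return order.total_cents


class InMemoryOrderRepository:
    """Infrastructure: stands in for a database or an external API."""

    def __init__(self, rows: dict) -> None:
        self._rows = dict(rows)

    async def get(self, order_id: str) -> Optional[Order]:
        total = self._rows.get(order_id)
        return None if total is None else Order(order_id, total)


class OrderPresenter:
    """Interface adapter: translates use case output for the outside world."""

    def __init__(self, use_case: GetOrderTotal) -> None:
        self._use_case = use_case

    async def show_total(self, order_id: str) -> str:
        cents = await self._use_case.execute(order_id)
        return f"{cents // 100}.{cents % 100:02d}"


def build_presenter() -> OrderPresenter:
    """Wire the layers from the outside in (a stand-in for a composition root)."""
    repo = InMemoryOrderRepository({"o-1": 1250})
    return OrderPresenter(GetOrderTotal(repo))
```

Calling `asyncio.run(build_presenter().show_total("o-1"))` exercises all four layers. Because `GetOrderTotal` only knows the `OrderRepository` contract, the in-memory repository could be replaced by a real data store without changing the entity or the use case.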

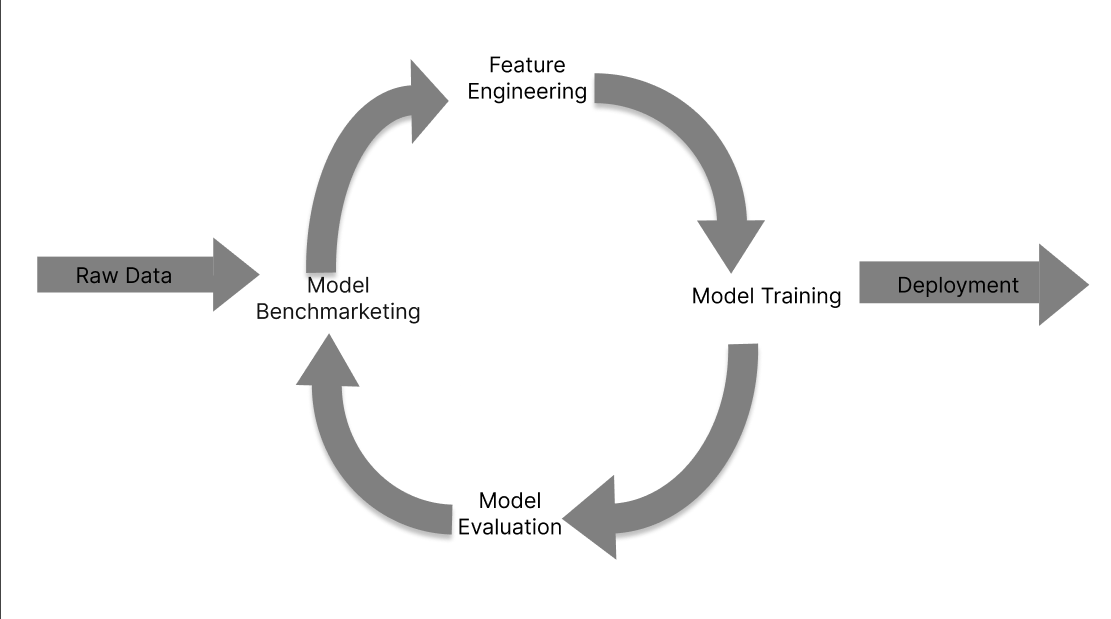

AI in practice - Celonis’ VP shares how AI can support system & process change

Brown said that, at the moment, Celonis is seeing AI being used to expedite often tedious work, or work that often is prone to human error. Looking back at the adoption of previous general purpose technologies, this makes sense. More often than not the tools that are adopted early on are applied to use cases that take time, don’t add a significant amount of value, and where mistakes are easily made by people. ... Brown also had some thoughts regarding how enterprises should consider their approach to AI adoption, with a focus on not isolating people away from the technology - keeping them close to the change and bringing them along on the journey. Firstly, Brown acknowledged that this is going to be challenging, given the tendency for employees to ‘build empires’ within enterprises and protect them at all costs. She said: I'll go back to a phrase I used for a long, long time and I still use: people don't hurt what they own. So if I'm invested in it, and it's part of what I care about, I'm going to protect it and grow it. If I boil down change management into one sentence, it’s about expectations and accountability. So, what can I expect to be different and what do I need to do differently?

Open Agile Architecture: A Comprehensive Guide for Enterprise Architecture Professionals

Open Agile Architecture equips you with a methodology that seamlessly integrates Agile principles into the realm of enterprise architecture. In today's business environment, change is constant. Open Agile Architecture allows you to respond swiftly and effectively to evolving business needs, technological advancements, and market dynamics. ... Collaboration is at the heart of Agile methodologies, and Open Agile Architecture extends this principle to enterprise architecture. By promoting cross-functional collaboration and open communication, the methodology breaks down silos within the organization. As a practitioner, you'll experience improved collaboration between business and IT teams, fostering a shared understanding of goals and priorities. ... Open Agile Architecture emphasizes an iterative and incremental approach to development. This means that instead of long, rigid planning cycles, you work on delivering incremental value in shorter iterations. This not only ensures continuous progress but also allows you to demonstrate tangible outcomes to stakeholders regularly.

Microsoft Copilot is preparing advances in data protection

As the company has revealed through the Bing blog, Copilot is being prepared to maximize the protection of data belonging to the users and companies that use this system. With this, Microsoft wants to make it clear that it has no interest in user data while people use Copilot services in its 365 versions. It is evident that Copilot is becoming a fundamental piece of Microsoft’s brand strategy, and precisely for that reason the company wants to distance it from some of the main stigmas that AI currently has. For its part, Copilot is already deeply integrated into various Microsoft services, such as Bing or Teams, where it offers considerable support to the user. One of the concerns that many users have when using Artificial Intelligence is that their usage becomes part of the AI’s learning and training process. As these tools are constantly evolving, many of these systems have relied on users’ own activity to drive variations and advancements in various subjects. However, over time, it has been shown that, in many cases, this has ended up “dumbing down” the AI. Still, many users find it quite ironic that an AI, which is trained precisely by massively and illicitly collecting data from across the Internet, has to actively demonstrate a system that ensures that Copilot will not use user data to continue improving.

Artificial intelligence needs to be trained on culturally diverse datasets to avoid bias

Culture plays a significant role in shaping our communication styles and worldviews. Just like cross-cultural human interactions can lead to miscommunications, users from diverse cultures who are interacting with conversational AI tools may feel misunderstood and experience them as less useful. To be better understood by AI tools, users may adapt their communication styles in a manner similar to how people learned to “Americanize” their foreign accents in order to operate personal assistants like Siri and Alexa. ... AI is already in use as the backbone of various applications that make decisions affecting people’s lives, such as resume filtering, rental applications and social benefits applications. For years, AI researchers have been warning that these models learn not only “good” statistical associations, such as considering experience as a desired property for a job candidate, but also “bad” statistical associations, such as considering women as less qualified for tech positions. As LLMs are increasingly used for automating such processes, one can imagine that the North American bias learned by these models can result in discrimination against people from diverse cultures.

Quote for the day:

''Failure will never overtake me if my determination to succeed is strong enough.'' -- Og Mandino
