‘Fake Fingerprints’ Bypass Scanners with 3D Printing

On average, the fake fingerprints achieved an 80 percent success rate, where the sensors were bypassed at least once. The researchers were unable to defeat the biometric systems on Microsoft Windows 10 devices (though they said this does not necessarily mean those devices are safer, just that this particular approach did not work). The bigger takeaway, however, is the sheer amount of time and money it still takes to build a threat model capable of bypassing fingerprint sensors. In the end, the researchers said they had to create more than 50 molds and test them manually, which took months, and they struggled to stay under a self-imposed budget of $2,000. These challenges suggest that a scalable, easy attack on biometrics is not yet possible. “Biometrics are not an Achilles heel,” Craig Williams, director of Cisco Talos Outreach, told Threatpost. “Biometrics are something that makes it very, very easy to use. You don’t have to remember a password. You don’t have to enter a password, which makes it very fast and easy. You don’t have to carry anything around with you. And so I think for most users, it’s still perfectly fine.”

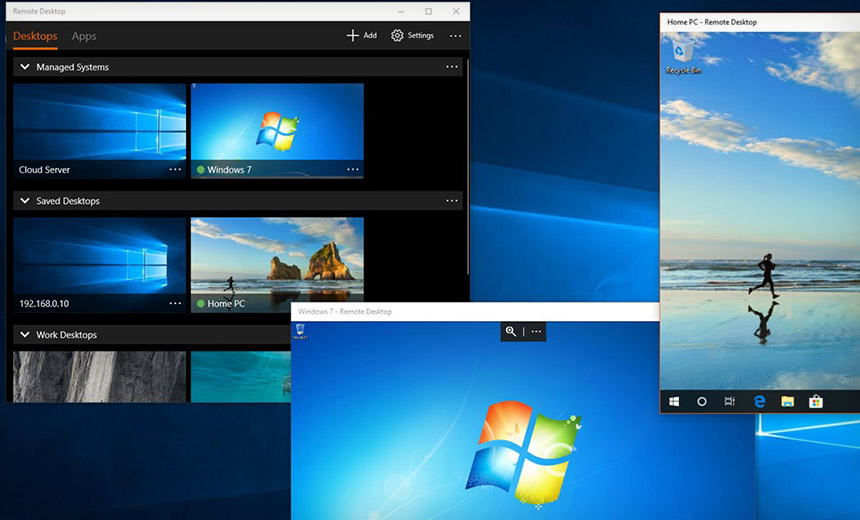

Robotic Process Automation (RPA): 6 open source tools

Open source might sound intimidating to non-developers, but there’s good news on this front: while some open source projects are particularly developer-focused, multiple options stress ease of use and no- or low-code tools, like their commercial counterparts. One reason for this: RPA use cases abound across business functions, from finance to sales to HR and more. Tool adoption will depend considerably on whether these departments can handle RPA development and ongoing maintenance themselves, ideally in collaboration with IT but not wholly dependent on it. ... TagUI is a command-line interface for RPA that can run on any of the major OSes. TagUI uses the concept of “flows” to represent an automated computer-based process, which can be run on demand or on a fixed schedule. ... Robocorp might have our favorite name of the lot (it kind of conjures up some of the darker, Terminator-esque images of RPA), but that’s a bit beside the point. It is a relatively new entry into the field, and somewhat unique in that it’s a venture-backed startup promising to deliver cloud-based, open source RPA tools for developers.

Inverting a matrix is one of the most common tasks in data science and machine learning. In this article I explain why inverting a matrix is very difficult and present code that you can use as-is, or as a starting point for custom matrix inversion scenarios. Specifically, this article presents an implementation of matrix inversion using Crout's decomposition. There are many different techniques to invert a matrix. The Wikipedia article on matrix inversion lists 10 categories of techniques, and each category has many variations. The fact that there are so many different ways to invert a matrix is an indirect indication of how difficult the problem is. Briefly, relatively simple matrix inversion techniques such as cofactors and adjugates only work well for small matrices (roughly 10 x 10 or smaller). For larger matrices, you should write code that uses a more complex technique called matrix decomposition. The code presented in this article will run as a .NET Core console application or as a .NET Framework application. Many of the newer Microsoft technologies, such as the ML.NET code library, specifically target .NET Core, so it makes sense to develop most new C# machine learning code in that environment.
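The article's own code is C#; as a language-neutral illustration, here is a minimal Python sketch (not the author's implementation) of the same idea. Crout's decomposition factors a row-permuted copy of A into a lower-triangular L and an upper-triangular U with ones on its diagonal; the inverse is then found by solving L U x = P e for each column e of the identity matrix. Partial pivoting, the 1e-12 singularity tolerance, and the function names `crout_decompose`, `lu_solve`, and `invert` are assumptions added here for illustration.

```python
from typing import List, Tuple

Matrix = List[List[float]]


def crout_decompose(a: Matrix) -> Tuple[Matrix, Matrix, List[int]]:
    """Factor P*A = L*U in Crout form: L is lower-triangular, U has a unit diagonal.

    Uses partial (row) pivoting; perm[i] is the row of A that ends up in row i of P*A.
    Raises ValueError if a pivot is (nearly) zero, i.e. A is singular.
    """
    n = len(a)
    work = [row[:] for row in a]  # copy so the caller's matrix is not reordered
    perm = list(range(n))
    lower = [[0.0] * n for _ in range(n)]
    upper = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]

    for j in range(n):
        # Column j of L (rows j..n-1): subtract contributions of earlier columns.
        for i in range(j, n):
            lower[i][j] = work[i][j] - sum(lower[i][k] * upper[k][j] for k in range(j))

        # Partial pivoting: move the row with the largest |L[r][j]| into position j.
        p = max(range(j, n), key=lambda r: abs(lower[r][j]))
        if abs(lower[p][j]) < 1e-12:  # assumed tolerance for "singular"
            raise ValueError("matrix is singular or nearly singular")
        if p != j:
            work[j], work[p] = work[p], work[j]
            lower[j], lower[p] = lower[p], lower[j]
            perm[j], perm[p] = perm[p], perm[j]

        # Row j of U (columns j+1..n-1), scaled by the pivot L[j][j].
        for c in range(j + 1, n):
            s = sum(lower[j][k] * upper[k][c] for k in range(j))
            upper[j][c] = (work[j][c] - s) / lower[j][j]

    return lower, upper, perm


def lu_solve(lower: Matrix, upper: Matrix, perm: List[int], b: List[float]) -> List[float]:
    """Solve A x = b given the factors of P*A = L*U."""
    n = len(b)
    # Forward substitution: L y = P b.
    y = [0.0] * n
    for i in range(n):
        y[i] = (b[perm[i]] - sum(lower[i][k] * y[k] for k in range(i))) / lower[i][i]
    # Back substitution: U x = y (U has ones on its diagonal, so no division).
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = y[i] - sum(upper[i][k] * x[k] for k in range(i + 1, n))
    return x


def invert(a: Matrix) -> Matrix:
    """Invert A by decomposing once, then solving for each column of the identity."""
    n = len(a)
    lower, upper, perm = crout_decompose(a)
    inverse = [[0.0] * n for _ in range(n)]
    for c in range(n):
        unit = [1.0 if r == c else 0.0 for r in range(n)]
        column = lu_solve(lower, upper, perm, unit)
        for r in range(n):
            inverse[r][c] = column[r]
    return inverse
```

For example, `invert([[4.0, 7.0], [2.0, 6.0]])` should return values close to `[[0.6, -0.7], [-0.2, 0.4]]`. The decomposition is done once and reused for every column, so the whole inversion costs O(n^3) operations, which is why decomposition-based methods scale far better than cofactor expansion.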

PMI offers free project management courses during COVID-19 quarantines

This is the first time that the group has offered these online training and consulting resources at no charge, said DePrisco. The Project Management for Beginners course introduces participants to the foundational knowledge needed to join a project team and offers guidance on starting down the path to a project management career. The Agile in the Project Management Office course walks participants through their role as a project management office director and presents a series of scenarios designed to improve their office's performance using agile principles and processes. The Business Continuity course offers lessons on rethinking work processes, which may be particularly helpful now as companies, their leaders, and their workers look for ways to keep operating during the pandemic. ... Project management skills can be extremely beneficial during emergencies such as the pandemic, he said. "Project management initiatives play an important role in preparing for these types of disruptions. All work is accomplished through programs and projects, and project managers are used to changing methods and approaches."

These hackers have been quietly targeting Linux servers for years

Linux is not typically a user-facing technology, so security companies tend to focus on it less, he explained. As a result, these hacking groups have zeroed in on that security gap and exploited it to steal intellectual property from targeted sectors for years without anyone noticing, he said. "It's critical for these servers to be up all the time; so what better place to put a root kit or a pervasive active tool than on a machine that's going to be turned on all the time?" said Cornelius. The attackers scan for Red Hat Enterprise Linux, CentOS, and Ubuntu Linux environments across a wide range of industries, attempting to identify unpatched servers. From there, it's simply a case of establishing persistence on the network with malware. Not only can this give the attackers access to sensitive information and data, but with the infection on the servers themselves, they can create a persistent back door into the network that lets them back in whenever they like, so long as the compromise isn't uncovered. The attackers are careful to do as little damage as possible to the networks to avoid detection, and therefore keep campaigns running for as long as possible, which might be years.

Is It Possible To Become A Successful Self-Taught Data Scientist?

Although a university degree is a great accomplishment, self-taught aspirants can take heart: a degree alone is not enough to land a good data science job. While a degree may lay a foundation for a career in this field, and may get you a job interview, it is not a key qualifying factor when applying for tech positions. Even if you are competing against applicants with relevant degrees, you can gain a competitive advantage by upskilling with the wealth of resources available online. What is more, self-study also signals a candidate’s motivation to succeed. But you first need to pin down what you must learn to make up for your lack of formal training. Data science is a broad discipline that comprises a wide range of jobs, from statisticians and machine learning (ML) experts to business analysts and data visualization experts. Since the skills required for each vary, it is important to first identify the skill sets you need to acquire, and then build a plan around them.

9 Security Podcasts Worth Tuning In To

The cybersecurity industry changes every day, sometimes multiple times a day, and it can be overwhelming for professionals to keep up with the constant flow of breaking news, new threats, defensive strategies, reports, mergers, valuations, product releases, and trends. Podcasts can help you stay in the loop by delivering the latest updates and analysis from experts across the industry. Some of the best security podcasts offer insight from practitioners, CISOs, analysts, and reporters who take a closer look at industry events and aim to educate listeners with digestible information and discussions with other security pros. Many cybersecurity podcasts offer informative takes on recent incidents and shed light on how current events, such as COVID-19, are affecting the IT security community. Others discuss specific parts of the industry, like the Dark Web or the relationship between CISOs and vendors. The handy thing about podcasts is that they help you stay on top of cybersecurity news and trends, and learn from the pros, when you're not sitting in front of a screen or attending a conference.

How to Integrate Security Into Your Application Infrastructure

Cequence describes the threats it addresses, stating that the web, mobile, and API-based apps that power organizations are also targets for relentless cyberattacks. These include automated bot attacks focused on business logic abuse (such as credential stuffing, site scraping, fake account creation, and more), as well as targeted attacks designed to exploit both known and unknown application vulnerabilities. Cequence Security stops these attacks with an AI-powered, container-based software platform that can be easily deployed on-premises or in the cloud, wherever your apps need to be protected. Matt told us, “We look at our customer’s web or application traffic and use machine learning algorithms to look for patterns of automation to determine if it is malicious. While doing this, we mustn’t introduce additional friction to the user experience. We collect telemetry and look at the patterns within the traffic. We watch for underlying behavior characteristics that may indicate potentially malicious traffic.”

Zero-day exploits increasingly commodified, say researchers

In new research published this week, FireEye said it had documented more zero-day exploitations in 2019 than in any of the previous three years, and although not every attack could be pinned on a known and tracked group, a wider range of tracked actors does seem to have gained access to these capabilities. The researchers said they had seen a significant uptick, over time, in the number of zero-days being leveraged by threat actors whom they suspect of being “customers” of private companies that supply offensive cyber capabilities to governments or law enforcement agencies. “We surmise that access to zero-day capabilities is becoming increasingly commodified based on the proportion of zero-days exploited in the wild by suspected customers of private companies,” they said. “Private companies are likely to be creating and supplying a larger proportion of zero-days than they have in the past, resulting in a concentration of zero-day capabilities among highly resourced groups.”

Chrome 81 released with initial support for the Web NFC standard

Plans to remove the TLS 1.0 and TLS 1.1 encryption protocols from Chrome, also initially scheduled for Chrome 81, are now delayed to Chrome 84. The delay is related to the COVID-19 outbreak: some critical government healthcare sites were still using TLS 1.0 and 1.1 to set up their HTTPS connections, and removing the two protocols could have cut some Chrome 81 users off from those sites altogether, something Google wanted to avoid. Today's Chrome 81 release marks the most turbulent release in Chrome's history. Because the browser maker had to shift features from version to version, and because the three-week Chrome 81 delay also disrupted Google's regular six-week release schedule, Google has taken a first-of-its-kind step and scrapped a Chrome version entirely. Google said the next version of Chrome is v83, and that work on v82 has been permanently abandoned.
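Chrome's change is a browser-side policy, but any TLS client can enforce the same floor. As a minimal sketch, assuming Python's standard `ssl` module (this is not how Chrome implements it), the following client context refuses to negotiate TLS 1.0 or 1.1; the helper `negotiated_version` is hypothetical and exists only for illustration.

```python
import socket
import ssl
from typing import Optional


def make_client_context() -> ssl.SSLContext:
    """Client context that verifies certificates and refuses TLS 1.0 and 1.1."""
    ctx = ssl.create_default_context()  # certificate and hostname checks enabled
    # Set explicitly so the policy does not depend on the interpreter's defaults.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx


def negotiated_version(host: str, port: int = 443) -> Optional[str]:
    """Hypothetical helper: connect to host and report the agreed TLS version.

    A server that only offers TLS 1.0/1.1 makes the handshake fail with ssl.SSLError.
    """
    ctx = make_client_context()
    with socket.create_connection((host, port), timeout=10) as sock:
        with ctx.wrap_socket(sock, server_hostname=host) as tls:
            return tls.version()
```

Calling `negotiated_version("example.com")` needs network access. Against a server still limited to TLS 1.0 or 1.1, it raises `ssl.SSLError` instead of connecting, which is the kind of hard failure Google delayed the removal to avoid.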

Quote for the day:

"Every great leader can take you back to a defining moment when they decided to lead." -- John Paul Warren