AI Transforming & Automating The Consumer Goods Industry

Using AI algorithms, machines equipped with intelligent automation can assess emerging production issues that are liable to degrade quality. When they detect a potential issue, they can automatically notify manufacturing personnel and may even execute corrective actions autonomously. By improving the customer experience, retailers can unlock entirely new approaches to customer engagement and interaction. With intelligent automation, they can identify customers’ anticipated needs at precise times and seize the right moment with the right idea in the pursuit of competitive advantage. The automation of customer experience processes is seeing somewhat less traction than other areas of intelligent automation. Today, brands and retailers have started to use AI-powered engines to trigger email campaigns automatically. A far more powerful use of this capability is to apply it to the order fulfillment process, enabling users to make purchases directly from within the campaign.

Corporate culture complicates Kubernetes and container collaboration

When it comes to navigating corporate culture, things get a bit difficult for Kubernetes and container proponents. For example, 40% of survey respondents cited a lack of internal alignment as a problem when selecting a Kubernetes distribution. Surprisingly, in some cases, business leaders want to get their hands in the process. Plus, there are many other hands involved in the decision -- 83% say more than one team is involved in choosing a Kubernetes distribution. The primary decision-maker varies from organization to organization, depending in part on whether Kubernetes is running in development or production. Development teams are the primary decision makers 38% of the time when Kubernetes is deployed only for development, while infrastructure teams are the primary decision makers 23% of the time in production environments. It's notable that C-level executives are involved 18% of the time. "This involvement is occurring because enterprises are choosing their next-generation platform, and that earns executive attention," the survey's authors relate. The survey also finds a significant disconnect between the views of upper-level company executives and developers: 46% of executives think the biggest impediment to developers is integrating new technology into existing systems.

Accelerating data-driven discoveries

Matz says SciDB did 1 billion linear regressions in less than an hour in a recent benchmark, and that it can scale well beyond that, which could speed up discoveries and lower costs for researchers who have traditionally had to extract their data from files and then rely on less efficient cloud-computing-based methods to apply algorithms at scale. “If researchers can run complex analytics in minutes and that used to take days, that dramatically changes the number of hard questions you can ask and answer,” Matz says. “That is a force-multiplier that will transform research daily.” Beyond life sciences, Paradigm4’s system holds promise for any industry dealing with multifaceted data, including earth sciences, where Matz says a NASA climatologist is already using the system, and industrial IoT, where data scientists consider large amounts of diverse data to understand complex manufacturing systems. Matz says the company will focus more on those industries next year. In the life sciences, however, the founders believe they already have a revolutionary product that’s enabling a new world of discoveries.
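To put the benchmark figure above in perspective, here is a rough back-of-envelope sketch in Python. It only restates the quoted numbers (1 billion regressions, under one hour) as a minimum sustained rate, and includes a plain closed-form least-squares fit to show what a single simple regression involves. The function names are illustrative assumptions and have nothing to do with how SciDB itself is implemented.

```python
# Figures quoted in the excerpt above.
TOTAL_REGRESSIONS = 1_000_000_000  # "1 billion linear regressions"
MAX_SECONDS = 60 * 60              # "less than an hour", so 3600 s is an upper bound


def min_throughput(total: int, max_seconds: int) -> float:
    """Lower bound on regressions per second if `total` finish within `max_seconds`."""
    return total / max_seconds


def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept (closed form).

    Illustrative only: one small regression of the kind being counted.
    """
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


rate = min_throughput(TOTAL_REGRESSIONS, MAX_SECONDS)
print(f"at least {rate:,.0f} regressions per second")
```

Because the benchmark finished in less than an hour, the real rate would be somewhat higher than this lower bound.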

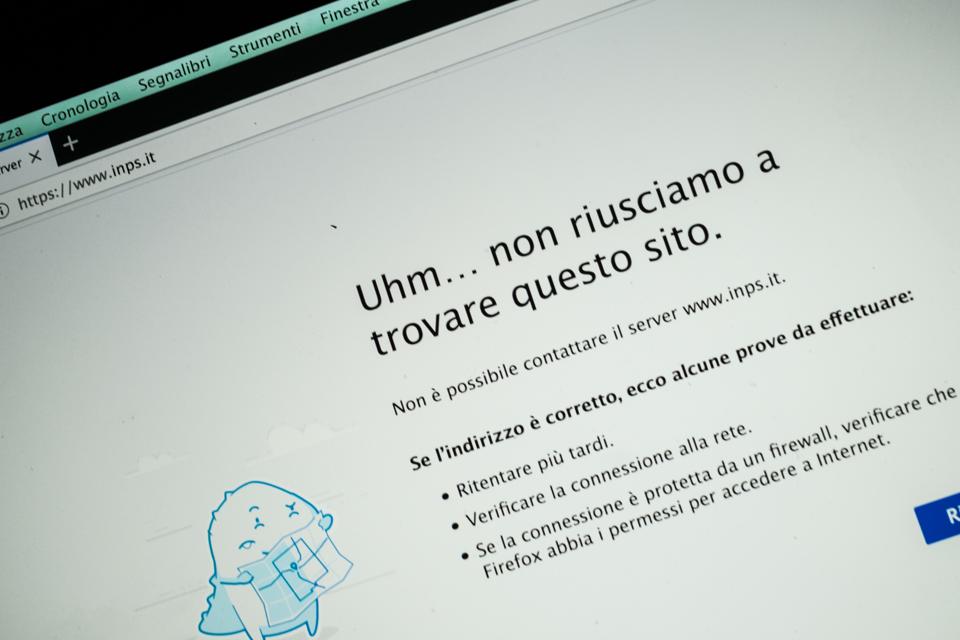

Cyber Attack Disrupts COVID-19 Payouts: Hackers Take Down Italian Social Security Site

We've already seen supposed "elite hackers" attacking the World Health Organization, cyber criminals hitting a COVID-19 vaccine testing facility with ransomware and healthcare workers being targeted with Windows malware using coronavirus information as the lure. Now, it has been reported, hackers have forced the Italian social security website to shut down for a period, as the most vulnerable in society started their claims for a €600 ($655) crisis payout. The general director of Italian welfare agency INPS, Pasquale Tridico, told the state broadcaster RAI on April 1 that there had been several hacker attacks across the previous few days. "They continued today, and we had to close the website," Tridico said. The attacks came at the same time as the site was receiving 100 application requests per second, according to Tridico. Italian police have been informed of the ongoing cyberattacks, and the ruling Democratic Party has suggested that national security services could be put on the case of finding out who is responsible.

What is a design sprint? A 5-day plan for improving products and services

Design sprints start with a team of around four to seven people, which is the recommended team size according to GV. Teams include a facilitator, designer, decision maker, product manager, engineer and someone from a relevant business unit. The decision maker on the team is often the CEO, especially at smaller companies or startups. A design sprint is intended to move quickly, lasting just five days, and it’s designed to spur ideas and create learning opportunities without having to build and launch a completed product or service. With a design sprint, you can get fast feedback, improve products and services and find new opportunities throughout the five-day sprint by creating a testable prototype. The prototype will allow your team to get a better sense of how customers and clients will react to the finished product, what needs to be changed and what customers enjoyed about the product or service. Design sprints are broken out into five major phases that take place over the five-day sprint. These phases are intended to help you develop the best team to tackle a project and to guide your business through the design sprint.

Distributed disruption: Coronavirus multiplies the risk of severe cyberattacks

When it comes to remote work, VPN servers turn into bottlenecks. Keeping them secure and available is a number-one IT priority. Hackers can launch DDoS campaigns on VPN services and deplete their resources, knocking out the VPN server and limiting its availability. The implications are clear: Since the VPN server is the gateway to a company’s internal network, an outage can keep all employees working remotely from doing their job, effectively cutting off the entire organization from the outside world. During an unprecedented time of peak traffic, the risk of a DDoS attack is growing exponentially. If the utilization of the available bandwidth is very high, it does not take much to cause an outage. In fact, even a tiny attack can become the last nail in the coffin. For instance, a VPN server or firewall can be taken down by a TCP blend attack with an attack volume as low as 1 Mbps. SSL-based VPNs are just as vulnerable to an SSL flood attack, as are web servers. Making matters worse, many organizations either use in-house hardware appliances or rely on their Internet carrier to ward off incoming attacks.
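The point about high bandwidth utilization can be illustrated with a small Python sketch. It models only link saturation: the spare bandwidth is the capacity times the unused fraction, and an attack saturates the link once its volume reaches that headroom. The 1 Gbps uplink and the utilization levels are hypothetical values chosen for illustration, and the sketch does not model other kinds of resource depletion on a VPN server or firewall, which is where very low-volume attacks like the one mentioned above would come in.

```python
def headroom_mbps(capacity_mbps: float, utilization: float) -> float:
    """Bandwidth (Mbps) still free on a link at the given utilization (0.0 to 1.0)."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    return capacity_mbps * (1.0 - utilization)


def saturates(capacity_mbps: float, utilization: float, attack_mbps: float) -> bool:
    """True if the attack volume alone uses up the remaining headroom."""
    return attack_mbps >= headroom_mbps(capacity_mbps, utilization)


# Hypothetical 1 Gbps uplink at increasing levels of legitimate traffic.
CAPACITY_MBPS = 1000.0
for utilization in (0.50, 0.90, 0.99):
    spare = headroom_mbps(CAPACITY_MBPS, utilization)
    print(f"utilization {utilization:.0%}: about {spare:.0f} Mbps of headroom")
```

The higher the legitimate load, the smaller the attack needed to fill what is left of the link.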

How to Prepare for Your Next Cybersecurity Compliance Audit

Reading a list of cybersecurity compliance frameworks is like looking at alphabet soup: NIST CSF, PCI DSS, HIPAA, FISMA, GDPR…the list goes on. It’s easy to be overwhelmed, and not only because of the acronyms. Many frameworks do not tell you where to start or exactly how to become compliant. Cybersecurity best practices from the Center for Internet Security (CIS) provide prioritized, prescriptive guidance for a strong cybersecurity foundation. And, they support your efforts toward compliance with the aforementioned alphabet soup. CIS offers multiple resources to help organizations get started with a compliance plan that also improves cyber defenses. Each of these resources is developed through a community-driven, consensus-based process. Cybersecurity specialists and subject matter experts volunteer their time to ensure these resources are robust and secure. What they are: The CIS Controls approach cyber defense with prioritized and prescriptive security guidance. There are 20 top-level CIS Controls and 171 Sub-Controls, prioritized into three Implementation Groups (IGs). The CIS Controls IGs prioritize cybersecurity actions based on organizational maturity level and available resources.

Trustworthy AI must be designed and trained to follow a fair, consistent process and make fair decisions. It must also include internal and external checks to reduce discriminatory bias. Bias is an ongoing challenge for humans and society, not just AI. However, the challenge is even greater for AI because it lacks a nuanced understanding of social standards—not to mention the extraordinary general intelligence required to achieve “common sense”— potentially leading to decisions that are technically correct but socially unacceptable. AI learns from the data sets used to train it, and if those data sets contain real-world bias then AI systems can learn, amplify, and propagate that bias at digital speed and scale. For example, an AI system that decides on-the-fly where to place online job ads might unfairly target ads for higher paying jobs at a website’s male visitors because the real-world data shows men typically earn more than women. Similarly, a financial services company that uses AI to screen mortgage applications might find its algorithm is unfairly discriminating against people based on factors that are not socially acceptable, such as race, gender, or age. In both cases, the company responsible for the AI could face significant consequences, including regulatory fines and reputation damage.
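One simple form an internal check could take is comparing how often a system's decisions favor each group. The Python sketch below is a minimal illustration under stated assumptions: the group labels and the sample decisions are made up, the function names are hypothetical, and a rate comparison like this is only one of many possible fairness measures, not a method described in the excerpt above.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Map each group to its approval rate.

    `decisions` is an iterable of (group, approved) pairs, where `approved` is a bool.
    """
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        if ok:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}


def rate_ratio(rates):
    """Lowest approval rate divided by the highest; 1.0 means equal rates."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody approved in any group, so the rates are equal
    return min(rates.values()) / highest


# Made-up decisions for two hypothetical groups, "A" and "B".
sample = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 4 + [("B", False)] * 6
)
rates = selection_rates(sample)
print(rates, rate_ratio(rates))
```

A ratio well below 1.0 does not prove unfair treatment on its own, but it flags a gap that a human reviewer would want to investigate.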

AI runs smack up against a big data problem in COVID-19 diagnosis

It's simple in theory to identify what a computer should look for. An X-ray or a CT scan will show formations in the lung that are associated with a number of respiratory conditions including pneumonia. The feature in an image most often linked to a COVID-19 case, although not exclusive to COVID-19, is what's called "ground-glass opacity," a kind of haze hovering in an area of the lung, caused by a build-up of fluid. Opacities and other anomalies can show up even in asymptomatic COVID-19 patients. What slows things down is that neural networks have to be tuned to pick out opacities in the pixels of a high-resolution image, and that takes data. It also takes time working with physicians who know what to look for in the data. Both data and expertise are in short supply at the outset of a pandemic. The neural network programs that Xu and others are deploying have been refined by computer scientists to a high degree of sophistication over many years and they are providing ready tools with which to build new systems. The system that Xu and team built combines two deep learning neural networks, a "ResNet-50," the standard for many years for image recognition, and something called "UNet++" that was developed at Arizona State University in 2018 for the specific purpose of processing chest CT scans.

Code Search Now Available to Browse Google's Open-Source Projects

Code Search is used by Google developers to search through Google's huge internal codebase. Now, Google has made it accessible to everyone to explore and better understand Google's open source projects, including TensorFlow, Go, Angular, and many others. Code Search aims to make it easier for developers to move through a codebase, find functions and variables using a powerful search language, readily locate where those are used, and so on. Code Search provides a sophisticated UI that supports suggest-as-you-type help that includes information about the type of an object, the path of the file, and the repository to which it belongs. This kind of behaviour is supported through code-savvy textual searches that use a custom search language. For example, to search for a function foo in a Go file, you can use lang:go function:foo. For repositories that include cross-reference information, Code Search is also able to display richer information, including a list of places from where a given symbol is referenced. Code Search repositories that provide cross-reference information include Angular, Bazel, Go, etc.

Quote for the day:

"Change your friends if they are holding you back - pick the new ones with caution and care." -- Tim Fargo
