Cloud computing could look quite different in a few years

Everything may run on the cloud, but running multiple clouds at the same time can still pose challenges, such as compliance with data regulation. Slack, the fastest-growing Software-as-a-Service company on the planet, has already shown how integration can work, and its success is reflected in its trial-to-paid conversion rate, which stands at 30 percent. Slack integrates with other apps such as Trello, Giphy, and Simple Poll so users can access all of them from a single platform. This is something we’ll see increasingly in cloud computing as players large and small look to help businesses and individuals become more efficient and productive. As more and more of life happens in the cloud, the term “cloud” could disappear altogether (and companies like mine, with “cloud” in their name, may need to rethink their branding). What we now call “cloud computing” will simply be “computing.” And maybe, by extension, “as-a-Service” will disappear, too, as SaaS replaces traditional software. In tech, you can never be certain of the direction of travel. Things change quickly and in unexpected ways, and some of the changes we’ve seen over even the past 10 years would have been inconceivable just a few years before.

Diversifying the high-tech talent pool

Entrepreneurs always find a way. I’ve never considered being a woman or a Latina to be an obstacle. In fact, I usually consider it to be quite an asset, in part due to the incredible entrepreneurial culture of the Hispanic community in general and my family in particular. There are so many challenges to starting your own business at 25 years old, including insufficient access to affordable capital, top talent, and customers. These obstacles can be overcome only through consistent growth; that in turn can be accomplished only by consistently reinvesting in Pinnacle. In many ways, everything we have achieved has been made possible only by the simple philosophy of reinvesting in the business, which is a message I share with other entrepreneurs every chance I get. ... The successful firms, Pinnacle included, have embraced these technologies and adapted their business models and service offerings accordingly. Others have chosen to sell, resulting in our industry consolidating somewhat over the years. No matter what, the one thing we will always be able to count on is change, so we’re making the investments today to be ready for tomorrow.

What are edge computing challenges for the network?

In the ongoing back and forth between centralized and decentralized IT, we are beginning to see the limitations of a centralized IT that relies on hundreds or thousands of industry-standard servers running a host of applications in consolidated data centers. New types of workloads, distributed computing and the advent of IoT have fueled the rise of edge computing. ... When compute resources and applications are centralized in a data center, enterprises can standardize both technical security and physical security. It's possible to build a wall around the resources for easier security. But edge computing forces businesses to grapple with enforcing the same network security models and the same physical security parameters for more remote servers. The challenge is that the security footprint and traffic patterns are all over the place. ... The need for edge computing typically emerges because disparate locations are collecting large amounts of data. Enterprises need an overall data protection strategy that can encompass all this data.

Empowering robotic process automation with a bot development framework

The bot development framework is a methodology that standardizes bot development throughout the organization. It is a template or skeleton that provides generic functionality and can be selectively changed by additional user-written code. It adheres to the design and development guidelines defined by the Center of Excellence (CoE), performs testing, and provides application access. This speeds up the development process and makes it simple and convenient enough for business units to create bots with little or no help from the RPA team. It helps save time in development, testing, building, deployment and execution. ... Define frequently changing variables in a central configuration file. Common data such as application URLs, orchestrator queue names, maximum retry numbers, timeout values, asset names, etc. are prone to frequent updates. It is recommended to create a “configuration file” to store this data in a centralized location. This will increase process efficiency by saving the time needed to access multiple applications.
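The central configuration file idea can be illustrated with a minimal Python sketch. The excerpt does not name a file format or an RPA tool, so the JSON format, the file name `bot_config.json`, the key names, and the default values below are all assumptions made for illustration.

```python
import json
import tempfile
from pathlib import Path

# Hypothetical defaults; a real framework would define its own keys and values.
DEFAULTS = {"max_retries": 3, "timeout_seconds": 30}


def load_config(path):
    """Read the shared JSON config and fill in defaults for any missing keys."""
    with open(path, encoding="utf-8") as handle:
        loaded = json.load(handle)
    # Values from the file take precedence over the defaults.
    return {**DEFAULTS, **loaded}


def write_example_config(directory):
    """Write a sample config file so the sketch can run end to end."""
    path = Path(directory) / "bot_config.json"
    sample = {
        "app_url": "https://example.invalid/orders",  # placeholder URL
        "queue_name": "invoice-queue",  # placeholder queue name
        "max_retries": 5,
    }
    path.write_text(json.dumps(sample, indent=2), encoding="utf-8")
    return path


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        config = load_config(write_example_config(tmp))
        print(config["max_retries"], config["timeout_seconds"])
```

Every bot reads the same file through `load_config`, so a changed URL or retry limit is edited in one place rather than in each bot.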

Experts: Enterprise IoT enters the mass-adoption phase

That’s not to imply that there aren’t still huge tasks facing both companies trying to implement their own IoT frameworks and the creators of the technology underpinning them. For one thing, IoT tech requires a huge array of different sets of specialized knowledge. “That means partnerships, because you need an expert in your [vertical] area to know what you’re looking for, you need an expert in communications, and you might need a systems integrator,” said Trickey. Phil Beecher, the president and CEO of the Wi-SUN Alliance (the acronym stands for Smart Ubiquitous Networks, and the group is heavily focused on IoT for the utility sector), concurred with that, arguing that broad ecosystems of different technologies and different partners would be needed. “There’s no one technology that’s going to solve all these problems, no matter how much some parties might push it,” he said. One of the central problems – IoT security – is particularly dear to Beecher’s heart, given the consequences of successful hacks of the electrical grid or other utilities.

What does Arm's new N1 architecture mean for Windows servers?

The AWS A1 Arm instances are for scale-out workloads like microservices, web hosting and apps written in Ruby and Python. Like Cloudflare's workloads, those are tasks that benefit from the massive parallelisation and high memory bandwidth that Arm provides. Inside Azure, Windows Server on Arm is running not virtual machines — because emulating x86 trades off performance for low power — but highly parallel PaaS workloads like Bing search index generation, storage and big data processing. For the first time, an Arm-based supercomputer (built by HPE with Marvell ThunderX2 processors) is on the list of the top 500 systems in HPC — another highly parallel workload. And the next-generation Arm Neoverse N1 architecture is designed specifically for servers and infrastructure. Part of that is Arm delivering a whole server processor reference design, not just a CPU spec, making it easier to build N1 servers. The first products based on N1 should be available in late 2019 or early 2020, with a second generation following in late 2020 or early 2021.

The World Economic Forum wants to develop global rules for AI

The issue is of paramount importance given the current geopolitical winds. AI is widely viewed as critical to national competitiveness and geopolitical advantage. The effort to find common ground is also important considering the way technology is driving a wedge between countries, especially the United States and its big economic rival, China. “Many see AI through the lens of economic and geopolitical competition,” says Michael Sellitto, deputy director of the Stanford Institute for Human-Centered AI. “[They] tend to create barriers that preserve their perceived strategic advantages, in access to data or research, for example.” A number of nations have announced AI plans that promise to prioritize funding, development, and application of the technology. But efforts to build consensus on how AI should be governed have been limited. This April, the EU released guidelines for the ethical use of AI. The Organisation for Economic Co-operation and Development (OECD), a coalition of countries dedicated to promoting democracy and economic development, this month announced a set of AI principles built upon its own objectives.

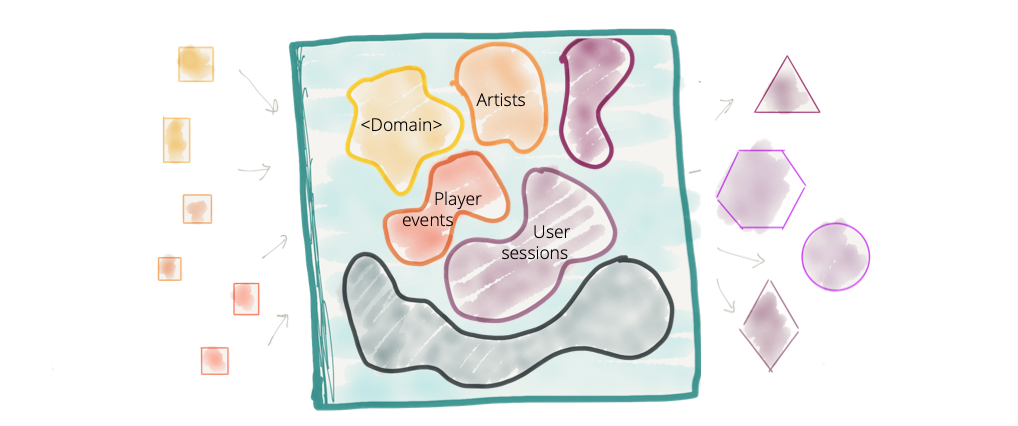

Data Architect's Guide to Containers

From the perspective of the analyst or data scientist, containers are valuable for a number of reasons. For one thing, container virtualization has the potential to substantively transform the means by which data is created, exchanged, and consumed in self-service discovery, data science, and other practices. The container model permits an analyst to share not only the results of her analysis, but the data, transformations, models, etc. she used to produce it. Should the analyst wish to share this work with her colleagues, she could, within certain limits, encapsulate what she’s done in a container. In addition to this, containers claim to confer several other distinct advantages—not least of which is a consonance with DataOps, DevOps and similar continuous software delivery practices—that I will explore in this series. To get a sense of what is different and valuable about containers, let’s look more closely at some of the other differences between containers, VMs, and related modes of virtualization. ... Unlike a VM image, the ideal container does not have an existence independent of its execution. It is, rather, quintessentially disposable in the sense that it is compiled at run time from two or more layers, each of which is instantiated in an image. Conceptually, these “layers” could be thought of as analogous to, e.g., Photoshop layers: by superimposing a myriad of layers, one on top of the other, an artist or designer can create a rich final image.
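The layering idea can be mimicked in a few lines of Python. This is only an analogy, not how a container runtime works internally: real runtimes stack filesystem layers, whereas the sketch stacks plain dictionaries, and every path and layer name in it is invented for illustration.

```python
from collections import ChainMap

# Each hypothetical layer maps file paths to contents; all names are illustrative.
base_layer = {"/etc/os-release": "minimal-linux", "/usr/bin/python": "python-binary"}
library_layer = {"/opt/libs/stats": "stats-library-v1"}
analysis_layer = {"/work/model.pkl": "trained-model", "/opt/libs/stats": "stats-library-v2"}


def compose(*layers):
    """Stack layers bottom-to-top; files in upper layers shadow those beneath."""
    # ChainMap looks in its first mapping first, so the top layer must come first.
    return ChainMap(*reversed(layers))


view = compose(base_layer, library_layer, analysis_layer)
print(view["/opt/libs/stats"])  # the version from the topmost layer
print(sorted(view))  # every path visible in the combined view
```

The combined view is assembled on demand and can be thrown away without touching the layers beneath it, which is the sense in which the excerpt calls a container disposable.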

Business leaders failing to address cyber threats

Despite this, the majority (71%) of the C-suite concede that they have gaps in their knowledge when it comes to some of the main cyber threats facing businesses today. This includes malware (78%), despite the fact that 70% of businesses admit they have found malware hidden on their networks for an unknown period of time. When a security breach does happen, in the majority of businesses surveyed, it is first reported to the security team (70%) or the executive/senior management team (61%). In less than half of cases is it reported to the board (40%). This is unsurprising, the report said, in light of the fact that one-third of CEOs state that they would terminate the contract of those responsible for a data breach. The report also reveals that only half of CISOs say they feel valued by the rest of the executive team from a revenue and brand protection standpoint, while nearly a fifth (18%) of more than 400 CISOs questioned in a separate poll say they believe the board is indifferent to the security team or actually sees them as an inconvenience.

Executive's guide to prescriptive analytics

Any data that creates a picture of the present can be used to create a descriptive model. Common types of data are customer feedback, budget reports, sales numbers, and other information that allows an analyst to paint a picture of the present using data about the past. A thorough and complete descriptive model can then be used in predictive analysis to build a model of what's likely to happen in the future if the organization's current course is maintained without any change. Predictive models are built using machine learning and artificial intelligence, and take into account any potential variables used in a descriptive model. Like a descriptive analysis, a predictive model can be as broadly or as narrowly focused as a business needs it to be. Predictive models are useful, but they aren't designed to do anything outside of predicting current trends into the future. That's where prescriptive analytics comes in. A good prescriptive model will account for all potential data points that can alter the course of business, make changes to those variables, and build a model of what's likely to happen if those changes are made.
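The progression from descriptive to predictive to prescriptive analysis can be sketched in Python. The sketch is a toy under stated assumptions: the sales figures are invented, a simple average-change trend stands in for the machine learning models the excerpt mentions, and the candidate actions and their percentage lifts are hypothetical.

```python
from statistics import mean

# Hypothetical monthly sales figures (units sold), invented for illustration.
sales = [100, 110, 120, 130]


def describe(history):
    """Descriptive step: summarise what has already happened."""
    return {"average": mean(history), "latest": history[-1]}


def predict(history, months_ahead):
    """Predictive step: extend the average month-over-month change forward."""
    average_change = (history[-1] - history[0]) / (len(history) - 1)
    return history[-1] + average_change * months_ahead


def prescribe(history, months_ahead, candidate_lifts):
    """Prescriptive step: apply each hypothetical change and pick the best forecast."""
    baseline = predict(history, months_ahead)
    outcomes = {name: baseline * (1 + lift) for name, lift in candidate_lifts.items()}
    best = max(outcomes, key=outcomes.get)
    return best, outcomes[best]


if __name__ == "__main__":
    print(describe(sales))
    print(predict(sales, months_ahead=2))
    # Assumed effects of two made-up actions on the forecast.
    print(prescribe(sales, 2, {"discount": 0.10, "ads": 0.05}))
```

The prescriptive step reuses the predictive forecast and varies the inputs, mirroring the excerpt's description of changing variables and modelling what is likely to happen as a result.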

Quote for the day:

"The question isn't who is going to let me; it's who is going to stop me." -- Ayn Rand