Protecting PHI on Devices: Essential Steps

"Anyone with physical access to electronic computing devices and media, including malicious actors, potentially has the ability to change configurations, install malicious programs, change information or access sensitive information," the Department of Health and Human Services notes in September edition of its monthly e-newsletter advisory. While HIPAA requires covered entities and business associates to limit physical access to their electronic information systems and the facilities in which they are housed, organizations are also required to implement policies and procedures that govern the receipt and removal of hardware and electronic media containing electronic PHI into and out of an organization's facilities, as well as their movement within a facility, the HHS Office for Civil Rights notes. Implementing processes to govern the movement of electronic devices and media may vary depending on the type of device and media, the agency states. ... Organizations can use various methods to govern and track the movement of electronic devices and media, the agency explains.

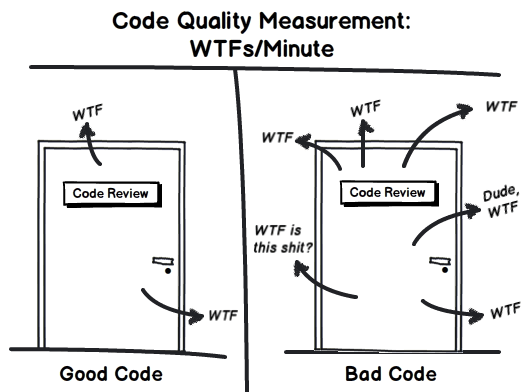

5 top strategies to make development cycles more efficient

Transparency and project visibility are important. Both are required for teams to learn, research and implement changes. A team can respond to change only if everyone on it, from development to administration, is on the same page. Daily and weekly meetings are structured within larger time frames, sometimes referred to as sprints. These time frames define overarching goals. If implemented at regular intervals, these sprints can be a great time to reframe, reassess, and retune the development process. It is important in modern software development environments to keep a steady, methodical pace. Only through continual communication and review is this possible. The needs of the client, customer or user must come before implementing tools. Often we fall in love with certain tools and processes within software development, but it is important to understand that these tools and processes are useful only insofar as they help empower our clients, customers and users. Anything that hinders customer satisfaction must be cut out.

The use cases, challenges and benefits behind retail AI

Decades on, retailers now collect so much customer data from so many different people that it is impossible to offer this personalised service without the help of technology. As pointed out by Brian Kalms, partner and retail lead at consultancy Elixirr, some retailers have so much data there is no longer the option of analysing it by hand, especially when adding new online ventures into the mix. “Historically, when you went into a store, you didn’t identify yourself,” he says. “Online brands know who you are, so retailers are going to have to learn to be data-savvy, and that’s one of the first applications of AI – it’s been in the form of bots and communications, and it’s moving into data analysis.” Where retailers used to categorise their customers in a “simplistic way”, now data can be used to better understand customers individually. For example, using old customer demographics based on socio-economic background, earnings and gender, a consumer who buys high-end food but “value” tissues should not exist, but we know this isn’t the case.

5 Tips for Integrating Security Best Practices into Your Cloud Strategy

Agility, resilience, and speed are baked into the development of every cloud implementation; they are why organizations adopt cloud-first strategies. But without the proper tools, sys admins can't effectively manage and protect their evolving cloud landscape, negating these benefits. As you plan your cloud strategy, the right tools and a detailed road map are essential for supporting a successful transition. Start by assuming that at some point, if not already, some of your workload will move to the public cloud, so you'll really be managing a hybrid environment. Next, it's highly likely that the people supporting your data center will also support your cloud, so to avoid misconfigurations and minimize complexity, adopt management and security solutions that support hybrid cloud scenarios. It's also likely your environment will evolve to include more than one cloud service. Whether through a merger or acquisition, adopted in a development lab or acquired elsewhere, you may be faced with a combination of Microsoft Azure, Amazon Web Services, and/or Google cloud environments.

Why your company needs an open source program office

We seem to be very confused about what constitutes an "open source company." Tobie Langel has asked if Mozilla and Microsoft are open source companies. The majority (78%) think Mozilla is, and an almost equivalent percentage (67%) think Microsoft is not. Yet, Microsoft contributes orders of magnitude more open source code than Mozilla. The reality is that both organizations qualify as "open source companies." Hopefully yours does, too. ... It would be tempting to think that an open source program office is a lagging indicator of open source activity, but in my experience it's actually a leading indicator, and a causal factor. As my colleagues Fil Maj and Steve Gill presented at the recent Open Source Summit in Vancouver, a good open source program can remove roadblocks to participation. We've seen Adobe go from a top-32 contributor (based on active GitHub contributors) to top-16 in a year, with a refactored contribution process a major driver of that change.

Standard to protect against BGP hijack attacks gets first official draft

Back in October 2017, two US government agencies, the aforementioned NIST and the Department of Homeland Security (DHS) Science and Technology Directorate, started a joint project named Secure Inter-Domain Routing (SIDR) with the explicit purpose of securing the BGP protocol from such attacks. "The overall defensive effort will use cryptographic methods to ensure routing data travels along an authorized path between networks," the NCCoE at NIST said in a press release at the time. "There are three essential components of the IETF SIDR effort: The first, Resource Public Key Infrastructure (RPKI), provides a way for a holder of a block of internet addresses--typically a company or cloud service provider--to stipulate which networks can announce a direct connection to their address block; the second, BGP Origin Validation, allows routers to use RPKI information to filter out unauthorized BGP route announcements, eliminating the ability of malicious parties to easily hijack routes to specific destinations.
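The origin-validation step described in the excerpt can be illustrated with a minimal Python sketch. It is not the NIST or IETF reference implementation: the `ROA` record, the `validate_origin` function and the sample prefixes and AS numbers (taken from the documentation-reserved ranges) are assumptions made for illustration. The three outcomes follow the usual valid / invalid / not-found convention, where a route is valid only if some authorization covers its prefix, names its origin network, and allows its prefix length.

```python
import ipaddress
from typing import Iterable, NamedTuple


class ROA(NamedTuple):
    """Hypothetical, simplified route origin authorization record."""
    prefix: str      # address block the holder controls
    max_length: int  # longest prefix length the holder allows to be announced
    asn: int         # network (AS number) authorized to originate the block


def validate_origin(route_prefix: str, origin_asn: int, roas: Iterable[ROA]) -> str:
    """Classify a BGP announcement as 'valid', 'invalid' or 'not-found'."""
    route = ipaddress.ip_network(route_prefix)
    covered = False
    for roa in roas:
        roa_net = ipaddress.ip_network(roa.prefix)
        # Skip authorizations for a different IP version or an unrelated block.
        if route.version != roa_net.version or not route.subnet_of(roa_net):
            continue
        covered = True
        if roa.asn == origin_asn and route.prefixlen <= roa.max_length:
            return "valid"
    # Covered by some authorization but matched by none: likely a hijack or leak.
    return "invalid" if covered else "not-found"


# Illustrative data only: documentation prefixes and documentation AS numbers.
roas = [ROA(prefix="192.0.2.0/24", max_length=24, asn=64500)]
for prefix, asn in [("192.0.2.0/24", 64500), ("192.0.2.0/24", 64501)]:
    print(prefix, asn, validate_origin(prefix, asn, roas))
```

A router applying this check would typically drop or de-prefer the announcements classified as invalid, which is how unauthorized route announcements get filtered out.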

How the TOGAF Standard Serves Enterprise Architecture

Businesses thrive off change to deliver new products and services to earn revenue and stay relevant. But throughout a business’s lifetime, new systems are created, mergers require system integration or consolidation, new technologies are adopted for a competitive edge, and more systems need integration to share information. A well-defined and governed EA practice is critically important at an organizational level to confront, handle and manage these technological and computing complexities. Without an EA practice, there could be disconnects between systems, inconsistencies in solutions, miscommunications among product and engineering teams, duplication of engineering efforts and erosion of an organization’s architecture and solution quality. Let us use a product startup company as an example. This startup could experience surging growth, rapidly advancing from nascent to emergent. The business and technology are experiencing brisk change. Without an EA practice, or at least some level of architecture guidance, the startup may quickly find its systems in disparity and unable to share information.

How the industry expects to secure information in a quantum world

QLabs is focusing in particular on applications in cybersecurity and communications and scooping up funding from the Australian government to help it do that at a Defence-grade level. Today, commercial exchange of information is protected primarily via public key infrastructure (PKI), with the security of PKI reliant on the computational complexity of certain mathematical operations. Sharma said that essentially, the system is reliant on mathematical problems that are easy to do one way, but difficult to reverse in order to decrypt -- and that's what cybersecurity currently relies on. One such system used for PKI exchange is the RSA algorithm. "The mathematics of the RSA key exchange will be broken once we have a quantum computer because it will be able to do the reverse calculation much faster than we can with conventional computers, even supercomputers," Sharma explained. "That's where the threat arises ... when we look forward we need to recognise that certainly within the next decade, most people would contend, that we'd have a quantum computer available at a useful scale.
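The "easy one way, difficult to reverse" asymmetry can be seen in a toy RSA example in Python. This is a sketch under loud assumptions: the primes, exponent and message are tiny illustrative values, and `factor_semiprime` is a hypothetical brute-force helper that works only because the modulus is so small. Real keys use moduli of 2048 bits or more, for which this search is infeasible on conventional hardware; the concern raised in the excerpt is that a large quantum computer could perform the reverse step (factoring) efficiently.

```python
from typing import Tuple


def factor_semiprime(n: int) -> Tuple[int, int]:
    """Brute-force trial division; practical only for toy-sized moduli."""
    f = 2
    while f * f <= n:
        if n % f == 0:
            return f, n // f
        f += 1
    raise ValueError("n has no nontrivial factor")


# Key owner: multiplying two primes is the easy direction.
p, q = 61, 53            # toy primes, illustration only
n = p * q                # public modulus
e = 17                   # public exponent

# Sender: encrypts a message using only the public values (n, e).
message = 65
ciphertext = pow(message, e, n)

# Attacker: reversing the multiplication (factoring n) reveals the private key.
fp, fq = factor_semiprime(n)
d = pow(e, -1, (fp - 1) * (fq - 1))   # modular inverse, Python 3.8+
recovered = pow(ciphertext, d, n)
print(recovered == message)
```

Everything the attacker needs beyond the public key comes from the factoring step, which is why the difficulty of that step carries the whole scheme.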

The deep-learning revolution: How understanding the brain will let us supercharge AI

Sejnowski compares the neural networks of today to the early steam engines developed by the engineer James Watt at the dawn of the Industrial Age - remarkable tools that we know work but are uncertain how. "This is exactly what happened in the steam engines. 'My god, we've got this artifact that works. There must be some explanation for why it works and some way to understand it'. "There's a tremendous amount of theoretical mathematical exploration occurring to really try to understand and build a theory for deep learning." If research into deep learning follows the same trajectory as that spurred by the steam engine, Sejnowski predicts society is at the start of a journey of discovery that will prove transformative, citing how the first steam engines "attracted the attention of the physicists and mathematicians who developed a theory called thermodynamics, which then allowed them to improve the performance of the steam engine, and led to many innovative improvements that continued over the next hundred years, that led to these massive steam engines that pulled trains across the continent."

Digital Payments Security: Lessons From Canada

Canada, which has a head start on the adoption of digital payments, has learned some valuable security lessons that could be beneficial to the U.S., says Gord Jamieson of Visa. "If we look at Canada itself as a market, we're probably one of the leading countries when it comes to the adoption and usage of digital payments," says Jamieson, head of payment system risk for Visa Canada. "Seventy percent of our Canadian personal consumption expenditure is conducted using digital payments." ... "We see tokenization as basically the key to addressing fraud within this space," he says. "Tokenization is going to take that account data out of the mix. It's going to be replaced by a token - a proxy value - that today would go through the [payment] rails the same way as a normal transaction for authorization. ... And the beauty of a token is that token is unique to that environment and if it gets compromised, then you simply replace the token."
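The token-as-proxy idea Jamieson describes can be sketched in a few lines of Python. This is a toy in-memory model, not Visa's token service or any real payment API: the `TokenVault` class, its method names and the `tok_` prefix are assumptions for illustration, and a production vault would add access control, persistence and per-environment (domain) restrictions.

```python
import secrets


class TokenVault:
    """Toy in-memory token vault (hypothetical; illustration only)."""

    def __init__(self) -> None:
        self._token_to_account = {}

    def tokenize(self, account_number: str) -> str:
        """Issue a random proxy value that stands in for the account number."""
        token = "tok_" + secrets.token_hex(8)
        self._token_to_account[token] = account_number
        return token

    def detokenize(self, token: str) -> str:
        """Resolve a token back to the account; only the vault can do this."""
        return self._token_to_account[token]

    def reissue(self, compromised_token: str) -> str:
        """Retire a compromised token and issue a fresh one for the same account."""
        account_number = self._token_to_account.pop(compromised_token)
        return self.tokenize(account_number)


vault = TokenVault()
token = vault.tokenize("4111111111111111")   # well-known test card number
new_token = vault.reissue(token)             # old token no longer resolves
print(vault.detokenize(new_token) == "4111111111111111")
```

Because the merchant environment only ever holds the token, a breach there exposes a value that can be retired without reissuing the underlying account.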

Quote for the day:

"All leadership takes place through the communication of ideas to the minds of others." -- Charles Cooley