A Brief History of Data Ethics

The roots of the practice of data ethics can be traced back to the mid-20th

century when concerns about privacy and confidentiality began to emerge

alongside the growing use of computers for data processing. The development of

automated data collection systems raised questions about who had access to

personal information and how it could be misused. Early ethical discussions

primarily revolved around protecting individual privacy rights and ensuring the

responsible handling of sensitive data. One pivotal moment came with the

articulation of the Fair Information Practice Principles (FIPPs) in the United

States in the 1970s. These principles, which emphasized transparency,

accountability, and user control over personal data, laid the groundwork for

modern data protection laws and influenced ethical debates globally. ... Ethical

guidelines such as those proposed by the European Union’s General Data

Protection Regulation (GDPR) emphasize the importance of informed consent,

limiting the collection of data to its intended use, and data minimization. All

these concepts are part of an ethical approach to data and its usage.

Collaborative AI in Building Architecture

As a design practice fascinated by the practical deployment of AI, we can’t

help but be reminded of the early days of the personal computer, as this also

had a high impact on the design of the workplace. Back in the 1980s, most

computers were giant, expensive mainframes that only large companies and

universities could afford. But then, a few visionary companies started putting

computers on desktops: first in workplaces, then in schools and finally in homes.

Suddenly, computing power was accessible to everyone, but it needed different

spaces. ... As with any powerful new tool, AI also brings with it profound

challenges and responsibilities. One significant concern is the potential for

AI to perpetuate or even amplify biases present in the data it is trained on,

leading to unfair or discriminatory outcomes. AI bias is already prevalent and

it is crucial we learn how to teach AI to discern bias. Not so easy. AI could

also be used maliciously, e.g. to create deepfakes or spread misinformation.

There are also legitimate concerns about the impact of AI on jobs and the

workforce, but equally how it improves and inspires that workforce.

The Deeper Issues Surrounding Data Privacy

Corporate legal departments will continue to draft voluminous agreement

contracts packed with fine print provisions and disclaimers. CIOs can’t avoid

this, but they can make a case to clearly present to users of websites and

services how and under what conditions data is collected and shared. Many

companies are doing this—and are also providing "Opt Out" mechanisms for users

who are uncomfortable with the corporate data privacy policy. That said,

taking these steps can be easier said than done. There are the third-party

agreements that upper management makes that include provisions for data

sharing, and there is also the issue of data custody. For instance, if you

choose to store some of your customer data on a cloud service and you no

longer have direct custody of your data, and the cloud provider experiences a

breach that compromises your data, whose fault is it? Once again, there are no

ironclad legal or federal mandates that address this issue, but insurance

companies do tackle it. “In a cloud environment, the data owner faces

liability for losses resulting from a data breach, even if the security

failures are the fault of the data holder (cloud provider),” says Transparity

Insurance Services.

A survival guide for data privacy in the age of federal inaction

First, organizations should map or inventory their data to understand what

they have. By mapping and inventorying data, organizations can better

visualize, contextualize and prioritize risks. And, by knowing what data you

have, not only can you manage current privacy compliance risks, but you can

also be better prepared to respond to new requirements. As an example, those

data maps can allow you to see the data flows you have in place where you are

sharing data – a key to accurately reviewing your third-party risks. In

addition to helping you prepare for existing and new privacy laws, mapping

lets organizations identify their data flows and minimize risk exposure or

compromise, because you better understand where you are

distributing your data. Secondly, companies should think through how to

operationalize priority areas to embed them in your business. This might be

through training of privacy champions and adopting technology to automate

privacy compliance obligations such as implementing an assessments program

that allows you to better understand data-related impact.
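
As a rough illustration of the data-mapping step described above, the sketch below records data flows and groups the categories of data shared with each third party. The `DataFlow` fields, the vendor names, and the category labels are all hypothetical and chosen only for this example; a real inventory would carry far more detail.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataFlow:
    dataset: str        # what the data is, e.g. "customer_emails"
    category: str       # sensitivity label, e.g. "contact" or "payment"
    destination: str    # system or vendor that receives the data
    third_party: bool   # True when the destination is outside the organization


def third_party_exposure(flows):
    """Group the data categories shared with each third-party destination."""
    exposure = {}
    for flow in flows:
        if flow.third_party:
            exposure.setdefault(flow.destination, set()).add(flow.category)
    return exposure


# Hypothetical inventory entries, for illustration only.
inventory = [
    DataFlow("customer_emails", "contact", "crm-internal", False),
    DataFlow("customer_emails", "contact", "mail-vendor", True),
    DataFlow("card_tokens", "payment", "billing-vendor", True),
    DataFlow("support_tickets", "contact", "billing-vendor", True),
]

for vendor, categories in sorted(third_party_exposure(inventory).items()):
    print(vendor, sorted(categories))
```

Even a flat list like this makes it possible to ask which vendors receive which kinds of data, which is the starting point for a third-party risk review.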

The Struggle To Test Microservices Before Merging

End-to-end testing is really where the rubber meets the road, and we get the

most reliable tests when sending in requests that actually hit all

dependencies and services to form a correct response. Integration testing at

the API or frontend level using real microservice dependencies offers

substantial value. These tests assess real behaviors and interactions,

providing a realistic view of the system’s functionality. Typically, such

tests are run post-merge in a staging or pre-production environment, often

referred to as end-to-end (E2E) testing. ... What we really want is a

realistic environment that can be used by any developer, even at an early

stage of working on a PR. Achieving the benefits of API and frontend-level

testing pre-merge would save effort on writing and maintaining mocks while

testing real system behaviors. This can be done using canary-style testing in

a shared baseline environment, akin to canary rollouts but in a pre-production

context. To clarify that concept: We want to try running a new version of code

on a shared staging environment, where that experimental code won’t break

staging for all the other development teams, the same way a canary deploy can

go out, break in production and not take down the service for everyone.
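
One way to picture the canary-style idea above is request-level routing: a request tagged with a sandbox identifier is sent to the experimental version of the changed service, and everything else stays on the shared baseline. The sketch below is a minimal model of that routing decision only. The header name `x-sandbox-id`, the service names, and the `pr-123` sandbox are assumptions made up for this example, not part of any particular tool.

```python
# Baseline versions that every team shares in staging (hypothetical names).
BASELINE = {
    "frontend": "frontend:stable",
    "cart": "cart:stable",
    "payments": "payments:stable",
}

# Each sandbox overrides only the services changed in one pull request.
SANDBOXES = {
    "pr-123": {"cart": "cart:pr-123"},
}

SANDBOX_HEADER = "x-sandbox-id"  # assumed header name, for illustration


def resolve(service, headers):
    """Pick the version of `service` that should handle this request."""
    overrides = SANDBOXES.get(headers.get(SANDBOX_HEADER), {})
    return overrides.get(service, BASELINE[service])


def trace(headers):
    """Show which version each hop of a frontend -> cart -> payments call hits."""
    return [resolve(service, headers) for service in ("frontend", "cart", "payments")]


print(trace({}))                          # untagged traffic stays on the baseline
print(trace({SANDBOX_HEADER: "pr-123"}))  # tagged traffic reaches the PR's cart build
```

Because untagged requests never resolve to an override, a broken experimental build only affects requests that carry its own sandbox identifier. In a real system the header would also have to be propagated across every service hop.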

Neurotechnology is becoming widespread in workplaces – and our brain data needs to be protected

Neurotechnology has long been used in the field of medicine. Perhaps the most

successful and well-known example is the cochlear implant, which can restore

hearing. But neurotechnology is now becoming increasingly widespread. It is

also becoming more sophisticated. Earlier this year, tech billionaire Elon

Musk’s firm Neuralink implanted the first human patient with one of its

computer brain chips, known as “Telepathy”. These chips are designed to enable

people to translate thoughts into action. More recently, Musk revealed a

second human patient had one of his firm’s chips implanted in their brain. ...

These concerns are heightened by a glaring gap in Australia’s current privacy

laws – especially as they relate to employees. These laws govern how companies

lawfully collect and use their employees’ personal information. However, they

do not currently contain provisions that protect some of the most personal

information of all: data from our brains. ... As the Australian government

prepares to introduce sweeping reforms to privacy legislation this month, it

should take heed of these international examples and address the serious

privacy risks presented by neurotechnology used in workplaces.

I Said I Was Technically a CISO, Not a Technical CISO

Often a CISO will not come from a technical background, or their technical

background is long in their career rearview mirror. Can a CISO be effective

today without a technical background? And how do you keep up on your technical

chops once you get the role? ... We often talk about the need for a CISO to

serve as a bridge to the rest of the business, but a CISO’s role still needs

to be grounded in technical proficiency, argues Geoff Hancock, who’s the CISO

over at Access Point Technology in a recent LinkedIn post. Now, many CISOs

come from a technical background, but it becomes hard to maintain once you’re

in a CISO role. Geoff says that while no one can be a master in all technical

disciplines, CISOs should make a goal of selecting a few to retain mastery of

over a long-term plan. Now, Andy, I’ll say, does this reflect your experience?

Is this a matter of credibility with the rest of the security team, or does a

technical understanding allow a CISO to do their job better? As you were a

CISO, how much of your technical skills were sort of staying intact?

API security starts with API discovery

Because APIs tend to change quickly, it’s essential to update the API

inventory continuously. A manual change-control process can be used, but this

is prone to breakdowns between the development and security teams. The best

way to establish a continuous discovery process is to adopt a runtime

monitoring system that discovers APIs from real user traffic, or to require

the use of an API gateway, or both. These options yield better oversight of

the development team than relying on manual notifications to the security team

as API changes are made. ... Threats can arise from outside or inside the

organization, via the supply chain, or by attackers who either sign up as

paying customers, or take over valid user accounts to stage an attack.

Perimeter security products tend to focus on the API request alone, but

inspecting API requests and responses together gives insight into additional

risks related to security, quality, conformance, and business operations.

There are so many factors involved when considering API risks that reducing

this to a single number is helpful, even if the scoring algorithm is

relatively simple.
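
To make the "single number" idea concrete, here is a deliberately simple scoring sketch: each finding about an endpoint carries a weight, and the score is the sum of the weights that apply. The finding names, the weights, and the example endpoints are assumptions invented for this illustration, not a standard scoring scheme.

```python
# Hypothetical findings and weights; the weights sum to 100.
WEIGHTS = {
    "unauthenticated": 40,
    "returns_sensitive_data": 30,
    "internet_facing": 20,
    "not_in_inventory": 10,
}


def risk_score(findings):
    """Sum the weights of the findings that are present (0 to 100)."""
    return sum(weight for name, weight in WEIGHTS.items() if findings.get(name))


# Example endpoints, as a discovery tool might report them (made-up data).
endpoints = {
    "GET /health": {"internet_facing": True},
    "GET /users/{id}": {"internet_facing": True, "returns_sensitive_data": True},
    "POST /debug/export": {
        "unauthenticated": True,
        "returns_sensitive_data": True,
        "not_in_inventory": True,
    },
}

# Highest-risk endpoints first.
ranked = sorted(endpoints, key=lambda e: risk_score(endpoints[e]), reverse=True)
for endpoint in ranked:
    print(risk_score(endpoints[endpoint]), endpoint)
```

A weighted sum hides a lot of nuance, but it gives security and development teams a shared way to decide which endpoints to look at first.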

3 key strategies for mitigating non-human identity risks

The first step of any breach response activity is to understand if you’re

actually impacted; the ability to quickly identify any impacted credentials

associated with the third party experiencing the incident is key. You need to

be able to determine what the NHIs are connected to, who is utilizing them,

and how to go about rotating them without disrupting critical business

processes, or at least understand those implications prior to rotation. We

know that in a security incident, speed is king. Being able to outpace

attackers and cut down on response time through documented processes,

visibility, and automation can be the difference between mitigating direct

impact from a third-party breach, or being swept up in a list of organizations

impacted due to their third-party relationships. ... When these factors change

from baseline activity associated with NHIs they may be indicative of

nefarious activity and warrant further investigation, or even remediation, if

an attack or compromise is confirmed. Security teams are not only regularly

stretched thin, but they also often lack a deep understanding across the

organization’s entire application and third-party ecosystem as well as

insights into which assigned permissions and associated usage are

appropriate.
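
The first step described above, finding the credentials tied to a breached third party and understanding what depends on them before rotating, can be sketched as a lookup over an NHI inventory. Everything in this example is hypothetical: the `NonHumanIdentity` fields, the vendor and system names, and the ordering heuristic (fewest consumers first) are illustrative assumptions, not a recommended policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NonHumanIdentity:
    name: str            # credential or service-account name
    vendor: str          # third party that issued or holds the credential
    connected_to: tuple  # systems the credential grants access to
    used_by: tuple       # workloads that would break if it were rotated blindly


def impacted_by(vendor, identities):
    """Return the identities associated with the vendor that had the incident."""
    return [nhi for nhi in identities if nhi.vendor == vendor]


def rotation_plan(vendor, identities):
    """List (name, consumers) pairs for impacted identities, fewest consumers first."""
    impacted = sorted(impacted_by(vendor, identities),
                      key=lambda nhi: (len(nhi.used_by), nhi.name))
    return [(nhi.name, nhi.used_by) for nhi in impacted]


# Made-up inventory, for illustration only.
identities = [
    NonHumanIdentity("ci-deploy-token", "ci-vendor",
                     ("artifact-registry",), ("build-pipeline",)),
    NonHumanIdentity("chat-webhook", "chat-vendor",
                     ("alerts-channel",), ("monitoring",)),
    NonHumanIdentity("ci-cloud-key", "ci-vendor",
                     ("cloud-account",), ("build-pipeline", "release-pipeline")),
]

for name, consumers in rotation_plan("ci-vendor", identities):
    print(name, "->", ", ".join(consumers))
```

The value lies less in the code than in having the inventory at all: with it, the question "are we impacted, and what breaks if we rotate?" becomes a query rather than an investigation.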

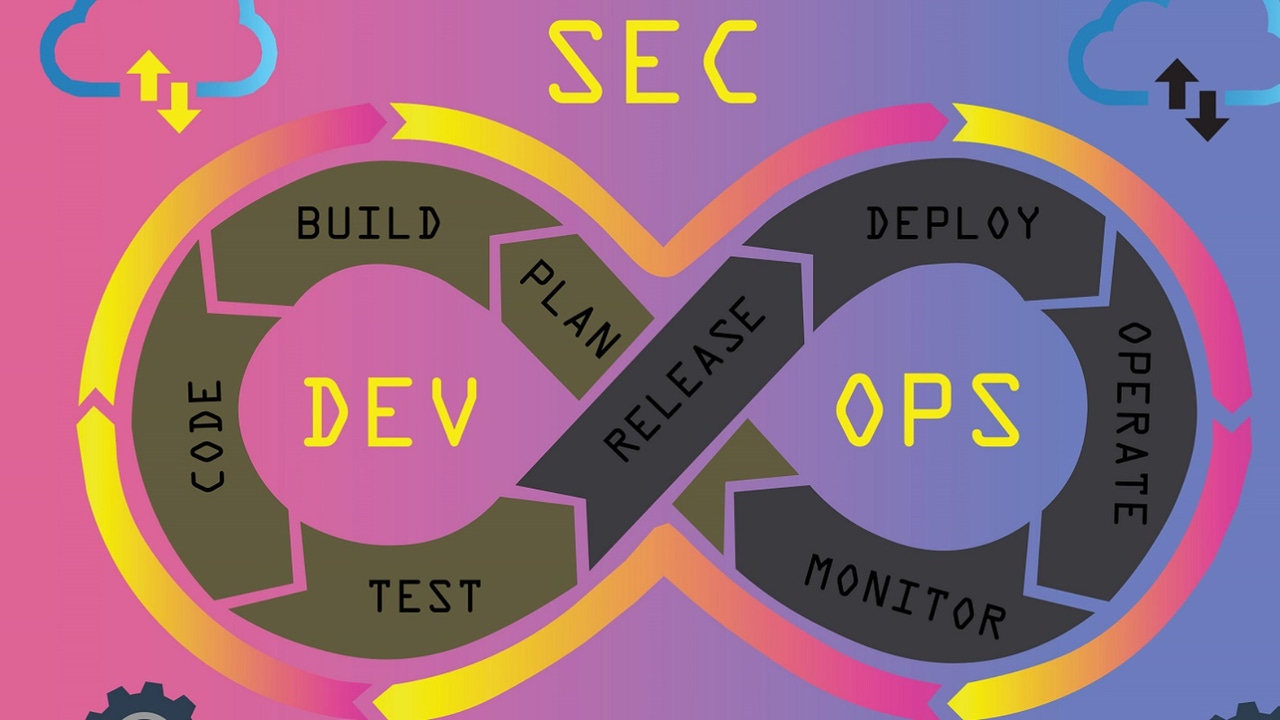

The Rising Cost of Digital Incidents: Understanding and Mitigating Outage Impact

Causal AI for DevOps promises a bridge between observability and automated

digital incident response. By ‘Causal AI for DevOps’ I mean causal reasoning

software that applies machine learning (ML) to automatically capture cause and

effect relationships. Causal AI has the potential to help dev and ops teams

better plan for changes to code, configurations or load patterns, so they can

stay focused on achieving service-level and business objectives instead of

firefighting. With Causal AI for DevOps, many of the incident response tasks

that are currently manual can be automated: When service entities are degraded

or failing and affecting other entities that make up business services, causal

reasoning software surfaces the relationship between the problem and the

symptoms it is causing. The team with responsibility for the failing or

degraded service is immediately notified so they can get to work resolving the

problem. Some problems can be remediated automatically. Notifications can be

sent to end users and other stakeholders, letting them know that their

services are affected along with an explanation for why this occurred and when

things will be back to normal.

Quote for the day:

"Holding on to the unchangeable past is a waste of energy, and serves no purpose in creating a better future." -- Unknown
