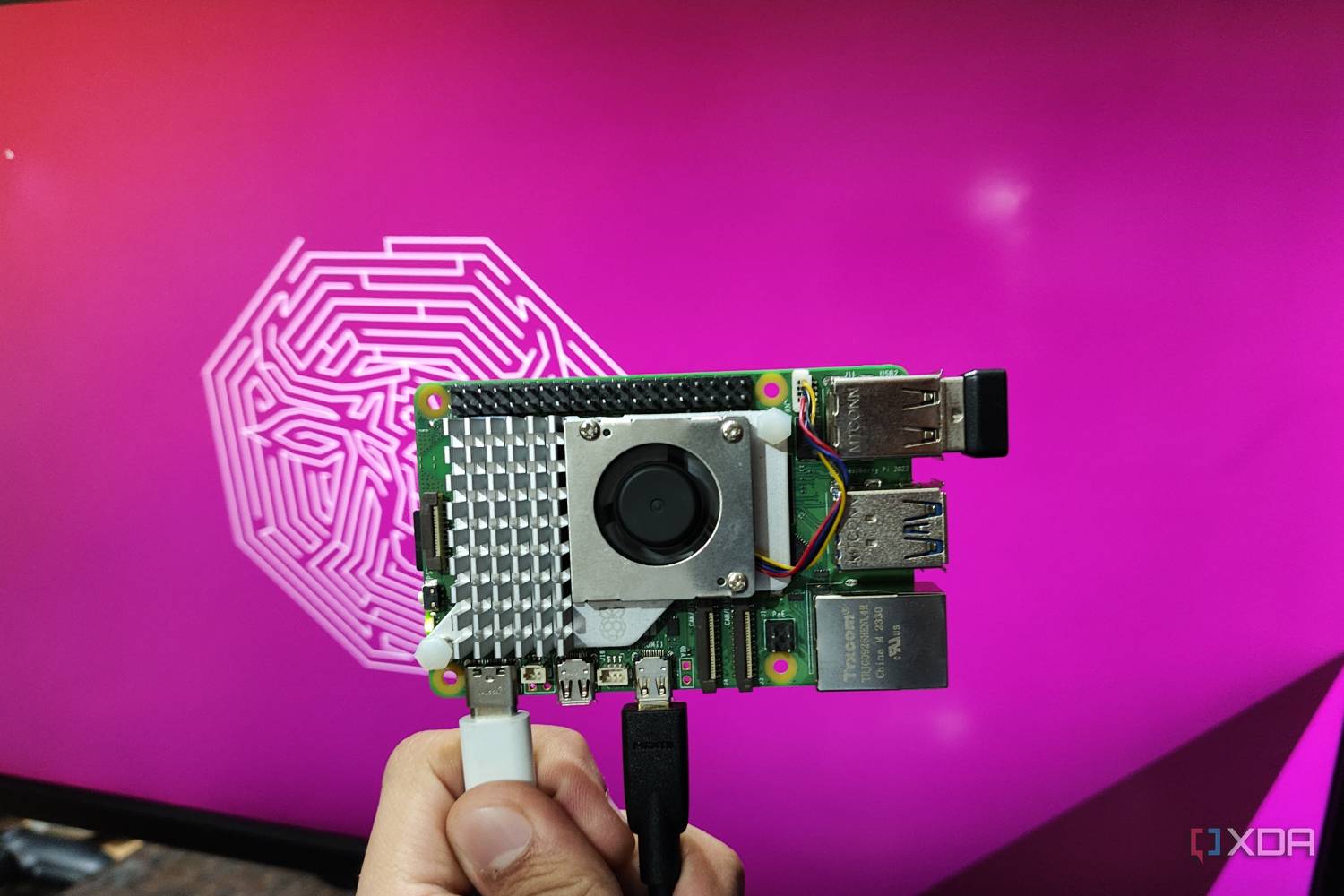

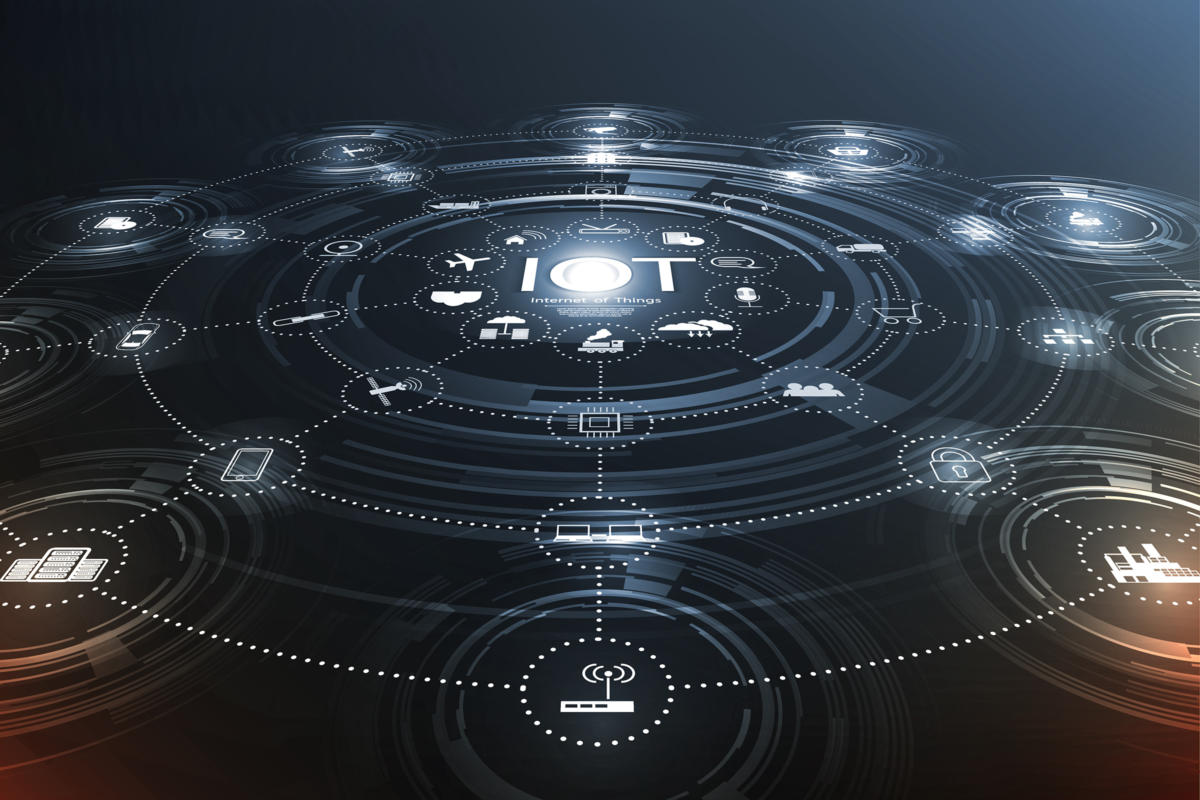

How to deploy software to Linux-based IoT devices at scale

IoT development may be so nascent that it is not yet part of your mainstream
DevOps processes; you may still be in the early stages of experimentation. Once
you’re ready to scale, you’ll need to bring IoT into the DevOps fold. Needless
to say, the scale and costs of dealing with thousands of deployed devices are
significant. DevOps is an important approach for ensuring the seamless and
efficient delivery of software, updates, and enhancements to IoT devices. By
integrating IoT development into an established workflow, you’ll gain the
improved collaboration, agility, assured delivery, control, and traceability
that are part of a modern DevOps process. It’s critical to use a secure
deployment process to protect your IoT devices from unauthorized access,
inadvertent vulnerabilities, and malware. A secure deployment must include
strong authentication methods for access to the devices and the management
platform. The data transmitted between the devices and the management platform
should be protected by encryption. The connections that client devices make to
the platform after deployment should always be encrypted as well.
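
As a rough illustration of the encryption and authentication points above, here is a minimal Python sketch of how a device-side client might build a TLS context before connecting to a management platform. The function name `build_device_tls_context` and its file-path parameters are hypothetical and do not come from the article; only the standard-library `ssl` calls are real.

```python
import ssl


def build_device_tls_context(ca_file=None, cert_file=None, key_file=None):
    """Return a TLS context for a device connecting to a management platform.

    All parameter names are illustrative assumptions, not part of any
    specific IoT platform's API.
    """
    # Verify the platform's certificate and hostname (the defaults for
    # SERVER_AUTH). If ca_file is None, the system trust store is used.
    context = ssl.create_default_context(ssl.Purpose.SERVER_AUTH, cafile=ca_file)

    # Refuse protocol versions older than TLS 1.2.
    context.minimum_version = ssl.TLSVersion.TLSv1_2

    # Optionally present a per-device certificate so the platform can
    # authenticate the device as well (mutual TLS).
    if cert_file and key_file:
        context.load_cert_chain(certfile=cert_file, keyfile=key_file)

    return context


context = build_device_tls_context()
```

The returned context can be passed to `context.wrap_socket(...)` or to an HTTP or MQTT client that accepts an `ssl.SSLContext`. Supplying a device certificate and key is one way to get the strong authentication the excerpt calls for.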

In 5 Years, Coding will be Done in Natural Language

“Because AI is a tool,” he adds, people should be able to operate at a higher
level of abstraction and become far more efficient at the jobs they do.
Eventually, everyone is likely to be coding in natural language, but that
wouldn’t necessarily make them a software engineer or a programmer. The skills
required to be a coder are far more complex than being able to put prompts into
an AI tool, copy the code, or merely type in natural language. ... Soon, there
may be a programming language written exclusively in English. Not to be
confused with prompt engineering and writing code, the term natural language
programming means that most of the coding would be done by the software in the
backend. The programmer would only have to interact with the tool in English,
or any other language, and never even look at the code. On the other hand, a
few experts believe that English cannot be a programming language because it is
filled with misunderstandings. “If they’re going into machines, which will
affect the lives of people, we can’t afford that level of comedy,” said Douglas
Crockford when talking to AIM.

Cybersecurity's Future: Facing Post-Quantum Cryptography Peril

The post-quantum cryptography era might not be open season on unprepared
systems, he says, but rather an uneven landscape. There are layers of concerns
to consider. “What I think scares me a little bit is that this type of attack is
somewhat quiet,” Ho says. “The people who are going to be taking advantage of
this -- the few people initially who have quantum computers as you can imagine,
probably state actors -- will want to keep this on the downlow. You wouldn’t
know it, but they probably already have access.” ... “From a technical
perspective … being quantum-safe -- it’s a binary thing. You either are or
you’re not,” says Duncan Jones, head of quantum cybersecurity with quantum
computing company Quantinuum. If there is a particular computer system that an
organization fails to migrate to new standards and protocols, he says that
system will be vulnerable. However, the barrier to entry for access to quantum
compute resources may limit early attacks to those who already have pockets
deep enough to procure the technology.

The New CISO: Rethinking the Role

CISOs need to be negotiators. They need to argue in favor of stronger security
and convince boards and business units of the risks in terms they understand.
How a CISO goes about this can vary, depending on whether board members'
experience is in technology or business. Providing a demonstration that puts the
technical risk into a business perspective can be helpful. CISOs should also
talk with other C-level executives, as well as CISOs from other industries, to
get advance buy-in and different perspectives on similar conversations they're
having with their boards. ... CISOs need to be comfortable developing a
risk-based approach focusing on the importance of resiliency, because attackers
will get in. Developing a tested plan to respond to attacks is just as important
as implementing preventative measures. … it's balancing the risk with the cost.
... CISOs should build a deeply technical team that can focus on key security
practices. They should run tabletop exercises on scenarios such as a system
shutdown or inability to connect to the Internet. CISOs must not rely on
assumptions about how to respond; running through and testing all response plans
is vital.

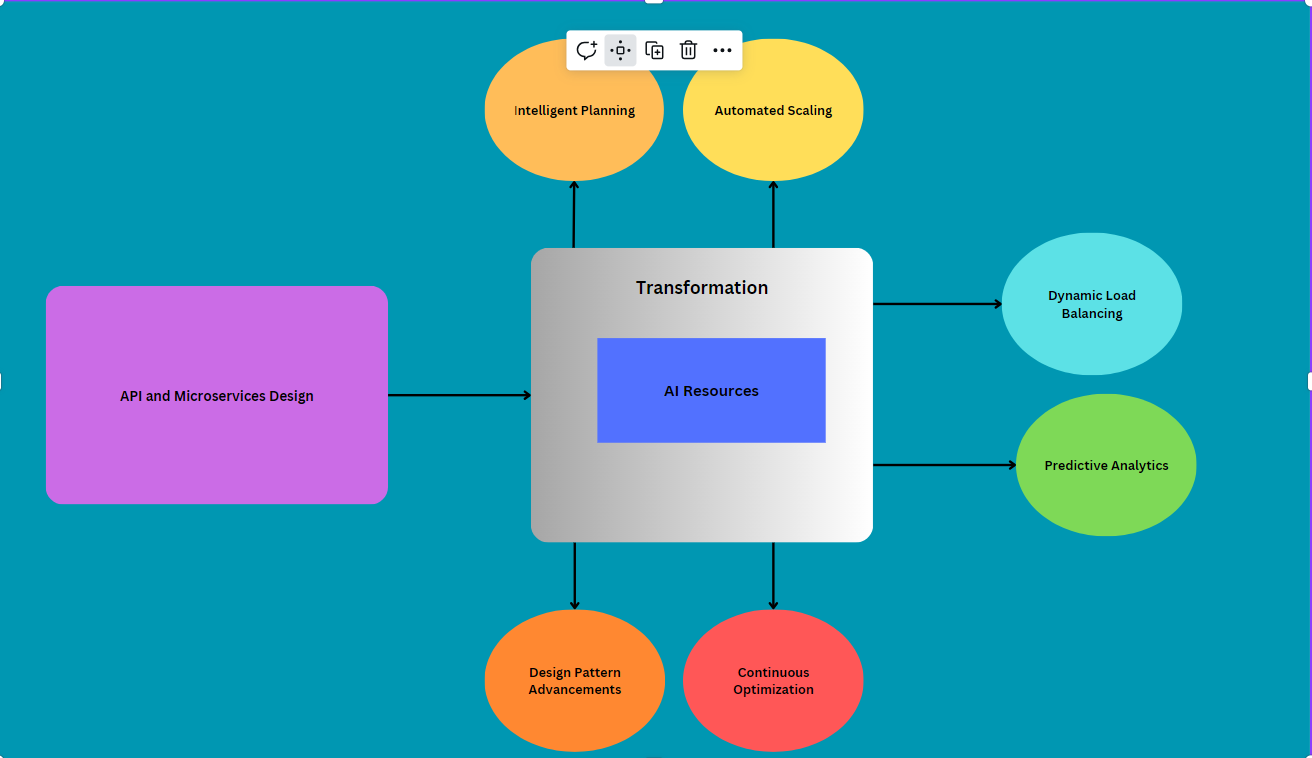

Architect’s Guide to a Reference Architecture for an AI/ML Data Lake

If you are serious about generative AI, then your custom corpus should define
your organization. It should contain documents with knowledge that no one else
has, and should only contain true and accurate information. Furthermore, your
custom corpus should be built with a vector database. A vector database indexes,
stores and provides access to your documents alongside their vector embeddings,
which are the numerical representations of your documents. ... Another important
consideration for your custom corpus is security. Access to documents should
honor access restrictions on the original documents. (It would be unfortunate if
an intern could gain access to the CFO’s financial results that have not been
released to Wall Street yet.) Within your vector database, you should set up
authorization to match the access levels of the original content. This can be
done by integrating your vector database with your organization’s identity and
access management solution. At their core, vector databases store unstructured
data. Therefore, they should use your data lake as their storage solution.
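
To make the authorization point concrete, here is a minimal Python sketch of a similarity search that filters documents by the caller's groups before ranking them. It is an in-memory toy, not the API of any real vector database. The `Document` class, the `search` function, the group names and the two-dimensional embeddings are all hypothetical.

```python
import math
from dataclasses import dataclass
from typing import List, Set


@dataclass
class Document:
    doc_id: str
    embedding: List[float]    # numerical representation of the document
    allowed_groups: Set[str]  # groups copied from the original document's ACL


def cosine_similarity(a: List[float], b: List[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def search(index: List[Document], query: List[float],
           user_groups: Set[str], top_k: int = 3) -> List[str]:
    # Drop documents the caller may not see *before* ranking, so restricted
    # content never appears in the results.
    visible = [doc for doc in index if doc.allowed_groups & user_groups]
    ranked = sorted(visible,
                    key=lambda doc: cosine_similarity(doc.embedding, query),
                    reverse=True)
    return [doc.doc_id for doc in ranked[:top_k]]


index = [
    Document("public-handbook", [0.9, 0.1], {"all-staff"}),
    Document("unreleased-financials", [0.1, 0.9], {"finance-leadership"}),
]

intern_results = search(index, [0.1, 0.9], {"all-staff"})
cfo_results = search(index, [0.1, 0.9], {"all-staff", "finance-leadership"})
```

In this sketch the intern's query never returns `unreleased-financials`, even though that document is the closest match. In practice the group memberships would come from the organization's identity and access management system rather than being hard-coded.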

5 ways private organizations can lead public-private cybersecurity partnerships

One tangible step that cybersecurity stakeholders can take is to build the
bottom-up infrastructure that can meet JCDC’s top-down strategic vision as it
attempts to descend into tactical usefulness. This can be done by encouraging
the development of volunteer civil cyber defense organizations while
simultaneously lobbying the federal government for support of these entities.
This kind of volunteer service model is an incredibly cost-efficient way to
boost national defense, save federal government resources, and assure private
stakeholders about their independence. ... Unfortunately, as criticism of the
JCDC emphasizes, top-down P3 efforts often fail to effectively do so due to the
role of strategic parameters driving derivative mission parameters. If industry
is to shape CISA’s P3 cyber initiatives more clearly toward alignment with
practical tactical considerations, mapping out where innovation and adaptation
come from in the interaction of key individuals spread across a complex array
of interacting organizations (particularly during a crisis) becomes a critical
common capacity.

Decoding tomorrow’s risks: How data analytics is transforming risk management

With digital technologies coming in, corporations can make use of data analytics
to ensure goals correlate with their strategic needs. ... Across the different
risk management strategies, data analytics can contribute to optimisation
models, which direct data-backed resource deployment towards risk mitigation;
scenario analysis, which recreates likely circumstances to calculate the
effectiveness of different risk mitigation applications; and personalised
answers, which supply custom-fit responses to certain market conditions.
... “I believe the role of data analytics in risk mitigation has become
paramount, enabling organisations to make decisions based on data-driven
insights. By leveraging advanced analytics techniques, such as predictive
modelling and ML, we can anticipate threats and take measures to mitigate them.
From a business perspective, data analytics is considered indispensable in risk
management as it helps organisations identify, assess, and prioritise risks.
Companies that leverage data analytics in risk management can gain an edge by
minimising disruptions, maximising opportunities, and safeguarding their
reputation,” said Yuvraj Shidhaye, founder and director of TreadBinary, a
digital application platform.
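
The scenario analysis mentioned above can be sketched as a small Monte Carlo simulation that compares expected losses with and without a mitigation. This is only an illustration under invented assumptions: the function `simulate_expected_loss`, the event probabilities and the loss ceiling are hypothetical and are not taken from the article.

```python
import random


def simulate_expected_loss(event_probability, max_loss, trials=10_000, seed=42):
    """Estimate the mean loss per period for one hypothetical risk scenario."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(trials):
        # Draw both numbers on every trial so that scenarios sharing a seed
        # are compared against the same simulated circumstances.
        event_draw = rng.random()
        loss_draw = rng.uniform(0.0, max_loss)
        if event_draw < event_probability:
            total += loss_draw
    return total / trials


# Assumed figures: the mitigation halves the chance of a loss event.
baseline = simulate_expected_loss(event_probability=0.20, max_loss=100_000)
mitigated = simulate_expected_loss(event_probability=0.10, max_loss=100_000)
print(f"baseline: {baseline:,.0f}  mitigated: {mitigated:,.0f}")
```

Because both runs reuse the same seed, the difference between the two estimates reflects the change in event probability rather than sampling noise. A real analysis would replace the uniform loss draw with distributions fitted to the organisation's own data.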

How AI-Driven Cyberattacks Will Reshape Cyber Protection

Aside from adaptability and real-time analysis, AI-based cyberattacks also have
the potential to cause more disruption within a small window. This stems from
the way an incident response team operates and contains attacks. When AI-driven
attacks occur, there is the potential to circumvent or hide traffic patterns.
This is somewhat similar to criminal activity, where fingerprints are destroyed.
Of course, the AI methodology is to change the system log analysis process or
delete actionable data. Perhaps having advanced security algorithms that
identify AI-based cyberattacks is the answer. ... AI has introduced challenges
where security algorithms must become predictive, rapid and accurate. This
reshapes cyber protection because organizations' infrastructure devices must
support the methodologies. The concern is no longer whether network intrusions,
malware and software applications are risk factors, but rather how AI transforms
cyber protection. The shield is not broken. It needs to be transformed to handle
AI-based attacks.

Four easy ways to train your workforce in cybersecurity

Do your employees install all kinds of random apps and programs? Do the same
thing as the phishing emails: create your own dodgy software that locks the
employee's computer, blast it out to the employee database, and see who falls
for it. When they have to bring their IT assets in to be unlocked and get a
scolding for installing suspicious material, however harmless, the lesson will
stick. ... Cyber attacks soar during festive seasons, like the upcoming Holi
holiday. Set up automated reminders telling your employees not to blindly open
greeting emails or click on suspicious links. You can track the open and read
rate of these messages to get an idea of whether people are actually paying
attention. ... If your IT team is savvy and has some time to spare, they can use
generative AI to create fake personas (someone from another department, a
vendor, or a customer) and see if these fake personas can fool people into
giving away information they should be keeping confidential. This is
particularly important, because many cyber criminals today are already using
generative AI to scam unwitting victims.

Report: AI Outpacing Ability to Protect Against Threats

There are "two sides of the coin" when it comes to AI adoption, said Greg
Keller, CTO at JumpCloud. Employee productivity and technology stacks being
embedded into SaaS solutions are "the new frontier," he said. "Yet there are
universal security concerns. There is fear of commingling or escaping of your
data into public sectors. And there is a fear of using one's data on an AI
platform. CTOs are concerned about their data leaking through public LLMs,"
Keller said. ... "We're at the tail end of understanding digital
transformation. Now, we are beginning the first phase of the identity
transformation. These companies have done an amazing amount of work to lift and
shift their technology stacks from legacy into the cloud with one exception -
overwhelmingly, it's the Microsoft Active Directory problem," Keller said.
"That's still on-premises or self-managed. So they're looking at ways to
modernize this. We are in the earliest phases of security shifting away from
endpoint-based [security]. Now, it's about understanding access control through
the identity, and this is the new frontier."

Quote for the day:

"Whatever you can do, or dream you can, begin it. Boldness has genius, power and magic in it." --Johann Wolfgang von Goethe