Malicious hackers are weaponizing generative AI

The headline here is not that this new threat exists; it was only a matter of time before threats powered by generative AI showed up. There must be better ways to fight these threats, which are likely to become more common as bad actors learn to wield generative AI as an effective weapon. If we hope to stay ahead, we will need to use generative AI as a defensive mechanism. This means shifting from being reactive (the typical enterprise approach today) to being proactive, using tactics such as observability and AI-powered security systems. The challenge is that cloud security and DevSecOps pros must step up their game to stay out of the 24-hour news cycle. That means increasing investment in security at a time when many IT budgets are shrinking. If there is no active response to these emerging risks, you may have to price in the cost and impact of a significant breach, because you’re likely to experience one. Of course, it’s the job of security pros to scare you into spending more on security by warning that the worst will likely happen otherwise.

Avoiding the Pain of a ‘Resume-Driven Architecture’

A resume-driven architecture occurs when developers’ personal interests lead them to designs that no longer maximize impact and outcomes for the organization. Often, the developer clings to a technology that gives them more control and, at least initially, a higher salary. Meanwhile, the organization gets an architecture that only a handful of people know how to manage and maintain, which limits the available talent pool and hinders future innovation. ... There’s no sense in investing resources in a bespoke architecture if it’s not providing you with any differentiation, especially when competitors are achieving the same outcome with fewer resources. Moreover, getting stuck in a Stage Two mindset when the field moves on to Stage Three (or, worse, Stage Four) cuts you off from the next wave of innovation. Subsequent technology breakthroughs often build on top of, and interoperate with, the previous technology layers. If you’re stuck with a custom architecture when the industry has moved on, you can miss out on those breakthroughs and fall further behind competitors.

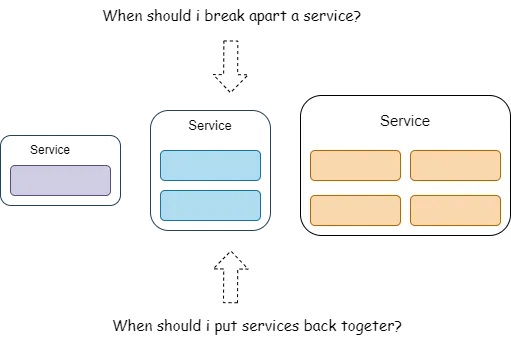

In the Great Microservices Debate, Value Eats Size for Lunch

A key criterion for a service to stand alone as a separate code base and a separately deployable entity is that it should provide value to users, ideally the end users of the application. A useful heuristic for deciding whether a service meets this criterion is to ask whether most enhancements to the service would produce benefits the user can perceive. If, in the vast majority of updates, the service can deliver such a benefit only when other services also release enhancements, then the service fails the criterion. ... Providing value is also about the cost efficiency of designing as multiple services versus combining them into a single service. One such aspect highlighted in the Prime Video case was chatty network calls. These can be a double whammy: they not only add latency before a response goes back to the user, but they might also increase your bandwidth costs. The problem grows when large or numerous payloads move between services across network boundaries.
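
To put rough numbers on the chatty-call concern, the back-of-the-envelope sketch below models the network overhead that one user request pays when two components talk over the network instead of in process. The call count, round-trip time, and payload size are hypothetical values chosen for illustration, not figures from the Prime Video case. The model also ignores serialization, retries, and provider-specific pricing.

```python
from dataclasses import dataclass


@dataclass
class CallProfile:
    """Hypothetical network traffic generated by one user request."""
    calls_per_request: int  # cross-service calls made to serve one request
    round_trip_ms: float    # assumed network round-trip time per call
    payload_kb: float       # assumed payload per call, in kilobytes


def added_latency_ms(p: CallProfile, sequential: bool = True) -> float:
    """Latency added by the network hops alone.

    Sequential calls pay one round trip each; fully parallel calls pay roughly
    one round trip in total (assuming equal round-trip times).
    """
    if p.calls_per_request == 0:
        return 0.0
    return p.calls_per_request * p.round_trip_ms if sequential else p.round_trip_ms


def transferred_gb(p: CallProfile, requests: int) -> float:
    """Data moved across the network boundary, in decimal gigabytes."""
    return p.calls_per_request * p.payload_kb * requests / 1_000_000


# Hypothetical numbers: a chatty split vs. the same logic combined in one service.
chatty = CallProfile(calls_per_request=20, round_trip_ms=1.5, payload_kb=200)
combined = CallProfile(calls_per_request=0, round_trip_ms=1.5, payload_kb=200)

for name, profile in (("chatty", chatty), ("combined", combined)):
    print(f"{name}: +{added_latency_ms(profile):.1f} ms per request, "
          f"{transferred_gb(profile, 1_000_000):,.0f} GB per million requests")
```

With these assumed inputs, the chatty layout adds 30 ms per request and moves 4,000 GB per million requests. The combined layout adds neither. In this simple model, transfer volume grows linearly with payload size and call count, which is why large or numerous payloads make the problem worse.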

Enhancing Code Reviews with Conventional Comments

In software development, code reviews are a vital practice that ensures code quality, promotes consistency, and fosters knowledge sharing. Yet at times, they can drive me absolutely bananas! Their effectiveness depends on clear, concise communication, and this is where Conventional Comments play a pivotal role. Conventional Comments provide a standardized method of delivering and receiving feedback during code reviews, reducing misunderstandings and promoting more efficient discussions. They are a structured commenting system for code reviews and other forms of technical dialogue, built on a set of predefined labels, such as nitpick, issue, suggestion, praise, question, and thought. Those labels can be qualified with decorations, most notably non-blocking. Each label corresponds to a specific comment type and expected response. ... By standardizing labels and formats, Conventional Comments make comments clearer, eliminating vague language and misunderstandings so that all participants understand the intent and meaning of each comment.
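
The Conventional Comments format writes each comment as a label, optional decorations in parentheses, a colon, and a subject, optionally followed by a discussion. As a minimal sketch of what that structure buys you, the parser below splits a comment into those parts so that tooling could, for example, filter out non-blocking feedback. The `ConventionalComment` class, the `parse_comment` function, and the label set are illustrative assumptions, not part of any official tooling. The label set covers only the labels named above, while the specification defines others.

```python
from __future__ import annotations

import re
from dataclasses import dataclass, field
from typing import Optional

# Only the labels named in the text; the specification defines additional ones.
KNOWN_LABELS = {"praise", "nitpick", "suggestion", "issue", "question", "thought"}

# "<label> (<decoration>, ...): <subject>", with the decorations part optional.
HEADER_RE = re.compile(
    r"^(?P<label>[a-z]+)"
    r"(?:\s*\((?P<decorations>[^)]*)\))?"
    r":\s*(?P<subject>.+)$"
)


@dataclass
class ConventionalComment:
    label: str
    decorations: list[str] = field(default_factory=list)
    subject: str = ""
    discussion: str = ""

    @property
    def is_non_blocking(self) -> bool:
        return "non-blocking" in self.decorations


def parse_comment(text: str) -> Optional[ConventionalComment]:
    """Parse a review comment; return None if it is not in Conventional Comments form."""
    header, _, discussion = text.strip().partition("\n")
    match = HEADER_RE.match(header.strip())
    if match is None or match["label"] not in KNOWN_LABELS:
        return None
    # Split "non-blocking, if-minor" into ["non-blocking", "if-minor"].
    decorations = [d.strip() for d in (match["decorations"] or "").split(",") if d.strip()]
    return ConventionalComment(
        label=match["label"],
        decorations=decorations,
        subject=match["subject"].strip(),
        discussion=discussion.strip(),
    )


comment = parse_comment(
    "suggestion (non-blocking): Extract this into a helper.\n"
    "The same logic appears in two handlers."
)
print(comment)
print(comment.is_non_blocking)
```

Here the parser yields the label `suggestion`, the decoration `non-blocking`, and the subject and discussion as separate fields. Free-form text such as "Looks good to me" returns `None`, which makes unstructured feedback easy to spot.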

How the modern CIO grapples with legacy IT

When reviewing products and services, Abernathy considers whether a technology still meets requirements for simplicity across geographies, designs, platforms, applications, and equipment. “Driving for simplicity is of paramount importance because it increases quality, stability, value, agility, talent engagement and security,” she says. Other red flags for replacement include point solutions, duplicative solutions, and technologies that have become very challenging because of unreasonable pricing models, inadequate support, or instability. In some ways, moving to SaaS-based applications makes the review process simpler because decisions about whether and when to update and refactor are up to the provider, Ivy-Rosser says. But while technology change decisions are the provider’s responsibility, if you’re modernizing in a hybrid world, you need to make sure your data is ready to move and that any changes don’t create privacy issues. With SaaS, the review should take a hard look at issues of ownership and control.

The psychological impact of phishing attacks on your employees

The aftermath of a successful phishing attack can be emotionally draining, leaving people feeling embarrassed and ashamed. The fear of accidentally clicking a link in a phishing email can affect a person’s performance and productivity at work. Even simulated phishing attacks can cause stress when employees are lured with fake promises of bonuses or freebies. Furthermore, when phishing emails repeatedly get through security measures and are not neutralized, employees may assume these messages are safe and click on them. Ultimately, this could lead employees to lose faith in their employer’s ability to protect them. ... Organizations owe it to their employees to be proactive. To protect employees, they should implement advanced technology that applies artificial intelligence and machine learning techniques, such as natural language processing (NLP) and natural language understanding (NLU). These tools can detect even highly sophisticated phishing attempts and serve as a safety net.

Cyber liability insurance vs. data breach insurance: What's the difference?

Understanding the distinction is important, as cyber insurance is becoming an integral part of the security landscape. Many companies may have no choice but to find insurance, as more organizations require their business partners to have cyber coverage. Many traditional business insurance policies simply do not cover cyber incidents, considering them outside the scope of the agreement. That is why cyber insurance has become a separate form of protection. It’s also important to note that getting insurance isn’t guaranteed: insurers are increasingly asking for more proof that strong cybersecurity strategies are in place before agreeing to provide coverage, and companies may have no choice but to meet such terms. Put simply, cyber liability insurance refers to coverage for third-party claims asserted against a company stemming from a network security event or data breach. Data breach insurance, on the other hand, refers to coverage for first-party losses incurred by the insured organization after it suffers a loss of data.

These leaders recognize that transformation investments remain critical to any business, and they plan to emerge from these volatile times armed with new business models and revenue streams. In short, they plan to continue winning through transformation, and they are laser-focused on how they will do it. You might even say they’re “outcomes obsessed.” ... Remember, your goal is to prune the tree so it can thrive, not just to go around sawing off branches. Any cuts must set up individuals, teams, and departments for long-term success, despite the short-term pain. One way I’ve seen successful leaders do this is by taking the choices they are considering (both cutting investments and expanding them) and mapping them out in terms of their expected financial and nonfinancial impact ... Top-performing companies look beyond functional excellence and instead aim for enterprise-level reinvention that extends across the company’s business, operating, and technology models. You should too. These transformations enable you to strengthen ecosystems, close capability gaps, and better chart your future revenue streams.

Don't Let Age Mothball Your IT Career

Age discrimination is a significant concern in the IT industry, Schneer says. “Some companies may prioritize younger workers who are perceived to be more tech-savvy and adaptable,” she notes. “However, experienced professionals bring valuable skills and knowledge that can be an asset to any organization.” Weitzel observes that it's difficult to know how prevalent age discrimination is in any industry. “But applicants can be proactive in combatting any false assumptions by showcasing upfront the current skills and recent experience that employers are seeking.” Age discrimination may be more prevalent in certain IT fields, such as software development or web design, where rapid advancements in technology can make older professionals feel less relevant, Schneer says. “However, roles that require extensive experience and expertise, such as IT management or cybersecurity, may be less susceptible to age bias.” When encountering suspected age bias, senior IT workers should document any incidents or patterns of behavior that suggest discrimination, Schneer advises.

Thinking Deductively to Understand Complex Software Systems

The main goal is to think through the role of tests in helping you understand complex code, especially when you start out unfamiliar with the code base. I think most of us would agree that tests let us automate the process of answering a question like "Is my software working right now?" Since this question comes up all the time, at least as often as you deploy, it makes sense to spend time automating the answer. However, even a large test suite can be a poor proxy for this question, since it can only ever really answer the question "Do all my tests pass?" Fortunately, tests can help us answer a wider range of questions. In some cases they allow us to analyse code dynamically, giving us a genuine understanding of how complex systems operate that might otherwise be hard-won.
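
One common way to put this into practice is a characterization (or "learning") test. Rather than asserting what the code should do, you write tests that record what it actually does, treating each test as an experiment against unfamiliar code. The sketch below assumes a hypothetical `legacy_discount` function standing in for code you have inherited. Its rules and the probing tests are invented for illustration and are not taken from the source.

```python
import unittest


def legacy_discount(total_cents: int, loyalty_years: int) -> int:
    """Hypothetical stand-in for an unfamiliar function whose rules we want to learn."""
    rate = min(loyalty_years, 5) * 2  # percent
    if total_cents >= 10_000:
        rate += 5
    return total_cents * rate // 100


class ProbeLegacyDiscount(unittest.TestCase):
    """Each test poses one question about the code and records the observed answer."""

    def test_new_customer_small_order_gets_no_discount(self):
        self.assertEqual(legacy_discount(5_000, 0), 0)

    def test_loyalty_bonus_stops_growing_after_five_years(self):
        self.assertEqual(legacy_discount(5_000, 5), legacy_discount(5_000, 50))

    def test_large_order_threshold_is_inclusive(self):
        self.assertEqual(legacy_discount(9_999, 0), 0)
        self.assertEqual(legacy_discount(10_000, 0), 500)


if __name__ == "__main__":
    # Explicit argv and exit=False let this also run inside notebooks or other scripts.
    unittest.main(argv=["probe"], exit=False)
```

When a probe fails, the failure is itself information: the assumption it encoded was wrong. You update the test to match the observed behavior rather than changing the code. Over time, the suite becomes an executable description of how the system really behaves.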

Quote for the day:

"To do great things is difficult; but to command great things is more difficult." -- Friedrich Nietzsche