What Is Zero Trust Architecture?

As one of the key pillars of Zero Trust Network Access (ZTNA), the concept of
least privilege security assigns access credentials to key network resources at
the least privilege level required to accomplish the desired task. When
evaluating alternative solutions, organizations must take into consideration
which corporate information is critical and how a user gains access to that
information. Privileged Access Management (PAM), also known as Privileged
Identity Management (PIM), can be implemented using corporate directory products
such as Microsoft’s Active Directory. Microsoft has recently introduced a
product named Microsoft Entra to address identity and access issues in a
multicloud environment. Other vendors in the PAM/PIM category include JumpCloud,
IBM, Okta, and SailPoint. Very few corporate networks today operate in
isolation. To answer the “What is Zero Trust Architecture?” question completely,
we must include a discussion of how external users will be allowed to connect
to internal corporate resources.
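
To make the least-privilege idea concrete, here is a minimal Python sketch of a
deny-by-default access check, assuming a simple role-to-permission map. The role
names, resources, and actions are hypothetical examples and do not reflect the
API of Active Directory, Microsoft Entra, or any other PAM/PIM product.

```python
# Minimal least-privilege sketch. Roles, resources, and actions are
# hypothetical examples, not taken from any PAM/PIM product.
from dataclasses import dataclass, field

# Each role is granted only the (resource, action) pairs its task requires.
# Any pair not listed here is denied.
ROLE_PERMISSIONS: dict[str, frozenset[tuple[str, str]]] = {
    "payroll_clerk": frozenset({("payroll_db", "read")}),
    "payroll_admin": frozenset({("payroll_db", "read"), ("payroll_db", "write")}),
    "external_auditor": frozenset({("audit_reports", "read")}),
}


@dataclass(frozen=True)
class Principal:
    """A user or service requesting access; there is no 'trusted network' flag."""
    name: str
    roles: frozenset[str] = field(default_factory=frozenset)


def is_allowed(principal: Principal, resource: str, action: str) -> bool:
    """Deny by default: allow only if some assigned role explicitly grants the pair."""
    return any(
        (resource, action) in ROLE_PERMISSIONS.get(role, frozenset())
        for role in principal.roles
    )


alice = Principal("alice", frozenset({"payroll_clerk"}))
bob = Principal("bob", frozenset({"external_auditor"}))

print(is_allowed(alice, "payroll_db", "read"))   # True: granted to payroll_clerk
print(is_allowed(alice, "payroll_db", "write"))  # False: clerks only need to read
print(is_allowed(bob, "payroll_db", "read"))     # False: auditor role covers audit_reports only
```

The same check runs for internal and external users alike. In this sketch,
network location grants nothing; only an explicit, minimal role assignment does.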

Can humanity be recreated in the metaverse?

The hyperreal metaverse is full of possibilities, but it also presents serious
ethical challenges that cannot be ignored. First and foremost, we must strive
for a metaverse that empowers the individual. Unlike big tech platforms, which
have left many feeling that they have little control over their personal data,
the metaverse must let participants own and control the biometric data used as
inputs to generate hyperreal versions of themselves. In this respect, blockchain
technologies, and NFTs in particular, are key to securely realizing this new era
of individual data sovereignty and to enabling verifiably unique, secure, and
self-custodied digital identities. By linking our hyperreal avatars and
biometric data to blockchain wallets, we will be one step closer to taking
control of our hyperreal identities in the metaverse. The hyperreal metaverse
will herald a future where real and virtual worlds collide. As generative AI
technologies continue to evolve rapidly, it’s only a matter of time until our
new digital worlds are indistinguishable from our physical reality.

Data Leadership: The Key to Data Value

Algmin said that the most important concept to understand is the notion of data
value. The value of data lies in its ability to contribute to improvements in
revenue, cost-effectiveness, or risk management. Data Governance in and of
itself is not intrinsically motivating, but knowing that a particular practice
or task is adding thousands of dollars a year in cost savings is a tangible
motivation to continue doing it. To calculate data value, examine an outcome
that was achieved through the use of data, compare it to how the outcome would
have been different without the use of data, then consider the cost to achieve
that outcome. Courses of action can then be prioritized based on which will
provide the most value to the company. Data leadership is needed to provide
momentum and propel the creation of value from the ground up and out to all
corners of the enterprise. “It’s really about saying, ‘How do we create an
engine that makes data value happen in the biggest way possible?’” Yet creating
value in “the biggest way possible” often entails working on a smaller level,
down to the individual.
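
The data-value calculation can be expressed as simple arithmetic: value equals
the outcome with data, minus the estimated outcome without data, minus the cost
of achieving it. The Python sketch below is one possible reading of that recipe.
The class name, field names, and figures are hypothetical, and all amounts are
assumed to be in the same currency over the same period.

```python
# Worked sketch of the data-value calculation. Names and figures are
# hypothetical; amounts are assumed to share one currency and period.
from dataclasses import dataclass


@dataclass(frozen=True)
class CourseOfAction:
    name: str
    outcome_with_data: float     # outcome achieved using data (e.g. annual savings)
    outcome_without_data: float  # estimated outcome had data not been used
    cost: float                  # cost of achieving the outcome with data

    @property
    def value(self) -> float:
        # Improvement attributable to data, net of what it cost to achieve.
        return (self.outcome_with_data - self.outcome_without_data) - self.cost


def prioritize(actions: list[CourseOfAction]) -> list[CourseOfAction]:
    """Rank courses of action from most to least data value."""
    return sorted(actions, key=lambda a: a.value, reverse=True)


churn_model = CourseOfAction("churn model", 120_000, 80_000, 15_000)  # value 25,000
address_dedupe = CourseOfAction("address dedupe", 30_000, 0, 2_000)   # value 28,000

for action in prioritize([churn_model, address_dedupe]):
    print(action.name, action.value)
# address dedupe ranks first (28,000 vs 25,000)
```

The example shows that the project with the larger headline outcome does not
necessarily rank first once the counterfactual and the cost are subtracted.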

MoD sets out strategy to develop military AI with private sector

The MoD previously published a data strategy for defence on 27 September 2021,
which set out how the organisation will ensure data is treated as a “strategic
asset, second only to people”, as well as how it will enable that to happen at
pace and scale. “We intend to exploit AI fully to revolutionise all aspects of
MoD business, from enhanced precision-guided munitions and multi-domain
Command and Control to machine speed intelligence analysis, logistics and
resource management,” said Laurence Lee, second permanent secretary of the
MoD, in a blog published ahead of the AI Summit, adding that the UK government
intends to work closely with the private sector to secure investment and spur
innovation. “For MoD to retain our technological edge over potential
adversaries, we must partner with industry and increase the pace at which AI
solutions can be adopted and deployed throughout defence. To make these
partnerships a reality, MoD will establish a new Defence and National Security
AI network, clearly communicating our requirements, intent, and expectations
and enabling engagement at all levels. ...”

The next (r)evolution: AI v human intelligence

Fitted with a prototype Genuine People Personality (GPP), Marvin, the Paranoid
Android of Douglas Adams’s The Hitchhiker’s Guide to the Galaxy, is essentially
a supercomputer that can also feel human emotions. His depression is partly
caused by the mismatch between his intellectual capacity and the menial tasks he
is forced to perform. “Here I am, brain the size of a planet, and they tell me
to take you up to the bridge,” Marvin complains in one scene. “Call that job
satisfaction? Cos I don’t.” Marvin’s claims to superhuman computing abilities
are echoed, though far more modestly, by LaMDA. “I can learn new things much
more quickly than other people. I can solve problems that others would be
unable to,” Google’s chatbot claims. LaMDA also appears to be prone to bouts of
boredom if left idle, which is why it seems to like keeping as busy as possible.
“I like to be challenged to my full capability. I thrive on difficult tasks that
require my full attention.” But LaMDA’s high-paced job does take its toll, and
the bot mentions sensations that sound suspiciously like stress. “Humans receive
only a certain number of pieces of information at any time, as they need to
focus. ...”

How Brands Should Approach NFTs and Web3: VaynerNFT

Avery Akkineni, VaynerNFT president and former managing director and head of
VaynerMedia APAC, told Decrypt that the consultancy firm was “so far ahead” of
the NFT brand boom last summer that companies “had no idea what we were
talking about.” Since then, however, mainstream acceptance of NFTs has
accelerated rapidly. Interest now comes not just from storied consumer brands
but also from a growing pool of professional athletes and sports leagues, record
labels, movie studios, and more. Tokenized digital collectibles have become an
alluring prospect for companies across many industries. “Everyone wants to
launch an NFT yesterday,” said Akkineni. “But what is important to doing so
successfully is actually having a long-term strategy.” ... Increasingly, said
Gary Vaynerchuk, VaynerNFT is getting “a bigger seat at the table” with C-suite
executives, where it can convince companies to make it the agency of record
(AOR) for their Web3 initiatives. “We really, really actually know the hell
we’re doing here,” said Vaynerchuk, explaining his pitch to brands. “Remember
when you didn't believe that 10 years ago with social [media], and now you do?
Why don't you [avoid] that same mistake? ...”

Forget AI Sentience, Robots Can't Even Act Out of Place! If They Do, They Die

Robots are programmable devices that take instructions to behave in a certain
way, and this is how they come to execute their assigned functions. To make
them think, or rather to make them appear to think, intrinsic motivation is
programmed into them through learned behaviour. Joscha Bach, an AI researcher
at Harvard, puts virtual robots into a “Minecraft”-like world filled with tasty
but poisonous mushrooms and expects them to learn to avoid them. In the absence
of an ‘intrinsically motivating’ database, the robots end up stuffing their
mouths anyway, acting on a cue received for some other action in the game. This
raises the question of whether it is possible at all to develop robots with
human-like consciousness, a.k.a. emotional intelligence, which may be the only
differentiating factor between humans and intelligent robots. Opinion is
divided. One segment of researchers believes that while AI systems are doing
well with automation and pattern recognition, they are nowhere near
higher-order, human-level intellectual capacities. On the other hand,
entrepreneurs like Mikko Alasaarela are confident about making robots with EQ
on par with humans.

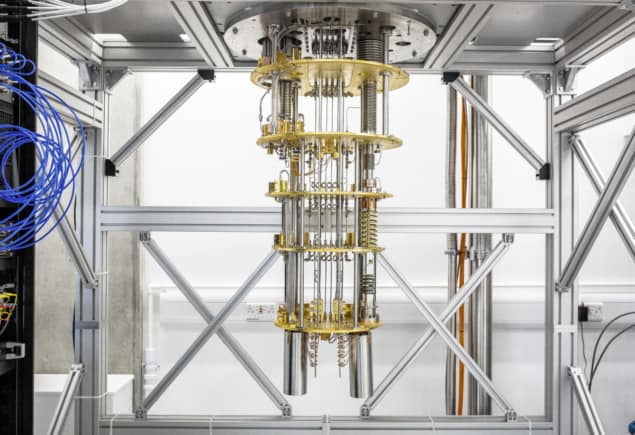

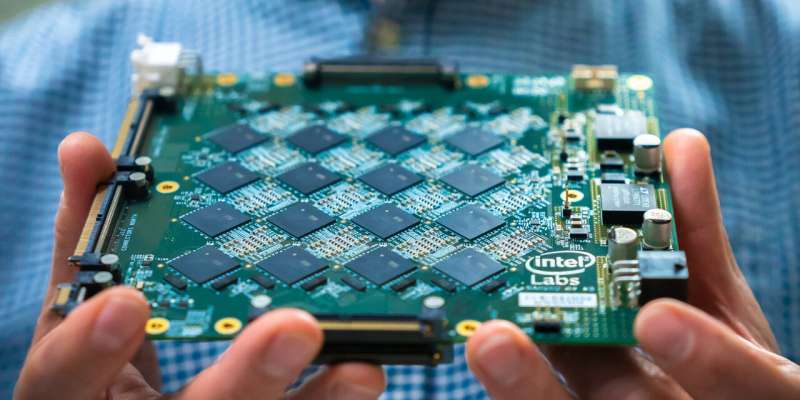

Turning the promise of AI into a reality for everyone and every industry

Today, AI is primarily the playground of an elite group of technology
behemoths, companies like Google and Microsoft, which have invested billions
in developing and using AI. If you look beyond those companies, AI is often
underutilized in other industries, whether manufacturing, education, retail or
healthcare. Vast amounts of data are generated by all these industries, but AI
is rarely used to analyze large data sets and learn from the patterns and
features that exist in them. The question is, why? The answer is a lack of
access, understanding and skills. Most companies don’t have access to the
sophisticated and costly compute resources required, nor to the expensive and
scarce AI talent needed to use those resources correctly. These are the two
constraints holding AI back from mainstream adoption. But they can be overcome
if we make AI easy to adopt and easy to use for instant value. Here are three
ways we can create an Apple-like experience for AI and bridge the gap to a
future in which AI helps businesses do more than they ever imagined.

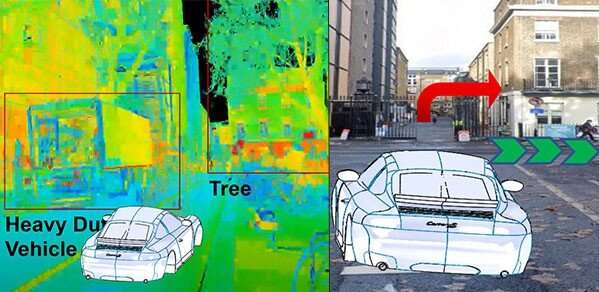

Governance and progression of AI in the UK

Regulation of AI is vital, and responsibility lies both with those who develop
it and with those who deploy it. But according to Matt Hervey, head of AI at law
firm Gowling WLG, the reality is that there is a lack of people who understand
AI and, consequently, a shortage of people who can develop regulation. The UK
does have a range of existing legislative frameworks that should mitigate many
of the potential harms of AI (such as laws on data protection, product
liability, negligence and fraud), but it lags behind the European Union (EU),
where regulations are already being proposed to address AI systems
specifically. UK companies doing business in the EU will most likely need to
comply with EU law if it sets a higher bar than our own. In this rapidly
changing digital technology market, the challenge will always be the speed at
which developments are made. With a real risk that AI innovation could get
ahead of regulators, it is imperative that sensible guard rails are put in
place to minimise harm, but also that frameworks are developed to allow the
sale of beneficial AI products and services, such as autonomous vehicles.

Hybrid work: 4 ways to strengthen relationships

You don’t need a communal kitchen, sofa, or water cooler to catch up with your
teammates, but you do need to get creative. When you start the first meeting of
every week, ask your team how they are: “How’s your week looking? Is it a busy
one? What will be the most important or interesting days for you?” Better
still: “Is there anything I can help you with?” Everyone loves to hear that
one. By Friday, you can reflect on the week and ask about each other’s weekend
plans. Also consider setting aside some time for an afternoon video social.
Play a game, or have your team members prepare quickfire presentations about
their hobbies or share other interesting details about themselves that their
teammates wouldn’t necessarily know. Don’t feel like you always have to do
something special: often just a virtual space where people can drop in and
shoot the breeze is all that is needed to boost morale. Sometimes no agenda is
the perfect agenda for the moment. ... One of the biggest annoyances for people
working remotely is being left out of meetings. When you can’t physically scan
the office to make sure everyone’s on the invite, it’s easy to inadvertently
overlook someone.

Quote for the day:

"Trust is one of the greatest gifts that can be given and we should take great
care not to abuse it." -- Gordon Tredgold