The cloud data migration challenge continues - why data governance is job one

How can governance help? The role of governance is to define the rules and

policies for how individuals and groups access data properties and the kind of

access they are allowed. Yet people in an organization rarely operate according

to well-defined roles. They perform in multiple roles, often provisionally.

On-ramping has to happen immediately; off-ramping has to be a centralized

function. One very large organization we dealt with discovered that departing

employees still had access to critical data for seven to nine days! So how can

data governance support more intelligent data security? After all, without

governance, security would be arbitrary. Many organizations that employ security

schemes struggle because such schemes tend to be either too loose or too tight

and almost always too rigid (insufficiently dynamic). In this way, security can

hinder the progress of the organization. Yet, given the complexity of data

architecture today, it’s become impossible to manage security for individuals

without a coherent and dynamic governance policy to drive security allowance or

grants for exceptions to those rules.
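
One way to picture a "dynamic" policy of this kind is a central registry in which every grant is provisional and expires on its own, and off-ramping is a single call. The sketch below is only an illustration under those assumptions; the names `Grant`, `AccessRegistry`, and `offboard`, and the "read"/"write" access levels, are hypothetical and do not come from the article.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Grant:
    user: str
    dataset: str
    access: str           # hypothetical access levels, e.g. "read" or "write"
    expires_at: datetime  # every grant is provisional and lapses on its own


class AccessRegistry:
    """Single, central place where grants are issued, checked, and revoked."""

    def __init__(self) -> None:
        self._grants = []

    def grant(self, user: str, dataset: str, access: str,
              now: datetime, ttl: timedelta) -> Grant:
        """On-ramp immediately: record a time-boxed grant for one dataset."""
        g = Grant(user, dataset, access, now + ttl)
        self._grants.append(g)
        return g

    def is_allowed(self, user: str, dataset: str, access: str,
                   now: datetime) -> bool:
        """Allow access only while a matching, unexpired grant exists."""
        return any(
            g.user == user and g.dataset == dataset
            and g.access == access and now < g.expires_at
            for g in self._grants
        )

    def offboard(self, user: str) -> int:
        """Off-ramp centrally: drop every grant the user holds, return the count."""
        before = len(self._grants)
        self._grants = [g for g in self._grants if g.user != user]
        return before - len(self._grants)
```

Because revocation lives in one place, a departing employee loses access as soon as `offboard` is called rather than days later, and a temporary role simply lapses when its time limit runs out.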

Cybersecurity and the Pareto Principle: The future of zero-day preparedness

There’s a good reason why software asset inventory and management is the

second-most important security control, according to the Center for Internet

Security’s (CIS) Critical Security Controls. It’s “essential cyber hygiene” to

know what software is running and to be able to access that up-to-date

information instantaneously. It’s as though you were a new master-at-arms for a

local baron in the Middle Ages. Your first duty would be to map out the castle

grounds that you are charged to protect. ... As we put Log4Shell behind us,

let’s incorporate these lessons learned for a more prepared future. The

allocation of resources by enterprise security teams needs to be more

purposeful, as attackers become increasingly sophisticated and continue to have

what feels like unlimited resources. The value added through clear visibility

and real-time insights into your entire ecosystem becomes all the more

important. Remember, the core scope of the security team is to create a secure

IT ecosystem, mitigate the exploit of known vulnerabilities and monitor for any

suspicious activity.

Expect to see more online data scraping, thanks to a misinterpreted court ruling

What can and should IT do about that? Given that these are generally

publicly-visible pages, it’s a problem. There are few technical methods to block

scrapers that wouldn’t cause problems for the site visitors the enterprise

wants. Years ago, I was managing a media outlet that was making a huge move to

premium content, meaning that readers would now have to pay for selected premium

stories. We ran into a problem. We couldn’t allow people to freely share premium

content, as we needed people to buy those subscriptions. That meant that we

blocked cut-and-paste and specifically blocked someone from saving the page as a

PDF. But that meant that those pages also couldn’t be printed. (Saving as PDF is

really printing to PDF, so blocking PDF downloads meant blocking all printers.)

It took just a couple of hours before new premium subscribers screamed that they

paid for access and they need to be able to print pages and read them at home or

on a train. After quite a few subscribers threatened to cancel their paid

subscriptions, we surrendered and reinstated the ability to print.

Unpatched DNS Bug Puts Millions of Routers, IoT Devices at Risk

The Log4Shell flaw affects the ubiquitous open-source Apache Log4j framework, found in

countless Java apps used across the internet. In fact, a recent report found

that the flaw continues to put millions of Java apps at risk, though a patch

exists for the flaw. Though it affects a different set of targets, the DNS flaw

also has a broad scope not only because of the devices it potentially affects,

but also because of the inherent importance of DNS to any device connecting over

IP, researchers said. DNS is a hierarchical database that serves the integral

purpose of translating a domain name into its related IP address. To distinguish

the responses of different DNS requests, aside from the usual 5-tuple (source IP,

source port, destination IP, destination port, protocol) and the query, each DNS

request includes a parameter called “transaction ID.” The transaction ID is a

unique number per request that is generated by the client and added in each

request sent. It must be included in a DNS response to be accepted by the client

as the valid one for the request, researchers noted. “Because of its relevance, DNS

can be a valuable target for attackers,” they observed.
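
The transaction ID check described above can be sketched in a few lines. This is a minimal illustration, not any particular resolver's implementation: it packs only the 12-byte DNS header (the ID is the first 16 bits, and the top bit of the flags word marks a response) and leaves out the question section and the 5-tuple checks. The function names are hypothetical, and drawing the ID from Python's `secrets` module is an assumption made here so that the value is hard to guess.

```python
import secrets
import struct


def new_transaction_id() -> int:
    """Pick an unpredictable 16-bit transaction ID for a new request."""
    return secrets.randbelow(1 << 16)


def build_query_header(txid: int) -> bytes:
    """Pack a 12-byte DNS header: ID, flags (recursion desired), one question."""
    return struct.pack("!HHHHHH", txid, 0x0100, 1, 0, 0, 0)


def response_matches(query: bytes, response: bytes) -> bool:
    """Accept a response only if it is flagged as one and echoes the query's ID."""
    if len(query) < 12 or len(response) < 12:
        return False
    (query_id,) = struct.unpack("!H", query[:2])
    response_id, response_flags = struct.unpack("!HH", response[:4])
    is_response = bool(response_flags & 0x8000)  # QR bit set means "response"
    return is_response and response_id == query_id
```

A client would call `new_transaction_id()` once per request, send the packed query, and discard any incoming packet for which `response_matches` returns `False`.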

Managed services vs. hosted services vs. cloud services: What's the difference?

Managed service providers (MSPs) existed first - before we were talking about

the big public cloud providers. “I’ve seen some definitions where MSPs are a

superset and all CSPs are MSPs, but not all MSPs are CSPs. That seems a

reasonable definition to me,” says Miniman. One historical example of a managed

service provider you may know is Rackspace: Their company name literally

reflected that you were buying space in their rack to run workloads. The way

their business started out was as a hosted service: Your server ran in

Rackspace’s data center. But Rackspace also offered other types of services to

customers - managed services. ... “When I think of a hosted environment, that is

something dedicated to me,” says Miniman. “So traditionally, there was a

physical machine…that maybe had a label on it. But definitely from a security

standpoint, it was ‘company X is renting this machine that is dedicated to that

environment.’” Public cloud service providers sell hundreds of services: You can

think of those as standard tools, just like you’d find standard metric tools

walking into any hardware store.

Making Agile Work in Asynchronous and Hybrid Environments

The ideal state for asynchronous teams is to remain aligned passively - or with

little effort - eliminating the need for frequent meetings or lengthy

documentation of the minutiae of every project. To pull this off, visual

collaboration should be a key element of Agile management for teams that are

working remotely and asynchronously. Visual collaboration brings the ease of

alignment of the whiteboard into the digital workplace, giving developers a

living artifact of project plans that can include diagrams, UX mockups, embedded

videos, and other communication tools that can make async work nearly

error-proof. Our team at Miro uses a variety of visual tools to manage our

development, and many of these tools are available as free templates that other

teams can use. The agile product roadmap helps prioritize work and shift tasks

as priorities change. And the product launch board helps our team visually align

design, development, and GtM teams as we come down to the wire on a new launch.

The shared nature of these tools gives us confidence as we work.

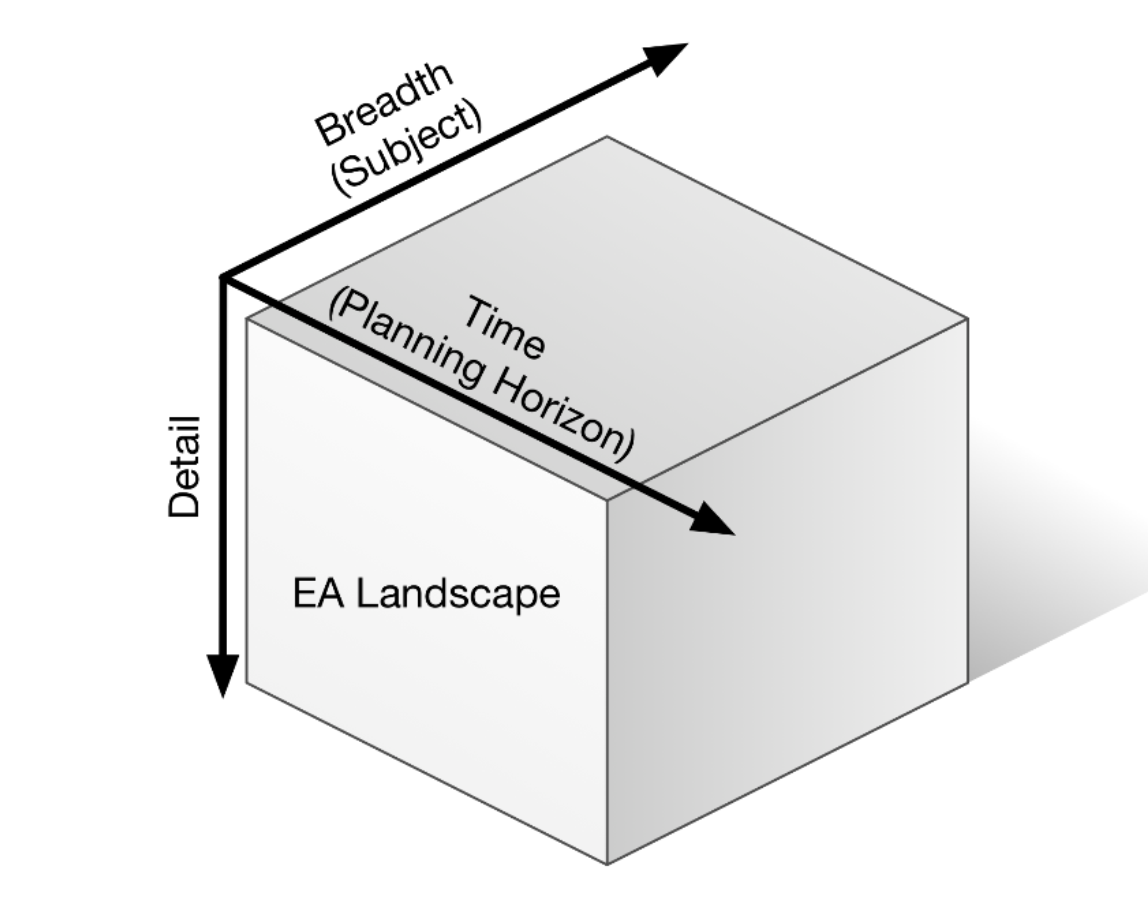

Three steps to an effective data management and compliance strategy

Businesses clearly need to know more about their data to meet compliance

needs, but the challenge is sorting through the noise in all the volume. Data

analytics is essential for enterprises looking to increase efficiency, improve

business decision-making and attain that important competitive edge while

still ensuring that they comply with today’s data standards. However, while

big data can add significant value to the decision-making process, supporting

large volumes of unstructured data can be complex, as inadequate data

management and data protection introduce unacceptable levels of risk. The

emergence of DataOps, which is an automated and process-oriented methodology

aimed at improving the quality of data analytics, further supports the

requirement for enhanced data management. Driving faster and more

comprehensive analytics is key to leveraging value from data, but this can

only be done if data is managed correctly, the right governance protocols are

in place, and data quality is kept to the highest standard.

5 key industries in need of IoT security

The growth of IoT has spurred a rush to deploy billions of devices worldwide.

Companies across key industries have amassed vast fleets of connected devices,

creating gaps in security. Today, IoT security is overlooked in many areas.

For example, a sizable percentage of devices share the userID and password of

“admin/admin” because their default settings are never changed. The reason

security has become an afterthought is that most devices are invisible to

organizations. Hospitals, casinos, airports, cities, etc. simply have no way

of seeing every device on their networks. ... Cities rely on 1.1 billion IoT

devices for physical security, operating critical infrastructure such as traffic

control systems, street lights, subways, emergency response systems and more.

Any breach or failure in these devices could pose a threat to citizens. You

see it in the movies: brilliant hackers control the traffic lights across a

city, with perfect timing, to guide an armored vehicle into a trap. Then

there’s real life; for instance, when a hacker in Romania took control of

Washington DC’s outside video cameras days before the Trump inauguration.

Getting strategy wrong—and how to do it right instead

Making matters more complex, especially in areas of public policy and defense,

real-life leaders do not have a neat economist’s single measure of value.

Instead, they are faced with a bundle of conflicting ambitions—a group of

desires, goals, intents, values, and fears—that cannot all be satisfied

simultaneously. Forging a sense of purpose from this bundle is part of the

gnarly problem. Making matters most complex is the fact that the connection

between potential actions and actual outcomes is unclear. A gnarly challenge

is not solved with analysis or the application of preset frameworks. A

coherent response arises only through a process of diagnosing the nature of

the challenges, framing, reframing, chunking down the scope of attention,

referring to analogies, and developing insight. The result is a design, or

creation, embodying purpose. I call it a creation because it is often not

obvious at the start, the product of insight and judgment rather than an

algorithm. Implicit in the concept of insightful design is that knowledge,

though required, is not, by itself, sufficient.

Understand the 3 P’s of Cloud Native Security

The movement to shift security left has empowered developers to find and fix

defects early so that when the application is pushed into production, it is as

free as possible from known vulnerabilities at that time… But shifting

security left is just the beginning. Vulnerabilities arise in software

components that are already deployed and running. Organizations need a

comprehensive approach that spans left and right, from development through

production. While there’s no formulaic one-size-fits-all way to achieve

end-to-end security, there are some worthwhile strategies that can help you

get there. ... Shifting left can help organizations develop applications with

security in mind. But no matter how confident you are in the security of an

application when it leaves development, there is no guarantee that it will

remain secure in production. We have seen on a large scale that

vulnerabilities are often disclosed well after the code is deployed to production.

Reminders include Apache Struts, Heartbleed, and, most recently, Log4j, whose flaw

was first published in 2013 but discovered just last year.

Quote for the day:

"Leaders are more powerful role models

when they learn than when they teach." -- Rosabeth Moss Kanter