Is low-code safe and secure?

Think back to the early days of computing, when developers wrote their programs

in assembly language or machine language. Developing in these low-level

languages was difficult, and required highly experienced developers to

accomplish the simplest tasks. Today, most software is developed using

high-level programming languages, such as Java, Ruby, JavaScript, Python, and

C++. Why? Because these high-level languages allow developers to write more

powerful code more easily, and to focus on bigger problems without having to

worry about the low-level intricacies of machine language programming. The

arrival of high-level programming languages, as illustrated in Figure 1,

enhanced machine and assembly language programming and generally allowed less

code to accomplish more. This was seen as a huge improvement in the ability to

bring bigger and better applications to fruition faster. Software development

was still a highly specialized task, requiring highly specialized skills and

techniques. But more people could learn these languages and the ranks of

software developers grew.

The Real-World Advantages and Disadvantages of Low-Code Development Platforms

Low-code proponents point to what they claim is another distinct advantage: LCDP

technologies help businesses do more with less. What is more, they promise to

free skilled software engineers to focus on hard problems, on creative

solutions, on what they (i.e., proponents) call “value-creating” work, as

distinct from the types of recurrent, repeatable problems that MDSD and LCDP

technologies aim to formalize and encapsulate in reusable applications and

workflows. “We have four or five developers that … work in Mendix and they

accomplish more than a team of, no lie, probably 15 to 20 developers,” Conway

Solomon, CEO with Mendix customer WRSTBND, a company that provides

event-management software and services, told Kavanagh. “So, what kind of cost

savings is that? Especially as a small company that has a lot of ambitions,

where you know, like, a lot of extra money has been [spent] on payroll, you can

do it in a fraction of the cost and have the same outcome … if not better, and

so we use that to our advantage.”

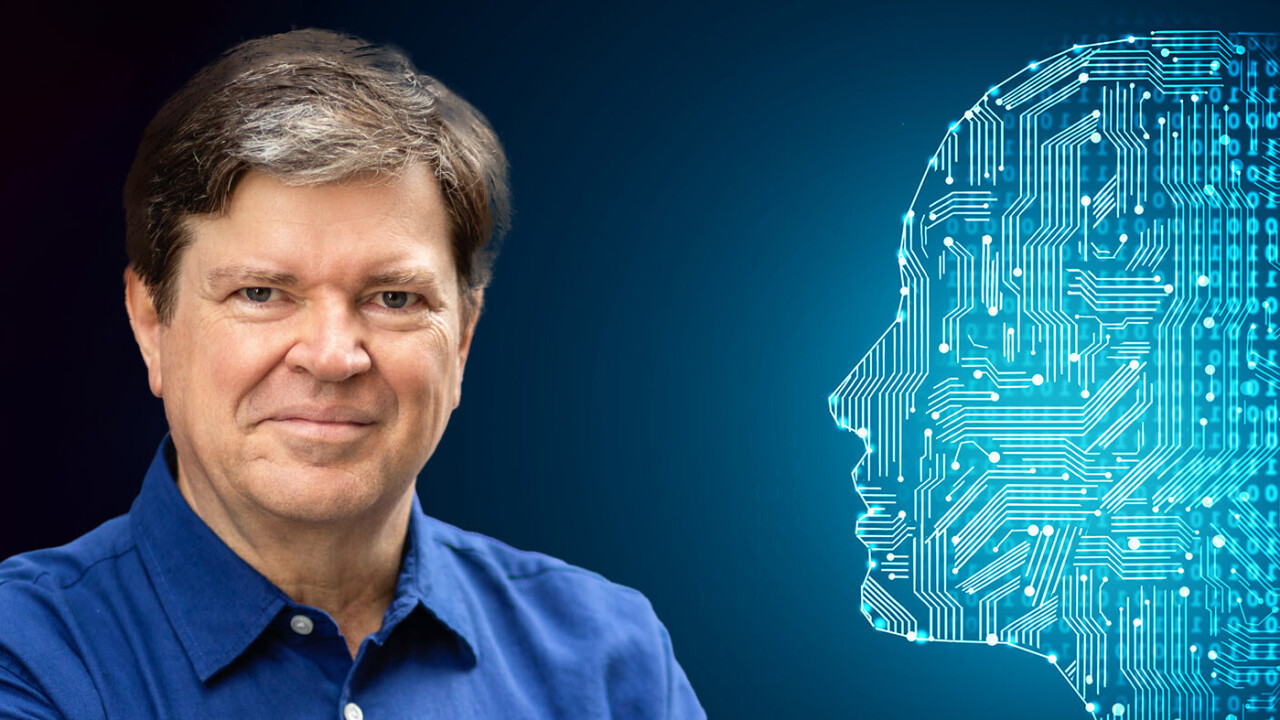

Meta’s Yann LeCun is betting on self-supervised learning to unlock human-compatible AI

The more popular branch of ML is supervised learning, in which models are

trained on labeled examples. While supervised learning has been very successful

at various applications, its requirement for annotation by an outside actor

(mostly humans) has proven to be a bottleneck. First, supervised ML models

require enormous human effort to label training examples. And second, supervised

ML models can’t improve themselves because they need outside help to annotate

new training examples. In contrast, self-supervised ML models learn by observing

the world, discerning patterns, making predictions (and sometimes acting and

making interventions), and updating their knowledge based on how their

predictions match the outcomes they see in the world. It is like a supervised

learning system that does its own data annotation. The self-supervised learning

paradigm is much more attuned to the way humans and animals learn. We humans do

a lot of supervised learning, but we learn most of our fundamental and

commonsense skills through self-supervised learning.
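
As a rough illustration of "a supervised learning system that does its own data annotation," the sketch below predicts each next word in an unlabeled stream from the previous word, checks the guess against what actually follows, and then updates its counts. The function name `self_supervised_pass` and the sample sentence are hypothetical, and a simple bigram counter is only a toy stand-in for the models the excerpt describes.

```python
from collections import Counter, defaultdict


def self_supervised_pass(tokens):
    """Predict each next token from the previous one, then learn from the outcome.

    The "label" for every prediction is simply the token that actually comes
    next in the stream, so no outside annotator is involved.
    """
    counts = defaultdict(Counter)  # counts[prev][nxt] = times nxt followed prev
    correct = 0
    for prev, actual in zip(tokens, tokens[1:]):
        seen = counts[prev]
        # Predict: the most frequent follower seen so far, if any.
        guess = seen.most_common(1)[0][0] if seen else None
        # Compare the prediction with the observed outcome.
        if guess == actual:
            correct += 1
        # Update knowledge with the observed outcome.
        seen[actual] += 1
    return counts, correct


# Hypothetical unlabeled "observation" stream.
stream = "the cat sat on the mat and the cat sat on the rug".split()
model, hits = self_supervised_pass(stream)
print(f"correct next-word guesses: {hits} of {len(stream) - 1}")
```

Every training signal here comes from the stream itself, which is the property the excerpt contrasts with human-labeled supervised learning.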

How do we close the huge generation gap on flexible working?

There is a clear generational divide in where people want to work and how they

see the purpose of the office. For young people, flexibility is key. They want

to be in the office and connect and collaborate with co-workers face to face.

That helps them onboard, form working relationships, receive guidance and soak

up the company culture – all issues that workers have struggled with during the

pandemic, a Microsoft and YouGov study highlighted in December. The office is

often the vehicle for knowledge transfer between generations. But they also want

to work from home when they need to – to look after a sick relative or wait for

a repair engineer, for example. They don’t see this as a major issue, because

their view isn’t based on traditional ways of working in an office every day.

For them, the office space no longer stops at the office. They want to work

where they want, when they want. And they want their bosses to provide the tools

to help them do that. Last year, Microsoft’s Work Trend Index found that 42

percent of employees who worked from home lacked office essentials, and one in

10 didn’t have an adequate internet connection to do their job.

How Do You Identify a Successful Scrum Master?

The Scrum team delivers a valuable Increment every single Sprint. As a

framework, Scrum focuses on delivery. Admittedly, this comes with many

challenges. However, if a Scrum team is not regularly creating value for the

(internal and external) stakeholders, everything else is of lesser importance.

(A secondary positive effect of regularly delivering valuable Increments is

building trust among stakeholders. Typically, building trust with them results

in less supervision, for example, in the form of reporting duties or committees

messing with Scrum—you get the idea. All of this is bolstering self-management,

thus making working as a Scrum team more effective and enjoyable.) ... Other

people want to join the Scrum team because nothing succeeds like success.

(People voting with their feet is an excellent indicator for Scrum Master

success, and it applies in both directions. My tip: Run regular, anonymous

surveys in the Scrum team and ask whether team members would recommend an open

position in the organization to a good friend with an agile mindset and track

the development of this “employer NPS®” regularly to spot trends.)
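
One way to track the survey tip above is sketched below. It assumes the conventional Net Promoter Score scheme (answers on a 0 to 10 scale, promoters scoring 9 or 10, detractors scoring 0 to 6), which the excerpt does not spell out. The function name `employer_nps` and the survey data are hypothetical.

```python
def employer_nps(scores):
    """Return % promoters minus % detractors for 0-10 survey answers."""
    if not scores:
        raise ValueError("need at least one survey response")
    promoters = sum(1 for s in scores if s >= 9)   # answers of 9 or 10
    detractors = sum(1 for s in scores if s <= 6)  # answers of 0 through 6
    return 100 * (promoters - detractors) / len(scores)


# Hypothetical anonymous survey results, one list of answers per quarter.
surveys = {
    "Q1": [9, 10, 7, 6, 8, 9],
    "Q2": [10, 9, 9, 9, 8, 7],
}

# Score each round so changes over time are easy to spot.
trend = {quarter: round(employer_nps(answers), 1)
         for quarter, answers in surveys.items()}
print(trend)
```

Comparing the per-round values rather than a single snapshot matches the advice to watch for trends.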

Knox Wire Introduces an Eye-opening Network for Global Financial Settlements

The Knox Wire system was built by utilising world-class distributed ledger

technology while further integrating artificial intelligence to improve its

efficiency. It supports security, information authentication, and information

storage on the network. The company relies on the combined effort of its team,

with extensive experience in the development and finance sectors, to create

the global settlement network. It also maintains professionalism throughout its

interactions with institutions, hoping to revolutionise financial systems through innovation.

The endgame is to benefit users, institutions, and eventually, governments. The

onboarding process for financial institutions is straightforward and begins

with an agreement with the platform. The institution signs a

contract with the settlement network and sets up its chosen employees by

creating accounts on the Knox Wire system. Then, the network will provide AI

integrations alongside its API parameters to support all the processes.

Fintech Roundup: Due diligence makes a comeback and a former Better.com employee speaks out

We all knew – or at least some of us did, ahem – that this was likely not

sustainable in the long term. Investors appeared to be backing some startups in

part due to FOMO, and that’s not necessarily a good thing. So as the first

quarter draws to a close, it’s clear that while in no way have fundraises come

to a screeching halt, investors are starting to pump the brakes. Generally, it

appears we are experiencing a market pullback – which Alex touches on in this

piece – precipitated by a number of things, not the least of which are the

conflict in Ukraine and disappointing performances by companies that went public

in the last year. And fintech, last year’s rising star of venture, is not

immune. My former colleague, Joanna Glasner, at Crunchbase News published a

story on March 7 indicating that venture capitalists’ enthusiasm for fintech

seems to be waning as of late. Her data point, according to Crunchbase data, was

that in the two weeks leading up to her post, a total of 51 fintech companies

across the globe collectively had raised $1.1 billion in seed through late-stage

venture funding.

Ensuring safety of digital communities with next-gen AI and proactive care

Safety would be easier to achieve if there were only one type of problematic

behaviour online, but there are so many different categories in places you don’t

expect. It’s become more difficult for consumers to protect their privacy when

there’s so much software beyond the layperson’s understanding. Over a decade

ago, a Cambridge Analytica-linked firm abused platforms to deceive people who

held too much trust in what they saw online, swaying an election in Trinidad by

encouraging people to abstain from voting, ultimately leading to the opposition

party winning. It was made to look like a natural resistance movement, but it

was engineered through corrupt practices. Coronavirus disinformation online has

been a major battleground in the last few years. It’s hard to estimate how many

lives have been potentially lost because people trusted unverified sources. The

need for platforms to moderate user-generated content has never been more

severe. Schwartz points to the importance of detecting issues early, saying, “If

harmful online activity is left unchecked, its reach can grow rapidly and

fester, exposing countless users to violent, extremist, or misleading

content.”

Talent Shortage: Are Universities Delivering Well-Prepared IT Graduates?

Catherine Southard, vice president of engineering at D2iQ, says her company

hasn’t had much success finding new grads with experience in Kubernetes and the

Go programming language, in which D2iQ’s product is primarily developed. “Part

of that is because the tech landscape changes so quickly. It would be great for

a representative from tech companies -- maybe a panel of CTOs -- to sit down

with curriculum developers every couple of years and talk through industry

trends and where technology is headed, and then brainstorm how to bridge the gap

between university and industry,” she says. Southard added that one thing students

can do is research jobs that look interesting, then see what tech stack those

companies are using. They can then equip themselves to land those jobs by

studying up on that technology by using free resources online or taking courses.

She sees another area of improvement in support for internship programs.

Historically, D2iQ had a program in the US, but it was expensive to operate, and

it didn't lead to long-term employee retention, except for a couple of stand-out

talents.

IT talent: Rethinking age in the hybrid work era

Never have an organization’s technical capabilities mattered less to its

long-term differentiation and competitiveness. The rise of accessible,

affordable outsourced vendors, SaaS platforms, and capabilities-on-demand means

that most companies have the ability to acquire whatever leading-edge

technologies and skill sets are needed at the moment. Leaders know the companies

that win are the ones that get the most out of their people and teams. Resumes

don’t tell us much about the skills that matter most in our current climate, and

the computers we “hire” to read resume keywords tell us even less. These

workers, having seen and been through countless configurations of teams,

conflict, and trends, have figured out how to focus on what makes a difference.

We might learn more from them on how to spot and hire the unique capabilities

that real people bring to real-people solutions in our workforce. ... As our

work becomes physically less proximate, we need to find ways to seek out

guidance – not just in classes and courses, but in real time, from our

colleagues.

Quote for the day:

"Your first and foremost job as a leader

is to take charge of your own energy and then help to orchestrate the energy

of those around you." -- Peter F. Drucker
