Decentralized identity using blockchain

Let’s assume an online shopping scenario where the required data will transit

from the wallet associated with the decentralized identity. The wallet in this

scenario contains the verified identity, address, and financial data. The users

share identity data to log in to the website by submitting the required

information from the identity wallet. They are authenticated with the website

without sharing the actual data. The same scenario applies to the checkout

process; a user can place an order with the address and payment source already

verified in his identity wallet. Consequently, a user can go through a smooth

and secure online shopping experience without sharing an address or financial

data with an ecommerce website owner. ... Blockchain technology uses a consensus

approach to prove the data authenticity through various nodes and acts as the

source of trust to verify user identity. Along with the data, each block also

contains a hash that changes if someone tampers with the data. These blocks form a

highly encrypted list of transactions or entries shared across all the nodes

distributed throughout the network.
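
The tamper-evidence property described above can be illustrated with a minimal sketch. It assumes SHA-256 as the hash and a simple in-memory list as the chain; the function names (`block_hash`, `build_chain`, `is_valid`) and the sample entries are hypothetical, and the sketch leaves out consensus, encryption, and distribution across nodes entirely.

```python
import hashlib
import json


def block_hash(index, data, prev_hash):
    """Return the SHA-256 hex digest of a block's contents."""
    payload = json.dumps(
        {"index": index, "data": data, "prev_hash": prev_hash},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def build_chain(entries):
    """Link entries into blocks, each storing the previous block's hash."""
    chain = []
    prev_hash = "0" * 64  # placeholder value for the first block (an assumption)
    for index, data in enumerate(entries):
        digest = block_hash(index, data, prev_hash)
        chain.append(
            {"index": index, "data": data, "prev_hash": prev_hash, "hash": digest}
        )
        prev_hash = digest
    return chain


def is_valid(chain):
    """Recompute every hash and check each link to the previous block."""
    prev_hash = "0" * 64
    for block in chain:
        if block["prev_hash"] != prev_hash:
            return False
        recomputed = block_hash(block["index"], block["data"], block["prev_hash"])
        if recomputed != block["hash"]:
            return False
        prev_hash = block["hash"]
    return True


chain = build_chain(
    ["identity verified", "address verified", "payment source verified"]
)
print(is_valid(chain))  # True: nothing has been altered

chain[1]["data"] = "address changed"  # simulate tampering with one entry
print(is_valid(chain))  # False: the stored hash no longer matches the data
```

Because each block stores the hash of the block before it, changing one entry invalidates that block's stored hash. To hide the change, an attacker would have to recompute every later block as well, which the network's other nodes are positioned to detect.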

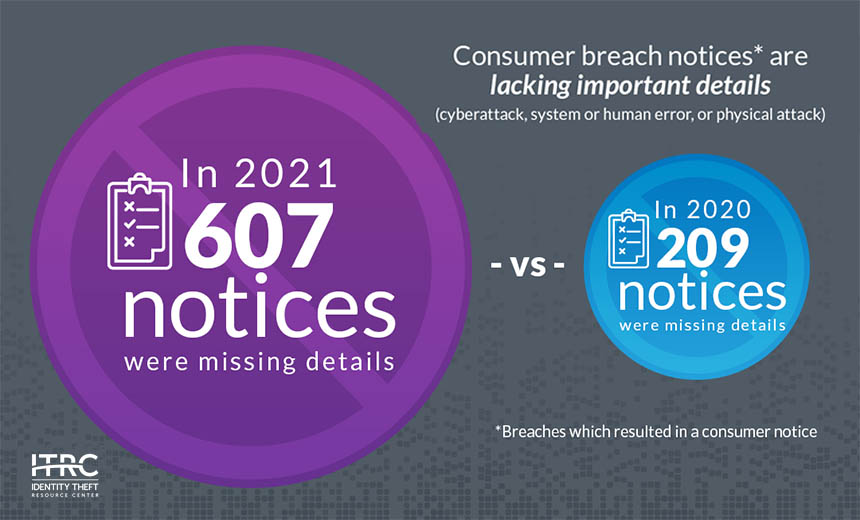

Breach Notification: Poor Transparency Complicates Response

Unfortunately, data breach experts continue to see increasing transparency

shortfalls, both from organizations that fall victim and from regulators. In

2020, for example, 209 consumer breach notifications lacked important details,

while in 2021, 607 breaches lacked such details. So says the Identity Theft

Resource Center, a nonprofit organization based in San Diego, California, that

provides no-cost assistance to U.S. identity theft victims to help resolve their

cases (see: Data Breach Trends: Global Count of Known Victims Increases). "The

lack of actionable information in breach notices prevents consumers from

effectively judging the risks they face of identity misuse and taking the

appropriate actions to protect themselves," ITRC says in its latest Annual Data

Breach Report, looking at 2021 trends. "A decrease in timely notices posted by

states, including one state that updated breach notices in December 2021 for the

first time since the fall of 2020, also prevents consumers from taking action to

protect themselves and organizations that assist identity crime victims from

offering timely, effective advice."

Entrepreneurship for Engineers: How to Build a Community

You probably already know that understanding and being able to articulate your

product’s value proposition is critical to successful sales and marketing — but

your community needs to add value, too, above and beyond the value that the

product/project provides. “No one wakes up in the morning and thinks ‘I’m going

to go and answer questions on the internet,’” Bacon said. People need to get

something out of participating in the community that they can’t get anywhere

else. “People love the community aspects,” said Ketan Umare, co-founder and CEO

of Union.ai, the company behind Flyte, an open source workflow automation

platform for data and machine learning processes, describing his experience building a

community with a value proposition above and beyond the project’s value. “We

guarantee you that in the community, there is somebody to listen to your

problems,” Umare said. “It creates this feeling that you are not alone.”

What SREs Can Learn From Capt. Sully: When To Follow Playbooks

What’s interesting about Sully’s story is that he didn’t do exactly what pilots

(or engineers) are trained to do. He didn’t stick completely to the playbook

that a pilot is supposed to follow during engine failure, which stipulates that

the plane should land at the nearest airport. Instead, he made a decision to

crash-land in the Hudson River. The fact that Sully did this without any loss of

human life turned him into a hero. In fact, Sully the movie almost villainizes

the National Transportation Safety Board (NTSB) for what the film presents as an

unfair investigation of Sully for not sticking to the playbook. Yet, as the

podcasters noted, the difference between heroism and villainy for Sully may

just have boiled down to luck. They pointed out that in similar incidents – like

the Costa Concordia sinking in 2012 – staff who deviated from playbooks

ended up facing stiff penalties. In the Costa Concordia case, the captain

of the boat was placed in jail – despite the fact that his decision not to stick

rigidly to the playbook most likely reduced the total loss of human life.

The truth about VDI and cloud computing

Performance is the core problem. Not all home-based Internet connections

support high speeds and low latency. Indeed, even if you pay for the faster

stuff, a few days of detailed monitoring will show that latency and speed are

pretty bursty overall. VDI, depending on what you’re leveraging, indeed keeps

data and applications centrally located and thus hopefully secure. But both

application images and data must be constantly transmitted to the employees’

devices and interactions transmitted back to the virtual servers. They are

very chatty. This is unlike applications that run locally and have data stored

locally, where the response is nearly instantaneous. Most of us are used to

this kind of performance. Latency, even if it’s not noticeable by most remote

workers, can add up to productivity losses that run into many millions of

dollars a year. Many of the savvier remote workers have worked around the

performance issues by moving some of the data to local storage on their

devices (such as with email), thus causing a potential security problem if the

device is hacked or stolen.

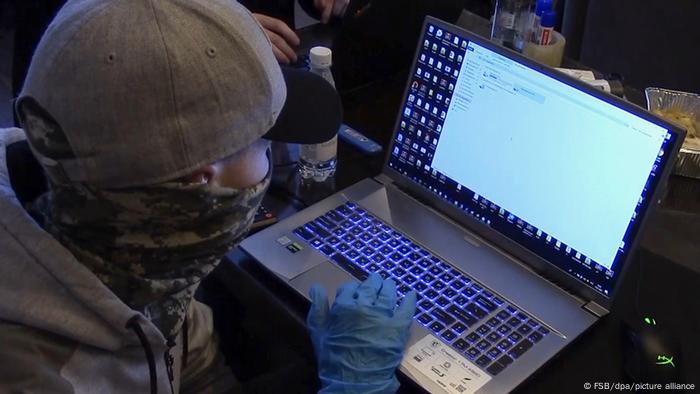

Ukraine: How to protect yourself against cyberattacks

Experts say they are currently more concerned with institutional rather than

personal cyber hacks. But attacks on individual accounts owned by private

citizens, who work for institutions that handle sensitive information, are

still a risk. "People who are not wary are often the weakest link and the foot

in the door for cybercriminals looking to stage a larger attack on critical

infrastructure," Rachel Schutte, an IT and cybersecurity manager based in

Germany, told DW. This was the case for European government personnel involved

in assisting refugees fleeing Ukraine. They received phishing emails — or

messages aimed at collecting sensitive information — from a Ukrainian armed

service member’s compromised account, she said. In response to increased

instances of cyberattacks aimed at employees of high-profile organizations,

Deutsche Welle has also asked employees to ramp up security on personal social

media accounts. ... Cloud-based services distribute distinct functions

across data centers in multiple locations, fueling a race towards

interconnected networks.

Finance firms scrape alternative data from unexpected sources

In light of the "Great Resignation" and unprecedented job mobility in part

sparked by the pandemic, such data about job happiness is "top of mind for

investors today," Lopata said. Another timely use for alternative data is

tracking how inflation in the U.S. is disrupting markets. Thinknum is

following used car sales on CarMax and Carvana, two of the big auto sales

apps. "We're tracking all that data in real time down to a VIN number, so that

allows you to understand whether prices are peaking," Lopata said. "Beyond

just tracking the peaks … we're tracking when the peak ends." "We're able to

identify that in January '22, we finally started to see some decrease in

pricing," she added. Other current market trends for which Thinknum is digging

up alternative data include changes in the food delivery services business and

cryptocurrency price fluctuations, where the vendor has discovered that

GitHub, the provider of internet hosting for software development, is a prime

source of data.

US Officials Push Collaboration, AML Controls for Crypto

According to Conklin, the Treasury Department has for a decade targeted the

assets of Russian elites - dating back to the country's first invasion of

Crimea in 2014. "So we do know a little bit about how this regime likes to

evade sanctions and move money, and we have a significant toolkit at our

disposal now to tackle that," he said. "The regime does like to layer its

assets and move money. They have a long and extensive playbook to launder

money, and at the center of their playbook is their web of international

corporate registration and the use of foreign companies and foreign persons.

They're also really adept at conversion to other assets, including gold and

foreign currencies." And so, asked whether crypto will be a part of its

workaround, Conklin said: "Certainly, there's going to be an element. That's

part of the playbook, but it frankly isn't at the top of their list." He also

referenced Treasury's sanctioning of the Russian crypto exchange Suex in

September 2021 as an example of "how sanctions can work in the crypto

ecosystem."

Gartner: Public sector must target disjointed IT strategy

Mickoleit recommended that public sector IT chiefs “zoom out” to enable them

to look at how technology investments can be aligned with policy objectives.

As an example of joining up IT with policy, he said it is impossible to

provide high-quality public sector services without the concept of digital

identity, which needs to link across different tech infrastructure and public

sector bodies. Another aspect of the pandemic was that having “good enough”

processes is not sufficient, said Mickoleit. “Just working isn’t enough. There

were huge scaling issues, families and businesses in need.” He warned that

such a situation is not sustainable when there is a disruption. “There is a

need for efficiencies in government,” he added. This means IT leaders need to

focus on reducing the number of process steps to support case work and deliver

a service to a citizen, said Mickoleit. “There is an ideal opportunity to

combine AI and automation for better support,” he pointed out.

How to Become a Data Governance Lead

A significant problem facing businesses implementing a Data Governance program

is the realization that raw data is often not analysis-ready. The data may be

badly organized, unstructured, or stored in separate databases. The

data has to be cleaned and standardized before the Data Governance program can

move forward. Developing a Data Governance program might require a fair amount

of manual labor, but after the data has been standardized, incoming data would

be sent automatically to the appropriate location, and in the correct format.

Data silos are a slightly different problem for Data Governance programs. Data

can be stored in silos and treated as though certain teams or individuals own

it — and they sometimes don’t like to share. Additionally, different

departments may use entirely different systems, making standardization

especially difficult. These same departments may have no real understanding of

their data’s value. Data Governance will support a framework allowing access

to their data, breaking down the silos.
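
As a rough illustration of the cleaning and standardizing step described above, the sketch below maps records from two departments onto one shared format. Everything specific in it is an assumption made for the example: the field names, the alias table, and the list of date layouts are hypothetical, and a real program would derive them from its own data inventory.

```python
from datetime import datetime

# Hypothetical mapping from each department's column names to one shared schema.
FIELD_ALIASES = {
    "cust_name": "customer_name",
    "CustomerName": "customer_name",
    "signup": "signup_date",
    "SignupDate": "signup_date",
}

# Date layouts assumed to appear in the source systems.
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%m-%d-%Y")


def standardize_date(value):
    """Parse a date in any of the assumed layouts and return it as YYYY-MM-DD."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(value.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"unrecognized date format: {value!r}")


def standardize_record(record):
    """Rename fields to the shared schema and normalize whitespace and dates."""
    clean = {}
    for key, value in record.items():
        name = FIELD_ALIASES.get(key, key)
        if isinstance(value, str):
            value = " ".join(value.split())  # trim and collapse repeated spaces
        clean[name] = value
    if "signup_date" in clean:
        clean["signup_date"] = standardize_date(clean["signup_date"])
    return clean


sales_row = {"cust_name": "  Ada   Lovelace ", "signup": "03/02/2022"}
support_row = {"CustomerName": "Ada Lovelace", "SignupDate": "2022-02-03"}

# Both departments' rows end up in the same shape once standardized.
print(standardize_record(sales_row) == standardize_record(support_row))  # True
```

Keeping the alias table and date layouts as plain data rather than code means new source systems can be added by editing the tables, which fits the idea that standardization is mostly up-front manual work followed by automatic handling of incoming data.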

Quote for the day:

"A leader's dynamic does not come from

special powers. It comes from a strong belief in a purpose and a willingness

to express that conviction." -- Kouzes & Posner
