Multifactor Authentication Is Being Targeted by Hackers

Proofpoint found today’s phishing kits range from “simple open-source kits with

human-readable code and no-frills functionality to sophisticated kits utilizing

numerous layers of obfuscation and built-in modules that allow for stealing

usernames, passwords, MFA tokens, social security numbers, and credit card

numbers.” How? By sending phishing emails with links to a fake target website,

like a login page, to naive users. That, of course, is old news. Hackers have

been using that technique for ages. What this “new kind of kit” brings to the

table is a malware-planted MitM transparent reverse proxy. Residing on

the target’s PC, the proxy intercepts all traffic, including credentials and

session cookies, even if the connection is to the real site. ... One such

program, Modlishka, already automates these attacks. Polish security researcher

Piotr Duszyński said of it, “With the right reverse proxy targeting your domain

over an encrypted, browser-trusted, communication channel one can really have

serious difficulties in noticing that something was seriously wrong.”

How to choose a cloud data management architecture

Multi-cloud models incorporate one or more services from more than one cloud

provider (and optionally may include on-premises or hybrid architectures). In

this scenario, the difference is that services from multiple cloud providers are

used. A DBMS offering and the applications that rely on it may be deployed

on-premises, on one or more clouds, or both. As such, all of the considerations of

hybrid cloud may apply with the added considerations of deploying software in

multiple cloud environments. These offerings have historically been limited to

independent software vendors (ISVs) rather than native CSPs, as the ISVs have

more of a vested interest in making sure that their software runs in as many

environments as possible. However, cloud service providers are increasingly

engaging in multi-cloud and intercloud scenarios. The multi-cloud scenario

generally appeals to end users who are concerned about cloud vendor lock-in and

want to be able to move their applications easily to a different cloud provider.

How blockchain investigations work

Knowing the exact entity behind a batch of addresses can be crucial, and

blockchain intelligence companies have ways of identifying it. They aggregate

information from multiple sources, often using off-chain data to enrich their

understanding of transactions. They look at dark web forums, social media

posts, and court papers, among others. "You can be on Facebook, and you see

[someone] soliciting funds in bitcoin and there's an address there," Redbord

says. That address is copied and can be associated with a cybercriminal ring,

a terrorist organization, or other illicit entities, depending on the case.

Such nuggets of information are gathered by blockchain intelligence companies

and stored for future reference. "[We] are building a giant blacklist of

cryptocurrency addresses," Redbord adds. This process of categorizing

addresses is done in the background. Investigators using blockchain

intelligence software simply input the address corresponding to the payment.

Then, they can see the flow of digital money.
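
As a rough illustration of the lookup described above, the sketch below walks outgoing transfers from a starting address and reports any previously labeled addresses it reaches. It is not how any particular blockchain intelligence product works: the addresses, labels, transfer list, and the `trace_flow` helper are all hypothetical and exist only for this example.

```python
from collections import deque

# Hypothetical labels, standing in for the list of categorized addresses
# that is built up in the background.
LABELS = {
    "addr_ransom": "ransomware ring",
    "addr_mixer": "mixing service",
}

# Hypothetical on-chain transfers: (sender, receiver, amount).
TRANSFERS = [
    ("addr_victim", "addr_hop1", 2.0),
    ("addr_hop1", "addr_ransom", 1.5),
    ("addr_hop1", "addr_hop2", 0.5),
    ("addr_hop2", "addr_mixer", 0.5),
    ("addr_other", "addr_hop2", 3.0),
]


def trace_flow(start, transfers, labels, max_hops=5):
    """Follow outgoing transfers breadth-first from `start`.

    Returns a list of (address, label, hops) for every labeled address
    reached within `max_hops` transfers.
    """
    # Index transfers by sender so each hop is a dictionary lookup.
    outgoing = {}
    for sender, receiver, _amount in transfers:
        outgoing.setdefault(sender, []).append(receiver)

    seen = {start}
    queue = deque([(start, 0)])
    hits = []
    while queue:
        address, hops = queue.popleft()
        if address != start and address in labels:
            hits.append((address, labels[address], hops))
        if hops == max_hops:
            continue  # do not expand beyond the hop limit
        for receiver in outgoing.get(address, []):
            if receiver not in seen:
                seen.add(receiver)
                queue.append((receiver, hops + 1))
    return hits


for address, label, hops in trace_flow("addr_victim", TRANSFERS, LABELS):
    print(f"{address}: {label} ({hops} hops away)")
```

The hop limit is a design choice for the sketch: without one, a trace through a busy address can fan out to a large share of the ledger.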

Will AI Ever Become Ubiquitous?

We’re entering an era where our personal data will be more valuable than ever,

and consumers are beginning to wake up to that fact. A 2019 report

indicated that over 60 percent of respondents felt connected devices were “creepy,”

which will likely slow adoption of such devices. While all of this may sound

daunting, there are some interesting innovations addressing the pain points.

And you’re likely enjoying the benefits of this thinking without even

realizing it. To understand, we have to go into a room filled with networking

gear. Most of us are familiar with server rooms thanks to TV shows and movies

where we see some generic, but high-tech, “data center.” What most consumers

don’t realize is that companies don’t just upgrade all their data center

hardware at once. Just as you likely don’t buy a new router when you buy a new

laptop, data center components are swapped out over time, here and there, and

can wind up as a patchwork of vendors and services. Some time ago, network

administrators unified their management while allowing underlying systems to

micro-manage the individual components.

IoT Deployment – How to Secure and Deploy Internet of Things Devices

Many IoT devices are connected to the internet and can be accessed by hackers

from anywhere in the world. This makes them ripe for attack. Hackers can

exploit vulnerabilities in these devices to gain access to sensitive data or

even take control of them. Another issue is that many IoT devices are not

well-integrated into existing IT security frameworks. As a result, they may

not be properly protected against cyber threats. For example, many IoT devices

lack adequate firewalls and intrusion detection systems, making them

susceptible to attack. Finally, there is also a risk that malicious actors

could weaponize IoT devices for use in DDoS attacks or other cyberattacks. For

example, hackers could exploit vulnerabilities in smart TVs or other

internet-connected devices to launch a devastating DDoS attack against a

company or organization. To mitigate these security problems, organizations

should take steps to secure their IoT devices properly. They should ensure

that all devices have strong passwords and are routinely updated with the

latest security patches.
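
The two checks just mentioned, strong passwords and current patches, can be sketched as a simple inventory audit. This is a minimal sketch under stated assumptions: the `Device` record, the policy constants, and the sample devices are hypothetical, and a real deployment would pull this data from its own asset management system.

```python
from dataclasses import dataclass

# Hypothetical policy values, chosen only for illustration.
MIN_PASSWORD_LENGTH = 12
COMMON_PASSWORDS = {"admin", "password", "12345678", "default"}


@dataclass
class Device:
    name: str
    password: str
    firmware: tuple         # installed version, e.g. (1, 4, 2)
    latest_firmware: tuple  # newest version published by the vendor


def audit(device):
    """Return a list of findings for one device (empty if none)."""
    findings = []
    if device.password.lower() in COMMON_PASSWORDS:
        findings.append("default or common password")
    elif len(device.password) < MIN_PASSWORD_LENGTH:
        findings.append("password shorter than policy minimum")
    # Tuples compare element by element, so (1, 0, 0) < (1, 2, 0).
    if device.firmware < device.latest_firmware:
        findings.append("firmware behind latest patch")
    return findings


inventory = [
    Device("smart-tv", "admin", (1, 0, 0), (1, 2, 0)),
    Device("door-sensor", "c0rrect-horse-battery", (2, 1, 0), (2, 1, 0)),
]
for device in inventory:
    print(device.name, audit(device) or "ok")
```

Running a check like this on a schedule, rather than once at deployment, is what makes the "routinely updated" part of the advice enforceable.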

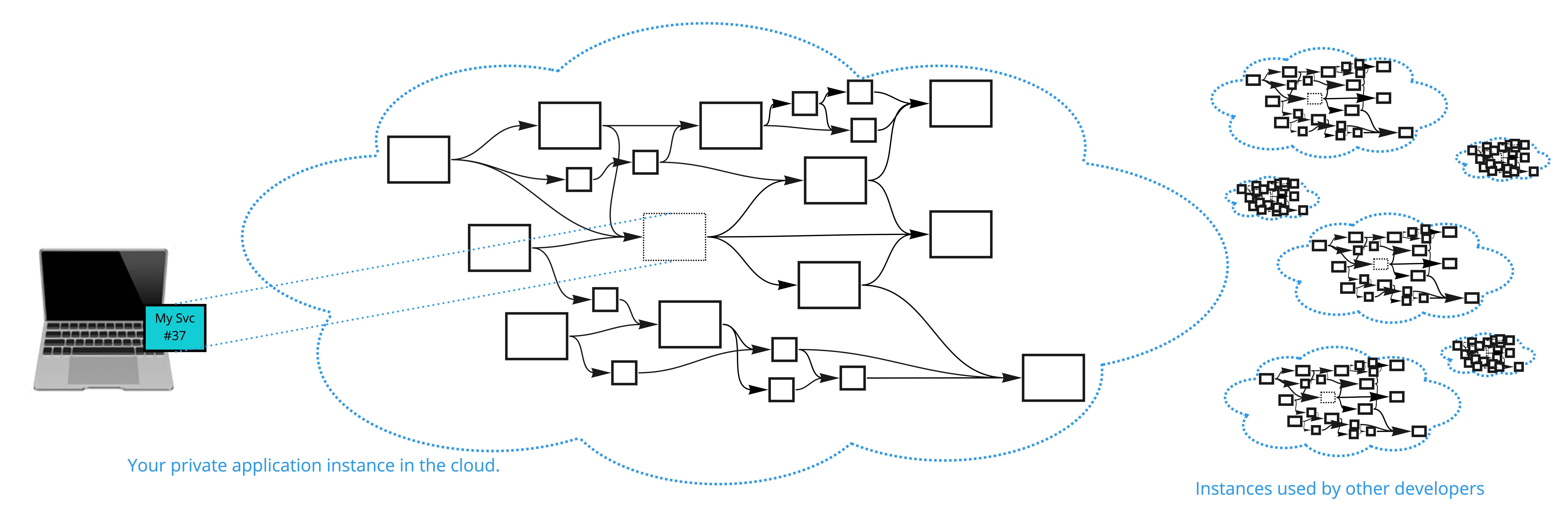

The Cloud Challenge: Choice Paralysis and the Bad Strategy of “On-Premising” the Cloud

Here is the troubling fact: most organizations know that the cloud is

different from on-prem, and most of them also know the main differences. Yet, this

knowledge doesn’t translate into better solutions. That is because most

organizations face a challenge: "With all these cloud services out there,

which one to use in each scenario?" Too many choices can lead

developers/architects to some kind of decision paralysis. Instead of going

through the many choices, they just resort to the most familiar. In the case

of organizations that are used to building on-prem, this often means choosing

the old stack without even considering the alternatives. Having tens of cloud

services is indeed a challenge (Azure has 400+ different services at the time

of writing this, and each service might have tens of built-in capabilities).

However, it is still a good challenge to have. That is because if you’re not

dealing with resolving this challenge, you’re effectively dealing with the

challenge of how to make the cloud behave like on-prem.

Software development is changing again. These are the skills companies are looking for

Today, good developers work across the stack – in fact, their success relies

on their ability to engage with a range of stakeholders to deliver business

outcomes, says Spencer Clarkson, chief technology officer at Verastar. "I

think what makes a good developer nowadays is that rounded understanding," he

says. "They need to be agile in working style, and also understand the concept

of doing Agile development – fail fast, develop quickly." That's something

that others recognise, too. Tech analyst Forrester says Agile delivery is

critical to successful digital transformations, yet the best enterprises go

even further. ... "Software development is now much more about gluing things

together rather than building something from scratch," he says. "There's lots

of good apps and products out there. It's how you glue them together – that's

your IP. People need to have that aptitude first and be multiskilled second."

Gartner also says organisations and their employees should be prepared to move

in multiple strategic directions at once due to the ongoing requirements for

innovation and digitisation.

Comparing Programming models: SYCL and CUDA

SYCL and CUDA serve the same purpose: to enhance performance through

processing parallelization in varied architectures. However, SYCL offers more

extensibility and code flexibility than CUDA while simplifying the coding

process. Instead of using complex syntax, SYCL enables developers to use ISO

C++ for programming. Unlike CUDA, SYCL is a pure C++ domain-specific embedded

language that doesn’t require C++ extensions, allowing for a simple CPU

implementation that relies purely on a runtime rather than a particular compiler.

SYCL is a competitive alternative to CUDA in terms of programmability. With

SYCL, there’s no need for a complex toolchain to develop an application, and

the tools ecosystem is readily available, ensuring a hassle-free development

experience. SYCL doesn’t need separate source files for the host and device.

Instead, you can find the code for the host and the device in the same C++

source file. SYCL implementations are capable of splitting up this source

file, parsing the code, and sending it to the appropriate compilation

backend.

Ban predictive policing systems in EU AI Act, says civil society

As it currently stands, the AIA lists four practices that are considered “an

unacceptable risk” and which are therefore prohibited, including systems that

distort human behaviour; systems that exploit the vulnerabilities of specific

social groups; systems that provide “scoring” of individuals; and the remote,

real-time biometric identification of people in public places. However,

critics have previously told Computer Weekly that while the proposal provides

a “broad horizontal prohibition” on these AI practices, ... In their letter,

published 1 March, the civil society groups explicitly call for predictive

policing systems to be included in this list of prohibited AI practices, which

is contained in Article 5 of the AIA. “To ensure that the prohibition is

meaningfully enforced, as well as in relation to other uses of AI systems

which do not fall within the scope of this prohibition, affected individuals

must also have clear and effective routes to challenge the use of these

systems via criminal procedure, to enable those whose liberty or right to a

fair trial is at stake to seek immediate and effective redress,” it said.

IT leadership: 3 new rules for hybrid work

The very nature of the annual review sets up a dynamic where the manager

critiques and the employee is on the defensive. The employee often feels that

the manager focuses solely on shortcomings and not on achievements. They may

wonder, “Why didn’t my manager mention this issue when it actually happened?”

or “Why won’t my manager recognize the things I’ve done right?” The manager

may be new to the position and not entirely familiar with the employee, their

position, or work history, making a constructive review more challenging. In

addition, many managers simply are not trained to communicate, coach, and lead

effectively. With higher numbers of employees working remotely, reviews have

an added layer of difficulty, especially if they aren’t done in person. Body

language can be harder to read. Without seeing the employee in action

day-to-day, the manager might not be aware of how productive they are. Zoom

fatigue can also cause many employees to remain silent rather than actively

participate.

Quote for the day:

"Leadership is about carrying on when

everyone else has given up." -- Gordon Tredgold
