Oh, Snap! Security Holes Found in Linux Packaging System

The first problem was that the snap daemon, snapd, didn’t properly validate the location of the snap-confine binary. Because of this, a hostile user could hard-link the binary to another location. This in turn meant a local attacker might be able to execute other arbitrary binaries and escalate privileges. The researchers also discovered a race condition in snapd’s snap-confine binary when it prepares a private mount namespace for a snap. A local attacker could bind-mount their own contents inside the snap’s private mount namespace and make snap-confine execute arbitrary code. From there, it is easy for the attacker to escalate privileges all the way to root.
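
To make the first flaw concrete, here is a minimal Python sketch of the kind of sanity check a privileged helper might run on its own location. It is not snapd’s code or its actual fix. The function names and the "must sit directly in a trusted directory with a link count of 1" rule are illustrative assumptions. Any check done on path names like this is itself racy, because the file can change between the check and its use, which is the same general family of bug as the second flaw. Production code would work on open file descriptors instead.

```python
import os
import stat
import tempfile


def binary_location_is_trusted(path, trusted_dir):
    """Hypothetical check: is `path` a regular, singly linked file directly inside `trusted_dir`?"""
    real = os.path.realpath(path)
    # The resolved file must sit directly under the trusted directory.
    if os.path.dirname(real) != os.path.realpath(trusted_dir):
        return False
    st = os.lstat(real)
    if not stat.S_ISREG(st.st_mode):
        return False
    # A link count above 1 means another directory entry (a hard link) refers to the
    # same inode, possibly from a directory the attacker controls.
    return st.st_nlink == 1


def demo():
    """Create a file in a temporary 'trusted' directory, then hard-link it elsewhere."""
    with tempfile.TemporaryDirectory() as trusted, tempfile.TemporaryDirectory() as other:
        original = os.path.join(trusted, "helper")
        open(original, "w").close()
        before = binary_location_is_trusted(original, trusted)
        os.link(original, os.path.join(other, "helper"))
        after = binary_location_is_trusted(original, trusted)
        return before, after


print(demo())  # (True, False) on a filesystem that supports hard links
```

The link-count test is what catches the hard-link trick: the copy an attacker creates elsewhere shares the inode, so the original no longer looks unique.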

There’s no way to exploit these holes directly from a remote machine. But if attackers can log in as an unprivileged user, they could quickly use these vulnerabilities to gain root privileges. Canonical has released a patch that fixes both security holes. It is available for the following supported Ubuntu releases: 21.10, 20.04, and 18.04. A simple system update will fix the problem.

The DAO is a major concept for 2022 and will disrupt many industries

It is not yet clear where these disruptive technologies will lead us, but we are sure that there will be much value up for grabs. At the convergence of Web3 and NFTs lie many platforms looking to leverage technology and infrastructure to make the NFT ecosystem more decentralized, structured and community-driven.

By combining community building with governance, decentralized autonomous organizations take this disruption a notch higher. The DAO is one major invention that is challenging current systems of governance. Using NFTs, DAOs are changing our perspective on how organizations and systems should be run, and they lend further credence to the idea that the optimal form of governance does not have to rely on hierarchical structures. With the principal-agent problem limiting the growth of organizations and preventing agents from feeling like part of a team, you can see why decentralized organizations that foster community inclusion are so important. Is there something you would change about your current organization if given the chance? Leadership?

Use the cloud to strengthen your supply chain

What’s interesting about this process is that it does not require C-suite executives to pull all-nighters to come up with innovative solutions. It’s 100% automated: huge amounts of data and machine learning are embedded directly within business processes, so the fix happens seconds after a supply chain problem is found. These aspects of intelligent supply chain automation are not new. For years, there has been deep thinking about how to automate supply chains more effectively, as those of you who specialize in supply chains understand all too well. But how many companies are willing to invest in the innovation, and even the risk, of leveraging these new systems? Most are not, and they are seeing the downside as markets throw them curveballs that they try to handle with traditional approaches. Meanwhile, we’re seeing companies that were in 10th place in a specific market move to second or third place by differentiating themselves with these intelligent cloud-based systems.

Open Source Code: The Next Major Wave of Cyberattacks

When it comes to using tools to test the resilience of your open source environment, static code analysis is a good first step. Still, organizations must remember that it is only the first layer of testing. Static analysis means examining the source code without executing it, before the application or program goes live, and addressing any vulnerabilities it uncovers. However, static analysis cannot detect every malicious threat that could be embedded in open source code, so additional testing in a sandbox environment should be the next step. Stringent code reviews, dynamic code analysis, and unit testing are other methods that can be leveraged. After scanning is complete, organizations must have a clear process for addressing any discovered vulnerabilities. Developers may find themselves up against a release deadline, or the software patch may require refactoring the entire program and strain timelines. A clear process helps developers make these tough choices while protecting the organization’s security, because it spells out the next steps for addressing vulnerabilities and mitigating issues.
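
As a small illustration of both the value and the limits of static analysis, the Python sketch below uses the standard-library `ast` module to flag direct calls to `eval`, `exec` and similar built-ins without running the code. The rule set is a hypothetical example, not any real scanner’s policy. The second input shows how easily an obfuscated call slips past such a rule, which is why sandboxed and dynamic testing should follow.

```python
import ast

# Built-ins that let code run strings as code; malicious packages often hide behind them.
RISKY_CALLS = {"eval", "exec", "compile", "__import__"}


def find_risky_calls(source, filename="<package>"):
    """Statically scan Python source (without running it) and report risky direct calls."""
    findings = []
    for node in ast.walk(ast.parse(source, filename=filename)):
        # Only direct calls by name, e.g. eval(...), are recognized.
        if isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
            if node.func.id in RISKY_CALLS:
                findings.append((node.lineno, node.func.id))
    return sorted(findings)


plain = "import os\nx = eval('1 + 1')\nexec('print(x)')\n"
obfuscated = "f = getattr(__builtins__, 'ev' + 'al')\nf('1 + 1')\n"

print(find_risky_calls(plain))       # [(2, 'eval'), (3, 'exec')]
print(find_risky_calls(obfuscated))  # [] even though this code also ends up calling eval
```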

A guide to document embeddings using the Distributed Bag-of-Words (DBOW) model

Beyond practice exercises, real-world NLP applications require machines to understand the context behind a text, which is usually longer than a single word. For example, suppose we want to find cricket-related tweets on Twitter. We could start by making a list of all the words related to cricket and then look for tweets that contain any word from that list. This approach works to an extent, but what if a cricket-related tweet contains none of the words on the list? Take a tweet that mentions an Indian cricketer by name without saying that he is an Indian cricketer. In daily life we use many applications and websites, such as Facebook, Twitter and Stack Overflow, that rely on this kind of approach and fail to return the right results. To cope with such difficulties, we can use document embeddings, which learn a vector representation for each whole document. In other words, the representation is learned at the level of a paragraph or document, instead of only at the level of individual words across the whole corpus.
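
The DBOW idea can be sketched in a few dozen lines of plain Python. Each document gets its own vector, which is trained with negative sampling to predict the words that occur in that document; word order and context windows are ignored. This is a toy illustration under simplifying assumptions (a tiny hypothetical corpus, hypothetical hyperparameters, and negatives drawn only from words absent from the current document), not a reference implementation. Libraries such as gensim’s `Doc2Vec` with `dm=0` implement the full model.

```python
import math
import random


def sigmoid(x):
    # Clamp to keep math.exp from overflowing on large magnitudes.
    x = max(-30.0, min(30.0, x))
    return 1.0 / (1.0 + math.exp(-x))


def train_dbow(docs, dim=8, epochs=300, lr=0.05, negatives=3, seed=0):
    """Toy PV-DBOW: each document vector learns to predict the words it contains."""
    rng = random.Random(seed)
    vocab = sorted({w for doc in docs for w in doc})
    # Document vectors start small and random; output word vectors start at zero (as in word2vec).
    doc_vecs = [[rng.uniform(-0.5, 0.5) / dim for _ in range(dim)] for _ in docs]
    word_vecs = {w: [0.0] * dim for w in vocab}

    for _ in range(epochs):
        for d, doc in enumerate(docs):
            D = doc_vecs[d]
            in_doc = set(doc)
            # Simplification: negatives come only from words absent from this document.
            candidates = [w for w in vocab if w not in in_doc]
            for word in doc:
                # One positive target (label 1.0) plus a few sampled negatives (label 0.0).
                targets = [(word, 1.0)] + [(rng.choice(candidates), 0.0) for _ in range(negatives)]
                grad_d = [0.0] * dim
                for target, label in targets:
                    W = word_vecs[target]
                    score = sigmoid(sum(a * b for a, b in zip(D, W)))
                    g = lr * (label - score)
                    for i in range(dim):
                        grad_d[i] += g * W[i]  # uses W before its own update
                        W[i] += g * D[i]
                # Apply the accumulated update to the document vector.
                for i in range(dim):
                    D[i] += grad_d[i]
    return doc_vecs


def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


docs = [
    ["kohli", "century", "cricket", "match", "wicket"],
    ["cricket", "match", "century", "wicket", "bat"],
    ["cake", "flour", "sugar", "oven", "bake"],
]
vecs = train_dbow(docs)
print(round(cosine(vecs[0], vecs[1]), 2), round(cosine(vecs[0], vecs[2]), 2))
```

Documents 0 and 1 share most of their words, so their vectors should end up pointing in similar directions, while the baking document should not. With a real corpus, the same mechanism can place a tweet that only names a cricketer near other cricket tweets, provided the name co-occurs with cricket vocabulary in the training data.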

Great Resignation or Great Redirection?

All this Great Resignation talk has many people panicking and reacting. We definitely shouldn’t ignore it, but we should seek to understand what is happening, why, and what the implications are for the future. The truly historic event is the revolution in how people conceive of work and its relationship to other life priorities. Even within that, there are distinct categories. We know service workers in leisure and hospitality were hit disproportionately hard by the pandemic. These people unexpectedly found themselves jobless, unsure how they would pay their bills and survive. Resilient and hard-working, many of them, like my Uber driver, found gigs doing delivery, rideshare or other jobs that offered greater flexibility and autonomy. These jobs also paid better than traditional service roles. Now, with their former employers calling them back, this group of workers can choose for themselves what they want. Meanwhile, when Covid sent office workers home, they were bound to realize how nice it was not to have the commute or the road-warrior travel.

The post-quantum state: a taxonomy of challenges

While all the data seems to suggest that replacing classical cryptography with post-quantum cryptography in the key exchange phase of TLS handshakes is a straightforward exercise, the problem seems to be much harder for handshake authentication (or for any protocol that aims to provide authentication, such as DNSSEC or IPsec). Most TLS handshakes achieve authentication with digital signatures that are verified using the public keys advertised in public certificates (what is called “certificate-based” authentication). Most of the post-quantum signature algorithms currently being considered for standardization in the NIST post-quantum process have signatures or public keys that are much larger than their classical counterparts, and in most cases their operations also take much longer to compute. It is unclear how this will affect TLS handshake latency and round-trip times, though we now have better insight into which sizes can be used. We still need to know how much slowdown will be acceptable for early adoption.
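
A rough byte count shows why signature and public-key sizes matter for handshake authentication. The Python sketch below is back-of-the-envelope arithmetic under stated assumptions: the sizes are the commonly quoted ones for Ed25519 and for the Dilithium2 and Falcon-512 parameter sets from the NIST process, the server is assumed to send two public keys and six signatures, and a TCP initial congestion window of 10 segments of 1,460 bytes (RFC 6928) is assumed. Everything else in the handshake is ignored.

```python
# Commonly quoted sizes in bytes: (public key, signature).
# Falcon signatures vary in length; 666 bytes is the usual figure for Falcon-512.
SIZES = {
    "Ed25519": (32, 64),
    "Dilithium2": (1312, 2420),
    "Falcon-512": (897, 666),
}

# Assumption: the server sends 2 public keys (leaf + intermediate certificate) and
# 6 signatures (2 certificate signatures, CertificateVerify, 2 SCTs, 1 OCSP staple).
PUBLIC_KEYS = 2
SIGNATURES = 6

# Assumption: TCP initial congestion window of 10 segments x 1460 bytes (RFC 6928).
INITIAL_WINDOW_BYTES = 10 * 1460


def auth_bytes(pk_size, sig_size, n_pk=PUBLIC_KEYS, n_sig=SIGNATURES):
    """Bytes spent on public keys and signatures alone."""
    return n_pk * pk_size + n_sig * sig_size


for name, (pk, sig) in SIZES.items():
    total = auth_bytes(pk, sig)
    # Exceeding the initial window can cost the client an extra round trip.
    print(f"{name:>10}: {total:6d} bytes, exceeds initial window: {total > INITIAL_WINDOW_BYTES}")
```

Under these assumptions, Ed25519 needs 448 bytes, Falcon-512 about 5.8 KB and Dilithium2 about 17.1 KB, which alone would overflow the assumed initial window. Real handshakes carry more data than this, so these figures are lower bounds.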

An overview of the blockchain development lifecycle

Databases built with blockchain technologies are notoriously difficult to hack or manipulate, which makes them well suited to storing sensitive data. Blockchain software development requires an understanding of how blockchain technology works. To learn blockchain development, developers must be familiar with interdisciplinary concepts, such as cryptography, and with popular blockchain programming languages like Solidity. A considerable amount of blockchain development focuses on information architecture, that is, how the database is to be structured and how the data is to be distributed and accessed with different levels of permissions. ... Determine whether the blockchain will include specific permissions for targeted user groups or will be a permissionless network. Afterward, determine whether the application will require a private or public blockchain network architecture. Also consider a hybrid consortium, or public permissioned, blockchain architecture. With a public permissioned blockchain, a participant can only add information with the permission of other registered participants.
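
The tamper resistance and the public permissioned model described above can be illustrated with a toy Python sketch. Each block stores the SHA-256 hash of its predecessor, so editing an older block breaks the next link, and an append is accepted only from a registered participant with approval from at least one other participant. The class name, the quorum rule and the data are hypothetical. Real blockchains add distributed consensus, digital signatures and replication on top of this basic hash chaining.

```python
import hashlib
import json


def block_hash(block):
    # Hash a canonical JSON encoding so key order does not change the digest.
    return hashlib.sha256(json.dumps(block, sort_keys=True).encode()).hexdigest()


class PermissionedLedger:
    """Toy hash-linked ledger; appends need approvals from other registered participants."""

    def __init__(self, participants, quorum=1):
        self.participants = set(participants)
        self.quorum = quorum  # hypothetical rule: approvals needed from participants other than the author
        self.chain = [{"index": 0, "prev": "0" * 64, "author": None, "data": "genesis"}]

    def append(self, author, data, approvals):
        """Add a block if the author is registered and enough other participants approve."""
        if author not in self.participants:
            return False
        valid = (set(approvals) & self.participants) - {author}
        if len(valid) < self.quorum:
            return False
        prev = self.chain[-1]
        self.chain.append({"index": prev["index"] + 1, "prev": block_hash(prev),
                           "author": author, "data": data})
        return True

    def verify(self):
        """Each block must hold its predecessor's hash; editing any block but the newest breaks a link."""
        return all(self.chain[i]["prev"] == block_hash(self.chain[i - 1])
                   for i in range(1, len(self.chain)))


ledger = PermissionedLedger({"alice", "bob", "carol"}, quorum=1)
ledger.append("alice", "shipment 42 received", approvals={"bob"})  # accepted
ledger.append("mallory", "fake entry", approvals={"alice"})        # rejected: not registered
ledger.append("bob", "shipment 43 received", approvals={"carol"})  # accepted
print(ledger.verify())  # True
ledger.chain[1]["data"] = "shipment 42 lost"  # tamper with history
print(ledger.verify())  # False
```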

How TypeScript Won Over Developers and JavaScript Frameworks

Microsoft’s emphasis on community also extends to developer tooling, another reason the Angular team cited for its decision to adopt the language. Microsoft’s own VS Code naturally has great support for TypeScript, but the TypeScript Language Server provides a common set of editor operations, such as statement completions, signature help, code formatting, and outlining. This simplifies the job for vendors of alternative IDEs, such as JetBrains with WebStorm. Ekaterina Prigara, WebStorm project manager at JetBrains, told The New Stack that “this integration works side-by-side with our own support of TypeScript – some of the features of the language support are powered by the server, whilst others, e.g. most of the refactorings and the auto import mechanism, by the IDE’s own support.” The details of the integration are quite complex. Continued Prigara, “Completion suggestions from the server are shown but they could, in some cases, be enhanced with the IDE’s suggestions. It’s the same with the error detection and quick fixes. Formatting is done by the IDE. Inferred types shown on hover, if I’m not mistaken, come from the server. ...”

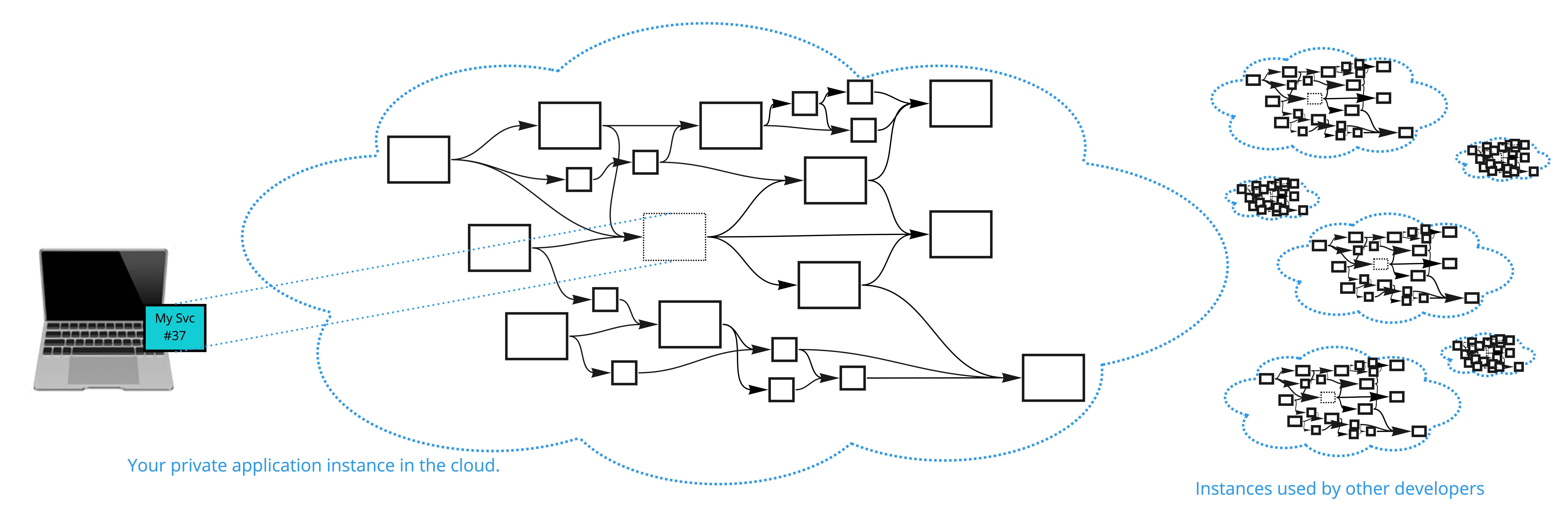

Developing and Testing Services Among a Sea of Microservices

The first option is to take all of the services that make up the entire application and put them on your laptop. This may work well for a smaller application, but if your application is large or has a large number of services, this solution won’t work very well. Imagine having to install, update, and manage 500, 1,000, or 5,000 services in the development environment on your laptop. When a change is made to one of those services, how do you get it updated? ... The second option solves some of these issues. Imagine being able to click a button and deploy a private version of the application in a cloud-based sandbox accessible only to you. This sandbox is designed to look exactly like your production environment. Ideally, it even uses the same Terraform configurations to create the infrastructure and wire it all together, but with fewer and smaller cloud instances, so it won’t cost as much to run. Then you can link the service running on your laptop to this developer-specific cloud setup and make it behave as if it were running in a production environment.
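
The step of linking the laptop to the sandbox boils down to service routing: every dependency resolves to the developer’s cloud sandbox except the service being worked on, which resolves to the laptop. Here is a minimal Python sketch, assuming a hypothetical naming scheme of `https://<service>.<sandbox domain>`. Real setups typically do this with a service mesh, DNS or a tunneling tool rather than in application code.

```python
from dataclasses import dataclass, field


@dataclass
class DevEnvironment:
    """Hypothetical per-developer sandbox with optional local overrides."""
    sandbox_domain: str
    overrides: dict = field(default_factory=dict)

    def route_to_local(self, service, local_url):
        # Send traffic for this one service to the developer's laptop.
        self.overrides[service] = local_url

    def resolve(self, service):
        # Everything not overridden resolves to the sandbox copy of that service.
        return self.overrides.get(service, f"https://{service}.{self.sandbox_domain}")


env = DevEnvironment("alice.sandbox.example.com")
env.route_to_local("checkout", "http://localhost:8080")
print(env.resolve("checkout"))   # http://localhost:8080
print(env.resolve("inventory"))  # https://inventory.alice.sandbox.example.com
```

The override list usually holds a single entry, which is what lets this approach scale to thousands of services: nothing else has to run on the laptop.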

Quote for the day:

"Courage is leaning into the doubts and fears to do what you know is right even when it doesn't feel natural or safe."

-- Lee Ellis
