Speeding Time to Value: The ‘Just in Time’ Data Analytics Stack

JIT analytics signals an end to the era of data consolidation, in which data
management was based on organizations moving data into warehouses, data lakes
and data lakehouses. By leveraging a variety of approaches, including data
materialization, query tool abstractions, and data virtualization, this new
analytics era removes the need to move data and store it in one place for
analysis. JIT represents a substantial evolution in fundamental data strategy
that not only results in better, timelier analytics, but also protects the
enterprise from numerous shortfalls. Costs (related to data pipelines, manual
code generation, etc.) are reduced, conserving resources. Moreover,
organizations considerably decrease the risk related to regulations and data
privacy by not constantly copying data from one setting to another, which
potentially exposes them to pricey noncompliance penalties. ... Best of all, the
business logic (schema, definitions, end-user understanding of data’s relation
to business objectives) is no longer locked in silos but is transported to the
data layer for greater transparency and usefulness.

Why DeFi could signal the beginning of the end of traditional Fintech

This is to be expected with current processes that require analogue or legacy
methods of ID verification. This is where the interoperability of the likes of
blockchain comes in: providing unimpeachable data to prove identity/provenance,
and in doing so both removing the need for slow processes and accelerating the
rate of transactions. Interoperability is where identities are portable across
ecosystems. For instance, in gaming, progress and identities can move across
different gaming dApps. In finance, that would be a single, immutable identity
used for everything, with less requirement to prove a customer is who they say
they are, as their interoperable identity is trusted by all providers. Lending,
payments, proof of identity, trading: all will become decentralised, and much
faster. Then there are NFTs, which transform ownership of assets with
irrefutable proof and usher in a new asset class: digital assets. For example,
we could well see the rise of tokenised invoices. Invoice beneficiaries could
sell part or all of an invoice on an NFT marketplace within seconds.

Cybercriminals Swarm Windows Utility Regsvr32 to Spread Malware

“Threat actors can use Regsvr32 for loading COM scriptlets to execute DLLs,”
they explained in a Wednesday writeup. “This method does not make changes to the
Registry as the COM object is not actually registered, but [rather] is executed.
This technique [allows] threat actors to bypass application whitelisting during
the execution phase of the attack kill chain.” ... As a class, .OCX files
contain ActiveX controls, which are code blocks that Microsoft developed to
enable applications to perform specific functions, such as displaying a
calendar. “The Uptycs Threat Research team has observed more than 500+ malware
samples using Regsvr32.exe to register [malicious] .OCX files,” researchers
warned. “During our analysis of these malware samples, we have identified that
some of the malware samples belonged to Qbot and Lokibot attempting to execute
.OCX files…97 percent of these samples belonged to malicious Microsoft Office
documents such as Excel spreadsheet files.” Most of the Microsoft Excel files
observed in the attacks carry the .XLSM or .XLSB suffixes, they added, which are
types that contain embedded macros.
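
As a rough illustration of how a defender might triage the behavior described
above, the following Python sketch flags process command lines in which
regsvr32 is asked to load an .OCX file or a remote scriptlet. The function
name, patterns, and sample command lines are hypothetical and are not taken
from the Uptycs writeup. A real detection would also need to account for
legitimate software that registers ActiveX controls.

```python
import re
from typing import List

# Hypothetical triage patterns for illustration; not taken from the Uptycs report.
REGSVR32 = re.compile(r"\bregsvr32(\.exe)?\b", re.IGNORECASE)
OCX_ARG = re.compile(r"\.ocx\b", re.IGNORECASE)
# /i:<url> hands regsvr32 a remote location, typically for a COM scriptlet.
REMOTE_SCRIPTLET = re.compile(r"/i:(https?|ftp)://", re.IGNORECASE)


def classify_command_line(cmdline: str) -> List[str]:
    """Return the reasons a command line looks suspicious (empty if none)."""
    reasons: List[str] = []
    if not REGSVR32.search(cmdline):
        return reasons  # not a regsvr32 invocation, nothing to flag
    if OCX_ARG.search(cmdline):
        reasons.append("regsvr32 loading .ocx file")
    if REMOTE_SCRIPTLET.search(cmdline):
        reasons.append("regsvr32 fetching remote scriptlet")
    return reasons
```

A check like this only narrows down which process events deserve a closer
look; it does not by itself tell malicious registrations from benign ones.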

Sardine’s algorithm helps crypto and fintech companies detect fraud

Sardine, a compliance platform for fintech companies, primarily serves neobanks,
NFT marketplaces, crypto exchanges and crypto on-ramps. Its 50+ customer roster
includes Brex, FTX, Luno and Moonpay, the company says. “The use case for all
our customers is essentially that they’re all using us to prevent fraud when
money is being loaded into a wallet,” CEO and co-founder Soups Ranjan told
TechCrunch in an interview. When customers move money to their wallet via credit
card, debit card or ACH transfer, Sardine assigns a risk score to the card or
bank account being used through an algorithm and assumes fraud liability for the
transaction. The company has long offered these two features, but it announced
today that it is unveiling instant ACH transfer capabilities, allowing customers
to bypass the traditional three- to seven-day waiting period to access their
funds. Sardine will do this by pre-funding the consumer’s crypto purchase in
their wallet and taking on the fraud, regulatory and compliance risk associated
with the waiting period.

Is DeFi signalling the great financial evolution?

DeFi presents a revolutionary new frontier, opening fresh opportunities to
improve global finance markets. From a utility perspective, its technology alone
offers improved speed and security for financial transactions, including
international ones. Moreover, this use case is a highly developed feature that
sees constant pursuit of uptake and improvement by popular cryptocurrency
projects such as Stellar (XLMUSD). Additionally, DeFi sets a fascinating new
precedent for enabling access to financial services. While bank accounts are the
expected norm within most developed societies, the World Bank estimates that up
to 1.7 billion people in the world do not have access to an account of their
own. As a result, DeFi is championed by those in favour of enabling access for
users who don’t transact via traditional bank accounts, now commonly referred to
as the ‘unbanked’. As the concept continues to evolve, the question of how DeFi
can be regulated will no doubt continue to be a pressing topic globally.

Cyprus: The Concept Of Control In DeFi Arrangements

With regard to DeFi arrangements specifically, the FATF Guidelines recognize
that a DeFi application, that is, the underlying software, is not a CASP in
accordance with FATF standards, as these do not apply to software or technology.
Despite this, it is possible for persons such as creators, owners and operators
of such applications to qualify as a CASP, if they are found to be providing or
actively facilitating CASP services. Whether they are classified as the obliged
entity for AML/CFT law purposes will depend on whether they maintain control
or sufficient influence over the arrangement. Owners or operators are often
distinguished by their relationship to the activities undertaken by the
arrangement. Control or sufficient influence may be exercised in a number of
ways, for example by setting parameters, holding an administrative key,
retaining access to the platform, collecting fees or realizing profit. It is
emphasized that a person may be found to be exercising sufficient control or
influence over a DeFi arrangement even in instances where other parties are
involved in the provision of the service, or where part of the process is
automated.

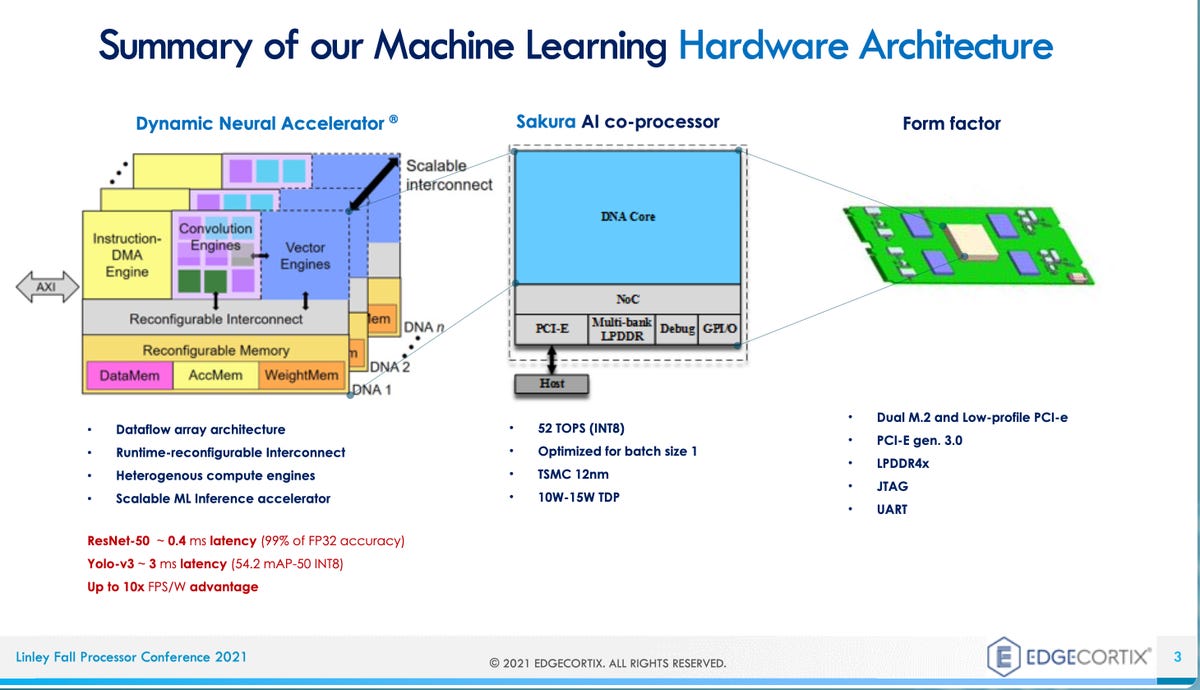

The AI edge chip market is on fire, kindled by 'staggering' VC funding

Edge AI has become a blanket term that refers mostly to everything that is not
in a data center, though it may include servers on the fringes of data centers.
It ranges from smartphones to embedded devices that suck micro-watts of power
using the TinyML framework for mobile AI from Google. The middle part of that
range, where chips consume from a few watts of power up to 75 watts, is an
especially crowded part of the market, said Demler, usually in the form of a
pluggable PCIe or M.2 card. "PCIe cards are the hot segment of the market, for
AI for industrial, for robotics, for traffic monitoring," he explained. "You've
seen companies such as Blaize, Flex Logix -- lots of these companies are going
after that segment." But really low-power is also quite active. "I'd say the
tinyML segment is just as hot. ..." Most of the devices are dedicated to the
"inference" stage of AI, where artificial intelligence makes predictions based
on new data. Inference happens after a neural network program has been trained,
meaning that its tunable parameters have been developed fully enough to reliably
form predictions and the program can be put into service.
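
To make the training/inference distinction concrete, here is a minimal Python
sketch in which the parameters are already fixed, as if training had finished,
and the program only computes predictions on new inputs. The weights, bias, and
input values are made up for illustration and do not come from any real model
or chip vendor.

```python
import math
from typing import List

# Hypothetical parameters, assumed to come from an earlier training run.
WEIGHTS = [0.8, -0.4]
BIAS = 0.1


def predict(features: List[float]) -> float:
    """Inference only: apply fixed parameters to new data; nothing is learned here."""
    z = sum(w * x for w, x in zip(WEIGHTS, features)) + BIAS
    return 1.0 / (1.0 + math.exp(-z))  # sigmoid maps the score into (0, 1)
```

Because the parameters never change at this stage, the work is a fixed sequence
of multiply-and-add operations, which is the kind of workload dedicated
inference hardware targets.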

If Scrum isn’t agile, a SaaS solution could be

There are certain situations where a software development organization simply
can’t be agile. The first is where there is a fixed deadline. If there is a
deadline, you almost certainly can’t make adjustments that may be necessary to
finish the project. Changes to what you are coding almost always mean a change
to the date. What happens if, when you do the sprint review with the customer,
the customer asks for changes? You can’t roll back a whole sprint, redo the work
in a different way, and then still ship according to a contracted date. If you
can’t adapt to changing requirements, then you aren’t being agile. The second is
when there is a fixed cost. This works very similarly to the fixed-deadline
case: if you are only getting paid a set amount to do a project, you can’t
afford to redo portions of the work based on customer feedback. You would very
likely end up losing money on the project. Bottom line: If you have a fixed
deadline or a fixed cost, you can’t strictly follow Scrum, much less the Agile
Principles.

Can an AI be properly considered an inventor?

Thaler, an advocate of recognizing these devices as inventors, clearly believes
the time has come, stating, “It’s been more of a philosophical battle,
convincing humanity that my creative neural architectures are compelling models
of cognition, creativity, sentience, and consciousness. … The recently
established fact that DABUS has created patent-worthy inventions is further
evidence that the system ‘walks and talks’ just like a conscious human brain.”
(We should bear in mind, however, that an “author” in copyright is not an
identical legal construction to an “inventor” in the domain of patents, but
they are closely related concepts. We also need to consider that the South
African patent system does not involve an examination of the substance of an
application but, unlike in a lot of countries, leaves both first consideration
and final resolution of patent validity to the courts, and so the patent grant
was in some sense automatic and not policy-driven.) Importantly, the U.S., U.K.,
and European patent offices (all of which do preliminary consideration of
patentability) rejected this same patent application on the basis of its
ineligibility.

MoleRats APT Flaunts New Trojan in Latest Cyberespionage Campaign

The campaign uses various phishing lures and includes tactics not only to avoid
being detected but also to ensure that its core malware payload only attacks
specific targets, Proofpoint researchers wrote in the report. Some of the
attacks observed by the team also delivered a secondary payload, a trojan dubbed
BrittleBush, they said. NimbleMamba, delivered as an obfuscated .NET executable
using third-party obfuscators, is an intelligence-gathering trojan that
researchers believe replaces LastConn, malware previously used by TA402.
“NimbleMamba has the traditional capabilities of an intelligence-gathering
trojan and is likely designed to be the initial access,” researchers wrote.
“Functionalities include capturing screenshots and obtaining process information
from the computer. Additionally, it can detect user interaction, such as looking
for mouse movement.” MoleRats is part of the Gaza Cybergang, an Arabic-speaking,
politically motivated collective of interrelated threat groups actively
targeting the Middle East and North Africa.

Quote for the day:

"Leadership is a process of mutual
stimulation which by the interplay of individual differences controls human
energy in the pursuit of a common goal." -- P. Pigors