France’s privacy watchdog latest to find Google Analytics breaches GDPR

The decision on this complaint has clear implications for any website based in France that’s currently using Google Analytics (or, indeed, any other tool that transfers personal data to the U.S. without adequate supplementary measures), at least in the near term. For one thing, the CNIL’s decision notes that it has issued “other” compliance orders to website operators using Google Analytics (again without naming any sites). And given the joint work by EU regulators on these 101 strategic complaints, the ramifications are likely to extend EU-wide. The CNIL also warns that its investigation, along with the parallel probes being undertaken by fellow EU regulators, extends to “other tools used by sites that result in the transfer of data of European Internet users to the United States”, adding: “Corrective measures in this respect may be adopted in the near future.”

So any U.S.-based tool that transfers personal data now faces regulatory risk. We’ve asked the CNIL which other tools it’s looking at and will update this report with any response.

Security Risks Facing Web3 Developers

One of the challenges to securing dApps in the new Web3 world is engaging security professionals in a meaningful way. A number of the cybersecurity experts I follow on Twitter have dismissed Web3 and blockchain technologies as fads at best and scams at worst. I asked Spanier what it will take to get more of these folks to engage with Web3.

“For security professionals, here’s some advice to figure out if blockchain security interests you,” he replied. “Treat your initial plunge as an exploratory journey. Look at different security issues that have manifested themselves in the past, be they with smart contracts or core blockchains. These projects are mostly open, so you can look at their GitHub issues and patches. Review vulnerability write-ups and deconstructions of previous attacks. Projects affected by a compromise will typically post detailed write-ups. This would be a good start.”

There’s a lesson for developers here too. Because so much of what’s being developed for Web3 is done in a very public way, there’s an opportunity to avoid the mistakes of others. As you develop, consider making a review of other projects’ mistakes part of your release process.

How to maximise value from IT vendor collaborations

It goes without saying that not everyone can be a maestro of everything. This applies especially to IT businesses that tackle complex tasks across several technologies and infrastructures every day. IT companies that run their strategies solo miss out on the strengths of good vendor collaborations, which bring together experts with different skills across a wide range of applications. In other words, an IT partner with the appropriate talent and skillset is likely to be better placed to select the right solution for the client than a primary vendor that does not specialise in the client’s specific needs.

In order to develop and nurture productive partnerships, IT businesses must know when to partner, who to partner with, and how to add value to a partnership. A partnership is a value-added relationship that develops over time on a foundation of trust. It takes equal endeavour from both sides to evolve into an IT partnership that enables both parties to share their ideologies, work cultures, expertise, and strategies.

Embracing Agile Values as a Tech and People Lead

A key agile principle for me is “Embrace Change”, the subtitle of Kent Beck’s Extreme Programming Explained. Change is constant, both in the world and at work. Accepting this fact makes it easier to let go of a decision that was made under different circumstances and find a new solution. Change is also easier when there is already momentum from another change, so I like to understand where the momentum is and then facilitate its flow.

We had a large organizational change at the beginning of 2020. Some teams were newly created, and everyone at MOIA was allowed to self-select into one of around 15 teams. That was very exciting. Some team formations went really well; others didn’t. Two frontend developers had self-selected into a team that turned out to have less frontend work than expected. They tried to make it work by taking on more responsibility in other areas, thus supporting their team, but after a year they were frustrated and felt stuck. Recognizing the right moment when they needed outside support to change their team assignment was very important.

AI Biometric Authentication for Enterprise Security

Biometric authentication technology has been an important industry trend for years, especially in 2021, thanks to the latest AI innovations on the market. According to IBM, 20% of breaches are caused by compromised credentials. Worse, it takes an average of 287 days to identify and respond to a data breach. AI-based security is increasing in usage and will be necessary to remain competitive in any industry. IBM reports that, as of 2021, 25% of businesses have fully deployed AI-based security, while 40% have partially deployed it. The remaining 35% have not begun this process, and if your business falls into this category, you may be placing your clients at greater risk of data breaches. In IBM’s 2021 figures, breaches at organizations with fully deployed AI-based security cost $3.81 million less on average. Being able to use artificial intelligence to identify and automatically respond to data breaches is incredibly important for protecting the data and privacy of a company and its customers. AI biometric authentication provides yet another safeguard against data breaches that is essential for businesses of any scale.

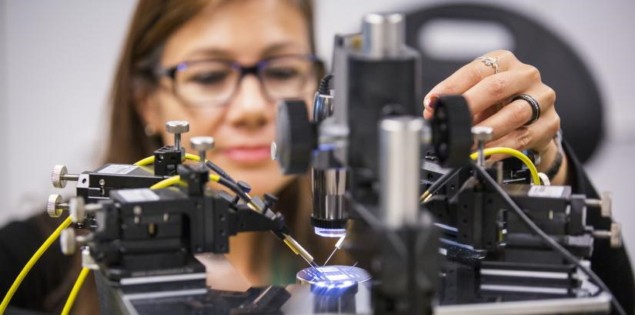

Graphene biosensor will drive new innovations in brain-controlled robotics

Recently, researchers have developed EEG sensors made from graphene, which offers excellent conductivity and biocompatibility. Graphene-based biosensors, however, often have low durability, corroding upon contact with sweat, and exhibit high skin-contact impedance that hampers the detection of signals from the brain. A novel graphene-based biosensor developed at the University of Technology Sydney aims to overcome these limitations, detecting EEG signals with high sensitivity and reliability, even in highly saline environments. The sensor, described in the Journal of Neural Engineering, is made from epitaxial graphene (EG) grown on a silicon carbide (SiC)-on-silicon substrate. This structure unites graphene’s favourable properties with the physical robustness and chemical inertness of SiC.

“We’ve been able to combine the best of graphene, which is very biocompatible, very conductive, with the best of silicon technology, which makes our biosensor very resilient and robust to use,” says senior author Francesca Iacopi in a press statement.

Is artificial intelligence better than humans at making money in financial markets?

Our immediate observation was that most of the experiments ran multiple versions (in extreme cases, up to hundreds) of their investment model in parallel. In almost all cases, the authors presented their highest-performing model as the primary product of their experiment, meaning the best result was cherry-picked and all the sub-optimal results were ignored. This approach would not work in real-world investment management, where any given strategy can be executed only once, and its result is an unambiguous profit or loss: there is no undoing of results. ...

Despite humans’ imperfections, empirical evidence strongly suggests they are currently ahead of AI. This may be partly because of the efficient mental shortcuts humans take when we have to make rapid decisions under uncertainty. In the future, this may change, but we still need evidence before switching to AI. And in the immediate future, we believe that, instead of pitting humans against AI, we should combine the two. This would mean embedding AI in decision-support and analytical tools, but leaving the ultimate investment decision to a human team.
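The cherry-picking problem described above is a form of selection bias, and a small simulation makes it concrete. This is a minimal sketch, not taken from the study: it assumes 200 hypothetical model variants with no real edge, each earning normally distributed daily returns with mean zero, and for simplicity it sums daily returns instead of compounding them. The function name `simulate_strategies` and all parameters are illustrative.

```python
import random
import statistics


def simulate_strategies(n_strategies: int, n_days: int, seed: int = 0) -> list[float]:
    """Return the total return of each of n_strategies zero-edge strategies.

    Every daily return is drawn from a normal distribution with mean 0 and
    standard deviation 1%, so no strategy has any real skill. Daily returns
    are summed rather than compounded to keep the illustration simple.
    """
    rng = random.Random(seed)  # fixed seed so the run is reproducible
    totals = []
    for _ in range(n_strategies):
        daily_returns = [rng.gauss(0.0, 0.01) for _ in range(n_days)]
        totals.append(sum(daily_returns))
    return totals


if __name__ == "__main__":
    # 200 hypothetical model variants, each "backtested" over about one trading year.
    results = simulate_strategies(n_strategies=200, n_days=250)
    # Cherry-picking: report only the best variant...
    print(f"best variant:    {max(results):+.1%}")
    # ...even though the typical variant earns roughly nothing.
    print(f"average variant: {statistics.mean(results):+.1%}")
    print(f"worst variant:   {min(results):+.1%}")
```

Because the best variant is chosen after the fact, its number says little about how any single strategy, picked in advance and executed once, would perform.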

Future for Careers in Automation Looking Bright

Trivedi points out there are many places where people can begin their journey, starting in core IT or IT engineering before moving into automation engineering or SRE (site reliability engineering). “Another good path is to start off in the technical support organization and learn more about the technical side of the product,” he says. “Then, you can take on small projects that help automate support issues, and you can use this experience to move into SRE. Many people also start off in a systems administration role before moving into an engineering role.”

Nirmal said entry-level professionals with an understanding of and passion for automation technologies have “endless opportunities” to embark on a career path that provides tremendous value to a company’s digital transformation and future growth. A key change in how organizations approach automation is the expanded use of AI and machine learning. This means IT workers need to understand how AI advances automation and enables more informed decisions that improve outcomes.

Pay attention to your attention

An overly intense focus on a goal can lead to what cognitive psychologists call goal neglect. That may seem counterintuitive to the average goal-oriented MBA or entrepreneur, but take, for example, the dynamic at work in micromanagement. Often, when leaders micromanage employees, an intense focus on task performance distracts those leaders from the larger goals of the company. They obsess over the trees and neglect the forest, and drive employees crazy while they’re at it.

Where you direct your focus is a function of the brain’s attention system. This system has three subsystems, which Amishi Jha, a professor and the director of contemplative neuroscience for the Mindfulness Research and Practice Initiative at the University of Miami, describes as the flashlight (or orienting system), which enables you to selectively direct and concentrate your attention; the floodlight (or alerting system), which enables you to take in the larger picture; and the juggler (or executive function), which enables you to align your actions to your aims. “What happens with goal neglect is that the flashlight is pointed very intently, but the floodlight is not quite working,” she told me in a recent Zoom interview.

Governance: Your Data Mesh Self-Service Depends on It

Federated governance is a balancing act. While the producer of a data product should have full autonomy to build, populate and publish it in any way they see fit, they must also ensure that it is in a form that is easy and reasonable for consumers to access and use. There are many parallels between the microservices domain and the data mesh domain: both empower users to select the tools and technologies best suited to their use cases while resisting technological sprawl, confusing implementations and difficulty in usage. For example, a microservice platform may restrict the languages developers can use to a specific subset. The data mesh equivalent would be to restrict the formats of data products so that only one or two mechanisms are the usable standards. In both cases, the goal isn’t to make life more difficult for the creators, but rather to limit technological sprawl and implementation complexity, particularly if existing technologies and standards are more than sufficient to meet the product needs.
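To make the format restriction concrete, here is a minimal sketch of a platform-side check. It assumes a hypothetical policy in which Parquet and Avro are the two agreed formats for published data products; `DataProduct`, `check_product`, and `ALLOWED_FORMATS` are illustrative names, not part of any data mesh tool. Producers remain free to build and populate their products however they like, and only the published format is validated.

```python
from dataclasses import dataclass

# Hypothetical platform policy: the "one or two mechanisms" agreed on as
# usable standards for published data products.
ALLOWED_FORMATS = frozenset({"parquet", "avro"})


@dataclass(frozen=True)
class DataProduct:
    """Minimal, hypothetical description of a published data product."""
    name: str
    output_format: str


def check_product(product: DataProduct) -> list[str]:
    """Return policy violations for a product; an empty list means it conforms.

    Only the published format is checked. How the producer builds and
    populates the product is left entirely to them.
    """
    fmt = product.output_format.strip().lower()
    if fmt in ALLOWED_FORMATS:
        return []
    return [
        f"{product.name}: format '{product.output_format}' is not an approved "
        f"standard (allowed: {', '.join(sorted(ALLOWED_FORMATS))})"
    ]


if __name__ == "__main__":
    products = [
        DataProduct("orders", "Parquet"),
        DataProduct("clickstream", "xlsx"),
    ]
    for product in products:
        violations = check_product(product)
        print(product.name, "OK" if not violations else violations)
```

Rejecting a non-standard format, rather than silently converting it, keeps the policy easy to reason about. Whether a platform should also offer conversion tooling is a separate design choice.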

Quote for the day:

"The actions of a responsible executive are contagious." -- Joe D. Batton
