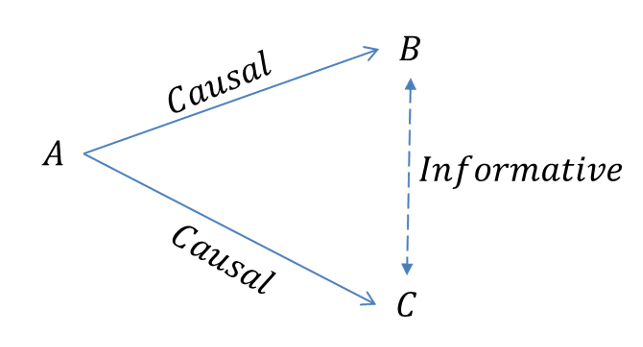

Using Complex Networks to improve Machine Learning methods

Let’s start by defining what a complex network is: a collection of entities called nodes, connected to one another by edges that represent some kind of relationship. If you’re thinking “this is a graph!”, you are correct: most complex networks can be modeled as graphs. However, complex networks usually scale up to thousands or millions of nodes and edges, which can make them hard to analyze with standard graph algorithms. There is a lot of synergy between complex networks and data science, because network science gives us tools to understand how a network is built and what behavior we can expect from the system as a whole. So if you can model your data as a complex network, you gain a new set of tools to apply to it. In fact, many machine learning algorithms can be applied to complex networks, and other algorithms can leverage network information for prediction. Even though this intersection is relatively new, we can already experiment with it.
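As a minimal sketch of how network information can feed an ordinary machine learning model, the snippet below turns an edge list into per-node features (degree and local clustering coefficient) that could be appended to a feature table. The function names are hypothetical and chosen for illustration; in practice a library such as NetworkX provides these measures ready-made.

```python
from collections import defaultdict
from itertools import combinations


def build_adjacency(edges):
    """Build an undirected adjacency map (node -> set of neighbors) from an edge list."""
    adj = defaultdict(set)
    for u, v in edges:
        if u == v:
            continue  # ignore self-loops
        adj[u].add(v)
        adj[v].add(u)
    return adj


def local_clustering(adj, node):
    """Fraction of a node's neighbor pairs that are themselves connected."""
    neighbors = adj[node]
    k = len(neighbors)
    if k < 2:
        return 0.0  # clustering is undefined for fewer than two neighbors; use 0
    links = sum(1 for a, b in combinations(neighbors, 2) if b in adj[a])
    return 2.0 * links / (k * (k - 1))


def node_features(edges):
    """Return {node: [degree, clustering coefficient]} for use as ML input features."""
    adj = build_adjacency(edges)
    return {n: [len(adj[n]), local_clustering(adj, n)] for n in sorted(adj)}


if __name__ == "__main__":
    # A triangle a-b-c with an extra node d hanging off c.
    edges = [("a", "b"), ("b", "c"), ("a", "c"), ("c", "d")]
    # 'c' gets degree 3 and clustering 1/3; 'd' gets degree 1 and clustering 0.
    print(node_features(edges))
```

Each row of the resulting dictionary can be joined to a node's other attributes, letting a standard classifier or regressor use structural information it could not see in a flat table.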

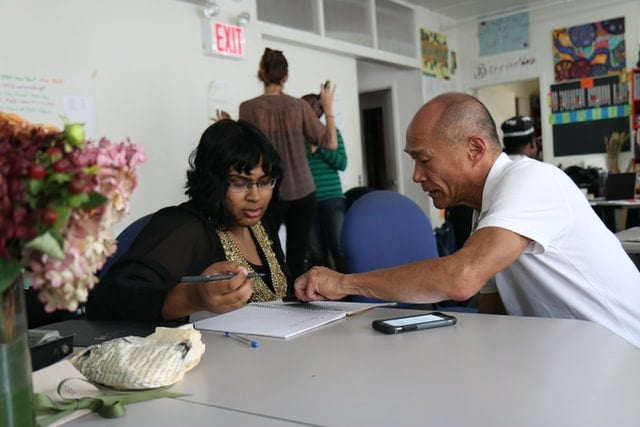

How to Find a Mentor and Get Started in Open Source

What separates open source from its proprietary counterpart is the open source community, made up of a mix of volunteers, super-fans and über-users of a product or suite of products. So while it’s understandably overwhelming to decide where to start, you have the unique benefit of built-in communities to support you. It’s good to start with an idea of what you want to get out of your contribution: a job, a mentor, or experience in a methodology, service, area of interest or programming language. Use the CNCF project landscape to search by interest (monitoring, securing, or deploying, for example), by organization or by skill set. Next, consider whether you want to be part of one of the biggest, horizontal communities or whether you’d feel more comfortable in a smaller niche. Then decide what you want to put in to achieve that goal. For Mohan, contributing to open source projects gives her experience with a wider range of technologies than her job offers, including Kubernetes and chaos engineering.

Securing a New World: Navigating Security in the Hybrid Work Era

Security doesn’t get any easier with some workers returning to the office, others staying home and quite a few doing a bit of both. That’s because the office, once the company’s security standard, is often full of devices that have been sitting idle since early last year. Security patches are issued all the time and should be installed as soon as they’re published. But a computer that has been turned off for a year, unable to download patches, is a vulnerable device, and there may be dozens or even hundreds of queued patches needed to bring it up to date. Not surprisingly, experts have offered a host of recommendations to help security teams in their work. One of their top suggestions is educating employees on the threats that people and companies face. Survey findings in Proofpoint’s State of the Phish report emphasize the need for a people-centric approach to cybersecurity protections, with awareness training that accounts for changing conditions like those constantly experienced throughout the pandemic.

Now’s the time for more industries to adopt a culture of operational resilience

When you think about resiliency and doing work in operational models, it’s a verb-based system, right? How are you going to do it? How are you going to serve? How are you going to manage? How are you going to change, modify, and adjust for immediate recovery? All of those verbs are what make resiliency happen. What differentiates one business sector from another isn’t those verbs; those are immutable. It’s the nouns that change from sector to sector. So the same verbs, the same perspective we applied within financial services, apply equally well to telecommunications or power. ... We’re seeing resiliency among the top five concerns for board-level folks. They need a solution that can scale up and down. You cannot take a science fair project and impact an industry, nor provide value as quickly as these firms are looking for. The idea is to be able to try it out and experiment. Once they figure out exactly how to calibrate the solution for their culture and level of complexity, they can rinse, repeat, and replicate to scale it out.

AWS's new quantum computing center aims to build a large-scale superconducting quantum computer

The launch of the AWS Center for Quantum Computing sees Amazon reiterating its ambition to take a leading role in quantum computing, which is expected to one day unleash unprecedented amounts of compute power. Experts predict that quantum computers, once built at a large enough scale, will be able to solve problems that are intractable for classical computers, unlocking huge scientific and business opportunities in fields like materials science, transportation and manufacturing. There are several approaches to building quantum hardware, each relying on different methods to control and manipulate the building blocks of quantum computers, called qubits. AWS has chosen to focus its efforts on superconducting qubits, the same approach used by rival quantum teams at IBM and Google, among others. AWS reckons that superconducting processors have an edge over alternative approaches: "Superconducting qubits have several advantages, one of them being that they can leverage microfabrication techniques derived from the semiconductor industry," Nadia Carlsten tells ZDNet.

The causes of technical debt, and how to mitigate it

There is no single silver bullet that will fix technical debt. Instead, it needs to be addressed in a multi-faceted way. First, there needs to be a better cultural understanding across the entire business of precisely what technical debt is. Importantly, stakeholders, including product owners, must also understand how their actions and decisions may be contributing to it. Going back to the credit card analogy, it helps if stakeholders bear in mind that they could be paying 22% or higher annual interest. Seen that way, ‘spending’ beyond the team’s limits and living with minimum payments becomes far less tempting. To pay off existing architectural and other types of technical debt, teams should weigh their current minimum payments, and the impact of those on overall velocity and team morale, against the staggering expense of re-architecting part or all of a solution. Moving from a monolith to microservices is a good example. As mentioned, however, there is no one-size-fits-all solution; long-term maintenance and ‘expenses’ need to be considered as well.
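To make the credit card analogy concrete, here is a small simulation of a balance at 22% annual interest under different monthly payments. The figures are hypothetical, and monthly compounding with interest applied before each payment is an assumption of the sketch, not something the analogy specifies. With a 10,000 balance, monthly interest is about 183.33, so a payment of 183 never clears the debt at all.

```python
from typing import Optional


def months_to_pay_off(balance: float, annual_rate: float,
                      monthly_payment: float, max_months: int = 1200) -> Optional[int]:
    """Simulate a revolving balance with monthly compounding (an assumption).

    Returns the number of months until the balance reaches zero,
    or None if it is still outstanding after max_months.
    """
    monthly_rate = annual_rate / 12
    for month in range(1, max_months + 1):
        balance += balance * monthly_rate  # interest accrues first
        balance -= monthly_payment         # then the payment is applied
        if balance <= 0:
            return month
    return None  # payment never outpaced interest within the horizon


if __name__ == "__main__":
    # Hypothetical "debt" of 10,000 at 22% annual interest.
    for payment in (183, 250, 1000):
        print(payment, months_to_pay_off(10_000, 0.22, payment))
```

The point carries over to technical debt: payments that barely cover the "interest" (the ongoing drag on velocity) stretch repayment out for years, while a larger up-front investment clears it far sooner.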

Why aren’t optical disks the top choice for archive storage?

Optical media is also designed with full backwards compatibility, meaning future BD-R and ODA drives will be able to read disks written by today’s drives. For example, you can read a CD-R disk written in 1991 in a current BD-R drive. In contrast, LTO-8 tape drives can read only one generation back (LTO-7), so they cannot read LTO-5 or LTO-6 tapes. BD-R media are advertised with a lifetime of 50 years and Sony advertises 100 years, both of which are longer than tape (30 years) and magnetic hard drives (five years). If you wanted a 50-year archive on LTO, you would be forced to migrate data at least once to avoid bit rot, but not every 10 years, as some optical marketing material suggests. Many people migrate more often anyway so they can retire older tape drives and achieve greater storage density. There is also no longer any requirement to re-tension tapes periodically. There is some debate about the bit error rate of optical versus tape, but that is a complex issue beyond the scope of this article.
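The "at least once" figure follows from simple arithmetic on the advertised lifetimes quoted above: a 50-year archive on media rated for 30 years needs two generations of media, hence one migration. A minimal sketch, assuming the advertised lifetimes hold exactly (real planning would add a safety margin); the function name is hypothetical.

```python
def migrations_needed(archive_years: int, media_life_years: int) -> int:
    """Minimum number of media migrations to retain data for archive_years,
    assuming each copy is trusted for exactly media_life_years."""
    if archive_years <= 0 or media_life_years <= 0:
        raise ValueError("years must be positive")
    # Generations of media needed = ceil(archive / life), using integer math.
    generations = -(-archive_years // media_life_years)
    # The first generation is the original write; every later one is a migration.
    return generations - 1


if __name__ == "__main__":
    # Advertised lifetimes from the text, for a 50-year archive.
    for name, life in [("BD-R", 50), ("Sony", 100), ("tape", 30), ("hard drive", 5)]:
        print(name, migrations_needed(50, life))
```

Under these assumptions tape needs one migration and hard drives nine, while optical media rated for 50 years or more need none.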

How to develop a high-impact team

Innovation is increasingly a team sport, requiring diverse perspectives and collective intelligence. These innovation-focused teams tend to be ephemeral: they form, collaborate, and disband quickly. Team members need to be able to step up and step back with equal ease. To participate in this fast, fluid model of leadership, less assertive employees (and those uninterested in careers in management) will likely need help stepping up. To get these reluctant leaders to step up and then step back, provide a path of retreat. Show them that being a designated leader can be a temporary assignment, lasting for the duration of a project or even for just a single meeting. Some team members will need encouragement and support to become “step-up” leaders, but others will do so with ease, and it can take work to then get those natural leaders to step back and support others. You can help them develop a more fluid leadership style by modeling healthy followership practices. Let them see you collaborating with a peer organization or contributing to a project led by someone below you in the management hierarchy.

Why automation progress stalls: 3 hidden culture challenges

“A general challenge with putting automation in place is that IT culture often focuses on heroic problem-solving rather than more mundane processes that prevent problems from happening in the first place,” says Red Hat technology evangelist Gordon Haff. “Automation has long been part of the picture – think system admins writing Bash scripts – but it’s also been reactive rather than proactive.” If your organization has mostly treated automation as a reactive problem-solver in the past, people may be less inclined to instinctively grasp its greater value. That’s where leaders have work to do in communicating their big-picture plan and the role that automation, and everyone on the team, plays in it. This mindset must also shift over time with experience and results: automation should be as much (or more) about improvement and optimization as it is about dousing production fires or cutting costs. Ideally, automation should be boring, in the best possible sense of the word. “Modern automation practices, such as we often see in SRE roles, make automating systems and workflows part of the daily routine,” Haff says.

Regulation fatigue: A challenge to shift processes left

President Biden’s recent executive order asks government vendors to attest “to the extent practicable, to the integrity and provenance of open source software used within any portion of a product.” The order, and the potential actions of legislators to follow, could lead to burdensome regulations that interfere with shift-left practices and ultimately slow down the pace of software development. The challenge with the directive is that nearly 60 percent of software developers have little to no secure coding training. Developers are traditionally focused on pushing out innovative, stable products, not triaging security alerts. They rely on open-source components, ready-made pieces of code that let them keep up with competitive release time frames, often without thinking about those components’ possible security risks, and they tend to leave it to their security teams to identify mistakes at the end of the development process. This reliance on open-source components often clashes with the cautious attitude of security teams.
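Attesting to "integrity" can take many forms. One common shift-left building block is pinning the cryptographic hash of each third-party component and failing the build when a downloaded artifact does not match. The sketch below assumes a hypothetical lockfile that maps component names to SHA-256 digests; it is an illustration only, not what the executive order prescribes, and it says nothing about provenance (who built the component and how).

```python
import hashlib
import hmac
from typing import Dict, List


def sha256_hex(data: bytes) -> str:
    """Return the hex-encoded SHA-256 digest of data."""
    return hashlib.sha256(data).hexdigest()


def verify_components(artifacts: Dict[str, bytes], pinned: Dict[str, str]) -> List[str]:
    """Return names of components that have no pinned hash or whose hash doesn't match.

    artifacts: component name -> downloaded bytes
    pinned:    component name -> expected SHA-256 hex digest (hypothetical lockfile)
    """
    failures = []
    for name, data in artifacts.items():
        expected = pinned.get(name)
        # compare_digest avoids leaking match position through timing.
        if expected is None or not hmac.compare_digest(sha256_hex(data), expected.lower()):
            failures.append(name)
    return failures


if __name__ == "__main__":
    pinned = {"libfoo": sha256_hex(b"release 1.0")}
    print(verify_components({"libfoo": b"release 1.0"}, pinned))  # passes
    print(verify_components({"libfoo": b"tampered"}, pinned))     # flagged
```

Running a check like this in CI moves part of the security team's end-of-cycle review into the developer's normal build, which is the spirit of shifting left.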

Quote for the day:

"Leaders, be mindful that there is a tendency to become arrogant. Such hubris blinds even the best intentions. Lead with humility." -- S Max Brown
