10 Steps to Simplify Your DevSecOps

Automation is key when balancing security integrations with speed and scale.

DevOps adoption already focuses on automation, and the same holds true for
DevSecOps. Automating security tools and processes helps ensure that teams
follow DevSecOps best practices, because automation applies those tools and
processes in a consistent, repeatable and reliable manner. It’s important to
identify which security activities and processes can be completely automated
and which require some manual intervention. For example, running a SAST tool in
a pipeline can be automated entirely, whereas threat modeling and penetration
testing require manual effort. The same distinction applies to processes: some
can run unattended, while others need a human step. A successful automation
strategy also depends on the tools and technology used. One important
consideration is whether a tool offers enough interfaces to integrate with
other subsystems. For example, to let developers run scans from their IDE, look
for a SAST tool that supports common IDE software. Similarly, to integrate a
tool into a pipeline, check whether it offers APIs, webhooks or CLI interfaces
that can be used to trigger scans and retrieve reports.
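As an illustration of that kind of pipeline integration, here is a minimal sketch of a build gate. It reads a scan report in SARIF (the OASIS Static Analysis Results Interchange Format, which many SAST tools can emit) and fails the pipeline when it finds results at or above a chosen level. The report is assumed to follow a trimmed SARIF 2.1.0 shape. The function names `count_findings` and `gate` and the `fail_on` threshold are hypothetical, not part of any particular tool.

```python
# SARIF result levels, ordered from least to most severe.
LEVEL_RANK = {"none": 0, "note": 1, "warning": 2, "error": 3}


def count_findings(sarif: dict, fail_on: str = "error") -> int:
    """Count results whose level is at or above `fail_on`.

    A missing `level` is treated as "warning". This is a simplification
    that ignores per-rule default configurations.
    """
    threshold = LEVEL_RANK[fail_on]
    count = 0
    for run in sarif.get("runs", []):
        for result in run.get("results", []):
            level = result.get("level", "warning")
            if LEVEL_RANK.get(level, 0) >= threshold:
                count += 1
    return count


def gate(sarif: dict, fail_on: str = "error") -> int:
    """Return a process exit code: 0 lets the pipeline continue, 1 fails it."""
    findings = count_findings(sarif, fail_on)
    print(f"{findings} finding(s) at level '{fail_on}' or above")
    return 1 if findings else 0


# Example report: one error, one warning, one result with no explicit level.
report = {"runs": [{"results": [{"level": "error"}, {"level": "warning"}, {}]}]}
exit_code = gate(report)
```

In a CI job, the scanner's CLI would first write its report to a file. A wrapper script would then load that file with `json.load` and pass the value returned by `gate` to `sys.exit`, so a non-zero code stops the pipeline.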

Next-Generation Layer-One Blockchain Protocols Remove the Financial Barriers to DeFi & NFTs

The rapidly expanding world of DeFi is single-handedly reshaping the global
financial infrastructure as all manner of stocks, securities and transferable
assets are slowly but surely being tokenized and stored in digital wallets. New
protocols appear daily that allow anyone with an internet connection or a
smartphone to access services that work like digital savings accounts but offer
much more attractive yields than those found in traditional banking.
Unfortunately, because most of the top DeFi protocols currently run on the
Ethereum blockchain, the high cost of transacting on that network has priced
out ordinary people in countries where even a $5 transaction fee is a
significant sum, enough to feed a family for a week. This is where competing
new blockchain platforms have their biggest opportunity for growth and
adoption, thanks to cross-chain bridges, a growing number of opportunities to
earn yield on new DeFi protocols and significantly lower transaction costs.

The Dance Between Compute & Network In The Datacenter

In an ideal world, there is a balance between compute, network, and storage
that keeps the CPUs fed with data so they do not waste much of their
processing capacity spinning through empty clock cycles. System architects try
to get as close to that ideal as they can, but the ideal shifts depending on the
nature of the compute, the workload itself, and the interconnects across
compute elements, which are increasingly hybrid in nature. We can, of course,
learn some generalities from the market at large, which show what people
actually do as opposed to what they might do in a more ideal world than the one
we all inhabit. We tried to do this after the box counters at IDC released
their Ethernet switch and router statistics and their server statistics for
the second quarter. We covered the server report last week, noting the rise of
the single-socket server, and now we turn to the Ethernet market, drill down
into the datacenter portion of it that we care about greatly, and draw some
interesting correlations between compute and network.

ZippyDB: The Architecture of Facebook’s Strongly Consistent Key-Value Store


A ZippyDB deployment (called a "tier") consists of compute and storage
resources spread across several regions worldwide. Each deployment hosts
multiple use cases in a multi-tenant fashion. ZippyDB splits the data belonging
to a use case into shards. Depending on the configuration, it replicates each
shard across multiple regions for fault tolerance, using either Paxos or async
replication. A subset of each shard's replicas forms a quorum group, where data
is synchronously replicated to provide high durability and availability in case
of failures. The remaining replicas, if any, are configured as followers that
use asynchronous replication. Followers let applications have many in-region
replicas to support low-latency reads with relaxed consistency, while keeping
the quorum size small for lower write latency. This flexibility in replica role
configuration within a shard lets applications balance durability, write
performance, and read performance according to their needs. ZippyDB provides
configurable consistency and durability levels to applications, specified as
options in the read and write APIs. For writes, ZippyDB persists the data on a
majority of replicas by default.
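To make the replica roles concrete, here is a toy, single-process model of one shard. A write is acknowledged only if a majority of the quorum group is available to persist it, and followers catch up later, standing in for asynchronous replication. This is not ZippyDB's implementation. Paxos itself is not modeled, and the names `Replica`, `Shard`, `write` and `replicate_to_followers` are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Replica:
    name: str
    up: bool = True
    data: dict = field(default_factory=dict)


@dataclass
class Shard:
    quorum: list      # replicas that receive writes synchronously
    followers: list   # replicas updated asynchronously, used for reads
    pending: list = field(default_factory=list)  # writes not yet on followers

    def write(self, key, value) -> bool:
        """Acknowledge the write only if a majority of the quorum group stores it."""
        live = [r for r in self.quorum if r.up]
        if len(live) < len(self.quorum) // 2 + 1:
            return False  # no majority: reject rather than partially write
        for replica in live:
            replica.data[key] = value
        self.pending.append((key, value))
        return True

    def replicate_to_followers(self) -> None:
        """Simulate async replication: followers receive writes some time later."""
        for key, value in self.pending:
            for follower in self.followers:
                if follower.up:
                    follower.data[key] = value
        self.pending.clear()


quorum = [Replica("r1"), Replica("r2"), Replica("r3")]
followers = [Replica("f1")]
shard = Shard(quorum, followers)
shard.write("k", 1)  # acknowledged by the quorum; f1 is still stale here
shard.replicate_to_followers()
```

In this model, writes wait only on the quorum majority, while followers may briefly lag behind. This is the trade-off the paragraph describes: a small quorum keeps write latency low, and in-region followers serve relaxed-consistency reads.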

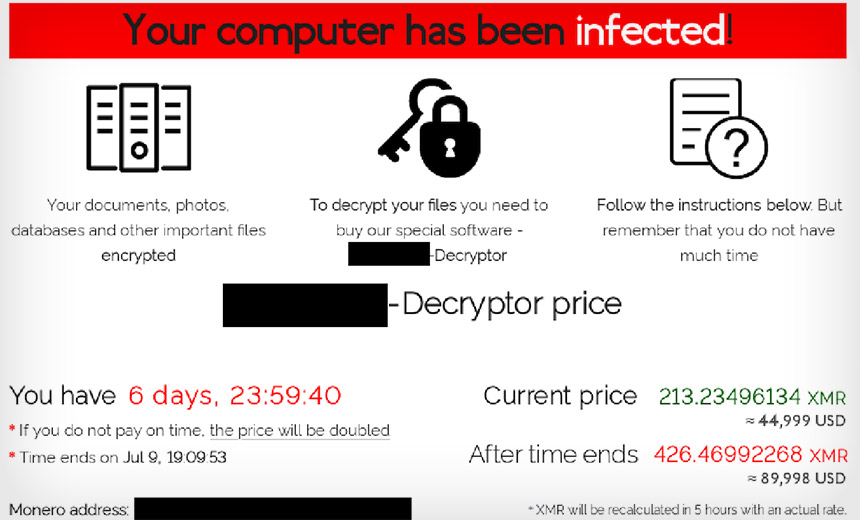

CISA, FBI: State-Backed APTs May Be Exploiting Critical Zoho Bug

The FBI, CISA and the U.S. Coast Guard Cyber Command (CGCYBER) warned today that
state-backed advanced persistent threat (APT) actors are likely among those
who’ve been actively exploiting a newly identified bug in a Zoho single sign-on
and password management tool since early last month. At issue is a critical
authentication bypass vulnerability in the Zoho ManageEngine ADSelfService Plus
platform that can lead to remote code execution (RCE), opening the corporate
doors to attackers who can then run amok with free rein across users’ Active
Directory (AD) and cloud accounts. Zoho ManageEngine ADSelfService Plus is a
self-service password management and single sign-on (SSO) platform for AD and
cloud apps. This means that any attacker able to take control of the platform
would have multiple pivot points into both mission-critical apps (and their
sensitive data) and other parts of the corporate network via AD. In other
words, it is a powerful, highly privileged application that can act as a
convenient point of entry to areas deep inside an enterprise’s footprint, for
users and attackers alike.

Algorithmic Thinking for Data Science

Algorithmic thinking is the practice of generalizing the definition and
implementation of an algorithm. In other words, if we have a standard way of
approaching a problem, say a sorting problem, then when the problem statement
changes we do not have to rework the approach completely. There is always a
starting point from which to attack the new problem, and that is what
algorithmic thinking provides. ... Why is calculating time and space
complexity important now more than ever? It has to do with something we
discussed earlier: the amount of data being processed today. To explain this
better, let us walk through a few examples that show the importance of large
amounts of data in algorithm building. The algorithms we casually create for
problem-solving in a classroom are very different from what industry requires
when the amount of data being processed is more than a million times what we
deal with in test scenarios. Differences in time complexity only become
apparent when the input size is significantly larger.
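A small experiment illustrates the point about input size. The sketch below counts the work done by two ways of detecting duplicates in a list. The first compares every pair of items, which is quadratic. The second remembers seen values in a hash set, which is linear on average. The function names are illustrative only.

```python
def has_duplicate_pairwise(items):
    """O(n^2): compare every pair; returns (found, comparisons made)."""
    comparisons = 0
    n = len(items)
    for i in range(n):
        for j in range(i + 1, n):
            comparisons += 1
            if items[i] == items[j]:
                return True, comparisons
    return False, comparisons


def has_duplicate_hashed(items):
    """O(n) on average: one set lookup per item; returns (found, lookups made)."""
    seen = set()
    lookups = 0
    for item in items:
        lookups += 1
        if item in seen:
            return True, lookups
        seen.add(item)
    return False, lookups


for n in (10, 100, 1_000):
    data = list(range(n))  # no duplicates: the worst case for both approaches
    _, quadratic = has_duplicate_pairwise(data)
    _, linear = has_duplicate_hashed(data)
    print(f"n={n:>5}: pairwise={quadratic:>9,} hashed={linear:>5,}")
```

At n = 10 the two approaches differ by 45 comparisons versus 10 lookups. At n = 1,000 the gap grows to 499,500 versus 1,000, and it keeps widening quadratically as the input grows. This is why complexity matters far more at industrial data sizes than in classroom tests.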

Forget Microservices: A NIC-CPU Co-Design For The Nanoservices Era

Large applications hosted at the hyperscalers and cloud builders (search
engines, recommendation engines, and online transaction processing
applications are but three good examples) communicate using remote procedure
calls, or RPCs. The RPCs in modern applications fan out across these massively
distributed systems, and finishing a bit of work often means waiting for the
last bit of data to be manipulated or retrieved. As we have explained many
times before, the tail latency of massively distributed applications is often
the determining factor in the overall latency of the application. That is why
the hyperscalers always try to get predictable, consistent latency across all
communication in a network of systems, rather than driving for the lowest
possible average latency and letting tail latencies wander all over the place.
The nanoPU research set out, says Ibanez, to answer this question: What would it
take to absolutely minimize RPC median and tail latency as well as software
processing overheads?
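The arithmetic behind the fan-out argument is short. Suppose a request fans out to N leaf servers and must wait for all of them. If each leaf independently exceeds some latency threshold with probability p, the request is slow whenever any leaf is slow, which happens with probability 1 - (1 - p)^N. This sketch uses that simplified model (independent leaves, a single threshold). It is not the nanoPU authors' analysis.

```python
def p_request_slow(p_leaf_slow: float, fanout: int) -> float:
    """Probability that at least one of `fanout` independent leaves is slow."""
    return 1.0 - (1.0 - p_leaf_slow) ** fanout


# Each leaf is slow only 1% of the time (beyond its 99th-percentile latency).
for fanout in (1, 10, 100):
    share = p_request_slow(0.01, fanout)
    print(f"fan-out {fanout:>3}: {share:.1%} of requests wait on a slow leaf")
```

With a fan-out of 100, roughly 63% of requests hit at least one slow leaf. At that scale, the leaf's rare tail becomes the request's typical case, which is why consistent tail latency matters more than a low average.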

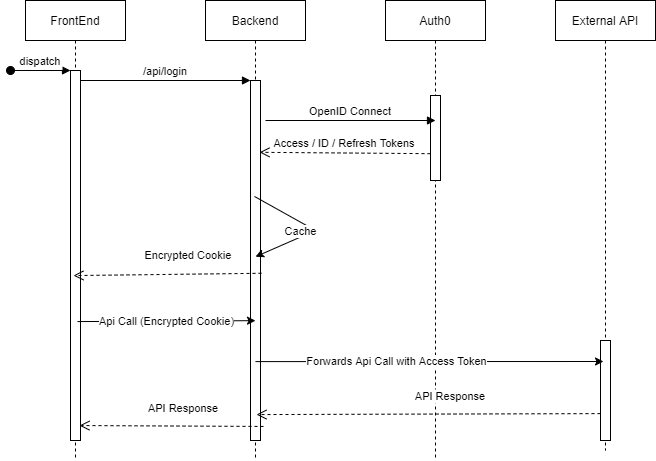

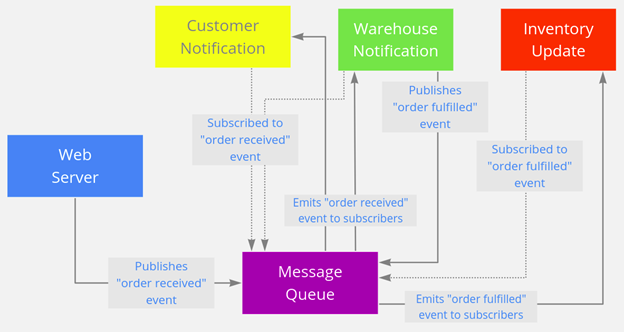

RESTful Applications in An Event-Driven Architecture

There are many use cases where REST is simply the ideal way to build your
applications or microservices. However, there is growing demand for
applications to become real-time. If your application is customer-facing, then
you know customers are demanding a more responsive, real-time service. You
simply cannot afford not to process data in real time anymore, and in many
modern cases batch processing will not be sufficient. RESTful services are
inherently polling-based: a client that wants new data has to keep asking for
it, as opposed to an event-based service that is executed or triggered when an
event occurs. RESTful services are akin to the kid on a road trip continuously
asking you “are we there yet?”, “are we there yet?”, “are we there yet?”, and
just when you think the kid has gotten some sense and will stop bothering you,
he asks again, “are we there yet?”. Additionally, RESTful services communicate
synchronously rather than asynchronously. What does that mean? A synchronous
call is blocking, which means your application cannot do anything but wait for
the response.
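The difference between the two styles can be sketched in a single process. Below, a polling client repeatedly asks whether data is ready. By contrast, an event-driven consumer registers a callback once and is invoked when the producer publishes. The `EventBus` class and the `poll_until_arrived` helper are hypothetical stand-ins for a real message broker and a real REST client.

```python
from collections import defaultdict
from typing import Callable


class EventBus:
    """Minimal in-process publish/subscribe bus (hypothetical, for illustration)."""

    def __init__(self) -> None:
        self._subscribers: dict = defaultdict(list)

    def subscribe(self, topic: str, handler: Callable[[dict], None]) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: dict) -> None:
        for handler in self._subscribers[topic]:
            handler(event)  # consumers are invoked only when an event occurs


def poll_until_arrived(check: Callable[[], bool], max_polls: int = 100) -> int:
    """Polling style: keep asking "are we there yet?"; return how many asks it took."""
    for attempt in range(1, max_polls + 1):
        if check():
            return attempt
    raise TimeoutError("gave up polling")


# Polling: the trip "arrives" on the 5th check, so the client asks 5 times.
ticks = iter(range(1, 101))
polls = poll_until_arrived(lambda: next(ticks) >= 5)

# Event-driven: the consumer is called exactly once, when the event happens.
received = []
bus = EventBus()
bus.subscribe("trip", received.append)
bus.publish("trip", {"status": "arrived"})
print(polls, received)
```

The polling client does work on every check, even when nothing has changed. The subscriber does no work until the producer has something to say.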

Application Security Tools Are Not up to the Job of API Security

With the advent of microservice-based, API-centric architectures, it is
possible to test each individual API as it is developed rather than requiring a
complete instance of an application. This enables a “shift left” approach that
allows early testing of individual components. Because APIs are specified early
in the SDLC and have a defined contract (via an OpenAPI / Swagger
specification), they are ideally suited to a preemptive “shift left” security
testing approach: the API specification and underlying implementation can be
tested in a developer IDE as a standalone activity. Core to this approach is
API-specific test tooling, because the tests need contextual awareness of the
API contract. Existing SAST/DAST tools will be largely unsuitable here. In the
discussion of DAST testing to detect BOLA (broken object level authorization),
we identified the DAST tool's inability to understand the API behavior. By
specifying the API behavior with a contract, the correct behavior can be
enforced and verified, enabling a positive security model as opposed to a
blacklist approach such as DAST.
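A positive security model can be sketched as follows: validate each request against the declared contract and reject anything it does not explicitly allow, instead of searching for known-bad patterns. The contract below is a hand-written dict loosely modeled on an OpenAPI parameter schema, and the ownership check stands in for BOLA protection. Both, along with the endpoint and the `validate` function, are illustrative assumptions, not an OpenAPI library's API.

```python
# Hypothetical contract for GET /accounts/{account_id}, loosely modeled on an
# OpenAPI schema: only the listed fields are allowed, with the given types.
CONTRACT = {
    "path": {"account_id": int},
    "query": {"include_history": bool},
}

# Hypothetical ownership data used for the object-level authorization check.
ACCOUNT_OWNERS = {1001: "alice", 1002: "bob"}


def validate(request: dict, user: str) -> list:
    """Return a list of violations; an empty list means the request is allowed."""
    errors = []
    for section in ("path", "query"):
        allowed = CONTRACT[section]
        for name, value in request.get(section, {}).items():
            if name not in allowed:
                # Allowlist: anything the contract does not declare is rejected.
                errors.append(f"unexpected {section} field: {name}")
            elif not isinstance(value, allowed[name]):
                errors.append(f"{name} must be {allowed[name].__name__}")
    account_id = request.get("path", {}).get("account_id")
    if account_id is None:
        errors.append("missing required field: account_id")
    elif ACCOUNT_OWNERS.get(account_id) != user:
        # Object-level authorization: callers may only read their own account.
        errors.append("caller does not own this account")
    return errors


print(validate({"path": {"account_id": 1002}}, "alice"))
```

Because the default is to reject, an unexpected parameter or another user's object ID fails validation without anyone having to anticipate that specific attack.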

Microservice Architecture – Introduction, Challenges & Best Practices

In a microservice architecture, we break down an application into smaller
services. Each of these services fulfills a specific purpose or meets a specific
business need, for example customer payment management, sending emails, or
notifications. In this article, we will discuss the microservice architecture in
detail, its benefits, and how to get started with it. In simple words, it’s a
method of software development in which we break down an application into
small, independent, and loosely coupled services. Each service has its own
codebase and is developed, deployed, and maintained by a small team of
developers. Because the services do not depend on each other, a team can update
an existing service without rebuilding and redeploying the entire application.
The services communicate with each other through well-defined APIs, and the
internal implementation of each service stays hidden from the others.
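The idea of a "well-defined API with a hidden implementation" can be illustrated with a small sketch. Here, a notification service depends only on a narrow payment interface, so the payments team can swap its implementation without any change on the caller's side. The service names and methods are hypothetical. The in-process `Protocol` also stands in for what would really be a network API between separately deployed services.

```python
from typing import Protocol


class PaymentsAPI(Protocol):
    """The only surface other services may use (the well-defined API)."""

    def payment_status(self, order_id: str) -> str: ...


class InMemoryPayments:
    """One team's implementation; its internal storage is not part of the API."""

    def __init__(self) -> None:
        self._ledger = {"order-1": "paid"}  # internal detail, hidden from callers

    def payment_status(self, order_id: str) -> str:
        return self._ledger.get(order_id, "unknown")


class NotificationService:
    """Another team's service: it knows the API, not the implementation."""

    def __init__(self, payments: PaymentsAPI) -> None:
        self._payments = payments

    def receipt_message(self, order_id: str) -> str:
        status = self._payments.payment_status(order_id)
        return f"Order {order_id}: payment {status}"


service = NotificationService(InMemoryPayments())
print(service.receipt_message("order-1"))
```

Any object that provides `payment_status` with the same signature can replace `InMemoryPayments`. This mirrors how a team can redeploy its own service independently, as long as the API contract stays stable.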

Quote for the day:

"Leadership cannot just go along to get along. Leadership must meet the moral
challenge of the day." -- Jesse Jackson