The Reality Behind The AI Illusion

So far, AI has shown some impressive results in narrow application areas only,

like chess-playing computers beating world chess champions and supercomputers

beating human Jeopardy champions. However, these are computers programmed to

solve one specific problem and cannot interpret more complex and multilayered

challenges beyond the given task. This is exactly what Moravec's paradox states;

though it may be easy to get computers to beat human chess champions, it may be

difficult to give them the skills of a toddler when it comes to perception and

mobility. While AI has not reached human performance, it brings valuable

solutions to many real-world problems quickly and effectively. From enhanced

healthcare, innovations in banking and improved environmental protection to

self-driving vehicles, automated transportation, smart homes and chatbots, AI

can offer simpler and more intelligent ways of accomplishing many of our daily

tasks. But how far can AI go? Will it ever be able to function autonomously and

mimic cognitive human actions? We cannot envision how AI will end up evolving in

the far-off future, but at this point, humans remain smarter than any type of

AI.

Mastering the Data Monetization Roadmap

The Data Monetization Roadmap provides both a benchmark and a guide to help

organizations with their data monetization journey. To successfully navigate the

roadmap, organizations must be prepared to traverse two critical inflection

points. Inflection Point #1, the “Prove and Expand Value” inflection point, is

where organizations transition from data as a cost to be minimized to data as an

economic asset to be monetized. Inflection Point #2, the “Scale Value”

inflection point, is where organizations master the economics of data and

analytics by creating composable, reusable, and continuously refining digital

assets that can scale the organization’s data monetization capabilities.

Carefully navigating these two inflection points enables organizations to

fully exploit the game-changing economic characteristics of data and analytics

assets – assets that never deplete, never wear out, can be used across an

unlimited number of use cases at zero marginal cost, and can continuously learn,

adapt, and refine, resulting in assets that actually appreciate in value the

more that they are used.

Will AI Make Interpreters and Sign Language Obsolete?

One of Google’s newest ASR NLPs is seeking to change the way we interact with

others around us, broadening the scope of where — and with whom — we can

communicate. The Google Interpreter Mode uses ASR to identify what you are

saying, and spits out an exact translation into another language, effectively

creating a conversation between foreign individuals and knocking down language

barriers. Similar instant-translate tech has also been used by SayHi, which

allows users to control how quickly or slowly the translation is spoken. There

are still a few issues in the ASR system. Often called the AI accent gap,

machines sometimes have difficulty understanding individuals with strong accents

or dialects. Right now, this is being tackled on a case-by-case basis:

scientists tend to use a “single accent” model, in which different algorithms

are designed for different dialects or accents. For example, some companies have

been experimenting with using separate ASR systems for recognizing Mexican

dialects of Spanish versus Spanish dialects of Spanish. Ultimately, many of

these ASR systems reflect a degree of implicit bias. In the United States,

African-American Vernacular English ...
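The “single accent” approach described above amounts to routing each speaker to a recognizer built for their dialect. The sketch below illustrates only that routing step. The recognizer functions, the locale tags, and the fallback choice are hypothetical placeholders, not any vendor's API.

```python
# Hypothetical per-dialect recognizers. A real system would wrap trained
# ASR models; these stand-ins only tag the input so the routing is visible.
def recognize_mexican_spanish(audio):
    return f"[es-MX model] {audio}"


def recognize_castilian_spanish(audio):
    return f"[es-ES model] {audio}"


# One recognizer per dialect, keyed by a locale tag (assumed naming).
RECOGNIZERS = {
    "es-MX": recognize_mexican_spanish,
    "es-ES": recognize_castilian_spanish,
}


def pick_recognizer(locale, default="es-ES"):
    """Return the recognizer for a locale, or the default when none exists."""
    return RECOGNIZERS.get(locale, RECOGNIZERS[default])


transcript = pick_recognizer("es-MX")("hola")
fallback = pick_recognizer("es-AR")  # no dedicated model, so the default is used
```

The fallback line shows where an accent gap can come from: a speaker whose dialect has no dedicated model is handled by a recognizer built for someone else.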

Bad cybersecurity behaviors plaguing the remote workforce

Over one quarter of employees admit they made cybersecurity mistakes — some of

which compromised company security — while working from home that they say no

one will ever know about. 27% say they failed to report cybersecurity mistakes

because they feared facing disciplinary action or further required security

training. In addition, just half of employees say they always report to IT when

they receive or click on a phishing email. ... As lockdown restrictions are

lifted, six in 10 IT leaders think the return to business travel will pose

greater cybersecurity challenges and risks for their company. These risks could

include a rise in phishing attacks whereby threat actors impersonate airlines,

booking operators, hotels or even senior executives supposedly on a business

trip. There is also the risk that employees accidentally leave devices on public

transport or expose company data in public places. ... As cybersecurity will be

mission-critical in the new work environment, it’s encouraging that 67% of

surveyed IT decision makers report that they have a seat at the table when it

comes to office reopening plans in their organizations.

Microsoft's new security tool will discover firmware vulnerabilities

Today, ReFirm needs you to provide the firmware files, but Microsoft plans to

create a database of device information, Weston says. "You plug in CyberX and it

discovers the devices, it monitors them and it asks ReFirm 'do you know anything

about IoT device X or Y'. Hopefully we've pre-scanned most of those devices and

we can propagate the information -- and for anything we don't have, there's the

drag-and-drop interface to do a custom analysis." Having that visibility of

what's on your network and whether it's safe to have on your network is a good

first step. The Azure Device Updates service can already push IoT firmware

updates out through Windows Update. Microsoft's bigger vision is to create a

service based on Windows Update that can handle a much wider range of

third-party devices, says Weston. "We're going to take Windows Update, which

people already at least know and trust on Patch Tuesdays, and we want to push

the IoT and edge devices into that model. Microsoft's update system is a pretty

known commodity -- just about every government regulator out there looked at it

in one form or another -- and so we feel good about being able to move customers

towards it."

Deep Learning, XGBoost, Or Both: What Works Best For Tabular Data?

Today, XGBoost has grown into production-quality software that can process

huge swathes of data in a cluster. In the last few years, XGBoost has added

multiple major features, such as support for NVIDIA GPUs as a hardware

accelerator and distributed computing platforms including Apache Spark and Dask.

However, there have been several claims recently that deep learning models

outperformed XGBoost. To test these claims, a team at Intel published a survey

on how well deep learning works for tabular data and whether XGBoost's superiority is

justified. The authors explored whether DL models should be a recommended option

for tabular data by rigorously comparing the recent works on deep learning

models to XGBoost on a variety of datasets. The study showed XGBoost

outperformed DL models across a wide range of datasets and the former required

less tuning. However, the paper also suggested that an ensemble of the deep

models and XGBoost performs better on these datasets than XGBoost alone. For the

experiments, the authors examined DL models such as TabNet, NODE, DNF-Net,

and 1D-CNN, along with an ensemble that includes five different classifiers: TabNet,

NODE, DNF-Net, 1D-CNN, and XGBoost.
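The excerpt does not say how the ensemble combines its members, so the following is only a minimal sketch of one common option: averaging each model's predicted class probabilities (soft voting). The function names and the probability values are made up for illustration, and no real model is trained here.

```python
def average_probabilities(per_model_probs, weights=None):
    """Combine per-model class probabilities by (weighted) averaging.

    per_model_probs maps a model name to a list of rows, where each row
    holds that model's predicted probability for every class.
    """
    names = list(per_model_probs)
    if weights is None:
        # Assumption: uniform weights when none are supplied.
        weights = {name: 1.0 for name in names}
    total = sum(weights[name] for name in names)
    first = per_model_probs[names[0]]
    combined = []
    for i in range(len(first)):
        row = [
            sum(weights[n] * per_model_probs[n][i][c] for n in names) / total
            for c in range(len(first[i]))
        ]
        combined.append(row)
    return combined


def predict_labels(probabilities):
    """Pick the index of the most probable class for each row."""
    return [max(range(len(row)), key=row.__getitem__) for row in probabilities]


# Illustrative probabilities for two samples and two classes (not real results).
example = {
    "xgboost": [[0.60, 0.40], [0.30, 0.70]],
    "tabnet": [[0.40, 0.60], [0.20, 0.80]],
    "node": [[0.70, 0.30], [0.45, 0.55]],
}
ensemble_probs = average_probabilities(example)
ensemble_labels = predict_labels(ensemble_probs)
```

In practice the per-model probabilities would come from each fitted model's prediction call, and the weights could be tuned on a validation set instead of being left uniform.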

Insider Versus Outsider: Navigating Top Data Loss Threats

While breaches from outside cybercriminals are becoming more complex and require

more resources to combat, companies mustn’t lose sight of a data-loss cause

closer to home – their employees. In their day-to-day positions, employees are

entrusted with highly sensitive information, from financial and personally

identifiable information (PII) to medical records or intellectual property.

While employee error is a major source of security breaches, a well-trained

employee who knows how to take the proper precautions is a key defense against

attacks and breaches. Over the course of their daily responsibilities, employees

can mistakenly share that information outside of the secure network. Often, this

data loss occurs through email, such as mentioning restricted information in

outside correspondence or attaching documents that may violate customer or

patient privacy. For example, let’s say that an employee is working on a

presentation that contains confidential data. They hit a roadblock while trying

to fix a formatting issue and in their race to meet the looming deadline, they

decide to reach out to a friend for help and send the presentation via email

with the confidential data included.

Lawmakers Urge Private Sector to Do More on Cybersecurity

Treating cybersecurity as a core business risk and devoting the appropriate

resources to it is now essential, said Tom Kellermann, head of cybersecurity

strategy at software firm VMware Inc., who also sits on the Secret Service’s

Cyber Investigation Advisory Board. “Cybersecurity should no longer be viewed as

an expense, but a function of conducting business,” he said. Christopher

Roberti, senior vice president for cyber, intelligence and supply chain security

policy at the U.S. Chamber of Commerce, which says it is the world’s largest

business association, said companies don’t stand a chance against determined

nation-state attacks regardless of cybersecurity investments. Partnerships

between the government and the private sector are essential, he said.

“Businesses must take necessary steps to ensure their cyber defenses are robust

and up to date, and the U.S. government must act decisively against cyber

criminals to deter future attacks. Each has a role to play and both need to work

closely to do more,” Mr. Roberti said.

AI Centers Of Excellence Accelerate AI Industry Adoption

It is important to note that there are several functional and operational models

that enterprises are adopting for the CoE. The change management model

focuses on emphasizing the prospective innovation that artificial intelligence

can provide for business stakeholders in the organization. Central to this model

is education and training of executives and business units. In addition to

change management, the Sandbox approach is another central model, in which the

CoE acts as the company’s hub of innovation and R&D. This model emphasizes

proofs of concepts and different emerging technologies. The key is alignment of

business units around POCs and being accountable for the initial launch and

development of per-subject use cases. Lastly, the Launchpad model for the CoE

leverages and builds upon the capabilities of existing data scientists,

engineers, and developers. The CoE deploys top subject-matter experts across

departments to conduct hands-on training and education and scope out early stage

business solutions.

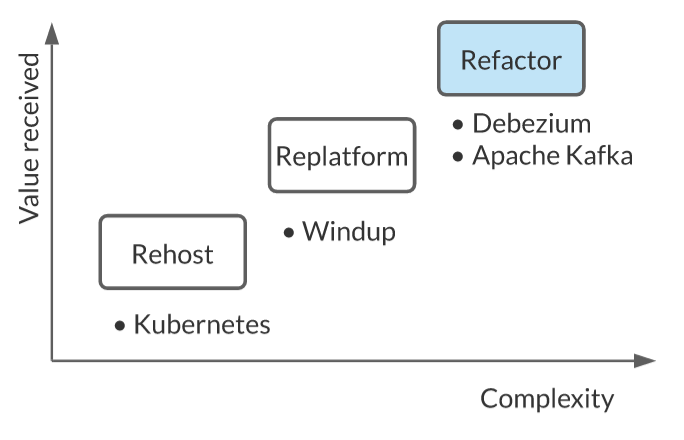

Kubernetes: 5 tips we wish we knew sooner

“One thing that’s better to learn earlier than later with Kubernetes is that

automation and audits have an interesting relationship: automation reduces human

errors, while audits allow humans to address errors made by automation,” Andrade

notes. You don’t want to automate a flawed process. It’s often wise to take a

layered approach to container security, including automation. Examples include

automating security policies governing the use of container images stored in

your private registry, as well as performing automated security testing as part

of your build or continuous integration process. Check out a more detailed

explanation of this approach in 10 layers of container security. Kubernetes

operators are another tool for automating security needs. “The really cool thing

is that you can use Kubernetes operators to manage Kubernetes itself – making it

easier to deliver and automate secured deployments,” as Red Hat security

strategist Kirsten Newcomer explained to us. “For example, operators can manage

drift, using the declarative nature of Kubernetes to reset any unsupported

configuration changes.”
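The drift management described in the quote follows a reconcile pattern: compare the declared configuration with what is actually running, and reset anything that differs. The sketch below shows that idea in plain Python under simplifying assumptions. It calls no Kubernetes API, and the setting names, image path, and helper functions are hypothetical.

```python
def find_drift(desired, observed):
    """Return {setting: (observed_value, desired_value)} for each mismatch."""
    drift = {}
    for key, want in desired.items():
        have = observed.get(key)
        if have != want:
            drift[key] = (have, want)
    return drift


def reconcile(desired, observed):
    """Return a corrected copy of the observed state plus the drift found.

    Only settings named in the declared configuration are reset; anything
    else in the observed state is left untouched in this simplified sketch.
    """
    drift = find_drift(desired, observed)
    corrected = dict(observed)
    for key, (_, want) in drift.items():
        corrected[key] = want
    return corrected, drift


# Hypothetical declared configuration and a live state that was hand-edited.
declared = {"replicas": 3, "image": "registry.example.com/app:1.4", "runAsNonRoot": True}
live = {"replicas": 3, "image": "registry.example.com/app:1.4", "runAsNonRoot": False}

fixed, drift = reconcile(declared, live)
```

A real operator would run this comparison continuously against the cluster's API and apply the corrections there. Logging each drift entry before resetting it also leaves a record for the audits mentioned earlier in the section.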

Quote for the day:

"Well, I think that - I think

leadership's always been about two main things: imagination and courage." --

Paul Keating
