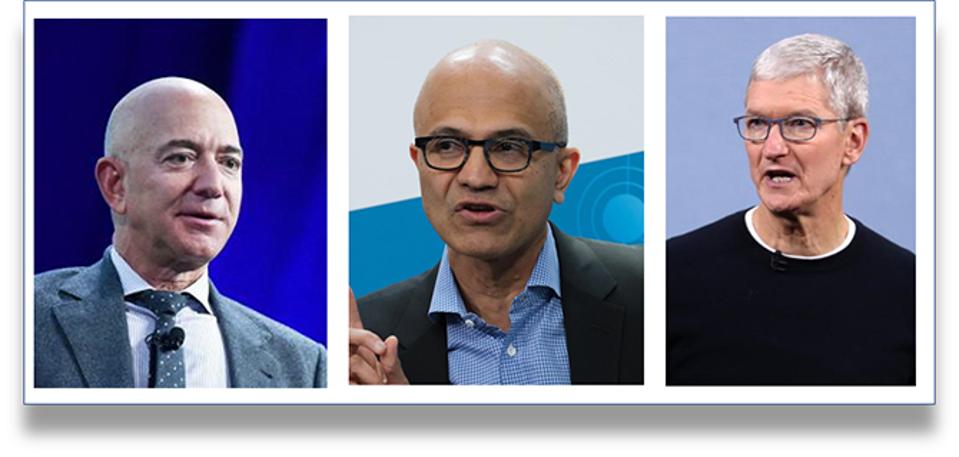

Study Reveals a ‘Skills Gap’ That Jeopardizes Future of Banking Workforce

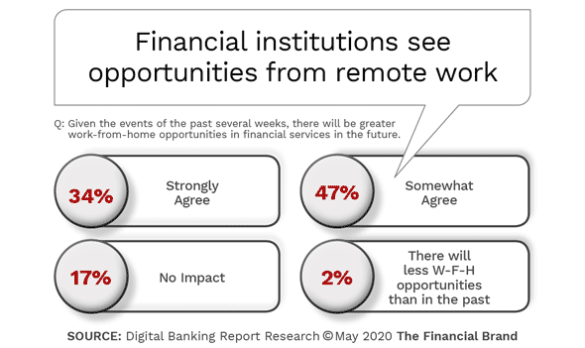

Over a period of only a couple of months, entire workforces were required to

familiarize themselves with digital tools that were never needed in a

traditional work environment. At the same time, financial institutions were

required to connect with customers using mobile apps, online tools and digital

engagement capabilities that were foreign to many. The impact of these changes

was felt most by the employees who had been with their financial institution

the longest or were in areas of an organization that had not adjusted to

recent marketplace realities. Many financial institutions responded to

internal and external digital needs with mid-term solutions, understanding

that significantly more is needed. The impact of COVID-19 has forced banks and

credit unions to quickly assess the digital competency of their teams, while

looking to internal training and the marketplace to provide longer term

solutions. This comes at a time when every industry is looking to address a

massive digital and technology skills gap. The research from the Digital

Banking Report found that 72% of financial services executives believed there

was either a moderate (37%) or significant (35%) skills gap. Fewer than three

in ten thought there was only a minor or no threat.

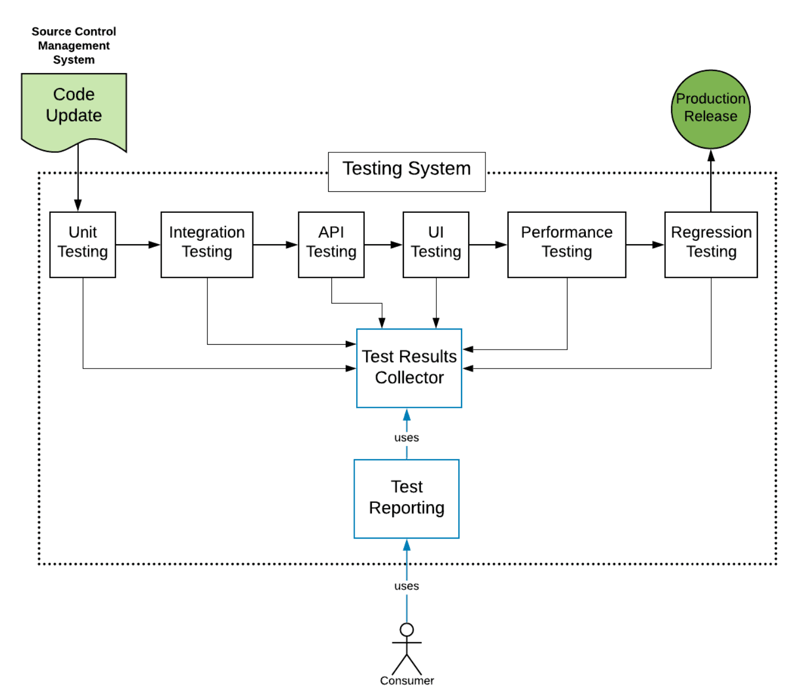

Deployment and Productionization of Machine Learning Models

A machine learning infrastructure encompasses almost every stage of the machine

learning workflow. To train, test, and deploy machine learning models you need

services from data scientists, data engineers, software engineers, and

DevOps engineers. The infrastructure allows people from all these domains to

collaborate and empowers them to work together on the end-to-end execution of the

project. Some examples of tools and platforms are AWS (Amazon Web Services),

Google Cloud, Microsoft Azure Machine Learning Studio, and Kubeflow (a

machine learning toolkit for Kubernetes). Architecture deals with the

arrangement of these components (the things discussed above) and also takes care of

how they must interact with each other. Think of it as building a machine learning

home where bricks, concrete, and iron are integral to the infrastructure,

applications, etc. The architecture shapes our home by using these materials.

Similarly, the architecture here provides that interaction among these

components. ... In machine learning, different models are built for the given

data, and we keep track of them with version control tools like DVC and Git.

Version control keeps track of the changes made to the model at each stage and

stores them in a repository.
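
The idea of tracking model changes at each stage can be illustrated with a small sketch. This is not how DVC or Git work internally; it is a toy, standard-library-only example that assumes a model saved as a single file and a JSON registry. The names `record_version` and `registry.json` are hypothetical and chosen only for illustration.

```python
import hashlib
import json
from pathlib import Path


def file_digest(path: Path) -> str:
    """Return the SHA-256 hex digest of a file's contents."""
    return hashlib.sha256(path.read_bytes()).hexdigest()


def record_version(model_path: Path, registry_path: Path, stage: str) -> bool:
    """Append a registry entry if the model file changed since the last entry.

    Returns True when a new version was recorded, False when nothing changed.
    The registry is a plain JSON list (an assumption made for this sketch).
    """
    history = json.loads(registry_path.read_text()) if registry_path.exists() else []
    digest = file_digest(model_path)
    if history and history[-1]["sha256"] == digest:
        return False  # same bytes as the last recorded version
    history.append({"stage": stage, "file": model_path.name, "sha256": digest})
    registry_path.write_text(json.dumps(history, indent=2))
    return True
```

Calling `record_version(Path("model.bin"), Path("registry.json"), "baseline")` after each training stage would leave a history of content hashes. Real version control tools add much more on top of this, such as storing the artifacts themselves and restoring earlier versions.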

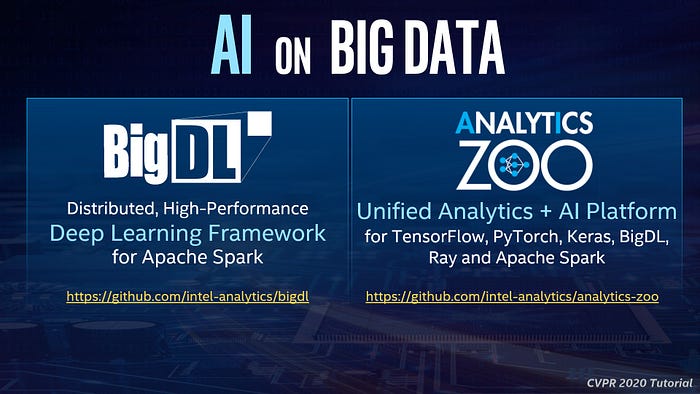

Seamlessly Scaling AI for Distributed Big Data

Conventional approaches usually set up two separate clusters, one dedicated to

Big Data processing, and the other dedicated to deep learning (e.g., a GPU

cluster), with a “connector” (or glue code) deployed in between. Unfortunately,

this “connector approach” not only introduces a lot of overhead (e.g., data

copy, extra cluster maintenance, fragmented workflow, etc.), but also suffers

from impedance mismatches that arise from crossing boundaries between

heterogeneous components (more on this in the next section). To address these

challenges, we have developed open source technologies that directly support

new AI algorithms on Big Data platforms. ... Before diving into the technical

details of BigDL and Analytics Zoo, I shared a motivating example in the

tutorial. JD is one of the largest online shopping websites in China; they

have stored hundreds of millions of merchandise pictures in HBase, and built

an end-to-end object feature extraction application to process these pictures

(for image-similarity search, picture deduplication, etc.). While object

detection and feature extraction are standard computer vision algorithms, this

turns out to be a fairly complex data analysis pipeline when scaling to

hundreds of millions of pictures in production, as shown in the slide below.

‘Undeletable’ Malware Shows Up in Yet Another Android Device

While it was not immediately obvious that the trojan was present on the

device, researchers were able to detect it given its similarity to another

malware downloader. “Proof of infection is based on several similarities to

other variants of Downloader Wotby,” Collier explained. “Although the infected

Settings app is heavily obfuscated, we were able to find identical malicious

code. Additionally, it shares the same receiver name: com.sek.y.ac; service

name: com.sek.y.as; and activity names: com.sek.y.st, com.sek.y.st2, and

com.sek.y.st3.” The app did not trigger any malicious activity when

researchers analyzed the device, which they expected; however, the smartphone

they examined also did not have a SIM card installed, which also could affect

how the malware behaves, he said. “Nevertheless, there is enough evidence that

this Settings app has the ability to download apps from a third-party app

store,” he wrote. “This is not okay.” The other malware variant came

preinstalled in the UL40’s Wireless Update app, which functions as the

device’s main way of updating security patches, the operating system and other

apps.
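
The name-based part of that comparison can be sketched as a simple set intersection. The component names below are the ones quoted in the excerpt; everything else is an assumption. In particular, the list of declared components is hypothetical input, and how it would be extracted from an app is outside this sketch. The researchers also relied on matching malicious code, which a name check does not cover.

```python
from typing import Iterable

# Component names quoted in the excerpt above.
WOTBY_COMPONENTS = frozenset({
    "com.sek.y.ac",   # receiver
    "com.sek.y.as",   # service
    "com.sek.y.st",   # activity
    "com.sek.y.st2",  # activity
    "com.sek.y.st3",  # activity
})


def matching_indicators(declared: Iterable[str]) -> list[str]:
    """Return the declared component names that match a known indicator, sorted."""
    return sorted(WOTBY_COMPONENTS.intersection(declared))


if __name__ == "__main__":
    # Hypothetical list of components declared by an app under review.
    declared = ["com.android.settings.Settings", "com.sek.y.ac", "com.sek.y.st2"]
    print(matching_indicators(declared))
```

An empty result does not show that an app is clean; it only means none of these specific names were declared.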

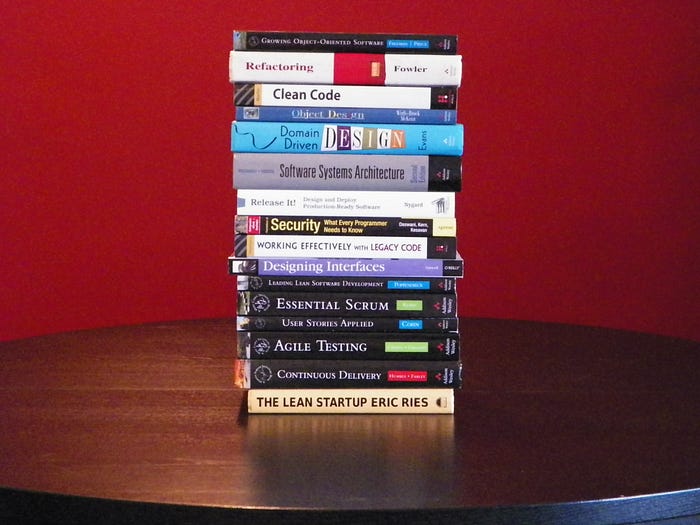

6 Coding Books Every Programmer and Software Developer Should Read

Refactoring: Improving the Design of Existing Code: This book uses Java as

its principal language, but the concepts and ideas are

applicable to any object-oriented language, like C++ or C#. This book will

teach you how to convert mediocre code into great code that can stand

production load and the real-world software development nightmare: CHANGE. The

great part is that Martin literally walks you through the steps by taking code you

often see and then converting it, step by step, into more flexible, more usable

code. You will learn the true definition of clean code by going through his

examples. ... The Art of Unit Testing: If there is one thing I would

like to improve in projects, as well as in programmers, it is their ability to unit

test. After so many years of recognition that unit testing is a must-have

practice for a professional developer, you will hardly find developers who

are well versed in unit testing and follow TDD. Though I am not strict about

following TDD, at a bare minimum you must write unit tests for the code

you wrote and also for the code you maintain. Projects are no different:

apart from open source projects, many commercial in-house enterprise projects

suffer from a lack of unit tests.

How to become an effective software development manager and team leader

I learn by doing, and I learn from others. So first of all, I don't think

anyone is born with these skills. I mean, some people are better communicators

than other people, but a lot of the things that you actually have to learn

like how to manage somebody, how to... the good news is it can be learned and

the way I learned it is by doing and getting better every time I did it. But I

was also fortunate that I was able to surround myself with really great people

every step along the way, both in Drupal and at Acquia frankly. So surrounding

yourself with experienced managers, or experienced leaders is very helpful and

fast tracks that learning, right? ... I think about it almost every day

actually. But I prioritize it lower than a lot of other things that I do.

Literally, when I wake up I try to think, "What should I do today that has the

biggest impact on Drupal and Acquia?" It's almost never coding for me,

unfortunately. I secretly hope it would be one day it's like, "Wow, go code.

Go write this piece of code." But it usually involves unblocking other people

or teams, or helping to fundraise for the Drupal Association right now. So the

coding is often reserved for evenings and weekends. I like to dabble with code

still.

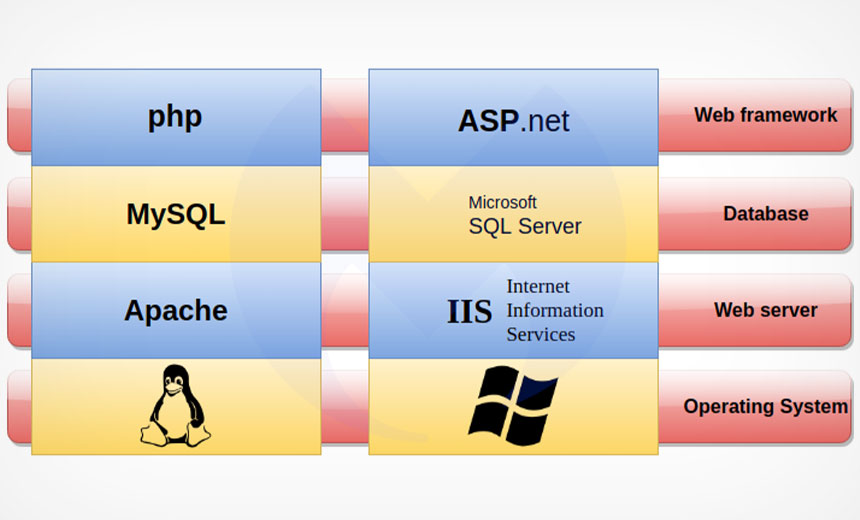

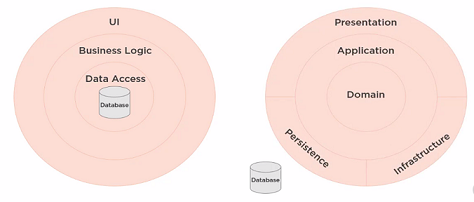

Whiteapp ASP.NET Core using Onion Architecture

Onion Architecture is an architectural pattern introduced by Jeffrey Palermo in 2008

to solve problems in maintaining applications. Traditional

architecture was usually implemented as a database-centric architecture.

Onion Architecture is based on the inversion of control principle. It is

composed of concentric layers around the domain, where layers interface with each

other towards the Domain (Entities/Classes). The main benefit of Onion

Architecture is higher flexibility and decoupling. In this approach, we can

see that all the layers depend only on the Domain layer (sometimes

called the Core layer). ... Testability: Because all layers are decoupled,

it is easy to write test cases for each component; Adaptability/Enhancement:

Adding a new way to interact with the application is very easy;

Sustainability: We can keep all third-party libraries in the Infrastructure layer,

so maintenance will be easy; Database Independence: Since the database

is separated from data access, it is quite easy to switch database

providers; Clean code: As business logic is kept away from the presentation layer,

it is easy to implement the UI.
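
The dependency direction described above can be shown in a few lines. The article targets ASP.NET Core; the sketch below uses Python only for illustration. All names (`Customer`, `CustomerRepository`, `CustomerService`, `InMemoryCustomerRepository`) are hypothetical and do not come from the Whiteapp project.

```python
from dataclasses import dataclass
from typing import Optional, Protocol


# --- Domain (Core) layer: depends on nothing else ---
@dataclass(frozen=True)
class Customer:
    customer_id: int
    name: str


class CustomerRepository(Protocol):
    """Interface owned by the core; outer layers implement it."""

    def get(self, customer_id: int) -> Optional[Customer]: ...

    def add(self, customer: Customer) -> None: ...


# --- Service layer: depends only on the core ---
class CustomerService:
    def __init__(self, repository: CustomerRepository) -> None:
        self._repository = repository  # injected (inversion of control)

    def register(self, customer_id: int, name: str) -> Customer:
        if self._repository.get(customer_id) is not None:
            raise ValueError(f"customer {customer_id} already exists")
        customer = Customer(customer_id, name.strip())
        self._repository.add(customer)
        return customer


# --- Infrastructure layer: a swappable implementation detail ---
class InMemoryCustomerRepository:
    def __init__(self) -> None:
        self._rows: dict[int, Customer] = {}

    def get(self, customer_id: int) -> Optional[Customer]:
        return self._rows.get(customer_id)

    def add(self, customer: Customer) -> None:
        self._rows[customer.customer_id] = customer
```

Because `CustomerService` only sees the `CustomerRepository` interface, a test can pass in the in-memory implementation, and a database-backed class could replace it later without touching the service. That is the testability and database independence the list refers to.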

In the age of disruption, comprehensive network visibility is key

In an age of dynamic disruption, IT is increasingly challenged to maintain

optimal service delivery, while implementing remote working at an

unprecedented scale. It’s not surprising, then, that nearly 60 percent of

study respondents cite the need for greater visibility into remote user

experiences. The top challenge for troubleshooting applications is the ability

to understand end-user experience (nearly 47 percent). “As remote working

becomes the new norm, IT teams are challenged to find and adapt technologies,

such as flow-based reporting to manage bandwidth consumption, VPN

oversubscription and troubleshooting applications. To guarantee the best

performance and reduce cybersecurity threats, increasing network visibility is

now a must for all businesses,” said Charles Thompson, Senior Director,

Enterprise and Cloud, VIAVI. “By empowering NetOps, as well as application and

security teams with network visibility, IT can mitigate the impact of

disruptive migrations, incidents and new technologies like SD-WAN to achieve

consistent operational excellence.”

Prepare for Artificial Intelligence to Produce Less Wizardry

“Deep neural networks are very computationally expensive,” says Song Han, an

assistant professor at MIT who specializes in developing more efficient forms

of deep learning and is not an author on Thompson’s paper. “This is a critical

issue.” Han’s group has created more efficient versions of popular AI

algorithms using novel neural network architectures and specialized chip

architectures, among other things. But he says there is “still a long way to

go” to make deep learning less compute-hungry. Other researchers have

noted the soaring computational demands. The head of Facebook’s AI research

lab, Jerome Pesenti, told WIRED last year that AI researchers were starting to

feel the effects of this computation crunch. Thompson believes that, without

clever new algorithms, the limits of deep learning could slow advances in

multiple fields, affecting the rate at which computers replace human tasks.

“The automation of jobs will probably happen more gradually than expected,

since getting to human-level performance will be much more expensive than

anticipated,” he says.

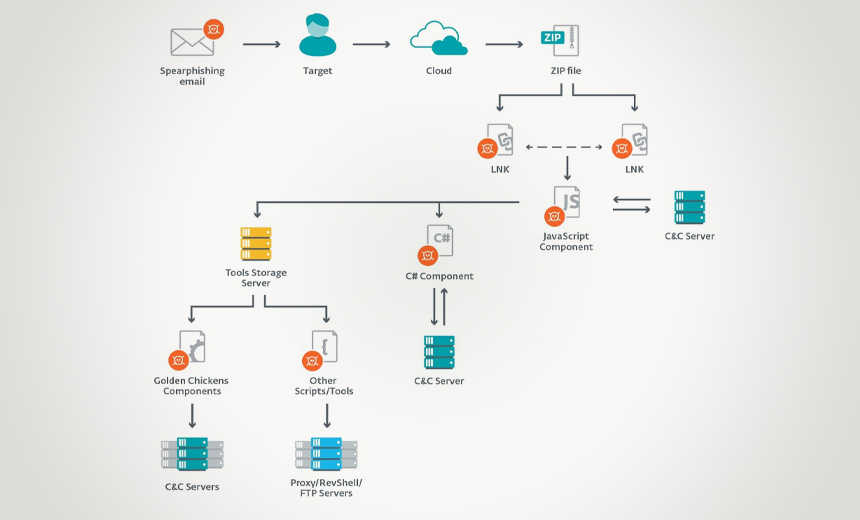

Ransomware Characteristics and Attack Chains – What you Need to Know about Recent Campaigns

Ransomware is a type of malware that prevents users from accessing their

system or personal files and demands a “ransom payment” in order to regain

access. There are two types of ransomware campaigns, “human-operated” and

“auto-spreading”; this article focuses on the human-operated campaigns.

Human-operated campaigns tend to have common attack patterns, which include

gaining initial access, credential theft, lateral movement and persistence.

For many of the human-operated campaigns, typical access comes from RDP brute

force, a vulnerable internet-facing system, or weak application settings. Once

attackers have gained access they can deploy a plethora of tools to get user

credentials. After gaining credentials, lateral movement takes place by

deploying a widely known commercial penetration testing suite called

Cobalt Strike, changing WMI (Windows Management Instrumentation) settings, or

abusing management tools with low-level privileges. Finally, attackers want to

keep a connection and make it persistent; this is done by creating new

accounts, making GPO (Group Policy Object) changes, creating scheduled tasks,

manipulating service registration, or by deploying shadow tools.

Quote for the day: