300+ Terrifying Cybercrime and Cybersecurity Statistics & Trends

With global cybercrime damages predicted to cost up to $6 trillion annually by 2021, not getting caught in the landslide is a matter of taking in the right information and acting on it quickly. We collected and organized over 100 up-to-date cybercrime statistics that highlight: The magnitude of cybercrime operations and impact; The attack tactics bad actors used most frequently in the past year

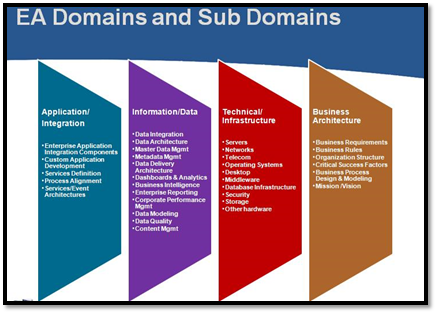

How user behavior is changing and how it… isn’t; What cybersecurity professionals are doing to counteract these threats; How different countries fare in terms of fighting off blackhat hackers and other nation states; and What can be done to keep data and assets safe from scams and attacks. Dig into these surprising (and sometimes mind-boggling) internet security statistics to understand what’s going on globally and discover how several countries fare in protecting themselves. The article includes a handy infographic you can browse to see how each stat is connected to the others, and plenty of visual representations of the most important facts and figures in information security today.

How Blockchain And AI Can Help Master Data Management

Ensuring data security is vital, not only for ethical purposes but also for compliance with regulatory bodies. And no conversation about security and privacy, in this day and age, is complete without the mention of blockchain. Blockchain, which is often considered to be synonymous with privacy, can be used to secure sensitive information that makes up master data. This includes any personal information, such as that pertaining to customers and employees. It can also refer to accounting and banking-related information that may be necessary for processes like procurement and sales. All such information can be secured using blockchain through cryptographic hashing. Businesses can internally build enterprise blockchain networks to secure and manage master data using a decentralized model. It not only secures the information from illicit modification, but also from accidental loss due to physical damage to centralized servers. Additionally, it also helps in compliance with privacy regulations in an easily demonstrable manner. This is because data on a blockchain, in addition to being immutable, is also transparent and visible to all participants, ensuring smoother audits and checks.
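To make the idea of securing records through cryptographic hashing a little more concrete, here is a minimal Python sketch of a hash chain over master data records. It is an illustration only, not the article's method or any particular blockchain product: the function names (`record_hash`, `build_chain`, `verify_chain`), the record fields, and the all-zero genesis hash are assumptions made for this example, and a real enterprise blockchain would add distribution across nodes, consensus, and access control on top of this.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # assumed placeholder for the first block's predecessor


def record_hash(record: dict, prev_hash: str) -> str:
    """Hash a record together with the hash of the block before it."""
    payload = json.dumps(record, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def build_chain(records: list) -> list:
    """Link records so that each block commits to everything before it."""
    chain = []
    prev = GENESIS_HASH
    for record in records:
        current = record_hash(record, prev)
        chain.append({"record": record, "prev_hash": prev, "hash": current})
        prev = current
    return chain


def verify_chain(chain: list) -> bool:
    """Recompute every hash; any edited record breaks the chain."""
    prev = GENESIS_HASH
    for block in chain:
        if block["prev_hash"] != prev:
            return False
        if record_hash(block["record"], prev) != block["hash"]:
            return False
        prev = block["hash"]
    return True


# Hypothetical master data records, for illustration only
records = [
    {"customer_id": 1, "name": "Acme Ltd", "iban": "XX00-0001"},
    {"customer_id": 2, "name": "Globex", "iban": "XX00-0002"},
]
chain = build_chain(records)
print(verify_chain(chain))

# Tampering with a stored record is detected on the next verification
chain[0]["record"]["iban"] = "XX00-9999"
print(verify_chain(chain))
```

Because each block's hash covers the previous block's hash, changing any earlier record invalidates every later block, which is what makes unauthorized modification easy to detect during an audit.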

Developing a Functional Data Governance Framework

Harvard Business Review reports 92 percent of executives say their Big Data and AI investments are accelerating, and 88 percent talk about a greater urgency to invest in Big Data and AI. In order for AI and machine learning to be successful, Data Governance must also be a success. Data Governance remains elusive to the 87 percent of businesses which, according to Gartner, have lower levels of Business Intelligence. Recent news has also suggested a need to improve Data Governance processes. Data breaches continue to affect customers and the impacts are quite broad, as an organization’s customers (including banks, universities, and pharmaceutical companies) must continually take stock and change their user names and passwords. Effective Data Governance is a fundamental component of data security processes. Data Governance has to drive improvements in business outcomes. “Implementing Data Governance poorly, with little connection or impact on business operations will just waste resources,” says Anthony Algmin, Principal at Algmin Data Leadership. To mature, Data Governance needs to be business-led and a continuous process, as Donna Burbank and Nigel Turner emphasize.

Survey: Data-center staffing shortage remains challenging

Contributing to the staffing crisis is a lack of workplace diversity. In particular, the Uptime Institute’s research highlights a significant gender imbalance: 25 percent of managers surveyed have no women among their design, build or operations staff, and another 54 percent have 10 percent or fewer women on staff. Only 5 percent of respondents said women represent 50 percent or more of staff. Yet most respondents don’t seem to think there’s anything deterring women from working where they work. A majority (85 percent) said it’s easy for women to pursue a career in their respective organization’s data center team or department; just 15 percent said it’s difficult. Referring to the data-center industry as a whole, respondents were less confident about women’s employment prospects: 53 percent said it’s easy for women to pursue a career in data centers, and 47 percent said it’s difficult. In the big picture, diversity issues could become a threat to business operations. “Study after study shows that a lack of diversity is not just a pipeline issue,” Ascierto said.

How banks can use ecosystems to win in the SME market

In parallel to designing the prototype, banks need to think through IT implications at the outset. The design choices will significantly affect the speed of development and the potential reach of the new solution. A design based on integration with an existing banking app might command a larger audience than a new stand-alone application—yet the latter typically offers more flexibility. The choice of a platform should be wedded to the monetization approach (see the “Think early about monetization” section). If the bank wants to retain the option of spinning off an ecosystem platform in the future, or listing it separately, its IT should not be enmeshed with the bank’s legacy systems. Nor can it be completely divorced: efficient transfer of information between the two systems is needed to maximize value for both banking and nonbanking offerings. IT is a key driver of costs and of the ecosystem design and business model. For instance, a Western European bank decided to integrate its ecosystem solution with its mobile banking platform.

The New Addition to the Dell EMC Ready Solutions for AI Portfolio

The Deep Learning with Intel solution joins the growing portfolio of Dell EMC Ready Solutions for AI and was unveiled today at International Super Computing in Frankfurt. This integrated hardware and software solution is powered by Dell EMC PowerEdge servers, Dell EMC PowerSwitch networking, and scale-out Isilon NAS storage and leverages the newest AI capabilities of Intel’s 2nd Generation Intel® Xeon® Scalable processor microarchitecture, Nauta open source software and includes enterprise support. The solution empowers organizations to deliver on the combined needs of their data science and IT teams and leverages deep learning to fuel their competitiveness. Dell Technologies Consulting Services help customers implement and operationalize Ready Solution technologies and AI libraries, and scale their data engineering and data science capabilities. Once deployed, ProSupport experts provide comprehensive hardware and collaborative software support to help ensure optimal system performance and minimize downtime.

5G in the UK — overhyped or has the next era of connectivity really begun?

The availability of 5G is dependent on local operators (EE, O2, Vodafone, etc.); businesses are relying on them to build it out and drive its capabilities. These businesses will need connectivity across multiple networks and so, while an operator race is developing, it’s important that every network is competitive. Unfortunately, this is not a priority for heated competitors. Over the last two months, operators have revealed their capabilities, but they’re very focused on their own networks. It’s unlikely that they will have even started to talk about how to make that available to other partners, or asked how to support sharing across partners within the network, which, as is the case with other technology changes, typically comes in a second phase. “Typically, the first phase is to build out and scale up within their own networks; and then the next phase is asking how do you do interoperability and interworking between the networks,” said Sherwood. “That’s even further away from scale. ... ”

Could AI Enable The Idea Of 'Reverse Fact Checking'?

Fact checking today is a reactive process in which journalists wait for a falsehood to begin spreading virally and then publish their final verdict long after the falsehood’s spread has tapered off and the damage done. Much of this delay stems from the amount of time and research it takes for fact checkers to investigate a claim and determine its veracity. What if we inverted this process and required every social media post to provide external attribution for its claims and used deep learning algorithms to compare the statements in the post to the original material it cites as its source? Could this “reverse fact checking” largely curb the spread of digital falsehoods? The greatest limitation of today’s fact checking landscape is the time and effort it takes fact checkers to investigate a claim. Collecting evidence, reaching out to organizations and experts for commentary and summarizing the resulting information into a final verdict is an extremely time-consuming process that offers few opportunities for efficient scaling.

Identity and access management: mitigating password-related cyber security risks

The death of the password has been heralded since Hewlett Packard introduced biometric fingerprint scanning into laptops in the mid-1990s. But it is still pervasive. Biometrics have become more common in personal devices and mobile devices, it’s true. But there is still a range of applications out there that are hugely dependent on passwords as their primary method of authentication. In any enterprise or small business, there’s still a heavy reliance on passwords, and often businesses don’t even know the extent to which applications are being used in the business. IT might know about the common apps that are used in that organisation, but they may have no visibility of the applications that some departments have adopted autonomously. To give an example, My1Login worked with one smaller organisation that thought it had about 40 applications in use across the business. When they switched My1Login’s solution on, the technology discovered there were actually 600 corporate applications being used. All of these are now integrated fully with a single sign-on.

Five Android and iOS UI Design Guidelines for React Native

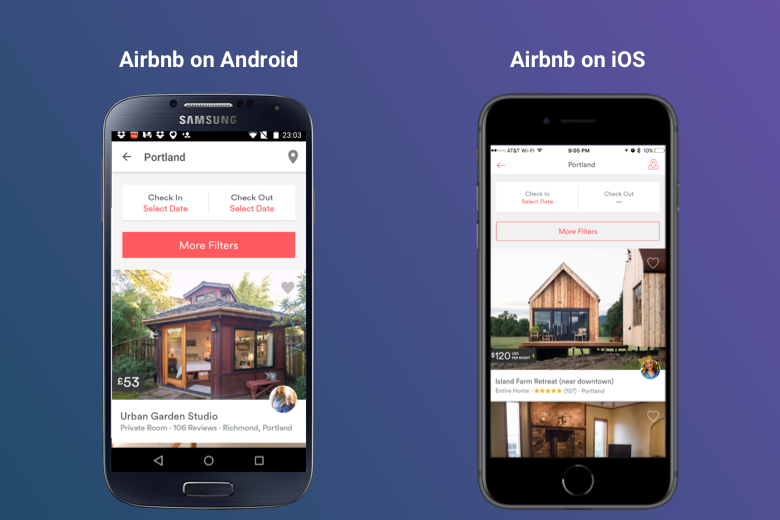

In a multiplatform approach, the designer is bound by the guidelines for each platform. This approach is more useful if your application has a complex UI and your main goal is to attract users who are more likely to spend their time on their favorite platform, be it iOS or Android. Going by the above example of a search bar, an Android user is more likely to be comfortable with the look and feel of the standard search bar of an Android app. This contrasts with an iPhone user, who will not be comfortable with the standard Android search bar. So, in a multiplatform approach, you strive to give each user the kind of look and feel they are used to. Let’s have a look at a more realistic example in order to have a clearer picture of what the multi-platform approach entails: Airbnb. As you can see in the image below, the versions of the Airbnb app for iOS and Android look entirely different and the reason for that is they follow design guidelines which are totally platform-specific.

Quote for the day:

"Blessed are the people whose leaders can look destiny in the eye without flinching but also without attempting to play God" - Henry Kissinger