If you develop software with Microsoft, you now own the rights

There has been confusion over who owns newly created intellectual property and concern that without an approach that ensures customers own key patents to their solutions, technology companies like Microsoft will enter those customers’ markets and compete against them with the very technology they codeveloped. Microsoft’s initiative puts the company ahead of the curve on this issue, said Patrick Moorhead, president of the analyst firm Moor Insights & Strategy. “The reality is, most major companies will become [intellectual property] creators in the future, but they don’t know it yet,” said Moorhead. “What Microsoft announced helps those companies protect their [intellectual property] and Microsoft’s in a very open and consistent way. This will likely reduce buyer’s remorse and lawsuits.” Analyst Stephen O’Grady of RedMonk concurred. “As more enterprises have begun to embrace software as a core to their business rather than simply a cost of doing business, the likelihood that they create potentially valuable [intellectual property] as part of their efforts increases.”

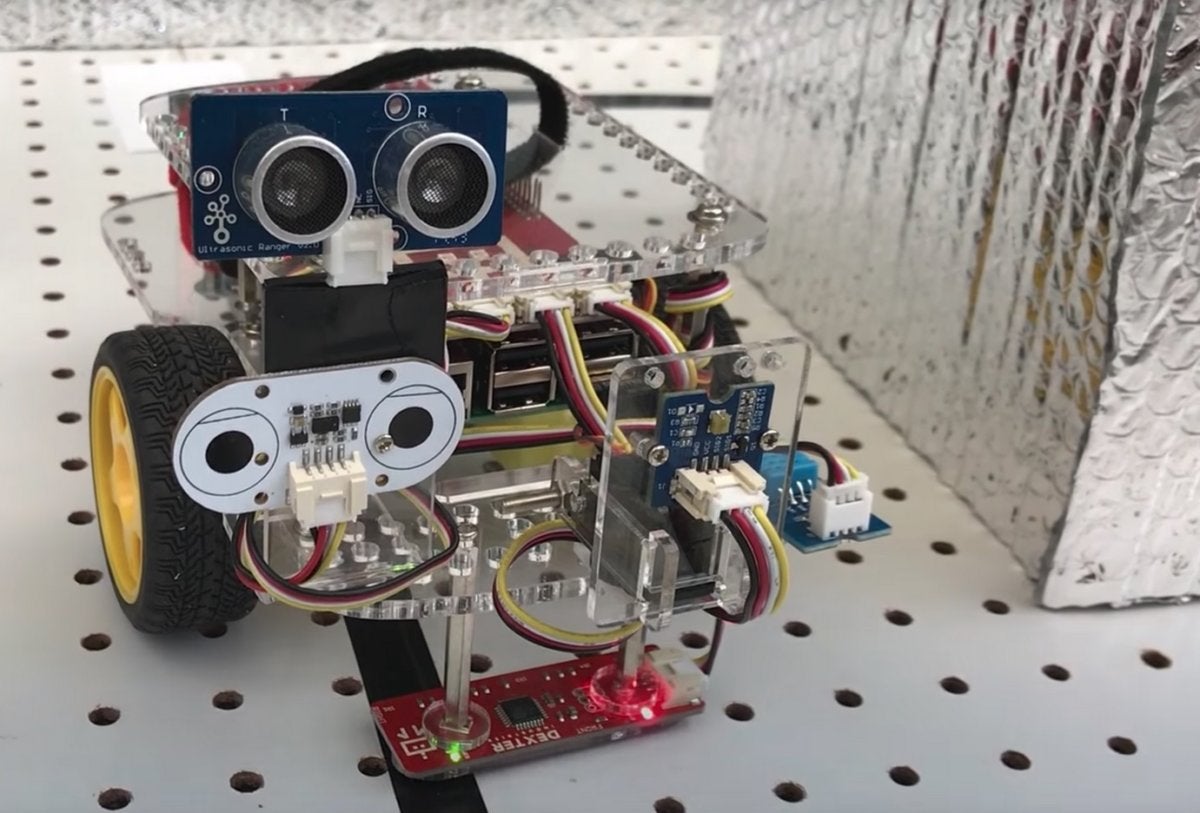

Mirai Variant Botnet Takes Aim at Financials

According to the researchers, the botnet involved in the first company attack was 80% compromised MikroTik routers and 20% various IoT devices. Those devices range from Apache and IIS web servers to webcams, DVRs, TVs, and routers. Manufacturers of the recruited devices include companies from the very small up to Cisco and Linksys. Irfan Saif is cyber risk services principal for Deloitte Risk and Financial Advisory. In an interview with Dark Reading he points out that the IoT devices brought into the botnets have processing, communication, and networking capabilities, so it's not surprising that they're being recruited for nefarious purposes. "It will be a continuing problem and the intricacies and complexities will continue to evolve," he says. "There's an ever-increasing set [of IoT applications] in industries and for facilities management that will broaden the set of devices that can be taken," Saif says, adding, "The complexity of devices that can be taken will continue to increase."

Open Source Isn't The Community You Think It Is

The interesting thing is just how strongly the central “rules” of open source engagement have persisted, even as open source has become standard operating procedure for a huge swath of software development, whether done by vendors or enterprises building software to suit their internal needs. While it may seem that an open source contribution model that depends on just a few core contributors for so much of the code wouldn’t be sustainable, the opposite is true. Each vendor can take particular interest in just a few projects, committing code to those, while “free riding” on other projects from which it derives less strategic value. In this way, open source persists, even if it’s not nearly as “open” as proponents sometimes suggest. Is open source then any different from a proprietary product? After all, both can be characterized by contributions from very few vendors, or even just one. Yes, open source is different. Indeed, the difference is profound. In a proprietary product, all the engagement is dictated by one vendor.

Google employees demand end to company's AI work with Defense Department

Both Google and the Pentagon have stressed that the technology is not ready to be used in combat situations, with Marine Corps Col. Drew Cukor telling the audience at the 2017 Defense One Tech Summit that "AI will not be selecting a target [in combat] ... any time soon. What AI will do is [complement] the human operator." But Col. Cukor also said that he believes the Defense Department is "in an AI arms race," and acknowledged that "the big five Internet companies are pursuing this heavily." Cukor later added: "Key elements have to be put together...and the only way to do that is with commercial partners alongside us." According to the Wall Street Journal, the Defense Department spent $7.4 billion on technology involving AI last year, and Google, Microsoft, and Amazon are openly battling for a variety of defense contracts involving cloud computing and other software. But the employee letter argues that Google is damaging its brand by working on Project Maven and contributing to "growing fears of biased and weaponized AI."

GDPR will give Dutch privacy watchdog its teeth

Recent research showed that many small companies in the Netherlands are not ready for the GDPR. Data protection officers (DPOs) are another important link between the privacy watchdog and the business world. They must be appointed by government institutions and by companies working with “special personal data”, such as people’s social security numbers or medical data. “We rely heavily on DPOs to update us on how companies handle data protection,” says Wolfsen. The presence of a DPO in organisations is one of the first things the AP will check when the GDPR comes into effect, he says. “From day one, it’s going to be simple – we will check whether companies have a DPO if they are required to. If they don’t, we’re going to take action.” Wolfsen declines to say what kind of action that might be. Fines are a possibility, but the AP is known to show leniency in such matters, warning a company rather than fining immediately. This has led to some criticism from both opponents and privacy groups.

MPLS explained

ATM and frame relay are distant memories, but MPLS lives on in carrier backbones and in enterprise networks. The most common use cases are branch offices, campus networks, metro Ethernet services and enterprises that need quality of service (QoS) for real-time applications. There’s been a lot of confusion about whether MPLS is a Layer 2 or Layer 3 service. But MPLS doesn’t fit neatly into the OSI seven-layer hierarchy. In fact, one of the key benefits of MPLS is that it separates forwarding mechanisms from the underlying data-link service. In other words, MPLS can be used to create forwarding tables for any underlying protocol. Specifically, MPLS routers establish a label-switched path (LSP), a pre-determined path to route traffic in an MPLS network, based on the criteria in the FEC. It is only after an LSP has been established that MPLS forwarding can occur. LSPs are unidirectional which means that return traffic is sent over a different LSP. When an end user sends traffic into the MPLS network, an MPLS label is added by an ingress MPLS router that sits on the network edge.
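The label handling described above can be sketched in a few lines of Python. This is a toy model, not how any real router is implemented: the router names (`PE1`, `P1`, `PE2`, `PE3`), the label values, the prefixes, and the table layout are all made up for illustration. It only shows the sequence of steps: the ingress router classifies a packet into a FEC and pushes a label, each router along the LSP swaps the label, and the egress router pops it.

```python
from dataclasses import dataclass, field
from ipaddress import ip_address, ip_network


@dataclass
class Packet:
    dst: str
    labels: list = field(default_factory=list)  # MPLS label stack


# Hypothetical FEC table on the ingress router PE1:
# destination prefix -> (label to push, next hop on the LSP)
INGRESS_FEC = {
    "10.1.0.0/16": (100, "P1"),
    "10.2.0.0/16": (200, "P1"),
}

# Hypothetical label forwarding tables for the other routers:
# incoming label -> (action, outgoing label, next hop)
LFIB = {
    "P1": {100: ("swap", 110, "PE2"), 200: ("swap", 210, "PE3")},
    "PE2": {110: ("pop", None, None)},
    "PE3": {210: ("pop", None, None)},
}


def ingress(packet):
    """Classify the packet into a FEC and push the matching label."""
    addr = ip_address(packet.dst)
    for prefix, (label, next_hop) in INGRESS_FEC.items():
        if addr in ip_network(prefix):
            packet.labels.append(label)
            return next_hop
    raise LookupError(f"no FEC matches {packet.dst}")


def forward(packet, ingress_router="PE1"):
    """Send the packet along its LSP and return the routers it visited."""
    path = [ingress_router]
    router = ingress(packet)
    while router is not None:
        path.append(router)
        # Core routers look only at the top label, never at the IP header.
        action, out_label, next_hop = LFIB[router][packet.labels[-1]]
        packet.labels.pop()
        if action == "swap":
            packet.labels.append(out_label)
        router = next_hop
    return path
```

Calling `forward(Packet("10.1.2.3"))` walks the packet from `PE1` through `P1` to `PE2`. Because the tables describe one direction only, return traffic in this model would need its own FEC and label entries, which mirrors the point that LSPs are unidirectional.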

Microsoft’s AI lets bots predict pauses and interrupt conversations

The new way to talk debuts with Microsoft’s Xiaoice in China and Rinna in Japan. Xiaoice can chat through Xiaomi’s Yeelight, a smart speaker that looks identical to Amazon’s Echo Dot released two months ago. Microsoft plans to extend the conversational feature to additional devices within the next six months, Zo AI director Ying Wang told VentureBeat in an email. In the U.S., Microsoft’s Zo will receive the new feature for Skype soon, and it will also be expanded to Ruuh in India and Rinna bot in Indonesia. No specific date or time period was provided for when the capabilities would be made available to additional bots. The more natural way of speaking is called “full duplex voice sense” by Microsoft and gives bots that communicate via voice the ability to carry on a continuous conversation with just a single use of a wake word like “Hey, Cortana.” This enables people to speak with machines in a way that feels more like a phone call or conversation.

Unpatched Vulnerabilities the Source of Most Data Breaches

Patching software security flaws by now should seem like a no-brainer for organizations, yet most organizations still struggle to keep up with and manage the process of applying software updates. "Detecting and prioritizing and getting vulnerabilities solved seems to be the most significant thing an organization can do [to prevent] getting breached," says Piero DePaoli, senior director of marketing at ServiceNow, of the report. "Once a vuln and patch are announced, the race is on," he says. "How fast can a hacker weaponize it and take advantage of it" before organizations can get their patches applied, he says. Most of the time, when a vuln gets disclosed, there's a patch for that. Some 86% of vuln reports came with patches last year, according to new data from Flexera, which also tallied a 14% increase in flaws compared with 2016. The dreaded zero-day flaw that gets exploited prior to an available patch remains less of an issue, according to Flexera. Only 14 of the nearly 20,000 known software flaws last year were zero-days, and that's a decrease of 40% from 2016.

NGINX Debuts App Server For Microservices

Nginx, makers of the popular Nginx open source web server, will begin shipping on April 12 a multilingual application server called Nginx Unit. It has also upgraded its Nginx Plus application server and announced a new control plane. Configured via a dynamic API, Nginx Unit 1.0 is an open source application server. Unlike the Nginx web server, which is designed for serving web pages and websites, the Nginx Unit application server is a web server that also can run code such as what might be found in a microservices environment. Application-level logic is supported. Supported languages in the initial release include Go, Perl, PHP, Python, and Ruby. Support for Java and JavaScript is due soon. Microservices are simplified via Nginx Unit because a single instance can simultaneously serve multiple application types, the company said. Nginx Unit also has networking capabilities such as reverse-proxying.

Patterns for Microservice Developer Workflows and Deployment

In the prototyping phase, there is a lot of emphasis on putting features in front of users quickly, and because there are no existing users, there is relatively little need for stability. In the production stage, you are generally trying to balance stability and velocity. You want to add enough features to grow your user base, but you also need things to be stable enough to keep your existing users happy. In the mission-critical phase, stability is your primary objective. If the people in your organization are divided along these lines (product, development, QA, and operations), it becomes very difficult to adjust how many resources you apply to each activity for a single feature. This can show up as new features moving really slowly because they follow the same process as mission-critical features, or it can show up as mission-critical features breaking too frequently in order to accommodate the faster release of new features. By organizing your people into independent feature teams, you can enable each team to find the ideal stability versus velocity tradeoff to achieve its objective, without forcing a single global tradeoff for your whole organization.

Quote for the day:

"A person must have the courage to act like everybody else, in order not to be like anybody." -- Jean-Paul Sartre

![mobile apps crowdsourcing via social media network [CW cover - October 2015]](https://images.techhive.com/images/article/2015/10/mobile_apps_crowdsourcing_via_social_media_network_thinkstock-100618594-large.jpg)