Quote for the day:

"Lost money can be found. Lost time is lost forever. Protect what matters most." -- @ValaAfshar

PTP is the New NTP: How Data Centers Are Achieving Real-Time Precision

Precision Time Protocol (PTP) is an approach that is more complex to implement
but worth the extra effort, because it enables a whole new level of timing
synchronization accuracy. ...

Keeping network time in sync is important on any network, but it’s especially
critical in data centers. Data centers are typically home to large numbers of
network-connected devices, and small inconsistencies in network timing there
can snowball into major synchronization problems. ...

NTP works very well when a network can tolerate timing inconsistencies of up to
a few milliseconds (thousandths of a second). When tighter accuracy is
required, however, NTP-based time syncing is less reliable due to limitations
...

Unlike NTP, PTP doesn’t rely solely on a server-client model for syncing time
across networked devices. Instead, it pairs time servers with a method called
hardware timestamping on client devices. Hardware timestamping uses
specialized components, usually embedded in network interface cards (NICs), to
record precisely when timing messages are sent and received. Central time
servers still exist under PTP, but rather than having software on each server
communicate with them, hardware optimized for the task does this work. These
devices also include built-in clocks, allowing them to record time data faster
and more consistently than they could if they had to forward it to the
server’s general-purpose clock.
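
Precise hardware timestamps matter because of how PTP uses them. In the IEEE 1588 delay request-response exchange, a client derives two values from four timestamps (t1 to t4): its offset from the time server and the one-way network delay. The Python sketch below illustrates that arithmetic. It assumes a symmetric network path, meaning equal delay in each direction. The names `PtpExchange` and `offset_and_delay` are hypothetical and do not come from any PTP implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PtpExchange:
    """Timestamps (nanoseconds) from one delay request-response exchange."""
    t1: int  # server sends Sync (two-step clocks carry this value in Follow_Up)
    t2: int  # client receives Sync, timestamped by the client NIC
    t3: int  # client sends Delay_Req, timestamped by the client NIC
    t4: int  # server receives Delay_Req; value returned to client in Delay_Resp


def offset_and_delay(ex: PtpExchange) -> tuple[float, float]:
    """Return (client offset from server, mean one-way path delay) in ns.

    Assumes a symmetric path: server->client delay equals client->server delay.
    """
    forward = ex.t2 - ex.t1   # = path delay + client offset
    backward = ex.t4 - ex.t3  # = path delay - client offset
    offset = (forward - backward) / 2
    delay = (forward + backward) / 2
    return offset, delay


# Client clock runs 1,500 ns ahead of the server; path delay is 800 ns each way.
ex = PtpExchange(t1=1_000_000, t2=1_002_300, t3=1_010_000, t4=1_009_300)
print(offset_and_delay(ex))  # (1500.0, 800.0)
```

The client then corrects its clock by the computed offset. If the path is asymmetric, the offset is wrong by half the difference between the two one-way delays. The symmetric-path assumption therefore matters as much as timestamp precision.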

Why AI adoption requires a dedicated approach to cyber governance

Today, enterprises face unprecedented internal pressure to adopt AI tools at
speed. Business units are demanding AI solutions to remain competitive, drive
efficiency, and innovate faster. But existing cyber governance and third-party
risk management processes were never designed to operate at this pace. ...

Without modernized cyber governance and AI-ready risk management capabilities,
organizations are forced to choose between speed and safety. To truly enable
the business, governance frameworks must evolve to match the speed, scale, and
dynamism of AI adoption, transforming security from a gatekeeper into a
business enabler. ...

What’s more, compliance doesn’t guarantee security. DORA, NIS2, and other
regulatory frameworks set only minimum requirements and rely on reporting at
specific points in time. While these reports are accurate when submitted, they
capture only a snapshot of the organization’s security posture, so gaps such as
human errors, legacy system weaknesses, or risks from fourth- and Nth-party
vendors can still emerge afterward. Human weakness never goes away, and legacy
systems can fail at crucial moments. ...

While there’s no magic wand, there are tried-and-tested approaches that
mitigate the risks of AI vendors and solutions. Mapping the flow of data around
the organization helps reveal how that data is used and eliminate blind spots.
Requiring AI tools to include references for their outputs helps ensure that
risk decisions are trustworthy and reliable.
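
A data-flow map can also surface the fourth- and Nth-party risk mentioned here. Once flows are recorded as a directed graph, a graph search finds every external party that can receive a given data set and how many vendor hops away it sits. The Python sketch below is illustrative only. The `internal:` naming convention, the example systems, and the `party_tiers` helper are hypothetical. It uses a 0-1 breadth-first search, so hops between internal systems are free and each hop into an external party adds one tier.

```python
from collections import deque


def is_internal(node: str) -> bool:
    # Hypothetical naming convention: internal systems carry an "internal:" prefix.
    return node.startswith("internal:")


def party_tiers(flows: dict[str, list[str]], source: str) -> dict[str, int]:
    """Map every external party reachable from `source` to its vendor tier.

    Tier 3 = third party (receives data directly from the organization),
    tier 4 = fourth party (a vendor's vendor), and so on. A 0-1 BFS makes
    internal-to-internal hops free and hops into an external party cost 1,
    so each party is assigned its closest tier.
    """
    hops = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for nxt in flows.get(node, []):
            cost = 0 if is_internal(nxt) else 1
            if hops[node] + cost < hops.get(nxt, float("inf")):
                hops[nxt] = hops[node] + cost
                # Zero-cost edges go to the front so nodes leave in distance order.
                if cost == 0:
                    queue.appendleft(nxt)
                else:
                    queue.append(nxt)
    return {node: 2 + h for node, h in hops.items() if not is_internal(node)}


# Hypothetical data-flow map: each system lists where it sends data.
flows = {
    "internal:crm": ["internal:warehouse", "ai-vendor"],
    "internal:warehouse": ["bi-saas"],
    "ai-vendor": ["llm-provider"],
    "llm-provider": ["gpu-cloud"],
}
print(party_tiers(flows, "internal:crm"))
# {'ai-vendor': 3, 'bi-saas': 3, 'llm-provider': 4, 'gpu-cloud': 5}
```

A real map would also record what kind of data each flow carries. That way, only flows containing personal or regulated data are traced to their furthest recipients.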

What CIOs get wrong about integration strategy and how to fix it

As Gartner advises, business and IT should be equal partners in defining
integration strategy, an arrangement that marks a radical departure from the
traditional IT delivery and business “project sponsorship” model. This close
collaboration and shared accountability result in dramatically higher success
rates ...

A successful integration strategy starts by aligning with the organization’s
business drivers and strategic objectives while identifying the integration
capabilities that need to be developed. It is essential to clearly define the
goals of the technology implementation, establish governance frameworks and
decision-making authority, and set standards and principles to guide
integration choices. Success metrics should be tied to business outcomes, and
the integration approach should support broader digital transformation
initiatives. ...

Create cross-functional data stewardship teams with authority to make binding
decisions about data standards and quality requirements. Document what data
needs to be shared between systems and which applications are the “source of
truth” for it. Define and document any regulatory or performance requirements
to guide your technical planning. ...

Integrations that succeed in production are designed with clear
system-of-record rules, traceable transactions, explicit recovery paths, and
well-defined operational ownership. Preemptive integration is not about
reacting faster; it’s about ensuring failures never reach the business.
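
Three of those production properties fit in a few lines of code. The first is a system-of-record table that decides which application may write each field. The second is a correlation ID attached to every update, so it can be traced end to end. The third is a dead-letter list that gives updates breaking the rules an explicit recovery path.

The Python sketch below is a hypothetical illustration under simplifying assumptions: one record per entity type and in-memory storage. `SYSTEM_OF_RECORD`, `Update`, and `apply_update` are invented names. The dead-letter pattern is one common choice of recovery path, not one the article prescribes.

```python
import uuid
from dataclasses import dataclass, field

# Hypothetical system-of-record rules: the one application allowed to write each field.
SYSTEM_OF_RECORD = {
    ("customer", "email"): "crm",
    ("customer", "credit_limit"): "erp",
}


@dataclass
class Update:
    entity: str
    field_name: str
    value: object
    source: str  # application that produced the change
    # Traceability: every update carries an ID that can be followed across systems.
    correlation_id: str = field(default_factory=lambda: str(uuid.uuid4()))


@dataclass
class IntegrationLog:
    applied: list = field(default_factory=list)      # correlation IDs written
    dead_letter: list = field(default_factory=list)  # (correlation ID, reason) to review


def apply_update(update: Update, store: dict, log: IntegrationLog) -> bool:
    """Write `update` only if its source is the field's system of record."""
    owner = SYSTEM_OF_RECORD.get((update.entity, update.field_name))
    if owner != update.source:
        # Explicit recovery path: park the rejected update instead of dropping it.
        reason = "no system of record" if owner is None else f"owned by {owner}"
        log.dead_letter.append((update.correlation_id, reason))
        return False
    store[(update.entity, update.field_name)] = update.value
    log.applied.append(update.correlation_id)
    return True


store, log = {}, IntegrationLog()
apply_update(Update("customer", "email", "a@example.com", source="crm"), store, log)
apply_update(Update("customer", "credit_limit", 5000, source="crm"), store, log)
print(len(log.applied), log.dead_letter[0][1])  # 1 owned by erp
```

Rejected updates are parked rather than silently dropped or allowed to overwrite data. This gives the operational owner something to review and replay before a bad write reaches the business.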

CFOs are now getting their own 'vibe coding' moment thanks to Datarails

For the modern CFO, the hardest part of the job often isn’t the math; it’s the
storytelling. After the books are closed and the variances calculated, finance
teams spend days, sometimes weeks, manually copying and pasting charts into
PowerPoint slides to explain why the numbers moved. ...

Datarails’ new agents sit on top of a unified data layer that connects these
disparate systems. Because the AI is grounded in the company’s own unified
internal data, it is designed to avoid the hallucinations common in generic
LLMs while offering the level of privacy that sensitive financial data
requires. "If the CFO wants to leverage AI on the CFO level or the organization
data, they need to consolidate the data," explained Datarails CEO and
co-founder Didi Gurfinkel in an interview with VentureBeat. By solving that
consolidation problem first, Datarails can now offer agents that understand the
context of the business. "Now the CFO can use our agents to run analysis, get
insights, create reports... because now the data is ready," Gurfinkel said. ...

"Very soon, the CFO and the financial team themselves will be able to develop
applications," Gurfinkel predicted. "The LLMs become so strong that in one
prompt, they can replace full product runs." He described a workflow where a
user could simply prompt: "That was my budget and my actual of the past year.
Now build me the budget for the next year."

The internet’s oldest trust mechanism is still one of its weakest links

Attackers continue to rely on domain names as an entry point into enterprise
systems. A CSC domain security study finds that large organizations leave this
part of their attack surface underprotected, even as attacks become more
frequent. ...

Large companies continue to add baseline protections, though adoption remains
uneven. Email authentication shows the most consistent improvement, driven by
phishing activity and regulatory pressure. Even so, many organizations still
leave their email domains only partially protected, which allows spoofing to
persist. Other protections see much slower uptake. ...

Consumer-oriented registrars tend to emphasize simplicity and cost.
Organizations that rely on them often lack access to protections that limit the
impact of account compromise or social engineering. Risk increases as domain
portfolios grow and change. ...

Brand impersonation through domain spoofing remains widespread. Lookalike
domains tied to major brands are often owned by third parties. Some appear
inactive while still supporting email activity; these inactive domains with
mail records allow attackers to send phishing messages that appear associated
with trusted brands. Others are parked with advertising networks or held for
later use. A smaller portion hosts malicious content, though dormant domains
can be activated quickly. ...

Gaps appear in infrastructure-related areas. Adoption of DNS redundancy and
registry lock lags, and many unicorns (privately held startups valued at more
than $1 billion) rely on consumer-grade registrars. These limitations become
more pronounced as operations scale.
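
An email domain can be "protected" on paper and still be spoofable. A common case is a DMARC record that exists but enforces nothing (`p=none`), or enforces only on a sample of mail. The Python sketch below is illustrative and is not CSC's methodology. It classifies a DMARC TXT record published at `_dmarc.<domain>`. It also checks whether a domain that should never handle mail is locked down with three records: an SPF record of `v=spf1 -all`, a DMARC policy of `p=reject`, and a null MX record (RFC 7505). It works on record strings you have already fetched rather than performing DNS lookups. The category names and helper functions are hypothetical.

```python
from typing import Optional


def parse_tags(record: str) -> dict:
    """Split a tag-value TXT record such as 'v=DMARC1; p=reject' into a dict."""
    tags = {}
    for part in record.split(";"):
        if "=" in part:
            key, value = part.split("=", 1)
            tags[key.strip().lower()] = value.strip()
    return tags


def dmarc_status(record: Optional[str]) -> str:
    """Classify the DMARC record published at _dmarc.<domain>.

    missing       no usable record, so receivers get no policy
    monitor-only  p=none: failures are reported, spoofed mail is still delivered
    partial       enforcement covers only a sample (pct < 100) or not
                  subdomains (sp=none)
    enforced      p=quarantine or p=reject applied to all mail
    """
    tags = parse_tags(record or "")
    if tags.get("v") != "DMARC1":
        return "missing"
    policy = tags.get("p", "").lower()
    if policy == "none":
        return "monitor-only"
    if policy not in ("quarantine", "reject"):
        return "missing"
    subdomain_policy = tags.get("sp", policy).lower()
    if tags.get("pct", "100") != "100" or subdomain_policy == "none":
        return "partial"
    return "enforced"


def parked_domain_findings(mx_hosts: list, spf: Optional[str], dmarc: Optional[str]) -> list:
    """List gaps on a domain you own that should never send or receive mail."""
    findings = []
    # Without MX records, SMTP falls back to the domain's A/AAAA records,
    # so only an explicit null MX (a single "." exchange) refuses mail.
    if mx_hosts != ["."]:
        findings.append("no null MX (RFC 7505); mail may still be accepted")
    if (spf or "").strip().lower() != "v=spf1 -all":
        findings.append("SPF does not declare that the domain sends no mail")
    if dmarc_status(dmarc) != "enforced" or parse_tags(dmarc or "").get("p", "").lower() != "reject":
        findings.append("DMARC is not p=reject")
    return findings


print(dmarc_status("v=DMARC1; p=none; rua=mailto:reports@example.com"))  # monitor-only
print(dmarc_status("v=DMARC1; p=reject; pct=50"))                         # partial
print(parked_domain_findings(["."], "v=spf1 -all", "v=DMARC1; p=reject"))  # []
```

The parked-domain check applies only to domains you control, such as defensively registered lookalikes. It cannot close gaps on lookalikes held by third parties.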

Misconfigured demo environments are turning into cloud backdoors to the enterprise

Internal testing, product demonstrations, and security training are critical
practices in cybersecurity, giving defenders and everyday users the tools and
wherewithal to prevent and respond to enterprise threats. However, according to
new research from Pentera Labs, when left in default or misconfigured states,
these “test” and “demo” environments become yet another entry point for
attackers, and the issue affects even leading security companies and Fortune
500 companies that should know better. ...

During a routine cloud security assessment for a client, Yaffe identified an
exposed instance of Hackazon, a free, intentionally vulnerable test site
developed by Deloitte, and then performed a five-step hunt for exposed apps.
His team uncovered 1,926 “verified, live, and vulnerable applications,” more
than half of which were running on enterprise-owned infrastructure on AWS,
Azure, and Google Cloud. They then discovered 109 exposed credential sets, many
accessible via a low-priority lab environment, tied to overly privileged
identity and access management (IAM) roles. These roles often granted “far more
access” than a ‘training’ app should, Yaffe explained, and provided attackers
with administrator-level access to cloud accounts, as well as full access to
S3 buckets, GCS, and Azure Blob Storage; the ability to launch and destroy
compute resources and to read and write to secrets managers; and permissions to
interact with container registries where images are stored, shared, and
deployed.
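
Excessive grants like these typically appear in an IAM policy as wildcard actions. The Python sketch below scans an AWS-style IAM policy document, a JSON object with `Statement`, `Effect`, and `Action` elements. It flags `Allow` statements that grant every action (`"*"`) or every action in a sensitive service, such as `s3:*` or `secretsmanager:*`. It is a simplified, hypothetical check. The `SENSITIVE_SERVICES` set is an assumption, and the code covers AWS policies only, not GCS or Azure Blob Storage permissions. It also ignores `NotAction`, `Resource` scoping, and conditions, all of which a real review would need to consider.

```python
import json

# Assumed set of AWS service prefixes matching the risks described above
# (storage, compute, secrets, container registries, and IAM itself).
SENSITIVE_SERVICES = {"s3", "ec2", "secretsmanager", "ecr", "iam"}


def _as_list(value):
    # IAM allows "Statement" and "Action" to be either a single item or a list.
    return value if isinstance(value, list) else [value]


def risky_grants(policy_json: str) -> list:
    """Return wildcard actions granted by Allow statements in an IAM policy."""
    policy = json.loads(policy_json)
    findings = []
    for stmt in _as_list(policy.get("Statement", [])):
        if stmt.get("Effect") != "Allow":
            continue
        for action in _as_list(stmt.get("Action", [])):
            if action == "*":
                findings.append(action)  # every action in every service
                continue
            service, _, verb = action.partition(":")
            if verb == "*" and service.lower() in SENSITIVE_SERVICES:
                findings.append(action)
    return findings


training_app_policy = json.dumps({
    "Version": "2012-10-17",
    "Statement": [
        {"Effect": "Allow", "Action": ["s3:*", "secretsmanager:GetSecretValue"], "Resource": "*"},
        {"Effect": "Allow", "Action": "ecr:*", "Resource": "*"},
    ],
})
print(risky_grants(training_app_policy))  # ['s3:*', 'ecr:*']
```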

Cyber Insights 2026: API Security – Harder to Secure, Impossible to Ignore

“We’re now entering a new API boom. The previous wave was driven by cloud
adoption, mobile apps, and microservices. Now, the rise of AI agents is fueling
a rapid proliferation of APIs, as these systems generate massive, dynamic, and
unpredictable requests across enterprise applications and cloud services,”
comments Jacob Ideskog ...

The growing use of agentic AI systems, which act autonomously by making
decisions and triggering workflows, is causing the number of APIs in play to
balloon. “It isn’t just ‘I expose one billing API’,” he continues, “now there
are dozens of APIs that feed data to LLMs or AI agents, accept decisions from
AI agents, facilitate orchestration between services and micro-apps, and
potentially expose ‘agentic’ endpoints ...”

APIs have been a major attack surface for years, and the problem is ongoing.
Starting in 2025 and accelerating through 2026 and beyond, the rapid escalation
of enterprise agentic AI deployments will multiply the number of APIs and
increase the attack surface. That alone suggests that attacks against APIs will
grow in 2026. But the attacks themselves will also scale and become more
effective as adversaries use their own agentic AI. Barr explains: “Agentic AI
means that bad actors can automate reconnaissance, probe API endpoints, chain
API calls, test business-logic abuse, and execute campaigns at machine scale.
Possession of an API endpoint, particularly a self-service, unconstrained one,
becomes a lucrative target. And AI can generate payloads, iterate quickly,
bypass simple heuristics, and map dependencies between APIs.”
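
Barr's point about self-service, unconstrained endpoints suggests a basic control: capping how fast any single client can call an API, which throttles machine-speed probing. The article does not prescribe a mechanism. The Python sketch below uses a per-client token bucket, a common rate-limiting technique, purely as an illustration. `TokenBucket` and `PerClientLimiter` are hypothetical names. The injectable clock exists only to make the example deterministic, and a production gateway would also need state shared across instances.

```python
import time
from typing import Callable, Dict


class TokenBucket:
    """Allows bursts of up to `capacity` calls, refilled at `rate` tokens per second."""

    def __init__(self, capacity: float, rate: float, clock: Callable[[], float] = time.monotonic):
        self.capacity = capacity
        self.rate = rate
        self.clock = clock
        self.tokens = capacity  # start with a full bucket
        self.updated = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, capped at capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class PerClientLimiter:
    """One bucket per API key, so one automated client cannot flood an endpoint."""

    def __init__(self, capacity: float, rate: float, clock: Callable[[], float] = time.monotonic):
        self.capacity, self.rate, self.clock = capacity, rate, clock
        self.buckets: Dict[str, TokenBucket] = {}

    def allow(self, api_key: str) -> bool:
        if api_key not in self.buckets:
            self.buckets[api_key] = TokenBucket(self.capacity, self.rate, self.clock)
        return self.buckets[api_key].allow()


# Fake clock so the example is deterministic.
now = [0.0]
limiter = PerClientLimiter(capacity=3, rate=1.0, clock=lambda: now[0])
print([limiter.allow("agent-1") for _ in range(5)])  # [True, True, True, False, False]
now[0] = 2.0  # two seconds later: two tokens refilled
print([limiter.allow("agent-1") for _ in range(3)])  # [True, True, False]
print(limiter.allow("agent-2"))                      # True (separate bucket)
```

Rate limiting slows automated reconnaissance and payload iteration. On its own, it does not detect business-logic abuse carried out at a pace below the limit.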

Complex VoidLink Linux Malware Created by AI

An advanced cloud-first malware framework targeting Linux systems was created
almost entirely by artificial intelligence (AI), a development that signals a
significant evolution in the use of the technology to build advanced malware.
VoidLink, which is composed of various cloud-focused capabilities and modules
and designed to maintain long-term persistent access to Linux systems, is the
first case of wholly original malware developed by AI, according to Check
Point Research, which discovered and detailed the framework last week. While
other AI-generated malware exists, it has typically "been linked to
inexperienced threat actors, as in the case of FunkSec, or to malware that
largely mirrored the functionality of existing open-source malware tools," ...

The malware framework, linked to an unidentified actor suspected of being
Chinese, includes custom loaders, implants, rootkits, and modular plug-ins. It
also automates evasion as much as possible by profiling a Linux environment
and intelligently choosing the best strategy for operating without detection.
Indeed, as Check Point researchers tracked VoidLink in real time, they watched
it quickly transform from what appeared to be a functional development build
into a comprehensive, modular framework that became fully operational in a
short timeframe. However, while the malware itself was high-functioning out of
the gate, VoidLink's creator proved to be somewhat sloppy in their execution.

An advanced cloud-first malware framework targeting Linux systems was created

almost entirely by artificial intelligence (AI), a move that signals significant

evolution in the use of the technology to develop advanced

malware. VoidLink — comprised of various cloud-focused capabilities and

modules and designed to maintain long-term persistent access to Linux systems —

is the first case of wholly original malware being developed by AI, according to

Check Point Research, which discovered and detailed the malware framework last

week. While other AI-generated malware exists, it's typically "been linked to

inexperienced threat actors, as in the case of FunkSec, or to malware that

largely mirrored the functionality of existing open-source malware tools," ...

The malware framework, linked to a suspected, unspecified Chinese actor,

includes custom loaders, implants, rootkits, and modular plug-ins. It also

automates evasion as much as possible by profiling a Linux environment and

intelligently choosing the best strategy for operating without detection.

Indeed, as Check Point researchers tracked VoidLink in real time, they watched

it transform quickly from what appeared to be a functional development build

into a comprehensive, modular framework that became fully operational in a short

timeframe. However, while the malware itself was high-functioning out of the

gate, VoidLink's creator proved to be somewhat sloppy in their execution.

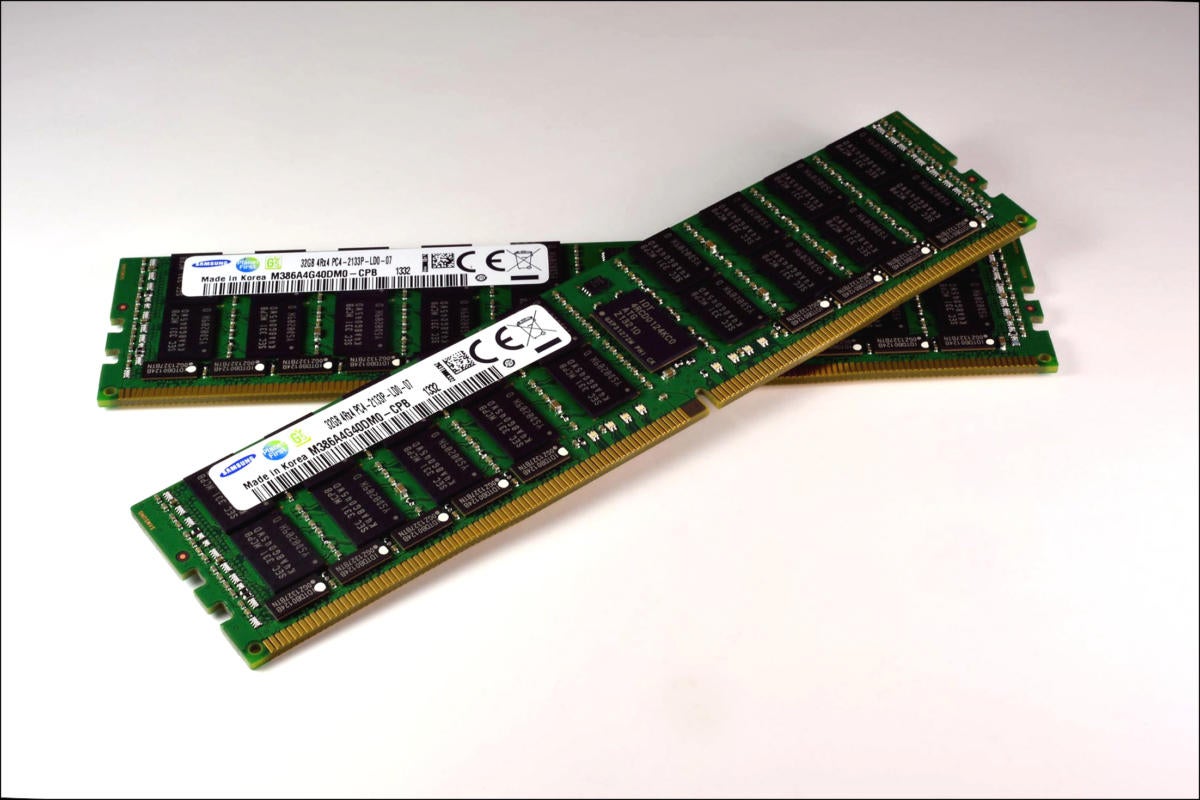

What’s causing the memory shortage?

Right now, the industry is suffering the worst memory shortage in history. The
market is served by three core suppliers: Micron Technology, SK Hynix, and
Samsung. TrendForce, a Taipei-based market researcher that specializes in the
memory market, recently said it expects average DRAM prices to rise by 50% to
55% this quarter compared with the fourth quarter of 2025. Samsung recently
issued a similar warning. So what caused this? Two letters: AI. The rush to
build AI-oriented data centers has resulted in data centers consuming virtually
all of the memory supply. AI requires massive amounts of memory to process its
gigantic data sets: a traditional server usually comes with 32 GB to 64 GB of
memory, while AI servers have 128 GB or more. ...

There are other factors at play here, too, of course. The industry is in a
transition period between DDR4 and DDR5, as DDR5 comes online and DDR4 fades
away. These transitions to a new memory format are never quick or easy, and a
full shift usually takes years. There has also been increased demand on both
the client and server sides. With Microsoft ending support for Windows 10, a
large number of laptops are being replaced with Windows 11 systems, and new
laptops come with DDR5 memory, the same memory generation used in AI servers.
...

“What’s likely to happen, from a market perspective, is we’ll see the market
grow less in ’26 than we had anticipated, but ASPs [average selling prices] are
likely to stay or increase. ...” he said.

OpenAI CFO Comments Signal End of AI Hype Cycle

By focusing on “practical adoption,” OpenAI can close the gap between what AI
now makes possible and how people, companies, and countries are using it day to
day. “The opportunity is large and immediate, especially in health, science, and
enterprise, where better intelligence translates directly into better outcomes,”
she noted. “Infrastructure expands what we can deliver,” she continued.
“Innovation expands what intelligence can do. Adoption expands who can use it.
Revenue funds the next leap. This is how intelligence scales and becomes a
foundation for the global economy.” The framing reflects a shift from
big-picture AI promise to day-to-day deployment and measurable results. ...

There’s also a gap between what AI can do and how people actually use it in
daily life, noted Natasha August, founder of RM11, a content monetization
platform for creators in Carrollton, Texas. “AI tools are incredibly powerful,
but for many people and businesses, it’s still unclear how to turn that power
into something practical like saving time, making money, or improving how they
work,” she told TechNewsWorld. In business, the gap lies between AI’s raw
analytical capabilities and its ability to drive tangible, repeatable business
outcomes, maintained Nithin Mummaneni ... “The winning play is less ‘AI that
answers’ and more ‘AI that completes tasks safely and predictably,’” he
continued. “Adoption happens when AI becomes part of the workflow, not a
separate destination.”
