AI is coming to the network

The dynamics of AI-infusing a network organization will, as with many other

forms of automation, center on four modes of interaction: offloading,

reskilling, deskilling, and displacing. AI offloading means putting AI tools at

the command of trained and experienced networking professionals to help them do

their work. The idea is to make network pros more effective by allowing them to

offload tasks that are repetitive, complex, time-sensitive, or that require extremely

high levels of focused attention, but that are not creative. This is supposed to

free these scarce and precious resources to do other, higher-level work instead,

while paying minimal and supervisory attention to what the AI is doing. (Human

attention is the most precious resource in any IT shop.) The network team

doesn’t shrink, and its portfolio of services can even grow without the team

also having to grow to make that possible. Reskilling allows network staff to be

trained to move into other parts of IT or into entirely different kinds of jobs.

It also encompasses the idea of using AI to help train new network staff up to

proficiency.

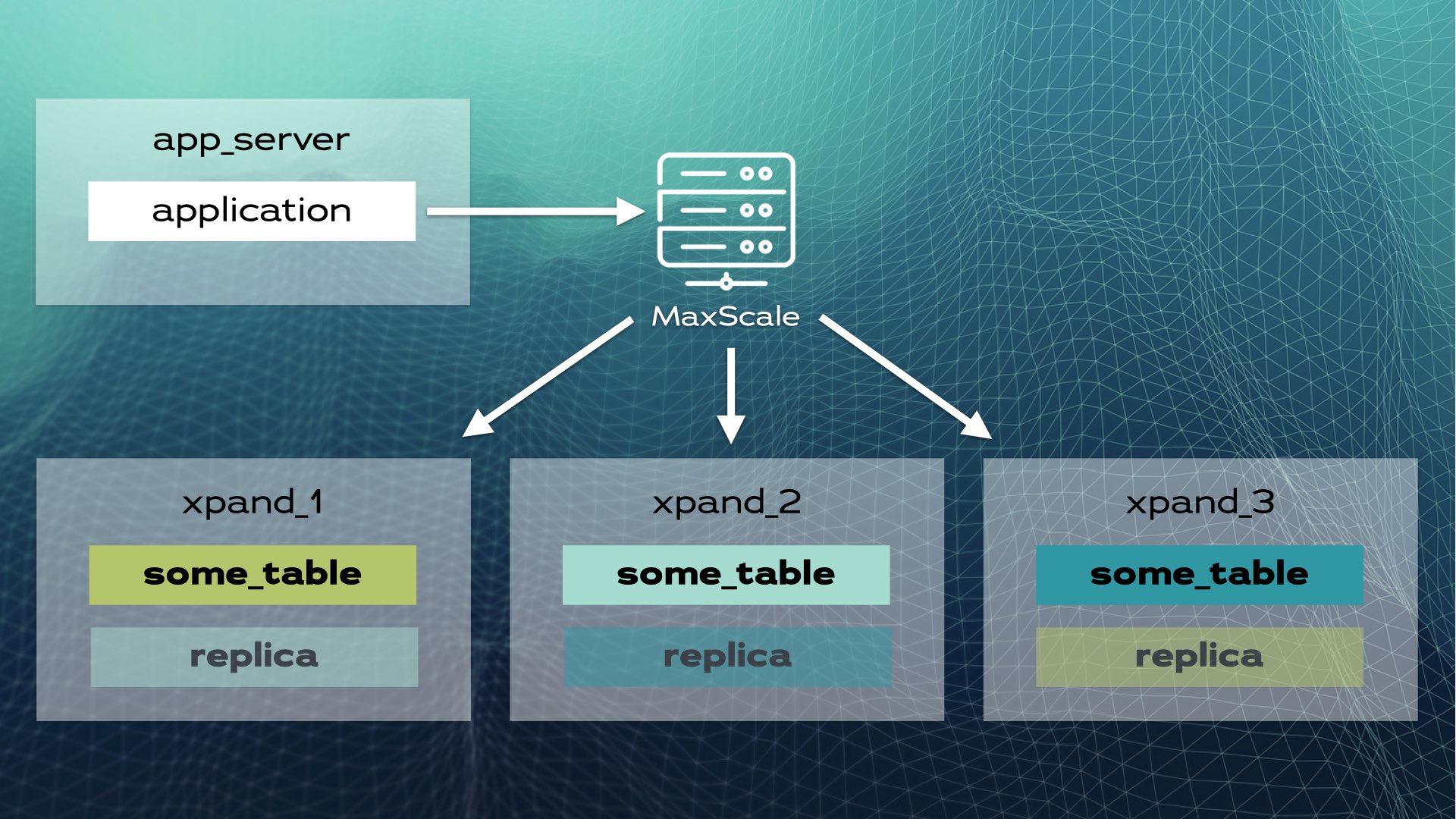

Distributed SQL: An Alternative to Database Sharding

Distributed SQL is the new way to scale relational databases with a

sharding-like strategy that's fully automated and transparent to applications.

Distributed SQL databases are designed from the ground up to scale almost

linearly. ... In simple terms, a distributed SQL database is a relational

database with transparent sharding that looks like a single logical database

to applications. Distributed SQL databases are implemented as a shared-nothing

architecture and a storage engine that scales both reads and writes while

maintaining true ACID compliance and high availability. Distributed SQL

databases have the scalability features of NoSQL databases—which gained

popularity in the 2000s—but don’t sacrifice consistency. They keep the

benefits of relational databases and add cloud compatibility with multi-region

resilience. A different but related term is NewSQL (coined by Matthew Aslett

in 2011). This term also describes scalable and performant relational

databases. However, NewSQL databases don’t necessarily include horizontal

scalability.
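
As a rough illustration of what "transparent sharding that looks like a single logical database" can mean, here is a minimal Python sketch. It assumes simple hash-based placement; the class name `ShardedTable` and its methods are hypothetical and not taken from any product. Real distributed SQL databases also handle replication, rebalancing, and distributed transactions, none of which is modeled here.

```python
import hashlib


class ShardedTable:
    """Toy key-value 'table' that hides hash-based sharding behind one interface."""

    def __init__(self, shard_count: int) -> None:
        # Each dict stands in for an independent shared-nothing node (assumption).
        self.shards = [{} for _ in range(shard_count)]

    def _shard_for(self, key: str) -> int:
        # A stable hash, so the same key always maps to the same shard.
        digest = hashlib.sha256(key.encode("utf-8")).digest()
        return int.from_bytes(digest[:8], "big") % len(self.shards)

    def put(self, key: str, row: dict) -> None:
        self.shards[self._shard_for(key)][key] = row

    def get(self, key: str):
        # Returns None when the key is not stored on its shard.
        return self.shards[self._shard_for(key)].get(key)

    def count(self) -> int:
        # A scatter-gather style query: ask every shard, then combine the results.
        return sum(len(shard) for shard in self.shards)


if __name__ == "__main__":
    table = ShardedTable(shard_count=4)
    for i in range(100):
        table.put(f"user-{i}", {"id": i})
    print(table.get("user-42"), table.count())
```

The caller only ever uses `put`, `get`, and `count`; which shard holds a row is an internal detail, which is the property the excerpt describes.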

How layoffs can affect diversity in tech—and what to do about it

Although layoffs have dominated the conversation during the latter part of the

year, evidence shows that the Great Resignation isn’t over yet. Online job

site Hired found that attracting, hiring, and retaining top talent has proven

to be difficult, citing employee burnout as a key challenge, placing the blame

on rapid changes in the employment environment and angst over mass layoffs and

hiring freezes. For companies yet to announce job cuts, Laman said that before

any decision is made, organizations need to be sure they factor DE&I into

decisions around layoffs. ... However, Williams argued that there's a lot of

evidence to suggest that we pattern match when we try to spot potential,

meaning that one of the really big risks from all these layoffs is that if you

disproportionately have just one type of person represented at a leadership

level making the decisions about who stays and who goes, they're not going to

understand or realize the potential of some people who look very

different or are very different from them. Carver agrees, noting that being a

good manager and being a good technologist are not one and the same, meaning

people are often promoted despite lacking some necessary management skills.

How Global Turmoil and Inflation Will Impact Cybersecurity and Data Management in 2023

Rising geopolitical tensions between China, Russia, and NATO allies are

responsible for increased cybersecurity threats. This will lead to companies

tightening security measures in 2023. With healthcare, financial, defense, and

public utility sectors facing new threats from politically motivated bad

actors, organizations with cloud-based IT operations should consider

employing “data geofencing” through contractual agreements with their cloud

providers -- many of which store data in global data centers -- to ensure data

is kept within designated regions due to national security concerns and local

legal requirements. Organizations in highly regulated industries must be on

high alert to protect data and websites against DDoS attacks and phishing

expeditions. Data management and cybersecurity professionals should work

together to devise and execute new strategies that “meet the moment” and

mitigate the potential for critical customer and corporate data eventually

winding up on the Dark Web. One way data teams can support company security

policies is by “flipping the script” on data asset management.

Cyberattackers Torch Python Machine Learning Project

In the latest attack on PyTorch, the attacker used the name of a software

package that PyTorch developers would load from the project's private

repository, and because the malicious package existed in the PyPI repository,

it gained precedence. The PyTorch Foundation removed the dependency in its

nightly builds and replaced the PyPI project with a benign package, the

advisory stated. ... Fortunately, because the torchtriton dependency was only

imported into the nightly builds of the program, the impact of the attack did

not propagate to typical users, Paul Ducklin, a principal research scientist

at cybersecurity firm Sophos, said in a blog post. "We're guessing that the

majority of PyTorch users won't have been affected by this, either because

they don't use nightly builds, or weren't working over the vacation period, or

both," he wrote. "But if you are a PyTorch enthusiast who does tinker with

nightly builds, and if you've been working over the holidays, then even if you

can't find any clear evidence that you were compromised, you might

nevertheless want to consider generating new SSH key pairs as a precaution,

and updating the public keys that you've uploaded to the various servers that

you access via SSH."
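
The excerpt does not spell out the rule by which the public package "gained precedence." One way this kind of dependency confusion can arise is sketched below in Python, under the assumption of a resolver that pools candidates from every configured index and simply prefers the highest version. The function `pick_candidate`, the index labels, and the package name `internal-dep` are all hypothetical; this is a toy model, not the behavior of any specific installer.

```python
from typing import NamedTuple


class Candidate(NamedTuple):
    name: str
    version: tuple
    index: str


def pick_candidate(name, indexes):
    """Return the highest-version candidate for `name` across all indexes.

    `indexes` maps an index label to {package name: [version tuples]}.
    No index is preferred over another, which is the weakness being shown.
    """
    found = [
        Candidate(name, version, label)
        for label, packages in indexes.items()
        for version in packages.get(name, [])
    ]
    if not found:
        raise LookupError(f"no candidate found for {name!r}")
    return max(found, key=lambda c: c.version)


if __name__ == "__main__":
    indexes = {
        "private": {"internal-dep": [(2, 0, 0)]},
        # Hypothetical attacker upload under the same name, with a higher version.
        "public": {"internal-dep": [(3, 0, 0)]},
    }
    print(pick_candidate("internal-dep", indexes).index)
```

In this model the name alone decides nothing; whoever publishes the highest version under that name wins, regardless of which index the team intended to use.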

Why it might be time to consider using FIDO-based authentication devices

Every business needs a secure way to collect, manage, and authenticate

passwords. Unfortunately, no method is foolproof. Passwords stored in the

browser and one-time access codes sent by SMS or authenticator apps can be

bypassed by phishing. Password management products are more secure, but they

have vulnerabilities, as shown by the recent LastPass breach that exposed an

encrypted backup of a database of saved passwords. For organizations with high

security requirements, that leaves hardware-based login options such as FIDO

devices. The FIDO (Fast Identity Online) standard is maintained by the FIDO

Alliance and aims to reduce reliance on passwords for security. It does so by

complementing or replacing them with strong authentication based on public-key

cryptography. FIDO includes specs that take advantage of biometric and other

hardware-based security measures, either from specialized hardware security

gadgets or the biometric features built into most new smartphones and some

PCs. That makes FIDO and other physical key or token methods more phishing

resistant and harder for attackers to bypass.

Why organizations tend to fall short on secure data management

Developing a more comprehensive structure for data classification by

determining a piece of data’s value, its risk profile, or its level of

sensitivity can improve understanding of the data retention period, thus

informing data policy to help mitigate risk and reducing the attack surface

for a potential breach. That means determining from the outset that data needs

to get sanitized after a set time and through a set policy, rather than

waiting until the asset it sits on is disposed of. Equally, by thinking about the

information lifecycle from the get-go, enterprises can make quick decisions on

whether they should even have that data, and if not, they should erase it

immediately with a certificate proving that the erasure has been successful.

If data has only been held as part of a project, then when that project

finishes the team should remove it from the infrastructure under that

organization’s command. Classifying data appropriately can provide actionable

insight to restructure policies and help employees better understand the

information lifecycle management process.
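
To make the idea of deciding "from the outset" when data gets sanitized more concrete, here is a minimal Python sketch. The classification levels, the retention periods in `RETENTION_DAYS`, and the names `DataRecord` and `due_for_sanitization` are all hypothetical values chosen for illustration; an actual policy would come from the organization's own legal and risk requirements.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Hypothetical classification levels and retention periods, for illustration only.
RETENTION_DAYS = {
    "public": 365 * 5,
    "internal": 365 * 2,
    "sensitive": 90,
}


@dataclass
class DataRecord:
    name: str
    classification: str
    created: date

    def sanitize_on(self) -> date:
        # The erasure date is derived from the classification at creation time,
        # not from when the underlying hardware happens to be retired.
        return self.created + timedelta(days=RETENTION_DAYS[self.classification])


def due_for_sanitization(records, today: date):
    """Return the records whose retention period has ended as of `today`."""
    return [r for r in records if r.sanitize_on() <= today]


if __name__ == "__main__":
    records = [
        DataRecord("customer-export", "sensitive", date(2023, 1, 1)),
        DataRecord("team-wiki-dump", "internal", date(2023, 1, 1)),
    ]
    for record in due_for_sanitization(records, date(2023, 6, 1)):
        print(record.name, record.sanitize_on())
```

Because every record carries its classification, the erasure date is known the moment the data is created, which is the shift in thinking the excerpt argues for.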

7 downsides of open source culture

The word community gets thrown around a lot in open source circles, but that

doesn’t mean open source culture is some sort of Shangri-La. Open source

developers can be an edgy group: brusque, distracted, opinionated, and even

downright mean. It is also well known that open source has a diversity

problem, and certain prominent figures have been accused of racism and sexism.

Structural inequality may be less visible when individuals contribute to open

source projects with relative anonymity, communicating only through emails or

bulletin boards. But sometimes that anonymity begets feelings of

disconnection, which can make the collaborative process less enjoyable, and

less inclusive, than it's cracked up to be. Many enterprise companies release

open source versions of their product as a “community edition.” It's a great

marketing tool and also a good way to collect ideas and sometimes code for

improving the product. Building a real community around that project, though,

takes time and resources. If a user and potential contributor posts a question

to an online community bulletin board, they expect an answer.

Is Silicon Valley's Unique Aura Fading Away?

The Silicon Valley mindset is about using technology to push for what’s

possible, not what’s probable, says Shannon Goggin, co-founder and CEO of San

Francisco-based benefits data platform provider Noyo. “It’s about taking big

swings, building the future, and creating breakthroughs.” Yet after decades of

tech dominance, doubts about Silicon Valley's long-term industry supremacy are

beginning to appear. ... With a rise in remote work, the value that Silicon

Valley employees once placed on a vibrant office life with trendy workspaces,

elaborate on-site meals, and transportation, has faded, Jain observes. “People

are now looking for solid employers who offer opportunities for collaboration

and the ability to make a difference,” he says. “Silicon Valley tech firms are

starting to take notice.” Thanks to emerging distributed company models, the

Silicon Valley mindset will continue spreading to other areas, Goggin says.

“The startup ecosystem is incredibly supportive, and I will be proud to see

the next generation of companies create even more opportunity for people who

haven’t historically had the chance to participate in the startup and tech

ecosystem,” she says.

9 steps to protecting backup servers from ransomware

The backup server should not be connected to lightweight directory access

protocol (LDAP) or any other centralized authentication system. These are

often compromised by ransomware and can easily be used to gain usernames and

passwords to the backup server itself or to its backup application. Many

security professionals believe that no administrator accounts should be put in

LDAP, so a separate password-management system may already be in place. A

commercial password manager that allows sharing of passwords only among people

who require access could fit the bill. MFA can increase security of backup

servers, but use some other method than SMS or email, both of which are

frequently targeted and circumvented. Consider a third-party authentication

application such as Google Authenticator or Authy or one of the many

commercial products. Backup systems should be configured so almost no one has

to log in directly to an administrator or root account. For example, if a user

account is set up on Windows as an administrator account, that user should not

have to log into it in order to administer the backup system.

Quote for the day:

"A tough hide with a tender heart is a

goal that all leaders must have." -- Wayde Goodall
