Supply-Chain Hack Breaches 35 Companies, Including PayPal, Microsoft, Apple

“The vast majority of the affected companies fall into the 1000+ employees

category, which most likely reflects the higher prevalence of internal library

usage within larger organizations,” Birsan noted. The researcher received more

than $130,000 in both bug bounties and pre-approved financial arrangements with

targeted organizations, all of which agreed to be tested. The hack’s original target,

PayPal, as well as Apple and Canada’s Shopify, each contributed $30,000 to that

amount. Birsan said he came up with an idea to explore the trust that developers

put in a “simple command,” “pip install package_name,” which they commonly use

with programming languages such as Python, Node, Ruby and others to install

dependencies, or blocks of code shared between projects. These installers, such

as the Python Package Index for Python or npm and the npm registry for Node, are

usually tied to public code repositories where anyone can freely upload code

packages for others to use, Birsan noted. However, using these packages comes

with a level of trust that the code is authentic and not malicious, he observed.
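
One way to make that trust explicit is to pin a cryptographic hash for each dependency and refuse any file that does not match it. The sketch below is only an illustration of that idea, not something described in the article: the helper names `sha256_of_file` and `matches_pinned_hash` are hypothetical, and it assumes the package archive has already been downloaded to a local path.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 65536) -> str:
    """Return the hex SHA-256 digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        # Read fixed-size chunks so large archives are not loaded into memory at once.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_pinned_hash(path: Path, pinned_hex: str) -> bool:
    """Return True only if the file's digest equals the hash recorded in advance.

    `pinned_hex` is an assumption of this sketch: a SHA-256 hex string that the
    team stored when the dependency was first reviewed.
    """
    return hmac.compare_digest(sha256_of_file(path), pinned_hex.lower())
```

pip offers the same idea at install time through its hash-checking mode (`--require-hashes`), where every requirement must carry a matching `--hash` entry. A hash pin only proves the file is the one that was reviewed; it says nothing about whether the reviewed code was safe in the first place.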

Continual Learning will be the Cornerstone to Success

In the Fourth Industrial Revolution, the urgency to future-proof and transition

careers has required nothing short of a reskilling revolution. According to

global Salesforce research, since the onset of the pandemic 40% of the workforce

have considered a career change. As the digital economy continues to evolve,

businesses don’t just have a responsibility to provide employees opportunities

to retrain and transition to the jobs of the future. It’s increasingly within

their interest to do so. Now more than ever, people need access to the

technologies and skills necessary to land the jobs of the future. This is why at

Salesforce we launched Trailhead in 2014, our free online learning platform, to

democratise education and provide an equal pathway into the tech industry. Since

the onset of the pandemic we’ve seen a 37% increase in registrations to courses

– joining over 2.2 million learners gaining technical, business, partner, and

soft skills. Delivering in-demand skills and resume-worthy credentials, we’re

addressing the skills imperative and equipping people with the tools they need

to succeed. As a society, we need to continually ask ourselves whether we

are doing enough to provide everyone with the opportunity to

participate.

Artificial Intelligence In The Corporate Boardroom

With respect to accountability – human directors’ decision-making should not be

replaced or influenced by unaccountable artificial intelligence’s

decision-making. I warn that using artificial intelligence to make decisions in

boardrooms could lead to a void of accountability. The use of artificial

intelligence in boardrooms could raise other issues as well. ... Human

directors, who have consciousness and a conscience, would be accountable;

whereas I do not know how AI-directors could effectively be held accountable.

This would be an instance in which the risk that directors lose their

independent judgment intertwines with the accountability issues possibly arising

from the use of artificial intelligence in corporate boardrooms. ...

Philosophers warn us that if artificial intelligence developed a conscience and

consciousness, it could also possibly experience suffering. Uber-intelligence

could lead to uber-suffering. As I wrote in my article, “no potential benefits

resulting from the use of AI in the boardrooms, in corporate governance, or in

other settings could be worth the risk that artificial agents could suffer; even

more drastically, no potential benefit resulting from the use of AI is worth the

risk that relations between natural beings and artificial beings could evolve

into exploitative relations.”

Developers: This is the one skill most likely to get you hired, according to IBM

The conclusion falls in line with the findings of a recent study by the Linux

Foundation, which found that hiring managers are 70% more likely to hire a

professional with knowledge of open cloud technologies. At the same time, the

same report showed that 93% of respondents were struggling to find sufficient

talent with open-source skills. Mastering open-source tools and programming

libraries can therefore add a lot of value to a developer's CV. Among the most

important tools to add to a developer's skillset, Linux featured prominently, with

an overwhelming 95% of developers saying they considered the technology to be

important to their career; but the understanding of containers and databases

also ranked high. IBM's latest research comes in the midst of increasing

interest in open-source software, and a desire to tap the technology to create

value. Not-for-profit think tank the OpenForum Europe recently found that the

open-source ecosystem was contributing up to €95 billion ($113.7 billion) per

year to the EU's GDP; and that even a marginal increase of activity could boost

the continent's wealth by hundreds of billions of euros.

Is it time to ban ransomware insurance payments?

Erin Kenneally, director of cyber risk analytics at Guidewire, and previously a

staffer in the US Department of Homeland Security’s cyber division, says

dialogue is needed to disincentivise both the supply-side and the demand-side

for ransomware payments – banning insurance payments would evidently fall under

the former approach. She also highlights that current light touch interventions

for ransomware have been shown to be ineffective. “The US, for example, has

issued an Office of Foreign Assets Control [OFAC] advisory on the sanction risks

of paying ransoms and a FINCEN Advisory on reporting ransomware red flag

indicators. To date, there have been no civil penalties levied against victim

companies, insurers or response firms for paying or facilitating the payment of

cyber extortion,” she says. “In a nutshell, since the ransom is often lower than

the cost of recovery, business interruption and lost business – the convergence

of which can spell financial death – many victims and insurers simply pay the

ransom and risk sanctions. “As a result, insurers have taken a rational

economics approach to ransomware payments, leading to a growing sentiment that

the industry is worsening the problem by paying extortions.”

Are Autonomous Businesses Next?

The most extreme form of automation is an autonomous system that operates

without human intervention. That's not to say that autonomous systems don't need

oversight, however. "Automation is a necessary, functional component of an

autonomous system. 'Autonomous' implies a degree of artificial intelligence,

decision making that is not necessarily rule or workflow based, rather taking

actions based on new patterns that are not hard coded into the system," said

Robert Greene, senior director, Oracle Autonomous Database product management.

"Automation…still requires a human to make the decision to invoke [an] action,

so a human is still in the loop." Organizations are automating more tasks using

robotic process automation (RPA) and, in some cases, they're inheriting

autonomous capabilities from the enterprise products they use such as the Oracle

Autonomous Database. "You start out by automating smaller steps with smaller

stakes, so your organization builds its internal capacity to do automation well

and learn how to make it work in hybrid situations that involve people," said

Chris Nicholson, founder and CEO of deep reinforcement learning solution provider

Pathmind.

Digital transformation: Leadership imperatives for 2021

Digital tools, used appropriately and effectively, can contribute to planning

and monitoring internal processes, increasing transparency and accountability

across all levels of management, and building customers’ trust. Digital tools

are not only helping leaders solve complex issues related to personnel and

minimizing operational costs, but also improving decision making. However,

leaders will have to verify the suitability of tech tools being implemented in

relation to organizational needs and objectives. These are not top-down

decisions. Leaders promoting open ways of working in their organization could

make this a more inclusive and participatory process by adopting and

implementing an approach such as the Open Decision-Making Framework. One key

factor to remember: While digital technologies have much potential to improve

organizational processes, leaders must take proactive actions and measured steps

to help employees internalize and integrate these processes. The easier that

leaders make it for employees to adapt to and use new technology in their daily

routines, the faster the integration. The hardest part is often the change

management: Leaders need to facilitate this in a way that instills a positive

attitude in employees.

How the SRE Role Is Evolving

First, not all companies have embraced an SRE model. A recent study by Blameless

found “… 50% of respondents employ an SRE model with dedicated engineers focused

on infrastructure and tooling, or an embedded model where full-time SREs are

assigned to a service.” The SRE model is gaining momentum, but there is still

room for greater adoption. There is also room for internal growth. Ostrowski

sees a single SRE team as a single point of failure. “It needs to be a whole

department,” he said. In addition, SREs are gaining a more prominent voice at

the table, influencing feature rollout. “With proper and mature SRE involvement,

teams can’t willy-nilly deploy,” he said. Ostrowski views these teams as

maintaining a critical balance between business risk and introducing new

technology. Many companies are experiencing rising user demands, and thus must

rapidly scale their application networks. Simultaneously, there has been a

Cambrian explosion of deployment types — systems could be using any assortment

of legacy infrastructure, mainframe, microservices, cloud environments and

multiple cloud vendors. “The complexity and topology of the IT space has grown

substantially, with many interdependencies,” Ostrowski said.

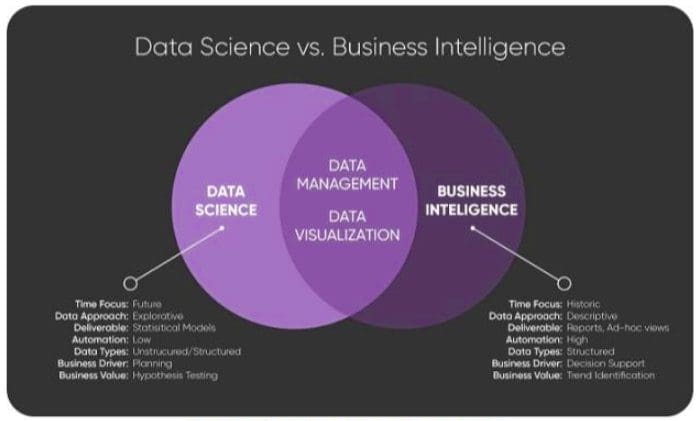

Data Science vs Business Intelligence, Explained

You will recognize business intelligence by its charts, dashboards, database

diagrams, and data integration projects. It is expensive and frustrating -- but

indispensable. BI has a permanent advantage over DS because it has concrete data

points; few, simple assumptions; self-explanatory metrics; and automated

processes. Furthermore, BI will never go away. It will always be a work in

progress because you will never stop changing your business or upgrading and

replacing the source systems. ... Looking in the rearview mirror of data is

important and helpful, but it's limited and will never get you where you want to

go. At some point you need to look ahead. BI needs to be accompanied by data

science. DS is a complicated, sophisticated form of planning and optimization.

Examples include: Predicting in real time which product a customer is most

likely to buy; Forming a weighted network between business micro events and

micro responses so that decisions can be made without human intervention, then

updating that network with every outcome so that it learns as it

acts; Forecasting at the SKU level, by day, with every

sale; Identifying and predicting rare events, such as credit card fraud,

and sending automatic notifications to customers and/or staff.

Piercing the Fog: Observability Tools from the Future

When we talk about observability, there are two sets of tools: specific

observability tools, such as Zipkin and Jaeger, as well as broader application

performance monitoring (APM) tools such as DataDog and AppDynamics. When

monitoring systems, we need information from all levels, from method and

operating system level tracing to database, server, API call, thread, and lock

data tracing. Asking developers to add instrumentation to get these statistics

is costly and time consuming and should be avoided whenever possible. Instead,

developers should be able to use plugins, interception, and code injection to

collect as much data as possible. APM tools have done a pretty good job of this.

Typically, they have instrumentation (e.g. Java agents) built into programming

languages to collect method-level tracing data, and they have added custom

filter logic to detect database, server, API call, thread, and lock data tracing

by looking at the method traces. Furthermore, they have added plugins to

commonly used middleware tools to collect and send instrumented data. One

downside of this approach is that the instrumentation needs will change as

programming languages and middleware evolve.
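
The interception idea described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not how any particular APM product works: `TRACE_LOG`, `traced`, `instrument`, and the `OrderService` class are all hypothetical names introduced here, and timings are kept in memory rather than sent to a tracing backend.

```python
import functools
import time
from collections import defaultdict

# Hypothetical in-memory store: qualified function name -> list of call durations (seconds).
TRACE_LOG = defaultdict(list)


def traced(func):
    """Wrap func so each call's duration is recorded without editing its body."""
    @functools.wraps(func)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        try:
            return func(*args, **kwargs)
        finally:
            # Record the duration even if the wrapped call raises.
            TRACE_LOG[func.__qualname__].append(time.perf_counter() - start)
    return wrapper


def instrument(obj, method_names):
    """Replace the named attributes on a class or module with traced versions."""
    for name in method_names:
        setattr(obj, name, traced(getattr(obj, name)))


class OrderService:
    """Illustrative stand-in for application code that nobody wants to hand-instrument."""

    def lookup(self, order_id):
        return {"id": order_id, "status": "shipped"}


# Instrumentation is applied from the outside; OrderService itself is unchanged.
instrument(OrderService, ["lookup"])
```

After `OrderService().lookup(7)` runs, `TRACE_LOG["OrderService.lookup"]` holds one timing entry. The trade-off noted in the excerpt shows up even here: the list of method names passed to `instrument` has to be kept in step with the code it wraps.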

Quote for the day:

"Coaching isn't an addition to a

leader's job, it's an integral part of it." -- George S. Odiorne
