AI progress depends on us using less data, not more

The data science community’s tendency to aim for data-“insatiable” and

compute-draining state-of-the-art models in certain domains (e.g. the NLP domain

and its dominant large-scale language models) should serve as a warning sign.

OpenAI analyses suggest that the data science community is more efficient at

reaching goals that have already been achieved, but they also show that it takes

more compute, by a few orders of magnitude, to reach dramatic new AI

achievements. MIT researchers estimated that “three years of algorithmic

improvement is equivalent to a 10 times increase in computing power.”

Furthermore, creating an adequate AI model that will withstand concept-drifts

over time and overcome “underspecification” usually requires multiple rounds of

training and tuning, which means even more compute resources. If pushing the AI

envelope means consuming even more specialized resources at greater costs, then,

yes, the leading tech giants will keep paying the price to stay in the lead, but

most academic institutions would find it difficult to take part in this “high

risk – high reward” competition. These institutions will most likely either

embrace resource-efficient technologies or pursue adjacent fields of

research.
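
To put the MIT estimate quoted above in perspective, here is a minimal sketch of the arithmetic. It assumes the improvement compounds at a constant exponential rate, which the quote itself does not state; the function name and parameters are illustrative only.

```python
def compute_equivalent(years: float,
                       factor: float = 10.0,
                       period_years: float = 3.0) -> float:
    """Compute multiplier matched by `years` of algorithmic improvement.

    Assumption (not from the quoted estimate): progress compounds at a
    constant exponential rate, so `period_years` of improvement always
    equals a `factor`-fold increase in computing power.
    """
    return factor ** (years / period_years)


# One year of algorithmic improvement under this assumption:
print(f"{compute_equivalent(1.0):.2f}x")  # 2.15x
# Six years corresponds to two full periods, i.e. 10 * 10:
print(f"{compute_equivalent(6.0):.0f}x")  # 100x
```

Read this way, a team that cannot afford ten times more hardware could, in principle, recover the same gain by waiting for (or investing in) roughly three years of algorithmic progress.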

How to Create a Bulletproof IoT Network to Shield Your Connected Devices

By far, the biggest threat that homeowners face concerning all of their

connected devices is the chance that an outsider might gain access to them and

use them for nefarious purposes. The recent past is littered with examples of

such devices becoming part of sophisticated botnets that end up taking part in

massive denial of service attacks. But although you wouldn’t want any of your

devices used for such a purpose, the truth is that if it happened, it likely

wouldn’t affect you at all (not that I’m advocating that anyone ignore the

threat). The average person really should be worried about the chance that a

hacker might use the access they gain to a connected device as a jumping-off

point to a larger breach of the network. That exact scenario has already played

out inside multiple corporate networks, and the same is possible for in-home

networks as well. And if it happens, a hacker might gain access to the data

stored on every PC, laptop, tablet, and phone connected to the same network as

the compromised device. And that’s what the following plan should help to

prevent. In any network security strategy, the most important tool available is

isolation. That is to say, the goal is to wall off access between the devices on

your network so that a single compromised device can’t be used as a means of

getting anywhere else.
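
The isolation idea can be illustrated with a small model. The sketch below is hypothetical: the device names, the segment names and the `reachable_from` helper are all made up for illustration, and a real network would enforce these rules with VLANs or router firewall settings rather than Python. It simply computes which devices an attacker could pivot to from one compromised device.

```python
from collections import deque

# Hypothetical home network: each device is placed in one segment.
SEGMENT_OF = {
    "smart_bulb": "iot",
    "thermostat": "iot",
    "laptop": "trusted",
    "phone": "trusted",
    "guest_tablet": "guest",
}

# Allowed cross-segment flows as (source, destination) pairs.
# Assumption: devices inside the same segment can always talk to each other.
ALLOWED = {("trusted", "iot")}


def reachable_from(device, segment_of, allowed):
    """Return the other devices reachable, directly or by pivoting."""
    start = segment_of[device]
    seen = {start}
    queue = deque([start])
    while queue:
        segment = queue.popleft()
        for src, dst in allowed:
            if src == segment and dst not in seen:
                seen.add(dst)
                queue.append(dst)
    return sorted(d for d, s in segment_of.items() if s in seen and d != device)


# Segmented network: a compromised bulb only reaches the other IoT device.
print(reachable_from("smart_bulb", SEGMENT_OF, ALLOWED))  # ['thermostat']

# Flat network: everything sits in one segment, so everything is exposed.
flat = {name: "lan" for name in SEGMENT_OF}
print(reachable_from("smart_bulb", flat, set()))
```

The one-way rule from the trusted segment to the IoT segment lets a laptop control the bulb without letting the bulb initiate connections back.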

How to build a digital mindset to win at digital transformation

First, you need to overcome the technical skills barrier. For that you need the

right people. Developing hardware differs from developing software, just as

selling a one-time product differs from selling a service with recurring fees. Yes, you

can train people to a certain extent to do so. But what we’ve realised at Halma

is that diversity, equality and inclusion are just as important to digital &

innovation success as every other aspect of business performance. At Halma this

approach to diversity is in our DNA. Attracting and recruiting people with

diverse viewpoints as well as diverse skills means that you will be able to see

new opportunities and imagine new solutions. Second, you need to overcome the

business model barrier. You need to think differently about how your business

generates revenue. Fixed mindsets in your team that don’t have an outside-in

approach to your market and are hooked on business as usual need to be changed.

You need to take a bold and visionary approach to doing business differently,

and helping your team reimagine their old business model. Third, you need to

overcome the business structure barrier. Often the biggest barrier to cultural

adaptation is the organisation itself. Using the same tools and strategies that

built your business today isn’t going to enable the digital transformation of

tomorrow. It requires a fundamental shift in the way your organisation works.

Tips for boosting the “Sec” part of DevSecOps

“If there’s a thing that, as a security person, you’d call a ‘vulnerability,’

keep that word to yourself and instead speak the language of the developers:

it’s a defect,” he pointed out. “Developers are already incentivized to manage

defects in code. Allow those existing prioritization and incentivization tools

to do their job and you’ll gain the security-positive outcomes that you’re

looking for.” ... “Organizations need to stop treating security as some kind of

special thing. We used to talk about how security was a non-functional

requirement. Turns out that this was a wrong assumption, because security is

very much a function of modern software. This means it needs to be included as

you would any other requirement and let the normal methods of development defect

management take over and do what they already do,” he noted. “There will be some

uplift requirements to ensure your development staff understands how to write

tests that validate security posture (i.e., a set of tests that exercise your

user input validation module), but this is generally not a significant problem

as long as you’ve built in the time to do this kind of work by including the

security requirements in that set of epics and stories that fit within the

team’s sprint budget.”
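
As an illustration of the kind of tests described above, here is a minimal sketch using Python's standard `unittest` module. The `validate_username` function and its allow-list rule are hypothetical stand-ins for a team's own user input validation module, not something taken from the quoted article.

```python
import re
import unittest

# Hypothetical rule: 3 to 32 characters, letters, digits and underscore only.
_USERNAME_RE = re.compile(r"[A-Za-z0-9_]{3,32}")


def validate_username(value):
    """Return True only if the whole input matches the allow-list pattern."""
    return isinstance(value, str) and _USERNAME_RE.fullmatch(value) is not None


class ValidateUsernameSecurityTests(unittest.TestCase):
    """Security expectations written as ordinary tests, tracked as defects."""

    def test_accepts_plain_username(self):
        self.assertTrue(validate_username("alice_01"))

    def test_rejects_sql_injection_payload(self):
        self.assertFalse(validate_username("alice'; DROP TABLE users;--"))

    def test_rejects_script_tag(self):
        self.assertFalse(validate_username("<script>alert(1)</script>"))

    def test_rejects_oversized_input(self):
        self.assertFalse(validate_username("a" * 33))

    def test_rejects_trailing_newline(self):
        self.assertFalse(validate_username("alice\n"))
```

Saved in a test file, these run with `python -m unittest` alongside the rest of the suite, so a failing security check shows up in the same place as any other defect.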

6 strategies to reduce cybersecurity alert fatigue in your SOC

Machine Learning is at the heart of what makes Azure Sentinel a game-changer in

the SOC, especially in terms of alert fatigue reduction. With Azure Sentinel we

are focusing on three machine learning pillars: Fusion, Built-in Machine

Learning, and “Bring your own machine learning.” Our Fusion technology uses

state-of-the-art scalable learning algorithms to correlate millions of lower

fidelity anomalous activities into tens of high fidelity incidents. With Fusion,

Azure Sentinel can automatically detect multistage attacks by identifying

combinations of anomalous behaviors and suspicious activities that are observed

at various stages of the kill-chain. On the basis of these discoveries, Azure

Sentinel generates incidents that would otherwise be difficult to catch.

Secondly, with built-in machine learning, we pair years of experience securing

Microsoft and other large enterprises with advanced capabilities around

techniques such as transfer learning to bring machine learning within the reach

of our customers, allowing them to quickly identify threats that would be

difficult to find using traditional methods. Thirdly, for organizations with

in-house capabilities to build machine learning models, we allow them to bring

those into Azure Sentinel to achieve the same end-goal of alert noise reduction

in the SOC.
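
To make the idea of correlating many low-fidelity alerts into a few incidents concrete, here is a toy, rule-based sketch. It is not Azure Sentinel's Fusion algorithm, which the excerpt describes as machine learning based; the `Alert` type, the stage names and the thresholds are all assumptions chosen for illustration. The rule raises an incident only when one entity shows alerts from at least two different kill-chain stages within a time window.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    entity: str      # user or host the alert is about (hypothetical field)
    stage: str       # kill-chain stage label (hypothetical values)
    timestamp: int   # seconds since some reference point


def correlate(alerts, window_seconds=3600, min_stages=2):
    """Group alerts per entity and keep only multistage activity."""
    by_entity = defaultdict(list)
    for alert in alerts:
        by_entity[alert.entity].append(alert)

    incidents = []
    for entity, items in by_entity.items():
        items.sort(key=lambda a: a.timestamp)
        start = 0
        for end in range(len(items)):
            # Shrink the window until it spans at most window_seconds.
            while items[end].timestamp - items[start].timestamp > window_seconds:
                start += 1
            stages = {a.stage for a in items[start:end + 1]}
            if len(stages) >= min_stages:
                incidents.append((entity, sorted(stages)))
                break  # report at most one incident per entity
    return incidents


alerts = [
    Alert("alice", "initial_access", 0),
    Alert("alice", "exfiltration", 1200),
    Alert("bob", "initial_access", 0),
    Alert("bob", "initial_access", 100),
    Alert("carol", "initial_access", 0),
    Alert("carol", "exfiltration", 10000),
]
print(correlate(alerts))  # [('alice', ['exfiltration', 'initial_access'])]
```

Six raw alerts collapse into one incident here: repeated alerts from a single stage, or stages that are too far apart in time, are treated as noise.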

How To Stand Out As A Data Scientist In 2021

Jack of all trades doesn’t cut it anymore. While data science has many

applications, people will pay more bucks if you are an expert at one thing. For

instance, your value as a data scientist will be worth its weight in gold if you

are exceptional at data visualisations in a particular language rather than a

bits and pieces player. The top technical skills in demand in 2021 are data

wrangling, machine learning, data visualisation, analytics tools, etc. As a data

scientist, it’s imperative to know your fundamentals down cold. It would help if

you spent enough time with your data to extract actionable insights. A data

scientist should sharpen her skills by exploring, plotting and visualising data

as much as possible. Most data scientists or aspiring data scientists doing

statistics learn to code or take up a few machine learning or statistics

classes. However, it is one thing to code little models on practice platforms

and another thing to build a robust machine learning project deployable in the

real world. As a rule, data scientists need to learn the fundamentals of

software engineering and real-world machine learning tools.

AI startup founders reveal their artificial intelligence trends for 2021

Matthew Hodgson, CEO and founder of Mosaic Smart Data, says AI and automation are

“permeating virtually every corner of capital markets.” He believes that this

technology will form the keystone of the future of business intelligence for

banks and other financial institutions. The capabilities and potential of AI are

enormous for our industry. According to Hodgson, recent studies have found that

companies not using AI are likely to suffer in terms of revenue. “As the link

between AI use and revenue growth continues to strengthen, there can be no doubt

that AI will be a driving force for the capital markets in 2021 and in the

decade ahead — those firms who are unwilling to embrace it are unlikely to

survive,” he continues. Hodgson predicts that with the continued tightening of the

regulatory environment, financial institutions will have to do more with less

and many will need to act fast to remain both competitive and relevant in this

‘new normal’. “As a result, we are seeing that financial institutions are

increasingly looking to purchase out-of-the-box third-party solutions that can

be onboarded within a few short months and that deliver immediate results rather

than taking years to build their own systems with the associated risks and vast

hidden costs,” he adds.

How Reading Papers Helps You Be a More Effective Data Scientist

In the first pass, I scan the abstract to understand if the paper has what I

need. If it does, I skim through the headings to identify the problem statement,

methods, and results. In this example, I’m specifically looking for formulas on

how to calculate the various metrics. I give all papers on my list a first pass

(and resist starting on a second pass until I’ve completed the list). In this

example, about half of the papers made it to the second pass. In the second

pass, I go over each paper again and highlight the relevant sections. This helps

me quickly spot important portions when I refer to the paper later. Then, I take

notes for each paper. In this example, the notes were mostly around metrics

(i.e., methods, formulas). If it was a literature review for an application

(e.g., recsys, product classification, fraud detection), the notes would focus

on the methods, system design, and results. ... In the third pass, I synthesize

the common concepts across papers into their own notes. Various papers have

their own methods to measure novelty, diversity, serendipity, etc. I consolidate

them into a single note and compare their pros and cons. While doing this, I

often find gaps in my notes and knowledge and have to revisit the original

paper.

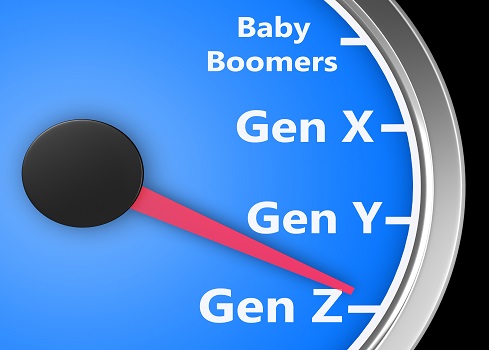

Generation Z Is Bringing Dramatic Transformation to the Workforce

While Gen Zers and Millennials are coming into their own in the workforce, Baby

Boomers are leaving in droves, taking valuable expertise and experience with

them that’s often not documented throughout the organization. Pew Research

reports 3.3 million people retired in the third quarter of 2020 -- likely driven

by staff reductions and incentivized retirement packages created by the

pandemic. The change in rank will inevitably drive how people interact with

technology, particularly around the transfer of knowledge to bridge the skills

gap. While this transition is still in flux, we’ve already been able to imagine

the impact. Coding languages risk becoming extinct, and machinery risks grinding

to a halt. Data from recruitment firm Robert Half reveals three quarters of

finance directors believe the skills gap created by retiring Baby Boomers will

negatively impact their business within 2-5 years. To that point, the COVID

pandemic is not only creating turnover in the workforce but is also making

in-person knowledge sharing difficult. Technology is helping to soften this

challenge, ensuring business resiliency against the “disruption” of retirement.

Where practical knowledge handovers are less viable, in the case of remote work

or global organizations, programming languages or process-specific knowledge can

be taught through artificial intelligence (AI).

The Theory and Motive Behind Active/Active Multi-Region Architectures

The concept of active/active architectures is not a new one and can in fact be

traced back to the 70s when digital database systems were being newly introduced

in the public sphere. Now as cloud vendors roll out new services, one of the

factors they are abstracting away for users is the set-up of such a system.

After all, one of the major promises of moving to the cloud is the abstraction

of these types of complexities along with the promise of reliability. Today, an

effective active/active multi-region architecture can be built on almost all

cloud vendors out there. Considering the ability and maturity of cloud services

in the market today, this article will not act as a tutorial on how to build the

intended architecture. There are already various workshop guides and talks on

the matter. In fact, one of the champions of resilient and highly available cloud

architectures, Adrian Hornsby, Principal Technical Evangelist at AWS,

has a great series of blogs guiding the reader through active/active

multi-region architectures on AWS. However, what is missing, or at least what

has been lost, is the theory and clear understanding of the motive behind

implementing such an architecture.

Quote for the day:

"Expression is saying what you wish to

say, Impression is saying what others wish to listen." --

Krishna Sagar
