A tougher balancing act in 2024, the year of the CISO

What’s making things more difficult for CISOs? The ESG/ISSA data indicates

that business aspects of running a cybersecurity program like working with

the board, overseeing regulatory compliance, and managing a budget are

primary contributing factors. This makes sense as the CISO role has evolved

from technical overseer to business executive over the past few years. At

the same time, organizations have increased their dependence on IT for

automation, optimization, customer service, and digital transformation. ...

Like their non-CISO colleagues, CISOs are particularly stressed by things

like an overwhelming workload, working with uninterested business managers,

and keeping up with the security requirements of new business initiatives.

It’s worth noting that 26% of CISOs are also stressed about monitoring the

security status of third parties their organization does business with as

compared with 12% of non-CISOs. Third-party relationships are often

associated with business processes and therefore tied closely with business

units. Unfortunately, security teams probably don’t have deep visibility

into the day-to-day security performance at these firms.

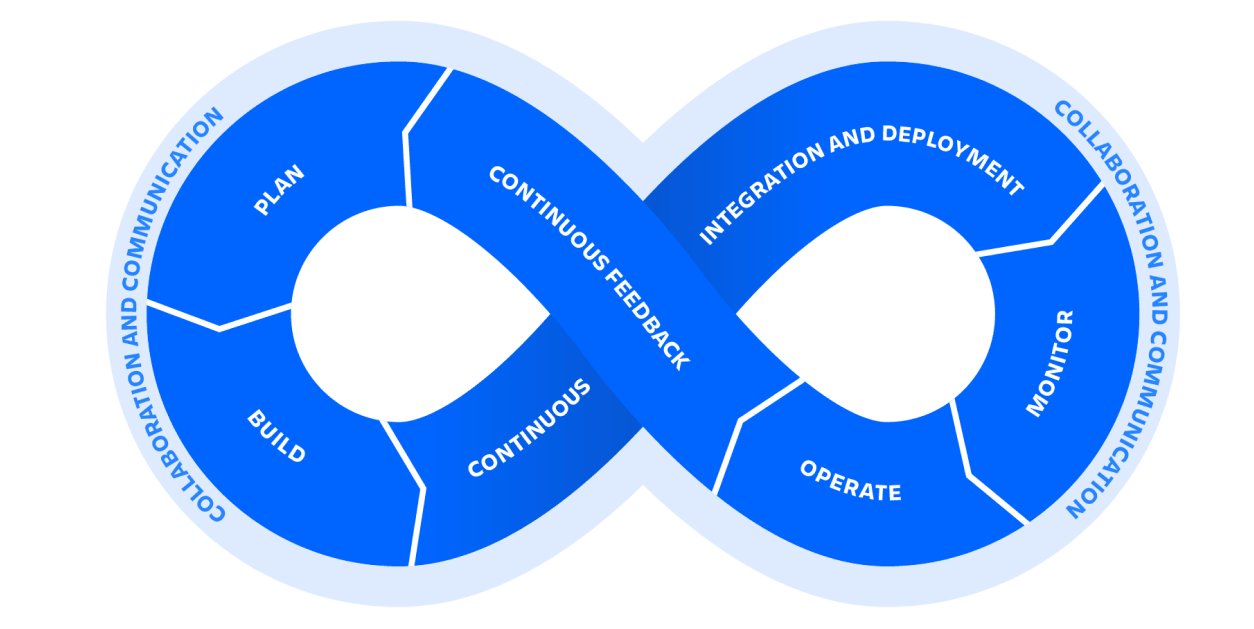

How do agile and DevOps interrelate?

Agile and DevOps have much in common. In fact, DevOps grew as an offshoot (or

improvement) of agile, as many industry leaders found dysfunction in IT and

software development. While incremental improvements support quality products,

the competing objectives of individual IT workers lowered overall performance.

To remedy the problem, developers proposed the more integrated approach of

DevOps. Of course, the new philosophy offered different core values, which

caused a split between two opposing communities. Developers grappled with what

looked like conflicting philosophies, leading to the most common misconception

about agile and DevOps: that they don’t interrelate. On the surface, the

pundits have much to draw on. DevOps engineers focus on software scalability,

speed, and team integration. Agile focuses on the slower, iterative process of

software development, with more emphasis on continuous testing. More

importantly, agile silos individuals while DevOps integrates. Without the

operability of DevOps, infrastructure responsibility falls by the wayside. But

without the basic building blocks of the incremental, customer-focused method

of agile, DevOps has no fundamental processes on which to stand.

AI Fraud Act Could Outlaw Parodies, Political Cartoons, and More

So just how broad is this bill? For starters, it applies to the voices and

depictions of all human beings "living or dead." And it defines digital

depiction as any "replica, imitation, or approximation of the likeness of an

individual that is created or altered in whole or part using digital

technology." Likeness means any "actual or simulated image… regardless of

the means of creation, that is readily identifiable as the individual."

Digital voice replica is defined as any "audio rendering that is created or

altered in whole or part using digital technology and is fixed in a sound

recording or audiovisual work which includes replications, imitations, or

approximations of an individual that the individual did not actually

perform." This includes "the actual voice or a simulation of the voice of an

individual, whether recorded or generated by computer, artificial

intelligence, algorithm, or other digital means, technology, service, or

device." These definitions go way beyond using AI to create a fraudulent ad

endorsement or musical recording. They're broad enough to include

reenactments in a true-crime show, a parody TikTok account, or depictions of

a historical figure in a movie.

Navigating digital transformation in insurance sector: Challenges, opportunities and innovations

Notable developments are the changes that regulators have come up with in

cybersecurity, the Information Security Management, the connectivity

management with the website, with the vendors and employees, and the digital

transformation that they have pushed us to. Because today, everything is

digitally handled, an employee actually meets a customer, and the customer

fills the form digitally; there is no mechanical filling of forms, although

that practice is still there in many parts of the country and in many

companies also. Having said that, the digital absorption has become higher in

percentage. So, when we handle things digitally, you will have to think

through, and therefore today, employees are forced to think through what are

the controls they could have. Like we have introduced OTP, so each state’s

customer is forced to think and answer questions on OTP. You have to give

an OTP for that. For a policy that I bought last week, for instance, I had to do

six OTPs in that company. I was wondering why so many OTPs are required, but

when I look at the way the processes were handled by the salesperson, it was

quite effective and efficient, and at the same time, it’s all for the safety

of the customer, and that thought process is passed on to the customer.

Productivity Paradox: Productivity in the Age of Knowledge Work

We sometimes forget that every employee within an enterprise is, at their

core, also a consumer. Their personal preferences, shaped by daily

interactions with personal technology, inevitably spill over into their

professional lives. Consequently, the ubiquity of Macs, iPhones and iPads in

the consumer market has sparked a growing demand for these devices in the

workplace. This shift has only been hastened by the “Bring Your Own Device”

(BYOD) movement, wherein employees sought to use their trusted personal

devices for professional tasks, yearning for the familiarity and ease of use

they’ve grown accustomed to. Instead of resisting this tide, more and more

forward-thinking enterprises are leaning in. For one, IT leaders

have recognized that specific hardware platforms matter less these days as

they shift more of their applications to the public cloud. Reliability is

another major factor, especially for remote employees who don’t have an IT

helpdesk at their disposal. And when our survey respondents were asked if

they agreed or disagreed with the statement “Apple takes enterprise

security, compliance, and privacy concerns more seriously than other

vendors,” three-quarters of them concurred.

6 hot networking and data center skills for 2024

“The current rage in AI technology is more than just a fad,” Leary says. “It

is delivering real measurable benefits to IT organizations and the

businesses they serve. And there is no sign of slowdown on the horizon.

Driven by pressing capacities and costs, physical data center designs are

changing significantly” with generative AI. Organizations need IT staffers

who can help assure that generative AI is provided the data, data

processing, and data exchanges needed to deliver on its promise, Leary says.

... Knowledge about cyber security products and services, as well as the

threats they guard against, never goes out of fashion. Organizations are facing

a barrage of threats against their networks and data centers, so finding

people with related skills remains a high priority. “Companies are

constantly having to pay attention to their security as more and more cyber

attacks happen,” Vick says. “That is something that is not slowing down, so

they are having to update their firewalls and other security features.”

Enterprises are building out their security teams, in some cases looking for

people to update their security posture with a variety of technologies.

Navigating data management modernisation to deliver the AI-ready tech stack of the future

Forward-thinking IT leaders already see a direct correlation between

modernising the data management journey across the entire tech stack and

facilitating the extraction of value from data at the speed and scale needed

for real-time intelligence. The same Alteryx research suggests that digital

transformation relating to AI and machine learning will be the number one

characteristic of the future enterprise, and tech stack priorities are

already shifting to reflect this. Generative AI, quantum computing and

machine learning operations (MLOps) are cited as the technologies most

likely to see the largest shift in accelerated adoption in the future. While

it only seems a short time since the pandemic forced many organisations to

accelerate transformation at breakneck speeds, the rise of AI-related

technologies will reinfuse these transformations. Why? Because AI has

lowered the barrier to delivering productivity gains by delivering

data-driven insights with just a sentence or a prompt. With countless

data-oriented AI technologies and intelligent systems already available, the

ultimate goal of this transformation is to modernise the data management

journey across the entire stack.

Continuous Quality Assurance: Strategizing Automated Regression Testing for Codebase Resilience

In QA software testing, automated regression processes can be

enabled to autonomously identify any unexpected behaviors or regressions in

the software. ... End-users anticipate a consistent and dependable

performance from software, recognizing that any disruptions or failures can

profoundly affect their productivity and overall user experience. The

implementation of regression testing proves invaluable in identifying

unintended consequences, validating bug fixes, upholding consistency across

versions, and securing the success of continuous deployment. Through early

identification and resolution of regressions, development teams can

proactively safeguard against issues reaching end-users, thereby preserving

the quality and reliability of their software. ... Automated regression

testing can be strategized based on the complexity of the codebase for

approaches like retesting everything, selective retesting, and prioritized

retesting. Tools such as Functionize, Selenium, Watir, Sahi Pro, and IBM

Rational Functional Tester can be used to automate regression testing and

improve efficiency.
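
The three approaches named above (retesting everything, selective retesting, and prioritized retesting) can be illustrated with a minimal Python sketch. It is not tied to any of the tools listed; the `RegressionTest` record, its `covers` and `priority` fields, and the sample suite are all hypothetical names introduced only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RegressionTest:
    """Hypothetical test record used only for this illustration."""
    name: str
    covers: frozenset  # assumption: modules this test exercises
    priority: int      # assumption: 1 = most critical


def retest_all(tests):
    """Retest everything: run the full suite regardless of what changed."""
    return list(tests)


def selective(tests, changed_modules):
    """Selective retesting: keep tests that touch at least one changed module."""
    changed = set(changed_modules)
    return [t for t in tests if t.covers & changed]


def prioritized(tests, budget):
    """Prioritized retesting: run the most critical tests first, up to a budget."""
    return sorted(tests, key=lambda t: t.priority)[:budget]


# Made-up sample suite.
SUITE = [
    RegressionTest("test_login", frozenset({"auth"}), 1),
    RegressionTest("test_checkout", frozenset({"cart", "payments"}), 1),
    RegressionTest("test_profile_page", frozenset({"auth", "profile"}), 3),
    RegressionTest("test_search", frozenset({"search"}), 2),
]

if __name__ == "__main__":
    print([t.name for t in selective(SUITE, {"auth"})])
    print([t.name for t in prioritized(SUITE, budget=2)])
```

In practice the mapping from tests to code areas would come from coverage data or manual tagging, and a larger, more tangled codebase tends to push teams from retesting everything toward the selective or prioritized strategies.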

Sustainable Partnerships Pay Dividends

The first step in establishing highly effective partnerships, von Koeller

says, is to identify what an organization hopes to achieve and establish a

roadmap for meeting specific goals and metrics. This requires a focus on

shared values and coordination across departments and groups. “You have to

understand your footprint, understand what environmental impact an action

has, and how to engage suppliers to achieve alignment around your targets,”

she explains. Open and honest communication among partners is vital. Too

often, larger companies fail to understand what suppliers can do and what

they can’t do, particularly when they’re located in faraway countries, or a

supply chain has numerous layers. Partners downstream and upstream face

their own set of challenges -- environmental, political, and practical --

that can make it difficult to conform to strict standards. A particularly

daunting aspect -- especially for smaller firms supplying raw materials or

specialized components -- is onerous data collection and reporting

requirements, Linich notes. As a result, smaller companies may require

funding and technical assistance from larger partners, including aid in

setting up software and IT systems that support sustainability.

Get started with Anaconda Python

The Anaconda distribution is a repackaging of Python aimed at developers who

use Python for data science. It provides a management GUI, a slew of

scientifically oriented work environments, and tools to simplify the process

of using Python for data crunching. It can also be used as a general

replacement for the standard Python distribution, but only if you’re

conscious of how and why it differs from the stock version of Python. ...

The most noticeable thing Anaconda adds to the experience of working with

Python is a GUI, the Anaconda Navigator. It is not an IDE, and it doesn’t

try to be one, because most Python-aware IDEs can register and use the

Anaconda Python runtime themselves. Instead, the Navigator is an

organizational system for the larger pieces in Anaconda. With the Navigator,

you can add and launch high-level applications like RStudio or JupyterLab;

manage virtual environments and packages; set up “projects” as a way to

manage work in Anaconda; and perform various administrative functions.

Although the Navigator provides the convenience of a GUI, it doesn’t replace

any command-line functionality in Anaconda, or in Python generally.
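
Because Anaconda can stand in for the stock Python distribution, it helps to know which interpreter a script is actually running under. The following is a minimal sketch using only the standard library; it assumes the common conventions that a conda-managed environment keeps a `conda-meta` directory under its prefix and that activating an environment sets the `CONDA_DEFAULT_ENV` variable. The function name `describe_interpreter` is hypothetical.

```python
import os
import sys


def describe_interpreter():
    """Report basic facts about the running Python interpreter."""
    prefix = sys.prefix
    return {
        "executable": sys.executable,
        "prefix": prefix,
        "version": sys.version.split()[0],
        # Assumption: conda environments contain a conda-meta directory.
        "looks_like_conda": os.path.isdir(os.path.join(prefix, "conda-meta")),
        # Assumption: set by conda when an environment is activated; else None.
        "conda_env_name": os.environ.get("CONDA_DEFAULT_ENV"),
    }


if __name__ == "__main__":
    for key, value in describe_interpreter().items():
        print(f"{key}: {value}")
```

Running this from an IDE's configured interpreter is a quick way to check whether the IDE has registered the Anaconda runtime or a different Python installation.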

Quote for the day:

"Leaders are more powerful role models when they learn than when they

teach." -- Rosabeth Moss Kanter