2023 could be the year of public cloud repatriation

High cloud bills are rarely the fault of the cloud providers. They are often

self-inflicted by enterprises that don’t refactor applications and data to

optimize their cost-efficiencies on the new cloud platforms. Yes, the

applications work as well as they did on the original platform, but you’ll pay

for the inefficiencies you chose not to deal with during the migration. The

cloud bills are higher than expected because lifted-and-shifted applications

can’t take advantage of native capabilities such as auto-scaling, security, and

storage management that allow workloads to function efficiently. It’s easy to

point out the folly of not refactoring data and applications for cloud platforms

during migration. The reality is that refactoring is time-consuming and

expensive, and the pandemic put many enterprises under tight deadlines to

migrate to the cloud. For enterprises that did not optimize systems for

migration, it doesn’t make much economic sense to refactor those workloads now.

Repatriation is often a more cost-effective option for these enterprises, even

considering the hassle and expense of operating your own systems in your own

data center.

How Cyber Pathways Can Help Your Career

The Cyber Pathways Framework will introduce chartered standards that align with

16 cybersecurity specialties. These job roles that have, until now, been loosely

defined will be given specific descriptions and linked to existing

qualifications and certifications to see the establishment for the first time of

minimum requirements, as called for by the DCMS in its Understanding the cyber

security recruitment pool report. On the plus side, this will set a bar to

achieve certain roles, helping to standardize role requirements. This could

prove helpful in the current climate where the demand for talent is leading to

job creep, with job descriptions containing myriad skillsets. And it could prove

fundamental in stopping the current job hopping that we’re seeing. But it will

also see roles become more rigid, and given that the sector has always grown

organically, there will need to be some provision made for the evolution of new

roles as and when needed, such as ones involving AI and DevSecOps. Another key

pathway proposal is the creation of a register for cybersecurity practitioners,

similar to that seen in the medical, legal and accountancy professions, for

senior positions.

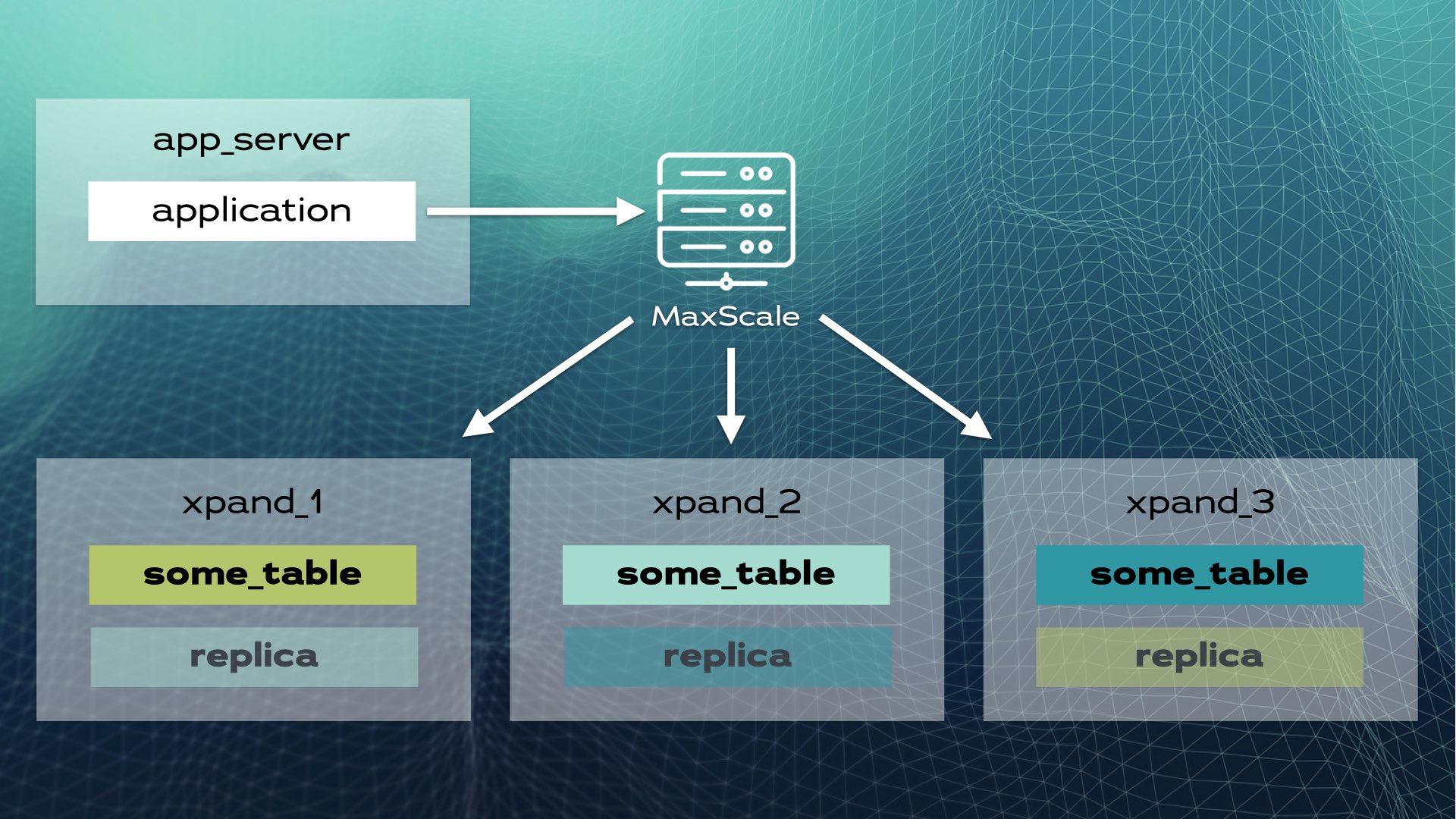

The Cloud Computing Boom: A Test for Database Companies

The threat of the cloud also resonated with Shoolman, who said that “the cloud

is all over”. For instance, a lot of Oracle’s business has been taken by cloud

service providers. ... More importantly, he says, there is a change in the top

five: what was dominated by the likes of IBM, SAP, and Oracle is now highly

dominated by AWS, Azure, GCP, etc. The remaining 20%, he says, is occupied by ISVs

like Redis, MongoDB, and others. All of the big-tech cloud providers have come

up with data services similar to those provided by these independent vendors

to cater to their business needs. But, recognising that despite being their

competition, ISVs have to depend on cloud platforms too to host their

services, MongoDB said, “We are in a love-hate relationship with our cloud

partners”. When Shoolman was asked if he sees more hyperscalers like AWS,

Azure, and GCP coming up in future to meet organisation demands for massive

scaling in computing, he said that we are indeed seeing a trend of developing

more and more data centres since organisations intend to bring data much

closer to the user.

Ukraine War and Upcoming SEC Rules Push Boards to Sharpen Cyber Oversight

The war, along with the hybrid work models that have been put in place at many

companies as a result of the pandemic, prompted corporate directors to

carefully consider how their companies might be exposed to cyber risks, said

Andrea Bonime-Blanc, chief executive of GEC Risk Advisory LLC, a New

York-based firm that advises boards and executives about cybersecurity and

risk management. Board awareness of cybersecurity “was already increasing

glacially, but I think the Ukraine war has sharpened the minds,” Ms.

Bonime-Blanc said. Some boards now rate cyber threats on a par with trade wars

and supply-chain problems among risks that could have a major impact on

companies, said Michael Hilb ... A communication gap between boards and

security chiefs means neither side is as effective as needed to govern

cybersecurity, said Yael Nagler, chief executive of Yass Partners, a

consulting firm focused on aligning security leadership. Directors sometimes

fail to understand core threats, Ms. Nagler said. “They’re not shy people but

when it comes to cyber, they feel like they’re asking dumb questions,” she

said.

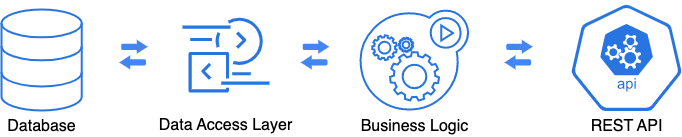

Pro Coders Key to Stopping Citizen Developer Security Breach

Another Forrester prediction that may directly impact developers is their

forecast that enterprise business leaders, not IT, will direct more than 40%

of the API strategies. That goes against conventional wisdom that IT drives

the API strategy, Gardner noted. “APIs have transcended from being just pure

application or infrastructure APIs. There [are] now business APIs, there [are]

ones that take advantage of data and take advantage of transactions, and

essentially, enable the data economy,” he said. “It’s not an IT conversation

anymore. IT will make sure it stays secured and locked down and make sure that

it’s tightly woven with everything else, but the business leader decides which

ones are the most beneficial.” API strategy is even becoming a board-level

topic, as board members and C-level leaders have grasped that APIs can be a

central part of the business strategy, he said. That makes sense because the

greatest value of APIs comes when organizations use them to create new

products, business models, and channels, according to

Today’s Software Developers Will Stop Coding Soon

Coding will never account for 100% of your time. Even junior ICs will have

meetings to attend and non-coding tasks to complete. As you progress through the IC

ranks to senior and beyond, the non-coding work will grow. Apart from

attending meetings, I’ve highlighted below a few prominent responsibilities

that fall under this work. ... Begin doing this at any time, and never stop.

No one is “not experienced enough” — even new hires can begin by filling in

any conceptual gaps or “gotchas” they encountered while onboarding. ...

Software engineering teams will always face skills gaps. You will lack some

cross-functional support like a project or program manager, product designer,

etc. As such, you may need to develop the skills of an adjacent role, like

project management, to set deadlines and perform other duties associated with

overseeing a project’s completion. ... As you gain experience on your team,

you’ll be asked to onboard new hires including mentoring junior devs. Being a

mentor will improve your communication skills and bolster your promotion

packet.

Can the world’s de facto tech regulator really rein in AI?

The AI Act may also run into enforcement challenges. The regulation will apply

mainly to companies or other entities developing and designing AI systems —

not to public authorities or other institutions that use them. For example, a

facial recognition system could have vastly different implications depending

on whether it’s used in a consumer context (e.g., to recognize your face on

Instagram) or at a border crossing to scan people’s faces as they enter a

country. “We are arguing that a lot of the potential risks or adverse impacts

of AI systems depend on the context of use,” said Karolina Iwanska, a digital

civic space advisor at the European Center for Not-for-Profit Law in the

Hague. “That level of risk seems different in both of these circumstances, but

the AI Act primarily targets the developers of AI systems and doesn’t pay

enough attention to how the systems are actually going to be used,” she told

me. Although there has been plenty of discussion of how the draft regulation

will — or will not — protect people’s rights, this is only part of the

picture.

Why Do Ransomware Victims Pay for Data Deletion Guarantees?

Many ransomware-wielding attackers are expert at preying on their victims'

compulsion to clean up the mess. Hence victims often face a menu of options:

Pay a ransom for a decryptor, and you'll be able to unlock forcibly encrypted

data. Pay more, and your name gets deleted from the list of victims on a

ransomware group's data-leak site. Pay even more and you get a promise that

whatever data they've stolen - or already leaked - will be immediately

deleted. Of course, many victims will feel the impulse to do something,

anything, for the illusion that they can belatedly protect stolen data and

salvage their reputation. That impulse is understandable. But not only is it

too late, the impulse is also being used against them by extortionists. Psychologically

speaking, criminals don't hesitate to find the levers that will compel a

victim to act - as in, give them money. Most ransomware groups' promises are

bunk, and most of all anything they guarantee that a victim cannot verify.

Unfortunately, seeing victims pay for data-deletion promises isn't

new.

4 career paths for software developers on the move

One of the paths is architecture. “These roles are highly technical and are

focused on designing, building, and integrating the foundational components of

applications or systems,” Blackwell says. “This would include roles like

technical/application architect, solution architect, or enterprise architect.”

... The move into devops is another common path for software developers. These

positions are also highly technical, says Blackwell, and are focused on

optimizing the tools, processes, and systems to build, test, release, and

manage high-quality software in complex or high-availability environments.

Devops roles include release manager, engineer, and architect. ... A third

path is leadership. “Roles in this area require both good people skills and

good technical skills,” Blackwell says. “And each, in their own way, is

responsible for ensuring that teams have what they need to succeed, whether

technical, process, tools, or skills.” Roles on the leadership path include

scrum master, technical project manager, product manager, technical lead, and

development manager.

A Skeptic’s Guide to Software Architecture Decisions

The power of positive thinking is real, and yet, when taken too far, it can

result in an inability, or even unwillingness, to see outcomes that don’t

conform to rosy expectations. More than once, we’ve encountered managers who,

when confronted with data that showed a favorite feature of an important

stakeholder had no value to customers, ordered the development team to

suppress that information so the stakeholder would not "look bad." Even when

executives are not telling teams to do things the team suspects are wrong,

pressure to show positive results can discourage teams from asking questions

that might indicate they are not on the right track. Or the teams may

experience confirmation bias and ignore data that does not reinforce the

decision they "know" to be correct, dismissing data as "noise." Such might be

the case when a team is trying to understand why a much-requested feature has

not been embraced by customers, even weeks after its release. The team may

believe the feature is hard to find, perhaps requiring a UI redesign.

Quote for the day:

"Effective team leaders realize they

neither know all the answers, nor can they succeed without the other members

of the team." -- Katzenbach & Smith
