The evolving role of operations in DevOps

To better understand how DevOps changes the responsibilities of operations

teams, it will help to recap the traditional, pre-DevOps role of operations.

Let’s take a look at a typical organization’s software lifecycle: before

DevOps, developers package an application with documentation, and then ship it

to a QA team. The QA team installs and tests the application, and then hands it off

to production operations teams. The operations teams are then responsible for

deploying and managing the software with little-to-no direct interaction with

the development teams. These dev-to-ops handoffs are typically one-way, often

limited to a few scheduled times in an application’s release cycle. Once in

production, the operations team is then responsible for managing the service’s

stability and uptime, as well as the infrastructure that hosts the code. If

there are bugs in the code, the virtual assembly line of dev-to-qa-to-prod is

revisited with a patch, with each team waiting on the other for next steps.

This model typically requires pre-existing infrastructure that needs to be

maintained, and comes with significant overhead. While many businesses

continue to remain competitive with this model, the faster, more collaborative

way of bridging the gap between development and operations is finding wide

adoption in the form of DevOps.

Monitoring Microservices the Right Way

The common practice with StatsD and other traditional solutions was to collect

metrics in push mode, which required explicitly configuring each component and

third-party tool with the metrics collector destination. With the many

frameworks and languages involved in modern systems it has become challenging to

maintain this explicit push-mode sending of metrics. Adding Kubernetes to the

mix increased the complexity even further. Teams were looking to offload the

work of collecting metrics. This was a distinct strong point of Prometheus, which

offered pull-mode scraping, together with service discovery of the components

("targets" in Prometheus terms). In particular, Prometheus shone with its

native scraping from Kubernetes, and as demand for Kubernetes skyrocketed so did

Prometheus. As the popularity of Prometheus grew, many open source projects

added support for the Prometheus Metrics Exporter format, which has made metrics

scraping with Prometheus even more seamless. Today you can find Prometheus

exporters for many common systems including popular databases, messaging

systems, web servers, or hardware components.
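
To make the pull model concrete, here is a minimal sketch of the target side: a component exposes its current metric values over HTTP and a scraper such as Prometheus fetches them on its own schedule. The metric names, the port, and the helper names (`render_metrics`, `serve`) are assumptions made for illustration, and the sketch uses only the Python standard library rather than an official client library.

```python
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical in-process counters; a real service would update these as it works.
METRICS = {"app_requests_total": 42, "app_errors_total": 3}


def render_metrics(metrics):
    """Render counters as plain text in the Prometheus exposition style."""
    lines = []
    for name, value in sorted(metrics.items()):
        lines.append(f"# TYPE {name} counter")
        lines.append(f"{name} {value}")
    return "\n".join(lines) + "\n"


class MetricsHandler(BaseHTTPRequestHandler):
    """Serves the current metric values on /metrics for a scraper to pull."""

    def do_GET(self):
        if self.path != "/metrics":
            self.send_error(404)
            return
        body = render_metrics(METRICS).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "text/plain; version=0.0.4")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def serve(port=8000):
    """Block and serve metrics on localhost (the port is an arbitrary choice)."""
    HTTPServer(("127.0.0.1", port), MetricsHandler).serve_forever()
```

Calling `serve()` starts the endpoint. The component never needs to know where the collector lives, which is the configuration burden that push mode imposed.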

Will Blockchain Replace Clearinghouses? A Case Of DVP Post-Trade Settlement

Blockchain technology can improve settlement processes substantially. First,

using a blockchain makes it possible to decrease counterparty risk as it

enables a trustless settlement process that is similar to DVP settlement in

that the delivery of an asset is directly linked to the instantaneous

payment for the asset. Therefore, atomic swaps enable direct “barter”

operations when one tokenized asset is directly exchanged for another

tokenized asset (delivery versus delivery). Here, “directly exchanged” means

that the technology guarantees that both transfers have to happen. It is

technologically not possible that only one transfer is executed if the other

transfer is interrupted for whatever reason. Besides, if a blockchain is used

for settlement, the third-party intermediary that facilitates settlement

in a conventional, non-DLT-based DVP is no longer necessary. This

implies peer-to-peer settlement that leads to substantial cost savings for the

settlement. In addition, cross-chain atomic swaps cover more complex cases

such as trustless settlement among more than two parties.
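
The all-or-nothing property described above can be illustrated with a toy in-memory ledger. This is only a sketch of the atomicity idea: it does not model a blockchain, signatures, or the hash time locks that real cross-chain swaps typically rely on, and the names `atomic_swap` and `SettlementError` are hypothetical.

```python
class SettlementError(Exception):
    """Raised when either leg of the swap cannot be delivered."""


def atomic_swap(ledger, party_a, asset_a, amount_a, party_b, asset_b, amount_b):
    """Exchange asset_a from party_a for asset_b from party_b, all or nothing."""
    # Stage both legs on a copy so that a failure leaves the ledger untouched.
    staged = {owner: dict(balances) for owner, balances in ledger.items()}
    legs = (
        (party_a, party_b, asset_a, amount_a),
        (party_b, party_a, asset_b, amount_b),
    )
    for sender, receiver, asset, amount in legs:
        if amount <= 0 or staged[sender].get(asset, 0) < amount:
            raise SettlementError(f"{sender} cannot deliver {amount} {asset}")
        staged[sender][asset] -= amount
        staged[receiver][asset] = staged[receiver].get(asset, 0) + amount
    # Both legs succeeded, so commit them together.
    ledger.clear()
    ledger.update(staged)
```

If the second leg fails after the first has been staged, the exception is raised before the commit step, so neither transfer is visible in `ledger`.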

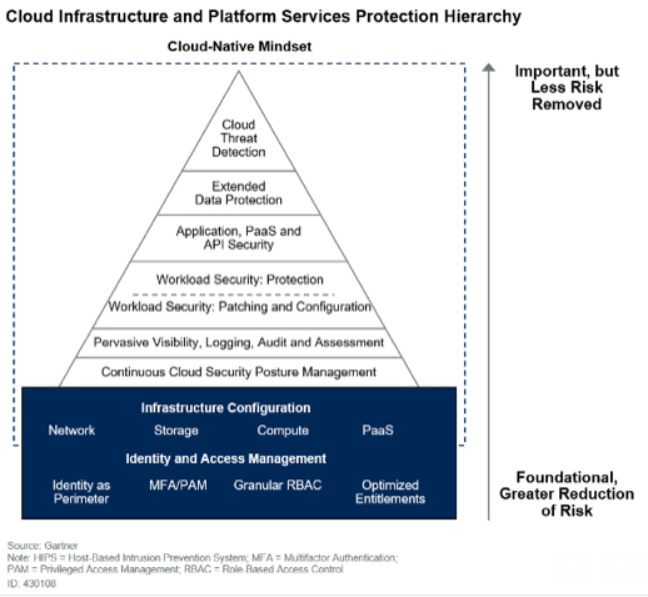

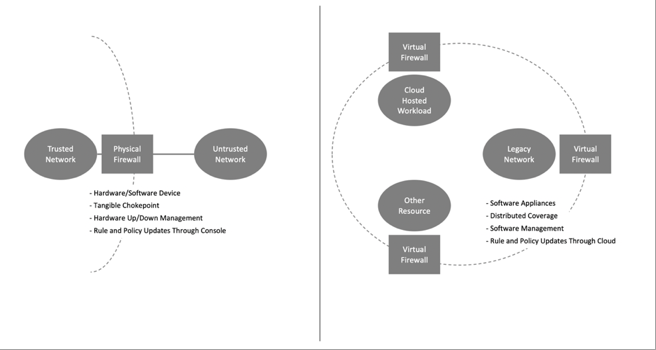

Cloud native security: A maturing and expanding arena

Along with the usual array of preventative controls that are deployed as part

of a cloud native platform, companies need to focus on detection and response

to breaches. It’s important to note that the usual toolsets that are put in

place will need to be supplemented by cloud native tools that can provide

targeted visibility into container-based workflows. Projects like Falco, which

can integrate with container workloads at a low level, are an important part

of this. Additionally, companies should make sure to properly use the

facilities that Kubernetes provides. For example, Kubernetes audit logging is

rarely enabled by default, but it’s an important control for any production

cluster. A key takeaway for container security deployments is the importance

of getting security controls in place before workloads are placed into

production. Ensuring that developers are making use of Kubernetes features

like Security Contexts to harden their deployments will make the deployment of

mandatory controls much easier. Also ensuring that a “least privilege” initial

approach is taken to network traffic in a cluster can help avoid the “hard

shell, soft inside” approach to security that allows attackers to easily

expand their access after an initial compromise has occurred.
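
As one way to check hardening before workloads reach production, the sketch below scans a pod spec (already parsed into a Python dict) for containers whose Security Context lacks a few common settings. The three fields are real container-level `securityContext` fields in Kubernetes, but treating exactly these values as the required baseline is an assumption for illustration, as is the helper name `find_unhardened`. For simplicity the sketch ignores pod-level settings that a container may inherit.

```python
# Assumed baseline for illustration; a real policy would be chosen per organization.
REQUIRED = {
    "runAsNonRoot": True,
    "allowPrivilegeEscalation": False,
    "readOnlyRootFilesystem": True,
}


def find_unhardened(pod_spec):
    """Return (container name, field) pairs that miss the assumed baseline."""
    findings = []
    for container in pod_spec.get("containers", []):
        context = container.get("securityContext") or {}
        for field, expected in REQUIRED.items():
            if context.get(field) is not expected:
                findings.append((container.get("name", "<unnamed>"), field))
    return findings
```

A check like this could run in a CI pipeline so that gaps surface during development rather than when mandatory controls are switched on in the cluster.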

Cloud computing in the real world: The challenges and opportunities of multicloud

In an ideal world, application workloads -- whatever their heritage -- should

be able to move seamlessly between, or be shared among, cloud service

providers (CSPs), alighting wherever the optimal combination of performance,

functionality, cost, security, compliance, availability, resilience, and so

on, is to be found -- while avoiding the dreaded 'vendor lock-in'. "Businesses

taking a multicloud approach can cherry-pick the solutions that best meet

their business needs as soon as they become available, rather than having to

wait for one vendor to catch up," John Abel, technical director, office of the

CTO, Google Cloud, told ZDNet. "Avoiding vendor lock in, increased agility,

more efficient costs and the promise of each provider's best solutions are all

too great to ignore." That's certainly the view taken by many respondents to

the survey underpinning the 2020 State of Multicloud report from application

resource management company Turbonomic. ... "Bottom-line, cultural change is a

prerequisite for managing the complexity of today's hybrid and multicloud

environments. Teams must operate faster, dynamically adapting to shifting

market trends to stay competitive."

An Architect's guide to APIs: REST, GraphQL, and gRPC

The benefit of taking an API-based approach to application architecture design

is that it allows a wide variety of physical client devices and application

types to interact with the given application. One API can be used not only for

PC-based computing but also for cellphones and IoT devices. Communication is

not limited to interactions between humans and applications. With the rise of

machine learning and artificial intelligence, service-to-service interaction

facilitated by APIs will emerge as the Internet's principal activity. APIs

bring a new dimension to architectural design. However, while network

communication and data structures have become more conventional over time,

there is still variety among API formats. There is no "one ring to rule them

all." Instead, there are many API formats, with the most popular being REST,

GraphQL, and gRPC. Thus a reasonable question to ask is, as an Enterprise

Architect, how do I pick the best API format to meet the need at hand? The

answer is that it's a matter of understanding the benefits and limitations of

the given format.

Unlock the Power of Omnichannel Retail at the Edge

The Edge exists wherever the digital world and physical world intersect, and data is securely collected, generated, and processed to create new value. According to Gartner, by 2025, 75 percent of data will be processed at the Edge. For retailers, Edge technology means real-time data collection, analytics and automated responses where they matter most: on the shop floor, be that physical or virtual. And for today’s retailers, it’s what happens when Edge computing is combined with Computer Vision and AI that is most powerful and exciting, as it creates the many opportunities of omnichannel shopping. With Computer Vision, retailers enter a world of powerful sensor-enabled cameras that can see much more than the human eye. Combined with Edge analytics and AI, Computer Vision can enable retailers to monitor, interpret, and act in real-time across all areas of the retail environment. This type of vision has obvious implications for security, but for retailers it also opens up huge possibilities in understanding shopping behavior and implementing rapid responses. For example, understanding how customers flow through the store, and at what times of the day, can allow the retailer to put more important items directly in their paths to be more visible.

Hacking Group Used Crypto Miners as Distraction Technique

The use of the monero miners helped the hacking group establish persistence

within targeted networks and enabled them to deploy other spy tools and

malware without raising suspicion. That's because cryptocurrency miners are

usually low-level security priorities for most organizations, according to

Microsoft. "Cryptocurrency miners are typically associated with cybercriminal

operations, not sophisticated nation-state actor activity," the Microsoft

report notes. "They are not the most sophisticated type of threats, which also

means that they are not among the most critical security issues that defenders

address with urgency. Recent campaigns from the nation-state actor Bismuth

take advantage of the low-priority alerts coin miners cause to try and fly

under the radar and establish persistence." The Microsoft report also notes:

"While this actor's operational goals remained the same - establish continuous

monitoring and espionage, exfiltrating useful information as it surfaced -

their deployment of coin miners in their recent campaigns provided another way

for the attackers to monetize compromised networks."

Cypress vs. Selenium: Compare test automation frameworks

Selenium suits applications that don't have many complex front-end components.

Selenium's support for multiple languages makes it a good choice as the test

automation framework for development projects that aren't in JavaScript.

Selenium is open source, has ample documentation and is well supported by many

other open source tools. Also, when a project calls for behavior-driven

development (BDD), organizations find Selenium fits the approach well, as many

libraries, like Cucumber or Capybara, make writing tests within BDD structured

and implementable. Cypress is a great tool to automate JavaScript application

testing. And that's a large group, as JavaScript is the language of choice for

many modern web applications. Cypress integrates well with the client side and

asynchronous design of these applications, as it natively ties into the web

browser. Thus, test scripts run much quicker and more reliably than they would

for the same application tested with Selenium for automation. Cypress might be

better suited for a testing team with programming experience, as JavaScript is

a complex single-threaded, non-blocking, asynchronous, concurrent language.

The Complexity of Product Management and Product Ownership

An issue for organisational leaders considering how to design for product flow

is that when someone says product ownership or product management we are

immediately uncertain which of many possible definitions the person is referring

to. This level of ambiguity is a constant struggle in the software world. Agile,

DevOps, and Digital are all now terms which are the subject of confusion and

passionate, never-ending debates. Product ownership/management has now joined

them. Kent Beck described a similar issue in software teams when everyone has

slightly different concepts in their minds when describing system components. He

called this the problem of metaphor and prescribed a shared metaphor as a key practice in

eXtreme Programming. We need to take this practice of System Metaphor to our

wider discussions as product delivery groups if we are going to resolve bigger

issues. To help consider some of the Metaphor surrounding the product owner

function, I highly recommend the blog by Roman Pichler (an author on product

management). He does a good job of creating metaphors for the key variations in

product management roles.

Quote for the day:

"Real generosity towards the future lies in giving all to the present." -- Camus